The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Feeding Pascal, Cont: 4th Gen Delta Color Compression

Now that we’ve seen GDDR5X in depth, let’s talk about the other half of the equation when it comes to feeding Pascal: delta color compression.

NVIDIA has utilized delta color compression for a number of years now. However, the technology only came into greater prominence in the previous Maxwell 2 generation, when NVIDIA disclosed delta color compression’s existence and offered a basic overview of how it worked. As a reminder, delta color compression is a per-buffer/per-frame compression method that breaks down a frame into tiles, and then looks at the differences between neighboring pixels – their deltas. By utilizing a large pattern library, NVIDIA is able to try different patterns to describe these deltas in as few bits as possible. This conserves bandwidth throughout the GPU, reducing not only DRAM bandwidth needs, but also L2 bandwidth needs and texture unit bandwidth needs (in the case of reading back a compressed render target).
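To make the idea of storing deltas rather than full pixel values concrete, here is a minimal Python sketch. It is a toy model and not NVIDIA’s hardware algorithm: it works on a single 8-bit channel, keeps the first pixel as a raw anchor, and stores every neighbor-to-neighbor delta at one shared bit width, ignoring any header bits a real format would need. The function names are hypothetical.

```python
def signed_bits(value: int) -> int:
    """Smallest two's-complement width (in bits) that can hold value."""
    if value >= 0:
        return value.bit_length() + 1
    return (-value - 1).bit_length() + 1


def delta_encode(pixels):
    """Split a run of pixel values into a raw anchor plus neighbor deltas."""
    anchor = pixels[0]
    deltas = [b - a for a, b in zip(pixels, pixels[1:])]
    return anchor, deltas


def delta_decode(anchor, deltas):
    """Rebuild the original pixel values from the anchor and deltas."""
    out = [anchor]
    for d in deltas:
        out.append(out[-1] + d)
    return out


def compressed_bits(pixels, raw_bits=8):
    """Bits needed for the anchor plus all deltas at one shared width."""
    _, deltas = delta_encode(pixels)
    width = max((signed_bits(d) for d in deltas), default=0)
    return raw_bits + width * len(deltas)


gradient = [100, 101, 102, 103, 104, 105, 106, 107]  # smooth ramp
noise = [0, 255, 0, 255]                             # worst case

print(compressed_bits(gradient), "bits vs", 8 * len(gradient), "raw")  # 22 bits vs 64 raw
print(compressed_bits(noise), "bits vs", 8 * len(noise), "raw")        # 35 bits vs 32 raw
```

The smooth gradient shrinks to roughly a third of its raw size, while the noisy run ends up larger than the original, which is why content that does not follow a pattern is left uncompressed.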

Since its inception NVIDIA has continued to tweak and push the technology for greater compression and to catch patterns they missed on prior generations, and Pascal in that respect is no different. With Pascal we get the 4th generation of the technology, and while there’s nothing radical here compared to the 3rd generation, it’s another element of Pascal where there has been an iterative improvement on the technology.

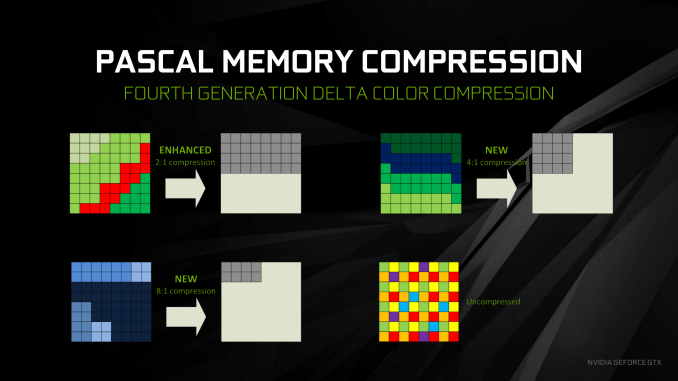

New to Pascal is a mix of improved compression modes and new compression modes. 2:1 compression mode, the only delta compression mode available up through the 3rd generation, has been enhanced with the addition of more patterns to cover more scenarios, meaning NVIDIA is able to 2:1 compress blocks more often.

Meanwhile, new to delta color compression with Pascal are 4:1 and 8:1 compression modes, joining the aforementioned 2:1 mode. Unlike 2:1 mode, the higher compression modes are a little less straightforward, as there’s a bit more involved than simply the pattern of the pixels. 4:1 compression is in essence a special case of 2:1 compression, where NVIDIA can achieve better compression when the deltas between pixels are very small, allowing those differences to be described in fewer bits. 8:1 is more radical still; rather than operating on individual pixels, it operates on multiple 2x2 blocks. Specifically, after NVIDIA’s constant color compressor does its job – finding 2x2 blocks of identical pixels and compressing them to a single sample – the 8:1 delta mode then applies 2:1 delta compression to the already compressed blocks, achieving the titular 8:1 effective compression ratio.
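The way the 8:1 figure falls out of the two stages can be sketched in a few lines of Python. This is only an illustration of the arithmetic described above, not NVIDIA’s implementation: the tile layout, the function names, and the assumption that the 2:1 delta stage always succeeds on the collapsed samples are all hypothetical.

```python
CONSTANT_COLOR_RATIO = 4  # four identical pixels stored as one sample
DELTA_RATIO = 2           # 2:1 delta compression applied to those samples


def collapse_constant_2x2(tile):
    """Reduce each uniform 2x2 block to one sample.

    tile is a list of equal-length rows with even dimensions.
    Returns the grid of samples, or None if any block is not uniform.
    """
    collapsed = []
    for y in range(0, len(tile), 2):
        row = []
        for x in range(0, len(tile[0]), 2):
            block = {tile[y][x], tile[y][x + 1],
                     tile[y + 1][x], tile[y + 1][x + 1]}
            if len(block) != 1:
                return None  # block has differing pixels
            row.append(tile[y][x])
        collapsed.append(row)
    return collapsed


def effective_ratio(tile):
    """Headline ratio if the tile qualifies for the two-stage path.

    Assumes the 2:1 delta stage succeeds on the collapsed samples.
    """
    if collapse_constant_2x2(tile) is None:
        return None
    return CONSTANT_COLOR_RATIO * DELTA_RATIO


flat_blocks = [[5, 5, 7, 7],
               [5, 5, 7, 7]]
print(collapse_constant_2x2(flat_blocks))  # [[5, 7]]
print(effective_ratio(flat_blocks))        # 8
```

A tile with even one non-uniform 2x2 block fails the first stage in this model, so it would have to fall back to one of the lower compression modes.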

Overall, delta color compression represents one of the interesting tradeoffs NVIDIA has to make in the GPU design process. The number of patterns is essentially a function of die space, so NVIDIA could always add more patterns, but would the memory bandwidth improvements be worth the real cost of die space and the power cost of those transistors? This is especially relevant since NVIDIA has already implemented the most common patterns, which means new patterns likely won’t occur as frequently. NVIDIA of course pushed ahead here, thanks in part to the die and power savings of 16nm FinFET, but it gives us an idea of where they might (or might not) go in future generations in order to balance the costs and benefits of the technology, with less of an emphasis on adding patterns and more on making novel use of the existing ones.

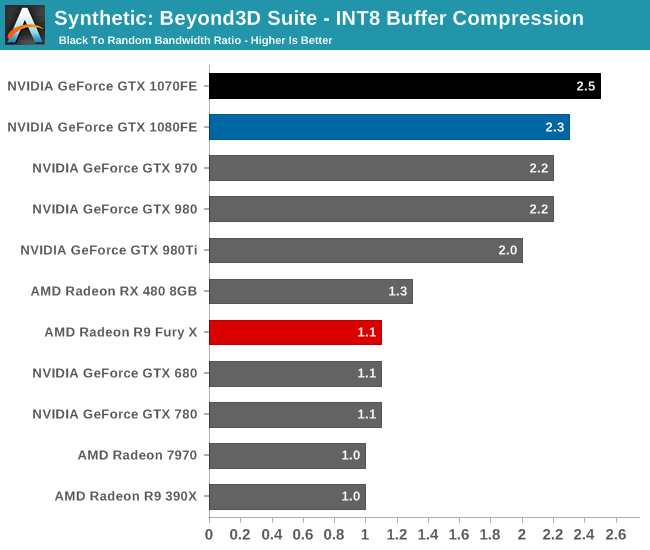

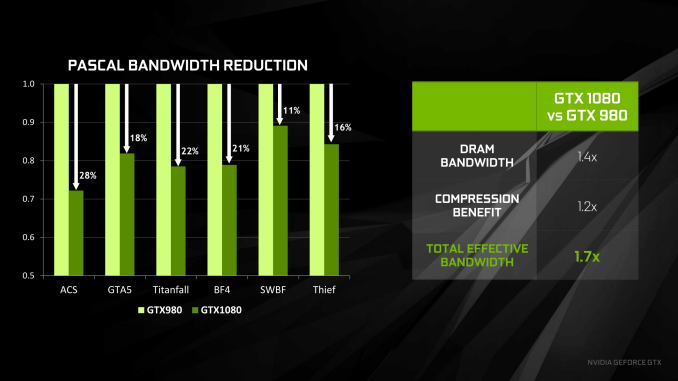

To put all of this in numbers, NVIDIA pegs the effective increase in memory bandwidth from delta color compression alone at 20%. The difference is of course per-game, as the effectiveness of the tech depends on how well a game sticks to patterns (and if you ever create a game with random noise, you may drive an engineer or two insane), but 20% is a baseline number for the average. Meanwhile for anyone keeping track of the numbers over Maxwell 2, this is a bit less than the gains with NVIDIA’s last generation architecture, where the company claimed the average gain was 25%.

The net impact then, as NVIDIA likes to promote it, is a 70% increase in the total effective memory bandwidth. This comes from the earlier 40% (technically 42.9%) actual memory bandwidth gains in the move from 7Gbps GDDR5 to 10Gbps GDDR5X, coupled with the 20% effective memory bandwidth increase from delta compression. Keep those values in mind, as we’re going to get back to them in a little bit.
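For anyone who wants to check the math, the 70% figure can be reproduced with a short Python sketch. It assumes the two gains compound multiplicatively, which is how the quoted numbers appear to combine; the function name is hypothetical.

```python
def effective_bandwidth_gain(old_gbps, new_gbps, compression_gain):
    """Combined gain from faster memory and better compression.

    Assumes the raw and compression gains multiply together.
    """
    raw_gain = new_gbps / old_gbps
    return raw_gain * (1 + compression_gain)


# 7Gbps GDDR5 -> 10Gbps GDDR5X, plus 20% from delta color compression
exact = effective_bandwidth_gain(7, 10, 0.20)
rounded = 1.40 * 1.20  # using the rounded 40% figure instead of 42.9%

print(f"exact:   {exact:.3f}x")    # exact:   1.714x
print(f"rounded: {rounded:.2f}x")  # rounded: 1.68x
```

Either way the result lands at roughly 1.7x, which matches the 70% increase that NVIDIA promotes.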

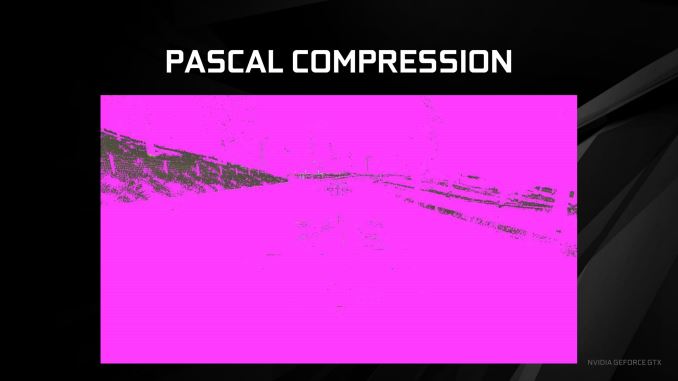

Meanwhile from a graphical perspective, to showcase the impact of delta color compression, NVIDIA sent over a pair of screenshots for Project Cars, colored to show which pixels had been compressed. With compressed pixels shown in pink, even Maxwell can compress most of the frame, really only struggling with finer details such as the trees, the grass, and edges of buildings. Pascal, by comparison, gets most of this. Trees and buildings are all but eliminated as visually distinct uncompressed items, leaving only patches of grass and indistinct fringe elements. It should be noted that these screenshots have most likely been picked because they’re especially impressive – seeing as how not all games compress this well – but it’s nonetheless a potent example of how much of a frame Pascal can compress.

Finally, while we’re on the subject of compression, I want to talk a bit about memory bandwidth relative to other aspects of the GPU. While Pascal (in the form of GTX 1080) offers 43% more raw memory bandwidth than GTX 980 thanks to GDDR5X, it’s important to note just how quickly this memory bandwidth is consumed. Thanks to GTX 1080’s high clockspeeds, the raw throughput of the ROPs is coincidentally also 43% higher. Or we have the case of the CUDA cores, whose total throughput is 78% higher, shooting well past the raw memory bandwidth gains.

While it’s not a precise metric, the amount of bandwidth available per FLOP has continued to drop over the years with NVIDIA’s video cards. GTX 580 offered just short of 1 bit of memory bandwidth per FLOP, and by GTX 980 this was down to 0.36 bits/FLOP. GTX 1080 is lower still, now down to 0.29 bits/FLOP thanks to the increase in both CUDA core count and frequency as afforded by the 16nm process.

NVIDIA Memory Bandwidth per FLOP (In Bits)

| GPU | Bandwidth/FLOP | Total FLOPs | Total Bandwidth |
|---|---|---|---|
| GTX 1080 | 0.29 bits | 8.87 TFLOPs | 320GB/sec |
| GTX 980 | 0.36 bits | 4.98 TFLOPs | 224GB/sec |
| GTX 680 | 0.47 bits | 3.25 TFLOPs | 192GB/sec |
| GTX 580 | 0.97 bits | 1.58 TFLOPs | 192GB/sec |
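The bits-per-FLOP column follows directly from the other two columns, and a short Python sketch shows the conversion. The figures are taken from the table above; the dictionary and function names are hypothetical.

```python
# (TFLOPs, GB/sec) for each card, taken from the table above
CARDS = {
    "GTX 1080": (8.87, 320),
    "GTX 980": (4.98, 224),
    "GTX 680": (3.25, 192),
    "GTX 580": (1.58, 192),
}


def bits_per_flop(tflops, gb_per_sec):
    """Memory bandwidth in bits divided by floating point throughput."""
    gbits_per_sec = gb_per_sec * 8   # bytes -> bits
    gflops = tflops * 1000           # TFLOPs -> GFLOPs
    return gbits_per_sec / gflops


for name, (tflops, bandwidth) in CARDS.items():
    print(f"{name}: {bits_per_flop(tflops, bandwidth):.2f} bits/FLOP")

# Generational gains from GTX 980 to GTX 1080
bandwidth_gain = CARDS["GTX 1080"][1] / CARDS["GTX 980"][1] - 1
flops_gain = CARDS["GTX 1080"][0] / CARDS["GTX 980"][0] - 1
print(f"bandwidth: +{bandwidth_gain:.0%}, FLOPs: +{flops_gain:.0%}")  # bandwidth: +43%, FLOPs: +78%
```

The same inputs also reproduce the 43% raw bandwidth gain and the 78% CUDA core throughput gain quoted earlier for GTX 1080 over GTX 980.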

The good news here is that at least for graphical tasks, the CUDA cores generally aren’t the biggest consumer of DRAM bandwidth. That would fall to the ROPs, which are packed alongside the L2 cache and memory controllers for this very reason. In that case GTX 1080’s bandwidth gains keep up with the ROP performance increase, but only by just enough.

The overall memory bandwidth needs of GP104 still outpace the memory bandwidth gains from GDDR5X, and this is why features such as delta color compression are so important to GP104’s performance. GP104 is perpetually memory bandwidth starved – adding more memory bandwidth will improve performance, as we’ll see in our overclocking results – and that means that NVIDIA will continue to try to conserve memory bandwidth usage as much as possible through compression and other means. How long they can fight this battle remains to be seen – they already encounter diminishing returns in some cases – but in the meantime this allows NVIDIA to utilize smaller memory buses, keeping down the die size and power costs of their GPUs, making PCB costs cheaper, and of course boosting profit margins at the same time.

200 Comments


Ranger1065 - Thursday, July 21, 2016 - link

Your unwavering support for Anandtech is impressive. I too have a job that keeps me busy, yet oddly enough I find the time to browse (I prefer that word to "trawl") a number of sites.

I find it helps to form objective opinions.

I don't believe in early adoption, but I do believe in getting the job done on time, however if you are comfortable with a 2 month delay, so be it :)

Interesting to note that architectural deep dives concern your art and media departments so closely in their purchasing decisions. Who would have guessed?

It's true (God knows it's been stated here often enough) that Anandtech goes into detail like no other, I don't dispute that.

But is it worth the wait? A significant number seem to think not.

Allow me to leave one last issue for you to ponder (assuming you have the time in your extremely busy schedule).

Is it good for Anandtech?

catavalon21 - Thursday, July 21, 2016 - link

Impatient as I was at the first for benchmarks, yes, I'm a numbers junkie, since it's evident precious few of us will have had a chance to buy one of these cards yet (or the 480), I doubt the delay has caused anyone to buy the wrong card. Can't speak for the smart phone review folks are complaining about being absent, but as it turns out, what I'm initially looking for is usually done early on in Bench. The rest of this, yeah, it can wait.

mkaibear - Saturday, July 23, 2016 - link

Job, house, kids, church... more than enough to keep me sufficiently busy that I don't have the time to browse more than a few sites. I pick them quite carefully. Given the lifespan of a typical system is >5 years I think that a 2 month delay is perfectly reasonable. It can often take that long to get purchasing signoff once I've decided what they need to purchase anyway (one of the many reasons that architectural deep dives are useful - so I can explain why the purchase is worthwhile). Do you actually spend someone else's money at any point or are you just having to justify it to yourself?

Whether or not it's worth the wait to you is one thing - but it's clearly worth the wait to both Anandtech and to Purch.

razvan.uruc@gmail.com - Thursday, July 21, 2016 - link

Excellent article, well deserved the wait!

giggs - Thursday, July 21, 2016 - link

While this is a very thorough and well written review, it makes me wonder about sponsored content and product placement. The PG279Q is the only monitor mentionned, making sure the brand appears, and nothing about competing products. It felt unnecessary.

I hope it's just a coincidence, but considering there has been quite a lot of coverage about Asus in the last few months, I'm starting to doubt some of the stuff I read here.

Ryan Smith - Thursday, July 21, 2016 - link

"The PG279Q is the only monitor mentionned, making sure the brand appears, and nothing about competing products."

There's no product placement or the like (and if there was, it would be disclosed). I just wanted to name a popular 1440p G-Sync monitor to give some real-world connection to the results. We've had cards for a bit that can drive 1440p monitors at around 60fps, but GTX 1080 is really the first card that is going to make good use of higher refresh rate monitors.

giggs - Thursday, July 21, 2016 - link

Fair enough, thank you for responding promptly. Keep up the good work!

arh2o - Thursday, July 21, 2016 - link

This is really the gold standard of reviews. More in-depth than any site on the internet. Great job Ryan, keep up the good work.

Ranger1065 - Thursday, July 21, 2016 - link

This is a quality article.

timchen - Thursday, July 21, 2016 - link

Great article. It is pleasant to read more about technology instead of testing results. Some questions though:

1. higher frequency: I am kind of skeptical that the overall higher frequency is mostly enabled by FinFET. Maybe it is the case, but for example when Intel moved to FinFET we did not see such improvement. RX480 is not showing that either. It seems pretty evident the situation is different from 8800GTX where we first get frequency doubling/tripling only in the shader domain though. (Wow DX10 is 10 years ago... and computation throughput is improved by 20x)

2. The fastsync comparison graph looks pretty suspicious. How can Vsync have such high latency? The most latency I can see in a double buffer scenario with vsync is that the screen refresh just happens a tiny bit earlier than the completion of a buffer. That will give a delay of two frame time which is like 33 ms (Remember we are talking about a case where GPU fps>60). This is unless, of course, if they are testing vsync at 20hz or something.