The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Fast Sync & SLI Updates: Less Latency, Fewer GPUs

Since Kepler and the GTX 680 in 2012, one of NVIDIA’s side projects in GPU development has been cooking up ways to reduce input lag. Under the watchful eye of NVIDIA’s Distinguished Engineer (and all-around frontman) Tom Petersen, the company has introduced a couple of different technologies over the years to deal with the problem. Kepler introduced adaptive v-sync – the ability to dynamically disable v-sync when the frame rate is below the refresh rate – and of course in 2013 the company introduced their G-Sync variable refresh rate technology.

Since then, Tom’s team has been working on yet another way to bend the rules of v-sync. Rolling out with Pascal is a new v-sync mode that NVIDIA is calling Fast Sync, and it is designed to offer yet another way to reduce input lag while maintaining v-sync.

It’s interesting to note that Fast Sync isn’t a wholly new idea, but rather a modern and more consistent take on an old idea: triple buffering. While in modern times triple buffering is just a 3-deep buffer that is run through as a sequential frame queue, in the days of yore some games and video cards handled triple buffering a bit differently. Rather than using the 3 buffers as a sequential queue, they would instead always overwrite the oldest buffer. This small change had a potentially significant impact on input lag, and if you’re familiar with old school triple buffering, then you know where this is going.

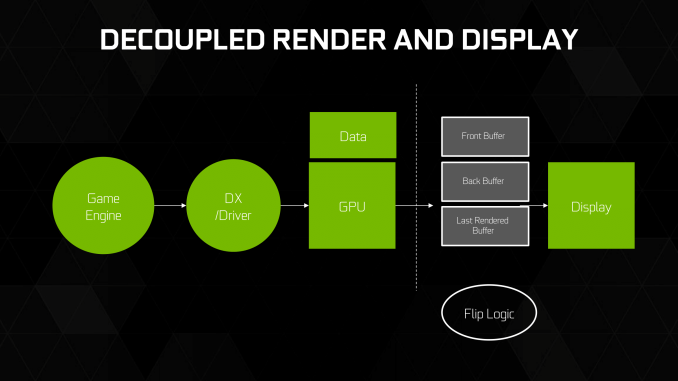

With Fast Sync, NVIDIA has implemented old school triple buffering at the driver level, once again making it usable with modern cards. The purpose of implementing Fast Sync is to reduce input lag in modern games that can generate a frame rate higher than the refresh rate, with NVIDIA specifically targeting CS:GO and other graphically simple twitch games.

But how does Fast Sync actually reduce input lag? To go into this a bit further, we have an excellent article on old school triple buffering from 2009 that I’ve republished below. Even 7 years later, other than the name, the technical details all still accurately describe NVIDIA’s Fast Sync implementation.

What are Double Buffering, V-sync, and Triple Buffering?

When a computer needs to display something on a monitor, it draws a picture of what the screen is supposed to look like and sends this picture (which we will call a buffer) out to the monitor. In the old days there was only one buffer and it was continually being both drawn to and sent to the monitor. There are some advantages to this approach, but there are also very large drawbacks. Most notably, when objects on the display were updated, they would often flicker.

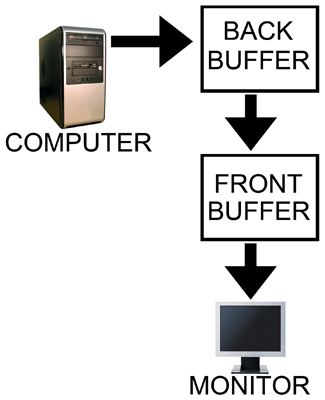

To combat the problems caused by reading from a buffer while drawing to it, double buffering, at a minimum, is employed. The idea behind double buffering is that the computer only draws to one buffer (called the "back" buffer) and sends the other buffer (called the "front" buffer) to the screen. After the computer finishes drawing the back buffer, the program doing the drawing does something called a buffer "swap." This swap doesn't move anything; it only changes the names of the two buffers, so the front buffer becomes the back buffer and the back buffer becomes the front buffer.

After a buffer swap, the software can start drawing to the new back buffer and the computer sends the new front buffer to the monitor until the next buffer swap happens. And all is well. Well, almost all anyway.
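As a minimal sketch of the swap described above (the `DoubleBuffer` class and its field names are hypothetical, chosen only for illustration), the key point is that swapping exchanges references rather than copying pixel data:

```python
class DoubleBuffer:
    """Two buffers; a swap exchanges their roles without copying any pixels."""

    def __init__(self, size):
        self.front = bytearray(size)  # currently being sent to the monitor
        self.back = bytearray(size)   # currently being drawn to

    def swap(self):
        # Only the names change: the old back buffer becomes the front
        # buffer and the old front buffer becomes the new back buffer.
        self.front, self.back = self.back, self.front


buffers = DoubleBuffer(4)
front_before = buffers.front
buffers.back[:] = b"\x01\x02\x03\x04"  # "draw" a frame into the back buffer
buffers.swap()                         # the finished frame is now the front
```

After the swap, the object that used to be the front buffer is reused as the back buffer, which is why the operation costs almost nothing regardless of resolution.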

In this form of double buffering, a swap can happen anytime. That means that while the computer is sending data to the monitor, the swap can occur. When this happens, the rest of the screen is drawn according to what the new front buffer contains. If the new front buffer is different enough from the old front buffer, a visual artifact known as "tearing" can be seen. This type of problem can be seen often in high framerate FPS games when whipping around a corner as fast as possible. Because of the quick motion, every frame is very different, so when a swap happens during drawing the discrepancy is large and can be distracting.

The most common approach to combat tearing is to wait to swap buffers until the monitor is ready for another image. The monitor is ready after it has fully drawn what was sent to it and the next vertical refresh cycle is about to start. Synchronizing buffer swaps with the vertical refresh is called V-sync.

While enabling V-sync does fix tearing, it also sets the internal framerate of the game to, at most, the refresh rate of the monitor (typically 60Hz for most LCD panels). This can hurt performance even if the game doesn't run at 60 frames per second as there will still be artificial delays added to effect synchronization. Performance can be cut nearly in half in cases where every frame takes just a little longer than 16.67 ms (1/60th of a second). In such a case, frame rate would drop to 30 FPS despite the fact that the game should run at just under 60 FPS. The elimination of tearing and consistency of framerate, however, do contribute to an added smoothness that double buffering without V-sync just can't deliver.
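The halving effect can be worked through with a small calculation. This is a simplified model, not a description of any particular driver: it assumes a constant render time and that a frame which misses a refresh simply waits for the next one. The function name is made up for illustration.

```python
import math


def vsync_double_buffered_fps(render_ms, refresh_hz=60.0):
    """Effective frame rate with double buffering and V-sync (simplified model)."""
    refresh_ms = 1000.0 / refresh_hz
    # A frame that misses a refresh waits for the following one, so the time
    # per displayed frame rounds up to a whole number of refresh intervals.
    intervals = max(1, math.ceil(render_ms / refresh_ms))
    return refresh_hz / intervals


slow = vsync_double_buffered_fps(17.0)  # just over 16.67 ms per frame
fast = vsync_double_buffered_fps(10.0)  # comfortably under 16.67 ms
```

Under these assumptions a 17 ms frame occupies two refresh intervals on a 60Hz display, so `slow` is 30.0 even though the game could otherwise run at nearly 59 FPS, while `fast` stays capped at 60.0.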

Input lag also becomes more of an issue with V-sync enabled. This is because the artificial delay introduced increases the difference between when something actually happened (when the frame was drawn) and when it gets displayed on screen. Input lag always exists (it is impossible to instantaneously draw what is currently happening to the screen), but the trick is to minimize it.

Our options with double buffering are a choice between possible visual problems like tearing without V-sync, and an artificial delay that can both hurt performance and increase input lag with V-sync enabled. But not to worry, there is an option that combines the best of both worlds with no sacrifice in quality or actual performance. That option is triple buffering.

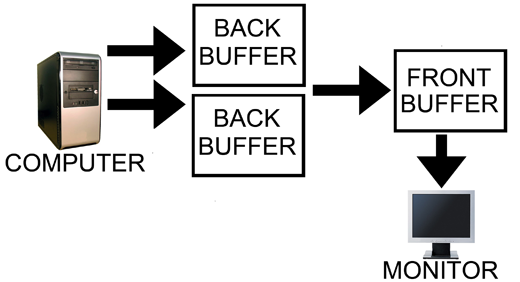

The name gives a lot away: triple buffering uses three buffers instead of two. This additional buffer gives the computer enough space to keep a buffer locked while it is being sent to the monitor (to avoid tearing) while also not preventing the software from drawing as fast as it possibly can (even with one locked buffer there are still two that the software can bounce back and forth between). The software draws back and forth between the two back buffers and (at best) once every refresh the front buffer is swapped for the back buffer containing the most recently completed fully rendered frame. This does take up some extra space in memory on the graphics card (about 15 to 25MB), but with modern graphics cards shipping with at least 512MB on board this extra space is no longer a real issue.

In other words, with triple buffering we get almost the exact same high actual performance and similar decreased input lag of a V-sync disabled setup while achieving the visual quality and smoothness of leaving V-sync enabled.

Note however that the software is still drawing the entire time behind the scenes on the two back buffers when triple buffering. This means that when the front buffer swap happens, unlike with double buffering and V-sync, we don't have artificial delay. And unlike with double buffering without V-sync, once we start sending a fully rendered frame to the monitor, we don't switch to another frame in the middle.
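To make the "most recently completed frame" rule concrete, here is a rough sketch that lists which frame would be picked up at each refresh. It assumes a constant render time and rendering that starts at time zero and never stalls; the function name and the frame numbering are illustrative only.

```python
def newest_frame_per_refresh(render_ms, refresh_hz=60.0, refreshes=4):
    """Index of the newest fully rendered frame at each refresh.

    Frame k (counting from 0) is assumed to finish at (k + 1) * render_ms.
    Returns None for a refresh that occurs before any frame is complete.
    """
    refresh_ms = 1000.0 / refresh_hz
    shown = []
    for r in range(1, refreshes + 1):
        t = r * refresh_ms
        completed = int(t // render_ms)  # frames finished by time t
        shown.append(completed - 1 if completed else None)
    return shown


shown = newest_frame_per_refresh(4.0)  # 4 ms per frame, i.e. 250 FPS
```

With 4 ms frames on a 60Hz display, `shown` is `[3, 7, 11, 15]`: each refresh displays a whole frame, and the frames in between are rendered but never shown.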

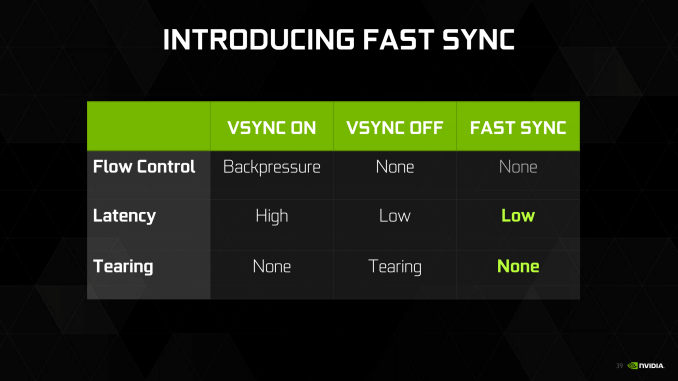

The end result of Fast Sync is that in the right cases we can have our cake and eat it too when it comes to v-sync and input lag. By constantly rendering frames as if v-sync was off, and then just grabbing the most recent frame and discarding the rest, Fast Sync means that v-sync can still be used to prevent tearing without the traditionally high input lag penalty it causes.

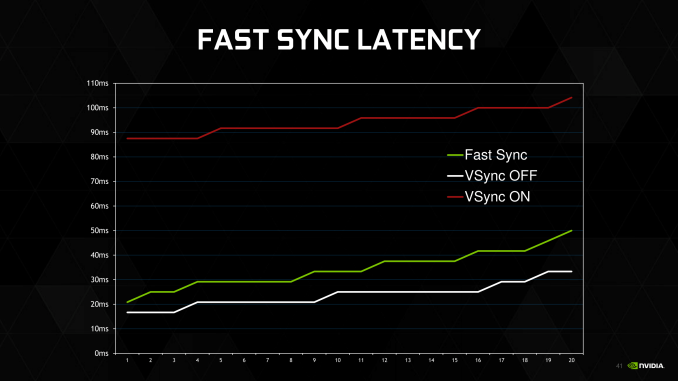

The actual input lag benefits will in turn depend on several factors, including frame rates and display refresh rates. The minimum input lag is still the amount of time it takes to draw a frame – just like with v-sync off – and then you have to wait for the next refresh interval to actually display the frame. Thanks to the law of averages, the higher the frame rate (the lower the frame rendering time), the better the odds that a frame is ready right before a screen refresh, reducing the average amount of input lag. This is especially true on 60Hz displays, where there’s a full 16.7ms between frame draws with a traditional double buffered setup (or sequential frame queue).
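The "law of averages" argument can be checked with a rough estimate. The sketch below is a toy model, not NVIDIA's measurement method: it assumes a constant render time, treats a frame's start time as the moment input was sampled, and ignores scan-out and display processing. The function name is hypothetical.

```python
def average_frame_age_ms(render_ms, refresh_hz=60.0, refreshes=1000):
    """Average age, at each refresh, of the newest completed frame (toy model)."""
    refresh_ms = 1000.0 / refresh_hz
    total = 0.0
    counted = 0
    for r in range(1, refreshes + 1):
        t = r * refresh_ms
        completed = int(t // render_ms)  # frames finished by time t
        if completed == 0:
            continue  # nothing to display yet
        newest_start = (completed - 1) * render_ms
        total += t - newest_start  # render time plus the wait for the refresh
        counted += 1
    return total / counted


age_at_333_fps = average_frame_age_ms(3.0)
age_at_143_fps = average_frame_age_ms(7.0)
```

In this model the age of the displayed frame always falls between one and two render times, so shortening the render time directly lowers both the floor and the average.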

NVIDIA’s own example numbers are taken from monitoring CS:GO with a high speed camera. I can’t confirm these numbers – and as with most marketing efforts it’s likely a best-case scenario – but their chart isn’t unreasonable, especially if their v-sync example uses a 3-deep buffer. Input lag will be higher than without v-sync, but lower (potentially much so) than with v-sync on. Overall the greatest benefits are with a very high framerate, which is why NVIDIA is specifically targeting games like CS:GO, as a frame rate multiple times higher than the refresh rate will produce the best results.

With all of the above said, I should note that Fast Sync is purely about input lag and doesn’t address smoothness. In fact it may make things a little less smooth because it’s essentially dropping frames, and the amount of simulation time between frames can vary. But at high framerates this shouldn’t be an issue. Meanwhile Fast Sync means losing all of the power saving benefits of v-sync; rather than the GPU getting a chance to rest and clock down between frames, it’s now rendering at full speed the entire time as if v-sync was off.

Finally, it’s probably useful to clarify how Fast Sync fits in with NVIDIA’s other input lag reduction technologies. Fast Sync doesn’t replace either Adaptive V-Sync or G-Sync, but rather complements them.

- Adaptive V-Sync: reducing input lag when framerates are below the refresh rate by selectively disabling v-sync

- G-Sync: reducing input lag by refreshing the screen when a frame is ready, up to the display’s maximum refresh rate

- Fast Sync: reducing input lag by not stalling the GPU when the framerate hits the display’s refresh rate

Fast Sync specifically deals with the case where frame rates exceed the display’s refresh rate. If the frame rate is below the refresh rate, then Fast Sync does nothing since it takes more than a refresh interval to render a single frame to begin with. This is instead where Adaptive V-Sync would come in, if desired.

Meanwhile when coupled with G-Sync, Fast Sync again only matters when the frame rate exceeds the display’s maximum refresh rate. For most G-Sync monitors this is 120-144Hz. Previously the options with G-Sync above the max refresh rate were to tear (no v-sync) or to stall the GPU (v-sync), so this provides a tear-free lower input lag option for G-Sync as well.
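The division of labor between the three technologies can be summarized as a small decision helper. This is only a restatement of the text above, not driver logic; the function name is made up, and treating a frame rate exactly equal to the refresh rate as "not exceeding" it is an assumption.

```python
def relevant_technology(fps, max_refresh_hz, has_gsync=False):
    """Which input lag technology is relevant for a given frame rate (sketch)."""
    if fps > max_refresh_hz:
        # Rendering faster than the display can refresh: Fast Sync avoids
        # both tearing and stalling the GPU.
        return "Fast Sync"
    if has_gsync:
        # Within the variable refresh range the display follows the GPU.
        return "G-Sync"
    # Fixed refresh display running at or below the refresh rate (assumption
    # for the equal case): Adaptive V-Sync is the relevant option, if desired.
    return "Adaptive V-Sync"


csgo_on_60hz = relevant_technology(300, 60)
heavy_game_on_gsync = relevant_technology(90, 144, has_gsync=True)
```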

200 Comments


Ranger1065 - Thursday, July 21, 2016 - link

Your unwavering support for Anandtech is impressive.

I too have a job that keeps me busy, yet oddly enough I find the time to browse (I prefer that word to "trawl") a number of sites.

I find it helps to form objective opinions.

I don't believe in early adoption, but I do believe in getting the job done on time, however if you are comfortable with a 2 month delay, so be it :)

Interesting to note that architectural deep dives concern your art and media departments so closely in their purchasing decisions. Who would have guessed?

It's true (God knows it's been stated here often enough) that Anandtech goes into detail like no other, I don't dispute that.

But is it worth the wait? A significant number seem to think not.

Allow me to leave one last issue for you to ponder (assuming you have the time in your extremely busy schedule).

Is it good for Anandtech?

catavalon21 - Thursday, July 21, 2016 - link

Impatient as I was at the first for benchmarks, yes, I'm a numbers junkie, since it's evident precious few of us will have had a chance to buy one of these cards yet (or the 480), I doubt the delay has caused anyone to buy the wrong card. Can't speak for the smart phone review folks are complaining about being absent, but as it turns out, what I'm initially looking for is usually done early on in Bench. The rest of this, yeah, it can wait.

mkaibear - Saturday, July 23, 2016 - link

Job, house, kids, church... more than enough to keep me sufficiently busy that I don't have the time to browse more than a few sites. I pick them quite carefully.

Given the lifespan of a typical system is >5 years I think that a 2 month delay is perfectly reasonable. It can often take that long to get purchasing signoff once I've decided what they need to purchase anyway (one of the many reasons that architectural deep dives are useful - so I can explain why the purchase is worthwhile). Do you actually spend someone else's money at any point or are you just having to justify it to yourself?

Whether or not it's worth the wait to you is one thing - but it's clearly worth the wait to both Anandtech and to Purch.

razvan.uruc@gmail.com - Thursday, July 21, 2016 - link

Excellent article, well deserved the wait!

giggs - Thursday, July 21, 2016 - link

While this is a very thorough and well written review, it makes me wonder about sponsored content and product placement.

The PG279Q is the only monitor mentionned, making sure the brand appears, and nothing about competing products. It felt unnecessary.

I hope it's just a coincidence, but considering there has been quite a lot of coverage about Asus in the last few months, I'm starting to doubt some of the stuff I read here.

Ryan Smith - Thursday, July 21, 2016 - link

"The PG279Q is the only monitor mentionned, making sure the brand appears, and nothing about competing products."

There's no product placement or the like (and if there was, it would be disclosed). I just wanted to name a popular 1440p G-Sync monitor to give some real-world connection to the results. We've had cards for a bit that can drive 1440p monitors at around 60fps, but GTX 1080 is really the first card that is going to make good use of higher refresh rate monitors.

giggs - Thursday, July 21, 2016 - link

Fair enough, thank you for responding promptly. Keep up the good work!

arh2o - Thursday, July 21, 2016 - link

This is really the gold standard of reviews. More in-depth than any site on the internet. Great job Ryan, keep up the good work.

Ranger1065 - Thursday, July 21, 2016 - link

This is a quality article.

timchen - Thursday, July 21, 2016 - link

Great article. It is pleasant to read more about technology instead of testing results. Some questions though:

1. higher frequency: I am kind of skeptical that the overall higher frequency is mostly enabled by FinFET. Maybe it is the case, but for example when Intel moved to FinFET we did not see such improvement. RX480 is not showing that either. It seems pretty evident the situation is different from 8800GTX where we first get frequency doubling/tripling only in the shader domain though. (Wow DX10 is 10 years ago... and computation throughput is improved by 20x)

2. The fastsync comparison graph looks pretty suspicious. How can Vsync have such high latency? The most latency I can see in a double buffer scenario with vsync is that the screen refresh just happens a tiny bit earlier than the completion of a buffer. That will give a delay of two frame time which is like 33 ms (Remember we are talking about a case where GPU fps>60). This is unless, of course, if they are testing vsync at 20hz or something.