The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Pascal’s Architecture: What Follows Maxwell

With the launch of a new generation of GPUs we’ll start things off where we always do: the architecture.

Discrete GPUs occupy an interesting space when it comes to the relationship between architecture and manufacturing processes. Whereas CPUs have architecture and manufacturing process decoupled – leading to Intel’s aptly named (former) tick-tock design methodology – GPUs have aligned architectures with manufacturing processes, with a new architecture premiering alongside a new process. Or rather, GPUs traditionally did. Maxwell threw a necessary spanner into all of this, and in its own way Pascal follows this break from tradition.

As the follow-up to their Kepler architecture, with Maxwell NVIDIA introduced a significantly altered architecture, one that broke many of the assumptions Kepler had made and in the process vaulted NVIDIA far forward on energy efficiency. What made Maxwell especially important from a development perspective is that it came not on a new manufacturing process, but rather on the same 28nm process used for Kepler two years earlier, and this is something NVIDIA had never done before. With the 20nm planar process proving unsuitable for GPUs and only barely suitable for SoCs – the leakage from planar transistors this small was just too high – NVIDIA had to go forward with 28nm for another two years. It would come down to their architecture team to make the best of the situation and come up with a way to bring a generational increase in performance without the traditional process node shrink.

Now in 2016 we finally have new manufacturing nodes with the 14nm/16nm FinFET processes, giving GPU manufacturers a long-awaited (and much needed) opportunity to bring down power consumption and reduce chip size through improved manufacturing technology. The fact that it has taken an extra two years to get here, and what NVIDIA did in the interim with Maxwell, has opened up a lot of questions about what would follow for NVIDIA. The GPU development process is not so binary or straightforward that NVIDIA designed Maxwell solely because they were going to be stuck on the 28nm process – NVIDIA would have done Maxwell either way – but it certainly was good timing to have such a major architectural update fall when it did.

So how does NVIDIA follow up on Maxwell then? The answer comes in Pascal, NVIDIA’s first architecture for the FinFET generation. Designed to be built on TSMC’s 16nm process, Pascal is the latest and the greatest, and like every architecture before it, is intended to further push the envelope on GPU performance, and ultimately push the envelope on the true bottleneck for GPU performance: energy efficiency.

HPC vs. Consumer: Divergence

Pascal is an architecture that I’m not sure has any real parallel on a historical basis. And a big part of that is because to different groups within NVIDIA, Pascal means different things and brings different things, despite the shared architecture. On the one side is the consumer market, which is looking for a faster still successor to what Maxwell delivered in 2014 and 2015. Meanwhile on the high performance compute side, Pascal is the long-awaited update to the Kepler architecture (Maxwell never had an HPC part), combining the lessons of Maxwell with the specific needs of the HPC market.

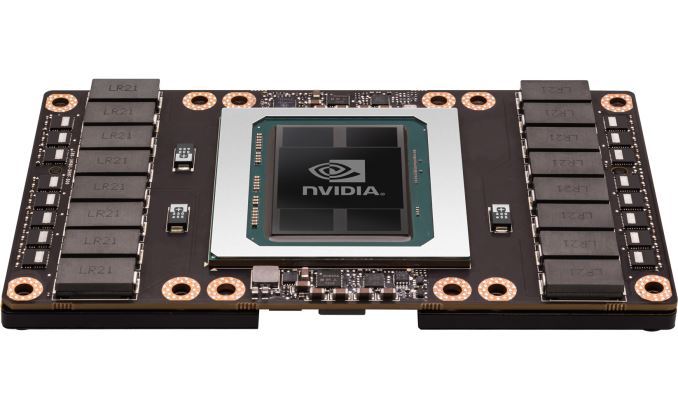

The result is that there’s an interesting divergence going on between the HPC side and its GP100 GPU, and the consumer side and the GP104 GPU underlying GTX 1080. Even as far back as Fermi there was a distinct line separating HPC-class GPUs (GF100) from consumer/general compute GPUs (GF104), but with Pascal this divergence is wider than ever before. Ultimately the HPC market and GP100 are beyond the scope of this article and I’ll pick them up in detail another time, but because NVIDIA announced GP100 before GP104, it does require a bit of addressing to help sort out what’s going on and what NVIDIA’s design goals were with GP104.

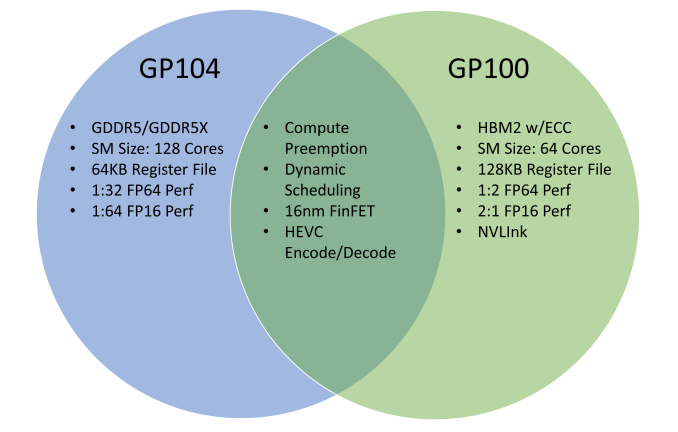

Pascal as an overarching architecture contains a number of new features, however not all of those features are present in all SKUs. If you were to draw a Venn diagram of Pascal, what you would find is that the largest collection of features is found in GP100, whereas GP104, like the Maxwell architecture before it, is stripped down for speed and efficiency. As a result, while GP100 has some notable feature/design elements for HPC – things such as faster FP64 & FP16 performance, ECC, and significantly greater amounts of shared memory and register file capacity per CUDA core – these elements aren’t present in GP104 (and presumably, future Pascal consumer-focused GPUs).

Ultimately what we’re seeing in this divergence is a greater level of customization between NVIDIA’s HPC and consumer markets. The HPC side of NVIDIA is finally growing up, and it’s growing fast. The long term plan at NVIDIA has been to push GPU technology beyond consumer and professional graphics, and while it has taken years longer than NVIDIA originally wanted, thanks in big part to success in the deep learning market, NVIDIA is finally achieving their goals.

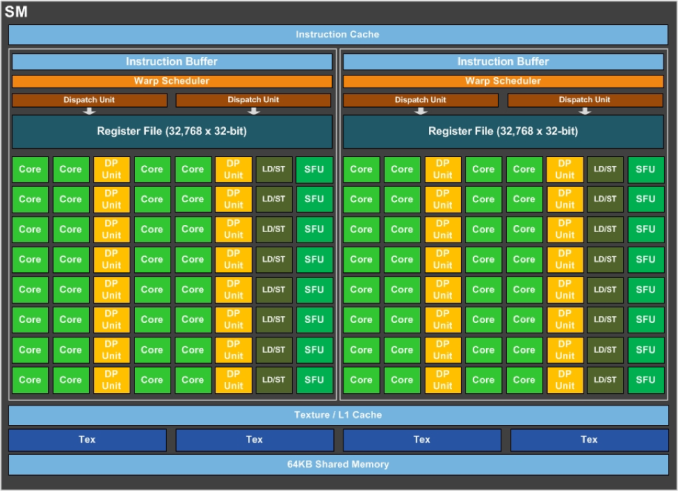

This means that although GP100 is a fully graphics capable GPU, it doesn’t necessarily have to be put into video cards to make sense for NVIDIA to manufacture, and as a result NVIDIA can make it even more compute focused than prior-generation parts like GK110 and GF110. And that in turn means that although this divergence is driven by the needs of the HPC market – what features need to be added to make a GPU more suitable for HPC use cases – from the perspective of the consumer market there is a tendency to perceive that consumer parts are falling behind. Especially with how GP100 and GP104’s SMs are differently partitioned.

This is a subject I’ll revisit in much greater detail in the future when we focus on GP100. But for now, especially for the dozen of you who’ve emailed over the past month asking about why the two are so different, the short answer is that the market needs for HPC are different from graphics, and the difference in how GP100 and GP104 are partitioned reflects this. GP100 and GP104 are both unequivocally Pascal, but GP100 gets smaller SM partitions in order to increase the number of registers and the amount of shared memory available per CUDA core. Shared memory and register contention on graphics workloads isn’t nearly as great as with HPC tasks – pixel shader threads are relatively short and independent from each other – which means that while the increased ratios benefit HPC workloads, for graphics the gains would be minimal. And the costs to power and die space would, in turn, far outweigh any benefits.
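To put rough numbers on that per-core ratio argument, here is a minimal Python sketch. The per-SM figures in it are assumptions taken from NVIDIA's published specifications rather than from anything stated above (64 CUDA cores, 256 KB of registers and 64 KB of shared memory per GP100 SM; 128 CUDA cores, 256 KB of registers and 96 KB of shared memory per GP104 SM), and the `SMConfig` class and its method names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SMConfig:
    """Per-SM resources for one GPU (hypothetical helper, illustration only)."""
    name: str
    cuda_cores: int
    register_file_kb: int
    shared_memory_kb: int

    def registers_kb_per_core(self) -> float:
        # Register file capacity divided evenly across the SM's CUDA cores.
        return self.register_file_kb / self.cuda_cores

    def shared_kb_per_core(self) -> float:
        # Shared memory capacity divided evenly across the SM's CUDA cores.
        return self.shared_memory_kb / self.cuda_cores


# ASSUMED figures from NVIDIA's public specifications, not from this article.
GP100_SM = SMConfig("GP100", cuda_cores=64, register_file_kb=256, shared_memory_kb=64)
GP104_SM = SMConfig("GP104", cuda_cores=128, register_file_kb=256, shared_memory_kb=96)


def per_core_advantage(a: SMConfig, b: SMConfig) -> tuple[float, float]:
    """Return (register ratio, shared memory ratio) of a over b, per CUDA core."""
    return (
        a.registers_kb_per_core() / b.registers_kb_per_core(),
        a.shared_kb_per_core() / b.shared_kb_per_core(),
    )


if __name__ == "__main__":
    for sm in (GP100_SM, GP104_SM):
        print(
            f"{sm.name}: {sm.registers_kb_per_core():.2f} KB registers/core, "
            f"{sm.shared_kb_per_core():.2f} KB shared memory/core"
        )
    reg_ratio, smem_ratio = per_core_advantage(GP100_SM, GP104_SM)
    print(f"GP100 vs GP104 per core: {reg_ratio:.2f}x registers, {smem_ratio:.2f}x shared memory")
```

Under those assumed figures, halving the number of CUDA cores per SM while keeping the register file the same size is what doubles the registers available to each core, which is the trade-off described above.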

200 Comments

DonMiguel85 - Wednesday, July 20, 2016 - link

Agreed. They'll likely be much more power-hungry, but I believe it's definitely doable. At the very least it'll probably be similar to Fury X Vs. GTX 980

sonicmerlin - Thursday, July 21, 2016 - link

The 1070 is as fast as the 980 ti. The 1060 is as fast as a 980. The 1080 is much faster than a 980 ti. Every card jumped up two tiers in performance from the previous gen. That's "standard" to you?

Kvaern1 - Sunday, July 24, 2016 - link

I don't think there's much evidence pointing in the direction of GCN 4 blowing Pascal out of the water. Sadly, AMD needs a win but I don't see it coming. Budgets matter.

watzupken - Wednesday, July 20, 2016 - link

Brilliant review. Thanks for the in depth review. This is late, but the analysis is its strength and value add worth waiting for.

ptown16 - Wednesday, July 20, 2016 - link

This review was a L O N G time coming, but gotta admit, excellent as always. This was the ONLY Pascal review to acknowledge and significantly include Kepler cards in the benchmarks and some comments. It makes sense to bench GK104 and analyze generational improvements since Kepler debuted 28nm and Pascal has finally ushered in the first node shrink since then. I guessed Anandtech would be the only site to do so, and looks like that's exactly what happened. Looking forward to the upcoming Polaris review!

DonMiguel85 - Wednesday, July 20, 2016 - link

I do still wonder if Kepler's poor performance nowadays is largely due to neglected driver optimizations or just plain old/inefficient architecture. If it's the latter, it's really pretty bad with modern game workloads.

ptown16 - Wednesday, July 20, 2016 - link

It may be a little of the latter, but Kepler was pretty amazing at launch. I suspect driver neglect though, seeing as how Kepler performance got notably WORSE soon after Maxwell. It's also interesting to see how the comparable GCN cards of that time, which were often slower than the Kepler competition, are now significantly faster.

DonMiguel85 - Thursday, July 21, 2016 - link

Yeah, and a GTX 960 often beats a GTX 680 or 770 in many newer games. Sometimes it's even pretty close to a 780.

hansmuff - Thursday, July 21, 2016 - link

This is the one issue that has me wavering for the next card. My AMD cards, the last one being a 5850, have always lasted longer than my NV cards; of course at the expense of slower game fixes/ready drivers. So far so good with a 1.5yrs old 970, but I'm keeping a close eye on it. I'm looking forward to what VEGA brings.

ptown16 - Thursday, July 21, 2016 - link

Yeah I'd keep an eye on it. My 770 can still play new games, albeit at lowered quality settings. The one hope for the 970 and other Maxwell cards is that Pascal is so similar. The only times I see performance taking a big hit would be newer games using asynchronous workloads, since Maxwell is poorly prepared to handle that. Otherwise maybe Maxwell cards will last much longer than Kepler. That said, I'm having second thoughts on the 1070 and curious to see what AMD can offer in the $300-$400 price range.