IBM, NVIDIA and Wistron Develop New OpenPOWER HPC Server with POWER8 CPUs

by Anton Shilov on April 6, 2016 12:01 PM EST

IBM, NVIDIA and Wistron have introduced their second-generation server for high-performance computing (HPC) applications at the OpenPOWER Summit. The new machine is designed for IBM’s latest POWER8 microprocessors, NVIDIA’s upcoming Tesla P100 compute accelerators (featuring the company’s Pascal architecture) and the company’s NVLink interconnection technology. The new HPC platform will require software makers to port their apps to the new architectures, which is why IBM and NVIDIA plan to help with that.

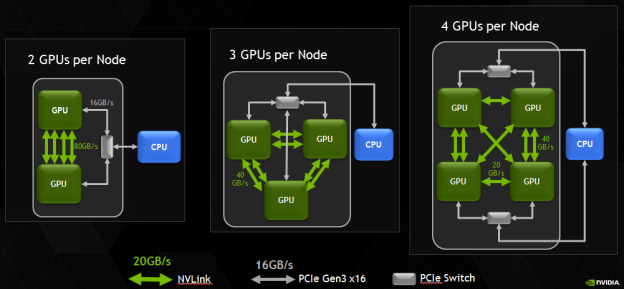

The new HPC platform developed by IBM, NVIDIA and Wistron (which is one of the major contract makers of servers) is based on several IBM POWER8 processors and several NVIDIA Tesla P100 accelerators. At present, the three companies do not reveal a lot of details about their new HPC platform, but it is safe to assume that it has two IBM POWER8 CPUs and four NVIDIA Tesla P100 accelerators. Assuming that every GP100 chip has four 20 GB/s NVLink interconnections, four GPUs is the maximum number of GPUs per CPU, which makes sense from bandwidth point of view. It is noteworthy that NVIDIA itself managed to install eight Tesla P100 into a 2P server (see the example of the NVIDIA DGX-1).

Correction 4/7/2016: Based on the images released by the OpenPOWER Foundation, the prototype server actually includes four, not eight NVIDIA Tesla P100 cards, as the original story suggested.

IBM’s POWER8 CPUs have 12 cores, each of which can handle eight hardware threads at the same time thanks to 16 execution pipelines. The 12-core POWER8 CPU can run at fairly high clock-rates of 3 – 3.5 GHz and integrate a total of 6 MB of L2 cache (512 KB per core) as well as 96 MB of L3 cache. Each POWER8 processor supports up to 1 TB of DDR3 or DDR4 memory with up to 230 GB/s sustained bandwidth (by comparison, Intel’s Xeon E5 v4 chips “only” support up to 76.8 GB/s of bandwidth with DDR4-2400). Since the POWER8 was designed both for high-end servers and supercomputers in mind, it also integrates a massive amount of PCIe controllers as well as multiple NVIDIA’s NVLinks to connect to special-purpose accelerators as well as the forthcoming Tesla compute processors.

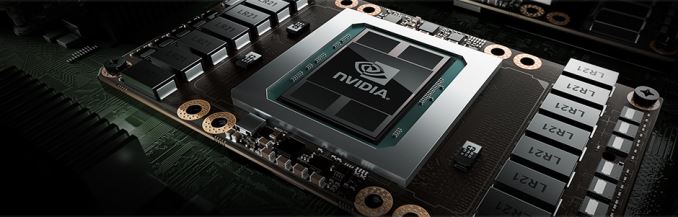

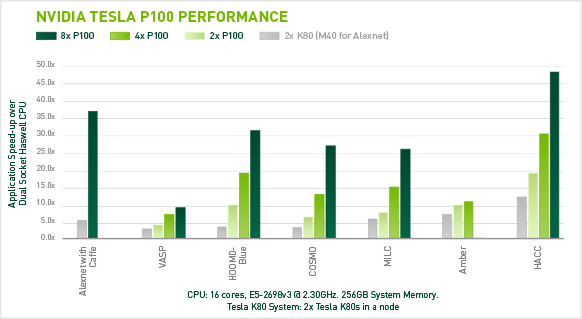

Each NVIDIA Tesla P100 compute accelerator features 3584 stream processors, 4 MB L2 cache and 16 GB of HBM2 memory connected to the GPU using 4096-bit bus. Single-precision performance of the Tesla P100 is around 10.6 TFLOPS, whereas its double precision is approximately 5.3 TFLOPS. A HPC node with eight such accelerators will have a peak 32-bit compute performance of 84.8 TFLOPS, whereas its 64-bit compute capability will be 42.4 TFLOPS. The prototype developed by IBM, NVIDIA and Wistron integrates four Tesla P100 modules, hence, its SP performance is 42.4 TFLOPS, whereas its DP performance is approximately 21.2 TFLOPS. Just for comparison: NEC’s Earth Simulator supercomputer, which was the world’s most powerful system from June 2002 to June 2004, had a performance of 35.86 TFLOPS running the Linpack benchmark. The Earth Simulator consumed 3200 kW of POWER, it consisted of 640 nodes with eight vector processors and 16 GB of memory at each node, for a total of 5120 CPUs and 10 TB of RAM. Thanks to Tesla P100, it is now possible to get performance of the Earth Simulator from just one 2U box (or two 2U boxes if the prototype server by Wistron is used).

IBM, NVIDIA and Wistron expect their early second-generation HPC platforms featuring IBM’s POWER8 processors to become available in Q4 2016. This does not mean that the machines will be deployed widely from the start. At present, the majority of HPC systems are based on Intel’s or AMD’s x86 processors. Developers of high-performance compute apps will have to learn how to better utilize IBM’s POWER8 CPUs, NVIDIA Pascal GPUs, the additional bandwidth provided by the NVLink technology as well as the Page Migration Engine tech (unified memory) developed by IBM and NVIDIA. The two companies intend to create a network of labs to help developers and ISVs port applications on the new HPC platforms. These labs will be very important not only to IBM and NVIDIA, but also to the future of high-performance systems in general. Heterogeneous supercomputers can offer very high performance, but in order to use that, new methods of programming are needed.

The development of the second-gen IBM POWER8-based HPC server is an important step towards building the Summit and the Sierra supercomputers for Oak Ridge National Laboratory and Lawrence Livermore National Laboratory. Both the Summit and the Sierra will be based on the POWER9 processors formally introduced last year as well as NVIDIA’s upcoming code-named Volta processors. The systems will also rely on NVIDIA’s second-generation NVLink interconnect.

Image Sources: NVIDIA, Micron.

Source: OpenPOWER Foundation

50 Comments

View All Comments

JohnMirolha - Wednesday, April 6, 2016 - link

Well...Some things run better in parallel and there is when a GPU with 3584 cores can handle brilliantly.

Other things run sequentially, and then you need the fastest core available to get rid of the contention.

NVLINK is primarily for GPUs to exchange data between them. This avoid going thru PCI bus, passing by the CPU just to get to the other GPU on the system.

CAPI on the other hand is built for this kind of thing you mention with IB. Melanie 100Gbps IB (2016) and 200Gbps IB (2017) will leverage CAPI in order to allow direct memory Access, without the need of going thru the CPU. Can you imagine RDMA over 100Gbps with with adapter writing directly to destination memory address ?!?

frenchy_2001 - Thursday, April 7, 2016 - link

NVLink is actually designed as a processor to processor link with NUMA for memory sharing (think intel QPI or AMD hypertransport).And I thought one of the point of using POWER8 with Pascal was to link the POWER8 CPU and the GPU using NVLink instead of PCIe. That would give better bandwidth and probably better latency, while allowing shared memory.

Kevin G - Thursday, April 7, 2016 - link

POWER8+ is supposed to support nvLink natively and is supposed to launch later this year. It is odd that IBM is showing off Pascal connected via the PCIe bus with POWER8 instead of directly with POWER8+.jesperfrimann - Friday, April 8, 2016 - link

@Kevin G.It's IBM if they can milk an old product a Little longer they will...

// Jesper

extide - Wednesday, April 6, 2016 - link

I wonder if Intel will make a REAL server CPU again one day. I know they tried, and failed, with Itanium, but if they kep the x86 arch and built it massively wide, put huge memory b/w, and targeted similar TDP's as the POWER CPU's typically do (250+w) ... it could be interesting. As it is now, Intel server chips are just a crapton of little mobile cores hooked up (granted, they do use a pretty fancy ringbus network and all).Pinn - Wednesday, April 6, 2016 - link

Hz died a long time ago.Shadow7037932 - Wednesday, April 6, 2016 - link

Intel will do it when they feel the heat. Right now, it looks like they aren't really facing too much competition.JohnMirolha - Wednesday, April 6, 2016 - link

"Intel will do when they feel the heat"... Join the dots below:http://www.digitaltrends.com/computing/intels-lead...

http://arstechnica.com/information-technology/2016...

http://www.businessinsider.com/intel-ceo-brian-krz...

http://wccftech.com/intel-14nm-broadwell-cpu-archi...

They are cornered in technology terms. Lates chip only got 5% better at the core level, so they packed more cores. When you have a GPU at your side you gotta be pristine on single thread.

It might do the trick for cloud with several virtual machines, but for HPC it is not the way to handle it.

They can pull it off, but so far being the do-it-all cpu is not looking good.

Kevin G - Thursday, April 7, 2016 - link

Intel can do higher clock speeds and even higher IPC. The problem is that power consumption would increase at a rate higher than the performance gains from the additional clocks or IPC. Intel has an internal design rule that any major change can only increase power consumption by 1% only if it increases performance by 2% or more. This has forced Intel to focus on efficiency, not absolute raw performance.Things are slowly changing as SkyLake-EP is a slightly different core than the consumer SkyLake chips currently on the market. The workstation/server chip gets AVX-512 and believed to carry 512 KB of L2 cache per core for example. I can see Intel implementing a few IPC increases that don't adhere to their current design rules just to push the market forward.

Michael Bay - Saturday, April 9, 2016 - link

"Intel is cornered" song is so old nobody even registers it consciously anymore.Believing absolute technology leader can`t eke more than 5% IPC increase yearly because of anything other than marketing considerations is plain dumb.