The Dell XPS 15 9550 Review: Infinity Edge Lineup Expands

by Brett Howse on March 4, 2016 8:00 AM EST

Battery Life and Charge Time

The XPS 15 is available with two battery sizes. If you opt for the base model, it comes with a 2.5” SATA drive and a 56 Wh battery. If you opt for a device with the M.2 SSD, the extra space taken up by the 2.5” drive is replaced with more battery cells, giving you 84 Wh of capacity. It also adds about 0.5 lbs of weight to the device, but if you are going to be working away from an outlet, the SSD model should give much better battery life.

But with the high-resolution display and wider color gamut, battery life is going to take a hit compared to something with a more traditional display. Since Dell sent us the UHD model, that’s the one we have to test.

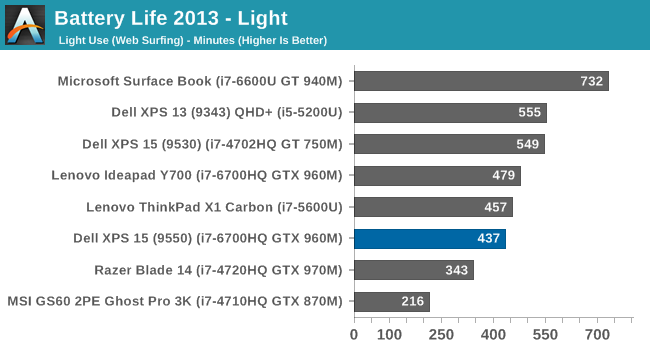

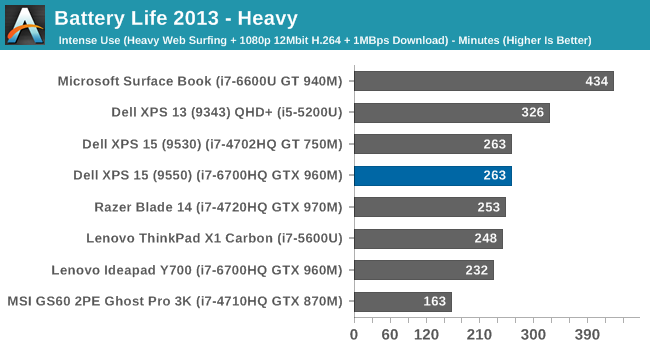

To test battery life we have two tests. The light test involves light web browsing, with the display set to 200 nits brightness. The heavy test increases the pages loaded by the browser, adds a 1 MB/s file download, and includes movie playback. All testing is done with Edge as the browser.

Light Battery

The XPS 15, with its quad-core CPU and high resolution display, can’t keep up with the best devices for battery life, even on light usage. At just under 7.5 hours, it is well under the XPS 13 and Surface Book results, despite the larger battery. It is also below the XPS 15 9530 results, and that device has a 91 Wh battery and 3200x1800 display.

Heavy Battery

With the extra CPU workload, as well as constant network use, the battery life falls to just 4:23. This is exactly the same as the XPS 15 9530 score, so the new 9550 model is certainly somewhat more efficient: it matches that result with a higher resolution display and a slightly smaller battery. It’s still not a great result though.

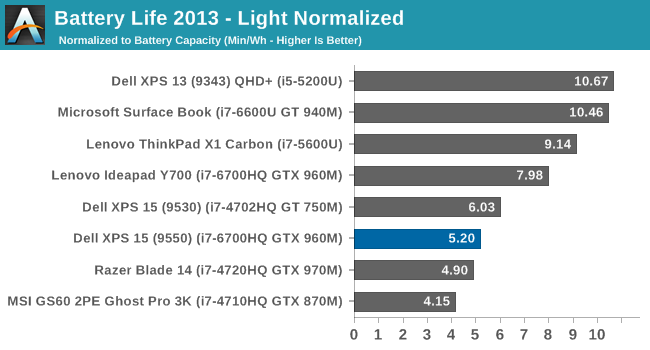

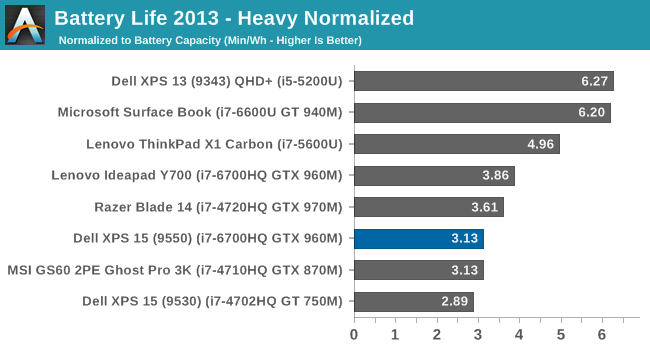

Normalized Battery

By removing the battery size from the equation, we can get an overall feel for platform efficiency. The XPS 15, despite the higher resolution display, does outperform the XPS 15 9530 on the heavy results, but the UHD display certainly hurts it compared to other devices. The Surface Book with discrete GPU is over double the efficiency, but with a dual-core processor. The Lenovo Y700 has the same processor and GPU, but a much lower resolution display, and it comes out quite a bit ahead of the XPS 15. For those that are normally plugged in, the UHD display is fantastic, but be warned, it’s a big hit on battery life.
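As a rough illustration of the normalization described above, the sketch below divides runtime by battery capacity, assuming the normalized figure is simply minutes of runtime per Wh. It uses only the heavy-test numbers quoted in the text (4:23 for both models, 84 Wh for the 9550, 91 Wh for the 9530); the function names are made up for this example.

```python
# Normalized battery life, assumed here to be runtime (minutes) per Wh of capacity.

def to_minutes(hours: int, mins: int) -> int:
    """Convert an h:mm runtime into total minutes."""
    return hours * 60 + mins

def minutes_per_wh(runtime_min: float, capacity_wh: float) -> float:
    """Runtime divided by battery capacity; higher means a more efficient platform."""
    return runtime_min / capacity_wh

# Heavy test: both models lasted 4:23, per the text.
heavy_runtime = to_minutes(4, 23)

xps_9550 = minutes_per_wh(heavy_runtime, 84)  # 84 Wh battery
xps_9530 = minutes_per_wh(heavy_runtime, 91)  # 91 Wh battery

print(f"XPS 15 9550 heavy: {xps_9550:.2f} min/Wh")
print(f"XPS 15 9530 heavy: {xps_9530:.2f} min/Wh")
```

With identical runtimes, the smaller battery in the 9550 is what gives it the higher per-Wh figure.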

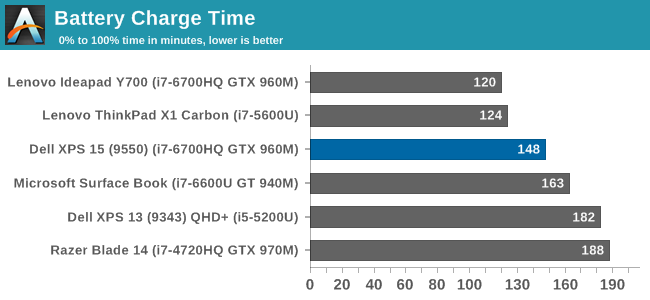

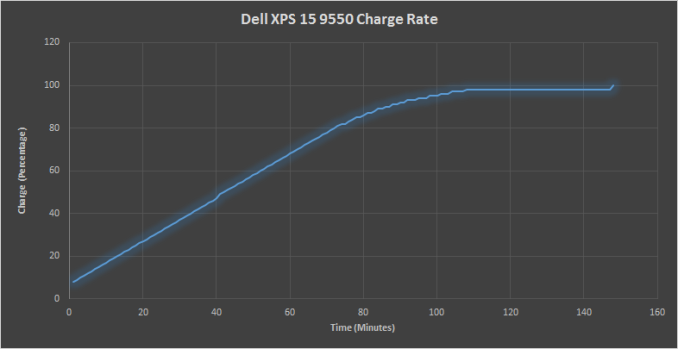

Charge Time

The other side of battery life is how long it takes to charge. With an 84 Wh battery, this is a significant amount of capacity to top up. Luckily Dell ships the XPS 15 with a 130-Watt power adapter.

At 148 minutes, the XPS 15 charges very quickly. At least with the less than stellar battery life, once you do plug it in, it gets back on its feet pretty quickly.
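To put the charge time in context, here is a back-of-the-envelope sketch of the average power going into the battery. It assumes a full 0 to 100% charge of the 84 Wh pack in 148 minutes and ignores conversion losses and the slower top-off phase, so treat it as an estimate rather than a measurement.

```python
# Average charge rate implied by the figures in the text.
# Assumption: the full 84 Wh is delivered over the 148-minute charge.

def average_charge_power_w(capacity_wh: float, charge_minutes: float) -> float:
    """Average power (W) needed to deliver capacity_wh in charge_minutes."""
    return capacity_wh / (charge_minutes / 60.0)

avg_power = average_charge_power_w(84, 148)
adapter_w = 130  # rated output of the bundled power adapter

print(f"Average charge power: {avg_power:.1f} W")
print(f"Share of adapter rating: {avg_power / adapter_w:.0%}")
```

The average comes out well below the adapter's 130 W rating, which leaves headroom for running the laptop itself while it charges.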

152 Comments


nerd1 - Saturday, March 12, 2016 - link

It's SWITCHABLE. So it won't drain battery when not in use. And it can play most games at 1080p. It's the same GPU as Alienware 13.

External GPU is stupid idea in general. You need a huge separate case and separate monitor, which is as large and often just as expensive as building a separate gaming desktop.

Valantar - Saturday, March 12, 2016 - link

No, no, and no.

Even Optimus-enabled laptops draw more power than dGPU-less equivalents - the dGPU remains "on", but sleeping. Optimus isn't a hard power-off switch. Also, heat production is a major concern, which isn't really solvable in a thin+light laptop form factor. I'd rather have a ~45W-60W quad core with Iris/- Pro than a 45W quad core with HD 520 + a 65W dGPU.

Second, you don't need a huge case for an external GPU - that current models are huge is a product of every single one presented aiming for compatibility with even the most overpowered GPUs (like the Fury or 980Ti). A case for dual slot mITX sized (17cm length) desktop GPUs with an integrated ~200W power supply wouldn't need to be much larger than, say, 10x20x15cm. That's roughly the size of an external dual HDD case. As the market grows (well, technically as the market comes into existence in this case), more options will appear, and not just huge ones.

Also, there's the option of mobile GPUs in tiny cases. Most of these come in the form of MXM modules, which are 82x100mm. Add a case around that with a beefy blower cooler, and you'd have a box roughly the size of an external 2.5" HDD, but 2-3 times as thick. Easy to carry, easy to connect. Heck, given that USB 3.1/TB3 can carry 100W of power, you could even power this from the laptop, although I don't think that's a great idea for long term use. I'd rather double the width of the external box and add a beefy battery. Thin and light laptop with great battery life? Check. Gaming power? Check. Better battery life than the same laptop with a built-in dGPU? Check.

And third, in case you aren't keeping up with recent announcements, you should read up on modern external GPU tech in regard to gaming on the laptop screen. At least with AMD's (/Intel's/Razer's) solution, this is not a problem. We'll see if Nvidia jumps on board or if they keep being jerks in terms of standards.

nerd1 - Saturday, March 12, 2016 - link

Have you EVER used an Optimus enabled laptop? My XPS 15 typically draws around 10W during idle, which means the GPU should draw less than a couple of watts while idle. I can say that is negligible, considering XPS 15 can equip 84 Wh of battery. Just do the math.

And iris pro is a joke, it's much weaker than 940m, which can barely play old games at 768p. 960m is roughly 3-4 times more powerful and can play most games at 1080p.

Finally external GPU. External GPU should have its own case, its own PSU and should be connected to external display unless you want to halve the bandwidth. Then why just don't add CPU and SSD to make a standalone gaming desktop? Dell Graphics Amp cost $300, and Razer one will cost much more (naturally). One can build decent desktop (minus GPU) at that price.

Valantar - Tuesday, March 15, 2016 - link

If the GPU - that is supposed to be switched off! - uses "less than a couple of watts" out of 10W total power draw, that is <20% - definitely not negligible. Of course, with the review unit the 4K display adds to its subpar battery life, but I have no doubt that the dGPU adds to it as well.

And sure, Broadwell Iris Pro was only suitable for 720p gaming, as seen in AT's Broadwell Desktop review. However, Skylake Iris Pro increases EUs by 50% (from 48 to 72), in addition to reduced power and other architectural improvements. It's not out yet, but it will be soon, and it will eat 940ms for breakfast. This would be _perfect_ for mobile use - able to run most games at an acceptable resolution and detail level if absolutely necessary, but with an eGPU for proper gaming.

And lastly: "External GPU should have its own case, its own PSU and should be connected to external display unless you want to halve the bandwidth." What on earth are you talking about? Why SHOULD they do any of this? Of course it would need its own case and some form of power supply - but that case could easily be a very compact one, and the power supply could be through TB3 if you wanted to, although (as stated in my previous posts), I'd argue for dedicated power supplies and/or dedicated batteries for these. And why would using the integrated display halve the bandwidth? It wouldn't use DP alt mode for this, the signal would be transferred through PCIe - exactly the same as dGPUs in laptops do today. You'd never know the difference: except for some completely imperceptible added latency, this should perform exactly like your current laptop, given the same GPU.

Your argument goes something like this:

You: eGPU cases are too bulky and expensive, and you need to buy a monitor!

Me: They don't have to be, and you don't have to!

You: But that's how it SHOULD be!

This makes no sense.

Of course, there is a difference of opinion here. You want a middling dGPU in your laptop, and don't mind the added weight, heat and power draw. I do mind these, and don't want it - I'd much rather add an external GPU with more processing power. I'm not arguing to remove your option, only to add another, more flexible one. Is that really such a problem for you?
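The idle-power disagreement in this exchange comes down to simple arithmetic, sketched below. The inputs are the figures quoted in the comments (about 10 W at idle, an 84 Wh battery, and "less than a couple of watts" for the sleeping dGPU); the 2 W value is taken as an upper bound for illustration only, not a measured number.

```python
# Idealized runtime estimate: capacity divided by a constant power draw.
# The 2 W dGPU figure is an assumed upper bound taken from the comment thread.

def runtime_hours(capacity_wh: float, draw_w: float) -> float:
    """Hours of runtime at a constant draw, ignoring all losses."""
    return capacity_wh / draw_w

capacity_wh = 84.0        # larger XPS 15 battery
idle_draw_w = 10.0        # idle draw reported by the commenter
dgpu_upper_bound_w = 2.0  # "less than a couple of watts"

with_dgpu = runtime_hours(capacity_wh, idle_draw_w)
without_dgpu = runtime_hours(capacity_wh, idle_draw_w - dgpu_upper_bound_w)
dgpu_share = dgpu_upper_bound_w / idle_draw_w

print(f"Idle runtime at 10 W: {with_dgpu:.1f} h")
print(f"Idle runtime at 8 W:  {without_dgpu:.1f} h")
print(f"dGPU share of idle draw (upper bound): {dgpu_share:.0%}")
```

Whether a difference of this size counts as negligible is exactly what the two commenters disagree on.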

nerd1 - Wednesday, March 16, 2016 - link

a) You haven't provided ANY proof that idle GPU with Optimus affects the battery life a lot. And based on your logic, more powerful iGPU will increase the power consumption JUST AS WELL.

b) Intel has been claiming great iGPU performance FOR YEARS. Yet the actual game framerates with iGPU are much worse than benchmark results. For example, BioShock infinite with high setting and 720p shows ~35fps with Iris pro 5200, and ~56fps on 940m with DDR5 (one in the surface book), 115fps with 960m.

c) You need external monitor otherwise the bandwidth will be halved (as you need to send the video signal back to the laptop), hampering the GPU performance. There already are external GPU benchmarks around. Please go check.

d) No sane person will make/buy external GPU system that only accepts very expensive mobile GPUs, which makes the whole point of external GPU moot. External GPU box should at least be able to top double-width GPU (980 ti for example) that requires PSU of at least 300 Watts range.

And you are arguing to remove the dGPU from the system, which is given almost for free (unlike Apple), and way more powerful than whatever iGPU intel has now, and does not affect the battery life too much.

Valantar - Thursday, March 17, 2016 - link

Wow, you really seem opposed to people actually getting to choose what they want.

a) The proof for added power draw with idle optimus GPUs is quite simple: as long as it's not entirely powered off, it will be consuming more power than if it wasn't there. That is indisputable. And sure, a larger iGPU would have higher power draw under load, and possibly at idle as well. But it still wouldn't require to power an entire separate card, separate power delivery circuitry, and other dGPU components. So of course, higher performance iGPUs consume more power, but not nearly as much as a dGPU, and probably less than a less powerful iGPU + sleeping dGPU.

b) Sure, Intel has been claiming great iGPU performance for years. Last year, they kinda-sorta delivered with Broadwell Iris Pro (48 eu + 128mb eDRAM), but not really in terms of performance vs. power. This year, there are Iris 540/550 (48 eu + 64MB eDRAM) units with better eDRAM utilization and lower power (BDW was 45-65W, SKL is 15W and upwards with Iris 540, 28W with Iris 550). And then there will soon be Iris Pro 580, with 72 EUs and 128MB eDRAM at the same frequencies, at 45W and upwards. Given GPU parallelism, it's reasonable to expect the Iris Pro 580 to perform ~40% better than the BDW Iris pro given the 50% increase in EUs (in addition to other improvements). In your BioShock Infinite example that falls short of matching the 940m (a 40% increase from 35fps is still only 49fps).

But - and this is the big one - that's comparing it with a separate ~30W (probably slightly higher with the Surface Book due to GDDR5's higher power draw than DDR3) GPU. So, in 45W, you could get a dual core i7 w/low end iGPU (Iris not needed for gaming in this scenario), and a GT 940m. Or you could get a Quad Core i7 with higher base and turbo clocks and an Iris Pro iGPU. Comparing those two hypothetical systems (with identical power draw, and as such fitting in the same chassis) my money would be on the Iris Pro all the way. Sure, you could easily beat this with a 945m, 950m or 960m. But those are 40, 50 and 60W GPUs, respectively. And you'd really want a quad core to saturate those graphics cards, in which case you're stuck with Intel's 45W series. Which leaves you with the need for a drastically larger chassis and higher powered fans to cool this off, as you'd need to remove at least 85W of heat. Sure, there is a market and a demand for those PCs. I'm just saying that there is a market for lower powered solutions as well, and if a 45W system can perform within 90+% of a 75W one, I'd go for the low powered one every time. You might not, but arguing that systems like these shouldn't exist just because you don't want them is just plain dumb.

Edit: ah, bugger, I just noticed that the Iris Pro you're citing (5200) isn't BDW but the HSW version - which had fewer EUs still (40, not 48), and is architecturally far inferior to more current solutions. Which only means that my estimates are really lowball, and could probably be increased by another 20% or so.
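The frame-rate projection in point b) can be written out as a quick sketch. The baseline numbers are the BioShock Infinite figures quoted earlier in the thread (~35 fps, ~56 fps, 115 fps), and the 40% uplift is the commenter's own estimate, so the result is a guess layered on a guess rather than a benchmark.

```python
# Projecting iGPU frame rate from a percentage uplift.
# All inputs are figures quoted in the comments; the uplift is the commenter's estimate.

def projected_fps(baseline_fps: float, uplift_percent: float) -> float:
    """Scale a baseline frame rate by a percentage uplift."""
    return baseline_fps * (100 + uplift_percent) / 100

iris_pro_5200_fps = 35  # quoted iGPU result
gt_940m_fps = 56        # quoted Surface Book dGPU result
gtx_960m_fps = 115      # quoted 960m result

estimate = projected_fps(iris_pro_5200_fps, 40)

print(f"Projected Iris Pro 580: {estimate:.0f} fps")
print(f"Gap to 940m: {gt_940m_fps - estimate:.0f} fps")
print(f"Gap to 960m: {gtx_960m_fps - estimate:.0f} fps")
```

Changing `uplift_percent` shows how sensitive the comparison with the 940m is to that one assumed number.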

c) You seem VERY sure that it would (specifically) halve bandwidth. What is this based on? DP alt mode over USB 3.1 using two lanes? If so: you realize that TB3 and USB 3.1 are not the same, right? Also, that supposes that the GPU transfers this data as a DP signal - which has 20% overhead, compared to the 1,54% overhead of PCIe 3.0. So, it would seem far more logical for the eGPU to transfer the image back through PCIe, and have it converted to eDP at a later stage (or in the iGPU, as Optimus does: http://www.trustedreviews.com/opinions/nvidia-opti...). Also, given that AMD specifically mentioned gaming on laptop monitors with XConnect, don't you think this is an issue that their engineers have both discussed and researched quite thoroughly? Sure, there are external GPU benchmarks around. Based either on unreleased (and thus unfinished) technology, or DIY or proprietary solutions that are bound to be technically inferior to an industry standard. Am I saying performance will be unaffected? No, but I'm saying that you probably wouldn't notice.

d) Says you. Sorry, but why not? People buy laptops with these "very expensive mobile GPUs" already installed, right? And sure, the case and PSU would add a small cost (60-90W power bricks are VERY cheap for OEMs, but the TB3 controller, cooling solution and case would cost a bit. I'd say the total would be <$100. First gen ones would probably be expensive, but then they would drop. Quickly.). Saying that ALL eGPU boxes should handle EVERY full size GPU at max power is beyond dumb. Not even all computer cases fit those cards, yet they still sell. Should some? Sure, like the Razer Core. But that's the top-end solution. Not everyone needs - or wants - a case that could fit and power a 980ti. Saying that there shouldn't be lower-end solutions is such a baffling idea that I really don't know how to argue with that - except to say: Why the hell not? It would be EASY for an OEM to build an eGPU case with support for cards up to, say, 150W (which encompasses the vast majority of desktop GPU sales) for $200.

And giving you the GPU for free? Really? Let's look at the $1000 and $1200 versions of the XPS 15. For $200, you get the i5 over the i3, twice the HDD storage, and the 960m. The Recommended Customer Price for the two CPUs is $250 and $225, respectively (http://ark.intel.com/compare/88959,89063). So that's $25 of your $200. At Newegg, the cheapest 500GB 2,5" drive is $39,99, while the cheapest 1TB one is $49,99. And of course, these are retail products at retail prices with retail margins included. OEMs pay far less than this. But I'll be generous, and give you the full $10 difference. So far, we've accounted for $35 of your $200 price hike, and there is one component left. In other words, you're paying $165 for that GPU. The only reason the i5 isn't in the $1000 model is that Dell wants product differentiation.
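The price breakdown above can be reproduced in a few lines. This sketch uses only the prices quoted in the comment (retail figures, which the commenter notes are higher than what OEMs pay) and works in whole cents to avoid floating-point rounding.

```python
# Implied price of the 960m in the $1200 configuration, using the quoted prices.
# All values are in cents; the figures are retail prices cited in the comment.

config_gap = 120_000 - 100_000  # $1200 model vs. $1000 model
cpu_gap = 25_000 - 22_500       # Recommended Customer Prices: $250 vs. $225
hdd_gap = 4_999 - 3_999         # cheapest 1TB vs. 500GB 2.5" drive

implied_gpu_cost = config_gap - cpu_gap - hdd_gap

print(f"CPU difference: ${cpu_gap / 100:.2f}")
print(f"HDD difference: ${hdd_gap / 100:.2f}")
print(f"Implied GPU price: ${implied_gpu_cost / 100:.2f}")
```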

I for one would gladly buy a $1300 XPS 15 with the i5 and the 960m moved to an external case - or, of course, even more to add a more powerful GPU. The point is the flexibility and upgradeability. Many people are very willing to pay for that, especially when it comes to laptop GPUs.

carrotseed - Wednesday, March 9, 2016 - link

the 15.6in variant would be perfect for those working with numbers if there is an option for a keyboard with a separate number pad

Valantar - Saturday, March 12, 2016 - link

There's no room for a numpad due to the smaller chassis - you could fit one, but it would require shrinking keys and ruining the keyboard. No thanks.

carrotseed - Saturday, March 12, 2016 - link

you can keep the keyboard the same size and shrink the numpad a little. Do you see those big empty spaces on both sides of those keyboards on the XPS 15? I'm sure the small cost of having two options for the keyboard can be passed on to those who will opt for the numpad variant.

carrotseed - Saturday, March 12, 2016 - link

erratum: *...the keyboard on the XPS 15*