Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

The Performance Impact of Asynchronous Shading

Finally, let’s take a look at Ashes’ latest addition to its stable of headlining DX12 features: asynchronous shading/compute. While earlier betas of the game implemented a very limited form of async shading, this latest beta contains a newer, more complex implementation of the technology, inspired in part by Oxide’s experiences with multi-GPU. As a result, async shading could have a greater impact on performance than in earlier betas.

Update 02/24: NVIDIA sent us a note this afternoon letting us know that asynchronous shading is not enabled in their current drivers, which explains the performance we are seeing here. Unfortunately they are not providing an ETA for when this feature will be enabled.

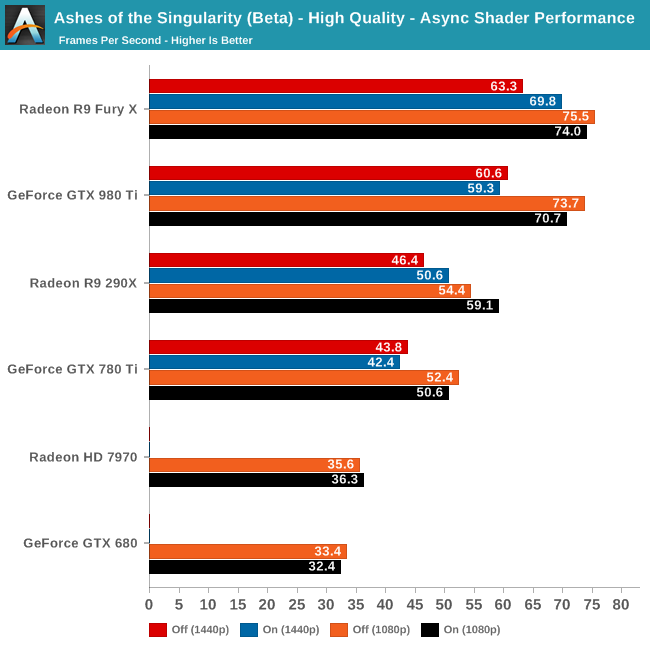

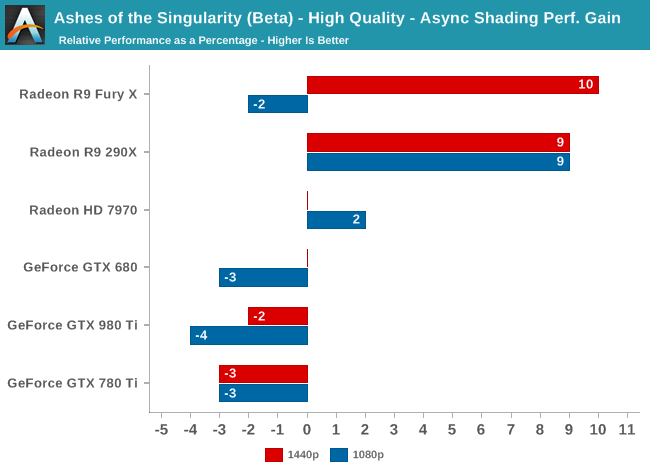

Since async shading is turned on by default in Ashes, what we’re essentially doing here is measuring the penalty for turning it off. Not unlike the DirectX 12 vs. DirectX 11 situation – and possibly even contributing to it – what we find depends heavily on the GPU vendor.

All NVIDIA cards suffer a minor regression in performance with async shading turned on. At a maximum of -4% it’s really not enough to justify disabling async shading, but at the same time it means that async shading is not providing NVIDIA with any benefit. With RTG cards, on the other hand, it’s almost always beneficial, with the benefit growing with the overall performance of the card. In the case of the Fury X this means a 10% gain at 1440p and, though not plotted here, a similar gain at 4K.
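For reference, the percentages quoted here are relative frame rate differences. The following is a minimal Python sketch of that arithmetic, assuming the async-off run is treated as the baseline (the charts’ exact method isn’t spelled out in this section). The function name and the frame rates in the usage lines are hypothetical, not measurements from this article.

```python
def async_delta_pct(fps_async_on: float, fps_async_off: float) -> float:
    """Percent change in average frame rate from enabling async shading.

    Assumes (hypothetically) that the async-off run is the baseline.
    A positive result means async shading helped; a negative result
    means it cost performance.
    """
    if fps_async_off <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (fps_async_on - fps_async_off) * 100.0 / fps_async_off


# Hypothetical frame rates, not results from this article:
print(async_delta_pct(55.0, 50.0))  # 10.0
print(async_delta_pct(48.0, 50.0))  # -4.0
```

Because the baseline sits in the denominator, the same absolute frame rate change shows up as a larger percentage on a slower card, which is worth keeping in mind when comparing cards of very different speeds.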

These findings do go hand-in-hand with some of the basic performance goals of async shading, primarily that it can improve GPU utilization. At 4096 stream processors the Fury X has the most ALUs of any card on these charts, and given its performance in other games, the numbers we see here lend credence to the theory that RTG isn’t always able to reach full utilization of those ALUs, particularly on Ashes. In that case, async shading could be a big benefit going forward.

As for the NVIDIA cards, that’s a harder read. Is it that NVIDIA already has good ALU utilization? Or is it that their architectures can’t do enough with asynchronous execution to offset the scheduling penalty for using it? Either way, when it comes to Ashes NVIDIA isn’t gaining anything from async shading at this time.
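To make the two readings concrete, here is a deliberately simplified toy model in Python. It is our own illustration, not how Oxide’s engine, either vendor’s drivers, or their hardware actually schedule work. It assumes a frame’s compute work can only soak up ALU capacity that the graphics work leaves idle, and it charges a fixed, hypothetical `overhead_ms` for using async shading. `FrameWork`, `idle_fraction`, `overhead_ms` and all of the numbers are made-up parameters.

```python
from dataclasses import dataclass


@dataclass
class FrameWork:
    """Hypothetical per-frame workload; times are in ms of whole-GPU time."""
    graphics_ms: float    # time the graphics work takes on its own
    compute_ms: float     # time the compute work would take with the whole GPU
    idle_fraction: float  # share of ALUs left idle while graphics runs (0..1)


def serial_frame_ms(w: FrameWork) -> float:
    # Async shading off: compute work waits until the graphics work is done.
    return w.graphics_ms + w.compute_ms


def async_frame_ms(w: FrameWork, overhead_ms: float) -> float:
    # Async shading on: compute work fills ALUs that graphics leaves idle.
    absorbed_ms = min(w.compute_ms, w.idle_fraction * w.graphics_ms)
    leftover_ms = w.compute_ms - absorbed_ms
    # overhead_ms is a hypothetical stand-in for scheduling/sync costs.
    return w.graphics_ms + leftover_ms + overhead_ms


def async_gain_pct(w: FrameWork, overhead_ms: float) -> float:
    # Frame rate is the inverse of frame time, so the gain is t_off / t_on - 1.
    return (serial_frame_ms(w) / async_frame_ms(w, overhead_ms) - 1.0) * 100.0


busy = FrameWork(graphics_ms=10.0, compute_ms=2.0, idle_fraction=0.0)
underused = FrameWork(graphics_ms=10.0, compute_ms=2.0, idle_fraction=0.25)
print(f"{async_gain_pct(busy, 0.3):+.1f}%")       # -2.4%
print(f"{async_gain_pct(underused, 0.3):+.1f}%")  # +16.5%
```

With no idle ALUs to fill, the overhead is pure cost, which resembles the small regression on the NVIDIA cards; with idle ALUs available, the same overhead is easily repaid, which resembles the RTG results. Real GPUs are far messier than this, so the model only illustrates why both outcomes are plausible.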

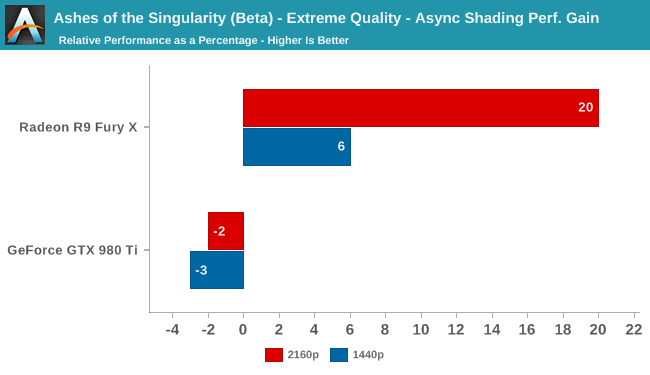

Meanwhile pushing our fastest GPUs to their limit at Extreme quality only widens the gap. At 4K the Fury X picks up nearly 20% from async shading – though a much smaller 6% at 1440p – while the GTX 980 Ti continues to lose a couple of percent from enabling it. This outcome is somewhat surprising since at 4K we’d already expect the Fury X to be rather taxed, but clearly there’s quite a bit of shader headroom left unused.

153 Comments

tuxRoller - Friday, February 26, 2016

It's the simpler drivers which provide less room to hide architectural deficiencies. My point was that, across the board, GCN improves its performance a good deal relative to D3D11. That includes cards that are four years old. I don't think Maxwell is older than that.

I don't think we are really disagreeing, though.

RMSe17 - Wednesday, February 24, 2016

Nowhere near as bad as the DX9 fiasco back in the FX 5xxx days, when a low-end ATi card would demolish the highest-end GeForce.

pt2501 - Thursday, February 25, 2016

Few people here, if any, are going to remember the fiasco when the Radeon 9700 Pro demolished the competition in performance and stability. Even fewer remember NVIDIA "optimizing" games with lower-quality textures to compete.

dray67 - Thursday, February 25, 2016

I remember it, and it was the reason I went for the 9700 and later the 9800. At the moment I'm back to Nvidia; I've had two AMD cards die on me due to heat. As much as I like them, I've had my fingers burnt and moved away from them. If DX12 and dual-GPU support become better supported, I'll buy a high-end AMD card in an instant.

knightspawn1138 - Thursday, February 25, 2016

I remember it clearly. My Radeon 9800 was the last ATI card I bought. I loved it for years, and only ended up replacing it with an NVidia card when the Catalyst Control Center started sucking all the cycles out of my CPU. It's funny that half of the comments on this article complain that NVidia's drivers are over-optimized for every specific game, yet ATI and AMD were content to let the CCC be a resource hog that ruined even non-gaming performance for years. I'm happy with my NVidia cards. I've been able to easily play all modern games with great performance using a pair of GTX 460s, and recently replaced those with a GTX 970.

xenol - Thursday, February 25, 2016

Considering there aren't any other async shader games in development or announced, and with Pascal coming within the next year (by which time a game might actually use DX12) and likely alleviating the situation, your evaluation of NVIDIA's situation is pretty poor. It takes more than a generation or a game to make a hardware company go down. NVIDIA suffered plenty during its GeForce FX days, and it got right back on its feet.

MattKa - Thursday, February 25, 2016

No, no, no. An RTS game that probably isn't going to sell very well and seems incredibly lacking is going to destroy Nvidia.

gamerk2 - Thursday, February 25, 2016

AMD has had an async compute engine in their GPUs going back to the 7000 series. NVIDIA has not. It stands to reason that AMD would do better in async compute-based benchmarking. Let's see how Pascal compares, since it's being designed with DX12, and async compute, in mind.

agentbb007 - Saturday, February 27, 2016

"NVIDIA telling us that async shading is not currently enabled in their drivers", yeah, this pretty much sums it up. This beta stuff is interesting, but it's just that: beta...

JlHADJOE - Saturday, February 27, 2016

The GTX 680 seems to have done well though. I feel like Maxwell is being let down by the compromises Nvidia made optimizing for FP16 only and sacrificing real compute performance.