Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST- Posted in

- CPUs

- Arm

- x86

- Architecture

- Soft Machines

- IPC

The VISC Instruction Set and Global Front End

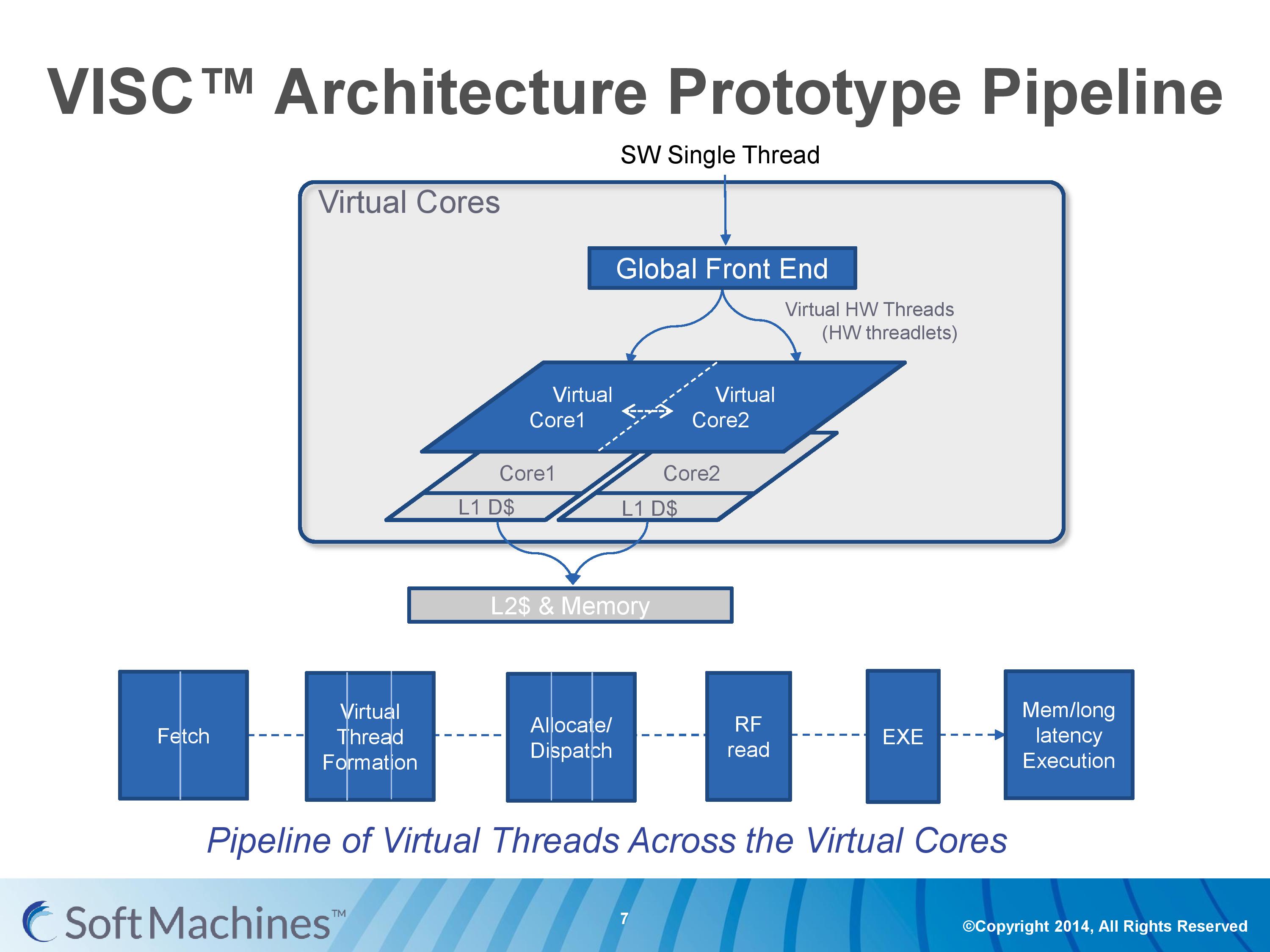

Common instruction set architectures (ISAs) such as x86, ARMv8, Power, SPARC and other more esoteric ones rely on system code converting into predefined instructions that each design can handle. VISC comes with its own ISA as well, separate from the others, which VISC cores and virtual cores use. When using native VISC code, the global front end will split the instructions into smaller ‘virtual hardware threadlets’ which are then dispatched to separate virtual cores. These virtual cores can then issue them to the available resources on any of the physical cores and keep track of where the data goes. Multiple virtual cores can push threadlets into the reorder buffer of a single physical core, which can split partial instructions and data from multiple threadlets through the execution ports at the same time. We were told that each ‘virtual core’ keeps track of the position of the relative output.

The true kicker (and so much of what sets VISC apart) is that when multiple virtual cores are in flight at one time, the core design allows the virtual core allocation of resources to be dynamic on a near-single cycle latency level (we were told from 1-4 cycles depending on the change in allocation). Thus if two virtual cores are competing for resources, there are appropriate algorithms in place to determine what resources are allocated where.

One big area of focus in optimizing processor designs for single-thread performance is speculation – being able to deal with branches in code and/or prefetch relevant data from memory when needed. Typically when speculation occurs, as the data for a single thread is contained within a core, it is easy enough to deal with code paths that rely on previous data or end up with bad speculation.

In the virtual core scenario however this becomes trickier. VISC tackles this in two ways – firstly, the threadlet generation is designed to minimize cross-core communication because this adds latency and reduces performance. Second, each core can communicate through either the register file or the L1 data caches. The register files have a single cycle latency for data but can only transmit tens of values, whereas the L1 cache has a 4-cycle latency but can transmit thousands of values.

Typically communicating through a register file is seen as a risky maneuver and difficult to control, especially when you have multiple physical cores and each core needs each other core to be able to place/take data into the right registers. Soft Machines told us that a large part of their design work has been in this area of speculation and data transfer. Specifically on speculation and branch prediction, we postulated that they were over ten years behind Intel in this, and the response we got was in a similar vein, stating that using Intel’s branch prediction methods could offer at least 20-30% better performance with branching code. However, we were told that the VISC design is quicker to recover in the event of a failed branch, needing only a few cycles.

The Pipeline

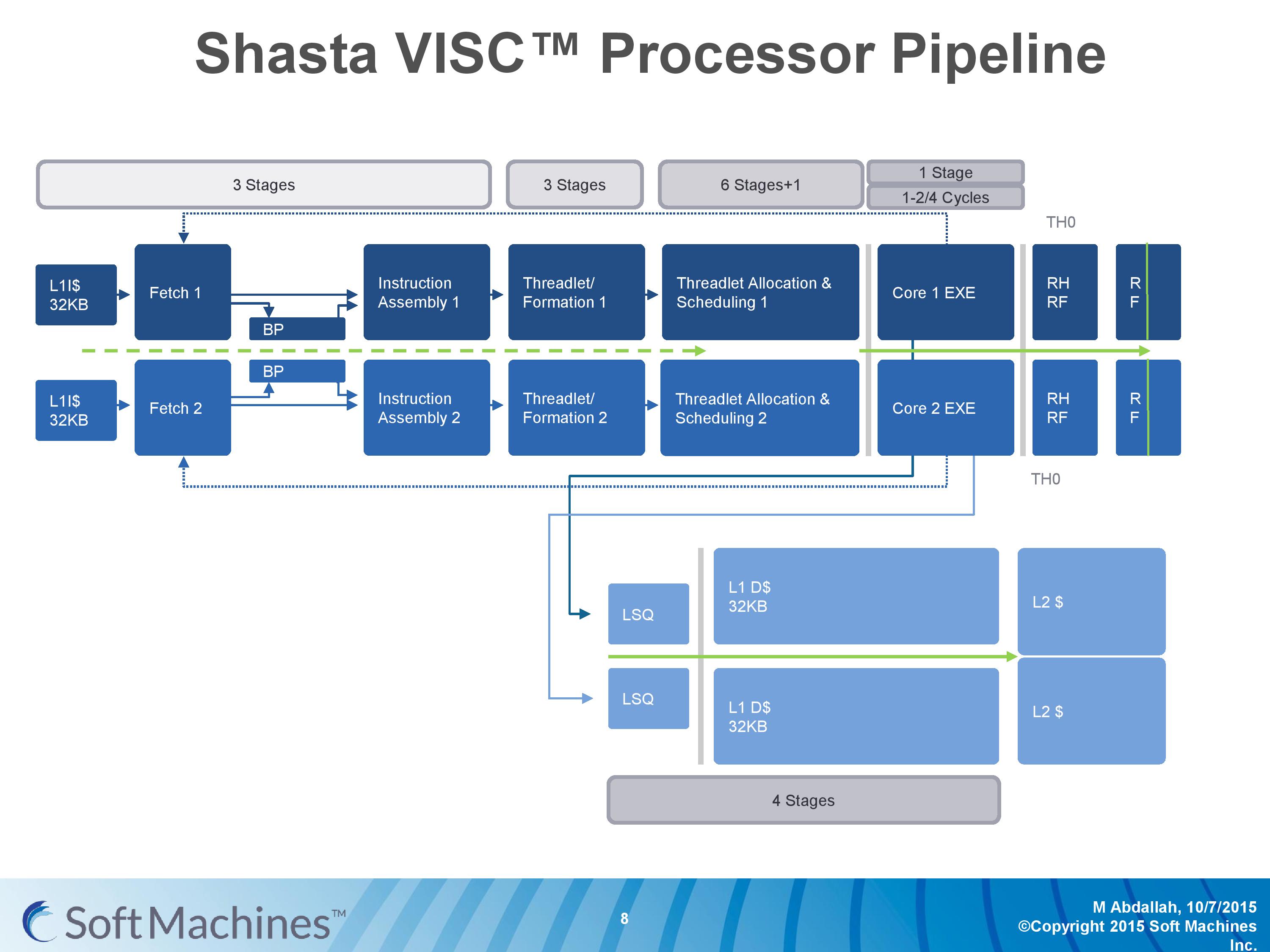

The first VISC core available for license is Shasta, a dual core part that enables up to two virtual cores or threads (2C/2VC), and we were given a base overview of the pipeline.

Normally we would see a pipeline of one core but this is a pipeline of both cores of Shasta. This pipeline, compared to the original VISC prototype, is also deeper. The pipeline looks relatively normal to others to start, where the thread either takes an instruction or issues a fetch for data into the instruction assembly. Making the VISC instructions and data into threadlets takes another three stages, but the allocation and scheduling takes six (plus one). On that subject, Soft Machines mentioned that keeping track of data across multiple cores per virtual core is tricky, as well as dealing with reorder buffers and parallel instruction management, that’s why there are a large amount of stages here. The plus one goes back to variable physical core allocation methodology, ensuring that if there are two threads active that the heavier one will get the most resources. The threadlets are then executed on the ports of each core, with a possible 1-4 cycle delay if data needs to be transferred across the core boundaries via registers or L1 cache.

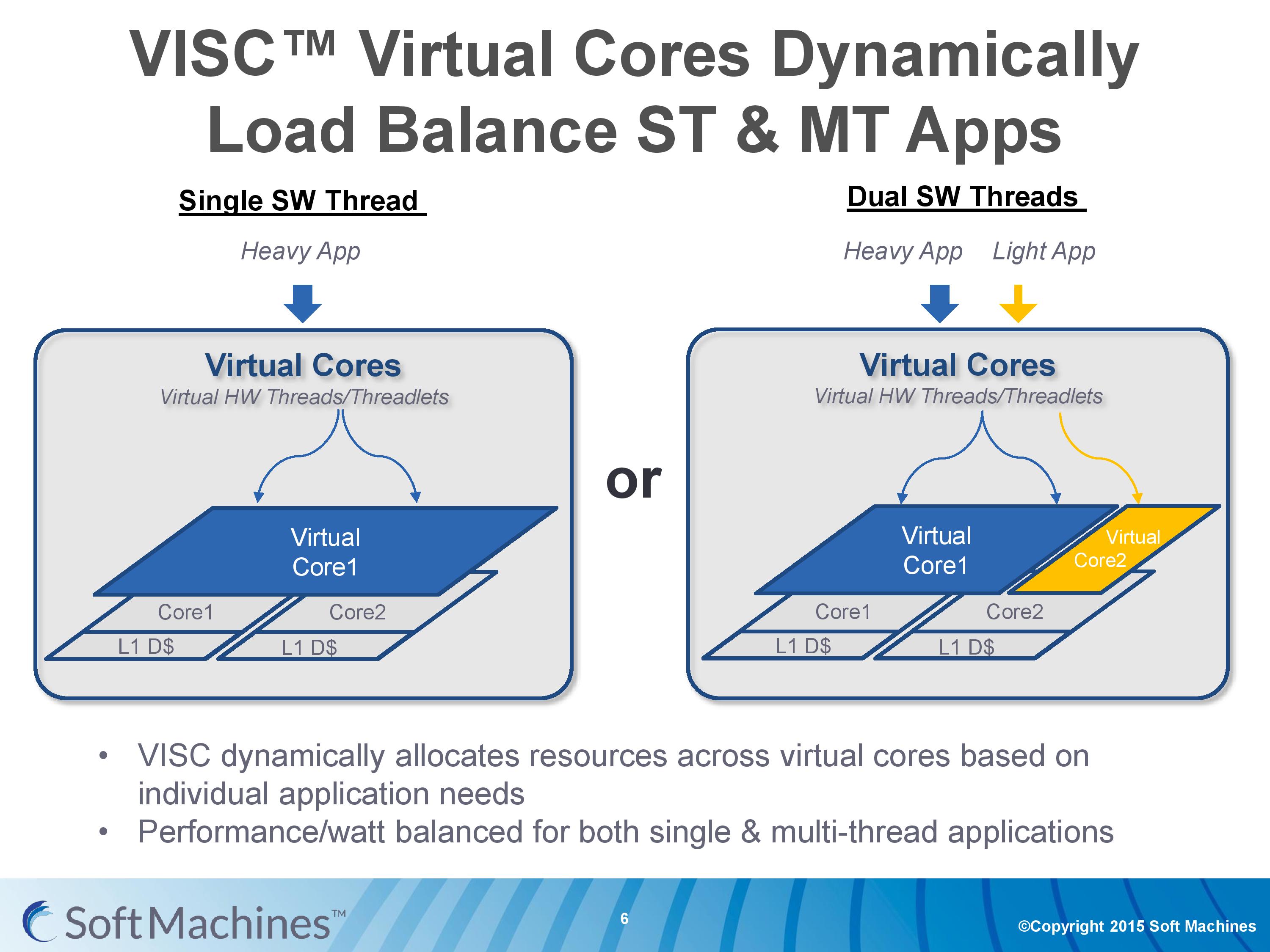

With the variable allocation of fractions of a core to a virtual core, VISC is designed for this situation:

If one heaver thread needs more resources, it can take them from idle ports on a second core (or third, or fourth). The virtual cores can be configured at the software stage as well to limit their use (e.g. keep a VC to half a physical core), and this can be configured at runtime at the expense of 10-12 cycles. There is a quality of service implementation as well, so if a virtual core takes a high priority thread, it will have access to more resources by default.

97 Comments

View All Comments

Bleakwise - Tuesday, March 14, 2017 - link

"Floating point code""Integer code"

Do you have any idea what you're talking about?

Bulldozer does "flaoting poitn code" faster than the fucking 1080Ti

At least one one thread. Unless you're going to go wide it doesn't help.

The point of this isn't to "go wide" it's to massively increase speculation ability.

The 1080Ti has ZERO speculative ability, NONE. GPUs simply don't do branching, that's not what GPUs do, they rely on ACE units and SMX units and so on to balance thousands of cores.

A CPU on the other hand has more speculative branches than cores.

SIMD and SIMT that GPUs do are not "FPU code"

FFS

dcbronco - Friday, February 12, 2016 - link

AMD helped finance this. They may already have a stake and I would bet some right to first refusal. They used their investment in HBM to get earlier access than NVIDIA, I doubt they would have invested without some sort of incentives for themselves.Bleakwise - Tuesday, March 14, 2017 - link

Of course not.bcronce - Saturday, February 13, 2016 - link

There is no such thing as a free lunch. They are trading something. Their benchmarks are for single thread performance, which the graphs showed a much greater efficiency and performance than Intel. Very impressive and I'm sure they'll be great for something.The problem is the platform sounds great for highly coupled cores and very wide single thread execution with few data dependencies. Could be great for computation.

What I'm wondering is how their platform scales for IO workloads like web servers, file servers, or event video games. Suddenly a large part of the work is communicating with other devices and synchronizing many cores.

One thing that has helped ARM for a long time is they were mostly single core and only recently multi-core. They didn't use to have a complex cache-coherency like x86. This dramatically reduced transistor counts, increase efficiency, and allowed for great decoupled core performance. But as soon as you wanted two cores to work together, it went to crap. Cache-coherency is hardware accelerated inter-core communication. Amdahl's law was not very forgiving to ARM's non-cache-coherency cores for anything except GPU like workloads.

Based on the description, VISC sounds like it needs highly couples cores to maintain low latency and high bandwidth. This is probably why they also seem to have lower frequency. Keeping many parts far away from each-other in sync takes time. But lower frequency also means lower voltage, and power consumption scales with the square of the voltage and linear with frequency.

I wonder how tightly coupled they can keep 4, 8, or 16 cores. Maybe they don't need the core counts for their target workloads or possibly they can stay competitive with a fraction the core counts by having better efficiency in power and IPC.

In the end, I'm sure they'll at least find a niche market and I'm glad some new ideas are making it out there. I wouldn't be surprised if they can take over the dual or quad core market, forcing Intel to add more cores.

Bleakwise - Tuesday, March 14, 2017 - link

It's not a "free lunch"Obviously all of this crap is going to cost DIE space, it's not free.

If all we cared about was raw processing power we'd just make 2046kb wide vector units and ignore branching and speculation all together.

Bulldozer has better theortical performance than Haswell i5s. I'd rather have the extra out of order pipes, the SMT unit to use any unused pipes, better branch prediction and so, and in the real world this stuff wins the day.

Not everyone can become a world class programmer and re-factor all their code so that it spreads across thousands of cores like it can on a GPU.

Sometimes it's not even possible. Sometimes what you need is branch prediction, branch prediction lets you see the future, LITERALLY this is what the CPU does. Obviously the more branches you predict, the more cycles you're wasting on that thread, because the more speculations you get wrong.

You also reduce the number of misses and increase cache hits.

As for coupling 4 or 16 cores, they haven't even talked about going beyond 4 cores. Obvoiusly it doesn't scale into infinity, if you're getting 90% speculative accuracy you can only gain 10% more. Spending 30% of your transitor budget to bind up 8 or 16 cores when spending 10% of your budget on 4, for a 10% performance gain would be dumb.

You'd be much better off going for more clock speed, or reducing latency, adding a victim cache, or l2 cache coherency, or beefing up the GPU, a better memory controller, or just beefing up your underlying branch predictor,

Bleakwise - Tuesday, March 14, 2017 - link

You'll never get perfect speculation anyway. Unless a language is developed that puts limits on the number of branches possible per X lines of code and keep the number of branches below the number the CPU can handle. You're going to ALWAYS have to deal with the risk of cache misses.Not sure there is even anything you could gain from 100% target prediction hit grantee beyond having no lost cycles on a miss. Getting there even through a core-binding fabric/bus like this across 16 cores would blow your transistor budget to the point that you could hardly afford a reasonable size cache in the first place.

You'd be better off just reducing the number of stages in the pipelines or just adding more pipelines to each core instead of blowing your budget on this fabric.

For example, binding together 100 in order CPUs to make a virtual 100 pipeline CPU would be ridiculously expensive and power hungry vs just having an 8 core superscaler CPU with 12 out of order pipelines in each CPU.

tipoo - Friday, February 12, 2016 - link

Question, since this is testing their core design in isolation and the rests of the package hasn't been built around it, is that accommodated for in the comparisons to other SoCs, which all have far more die area dedicated to non-core stuff than the cores?Flunk - Friday, February 12, 2016 - link

If VISC is not an Acronym then don't capitalize it, idiots.The technology looks like it could be really good, I'm hoping we see some practical applications.

smilingcrow - Friday, February 12, 2016 - link

They can capitalize it for any reason they like; it's just a word so nothing to GYKIATO (Get your kickers .... over).andychow - Friday, February 12, 2016 - link

It's an acronym, you can't trademark acronyms, so now they claim it's not an acronym. Legal bs 101.