Skylake Overclocking: Regular CPU BCLK Overclocking is Being Removed

by Ian Cutress on February 9, 2016 2:35 PM EST- Posted in

- CPUs

- Intel

- Overclocking

- Skylake

If you follow PC technology news, you will have seen our coverage of how Supermicro enabled overclocking for Skylake (Intel’s 6th Generation) processors on non-Z170 motherboards. The interest was twofold – not only did it allow overclocking (via base frequency rather than multiplier) by more than a few MHz on an H-series chipset, but it also enabled this type of overclocking on locked processors from $60 and up.

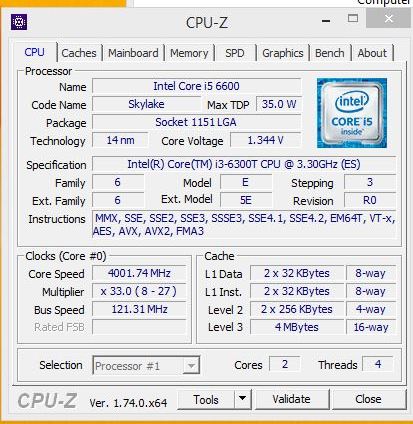

Core i3-6300T overclocked by 20%

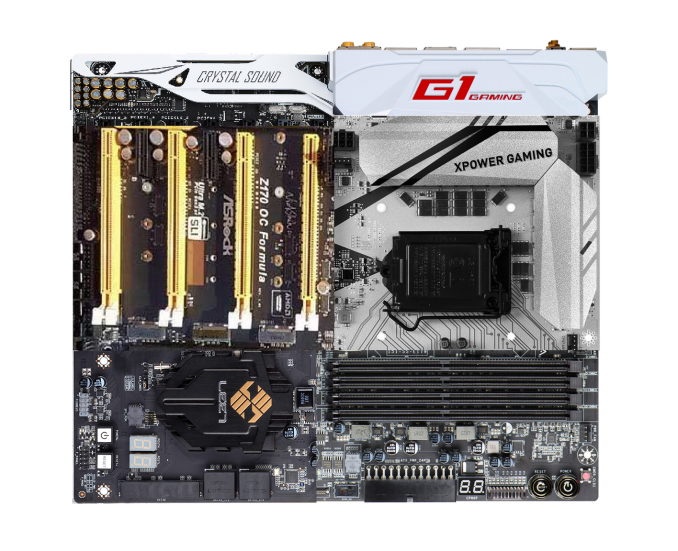

We reported at the time that ASRock was also introducing this feature, and since then they have promoted a new series of ‘Sky OC’ features to enable base frequency overclocking on locked processors (often called ‘non-K’ because these chips do not have the K in their name that denotes overclocking support). At CES we were shown new non-Z170 motherboards that also had the vital ingredients – the extra signal generator required for the processor to enable this, and a variant of custom firmware. Other motherboard manufacturers were also interested in pursuing this line, although they were a little more reserved.

Since that news we have sourced both the Supermicro motherboard that started the trend and a more mid-range Core i3 processor for a review. Testing is almost complete, but there is a new twist to this story.

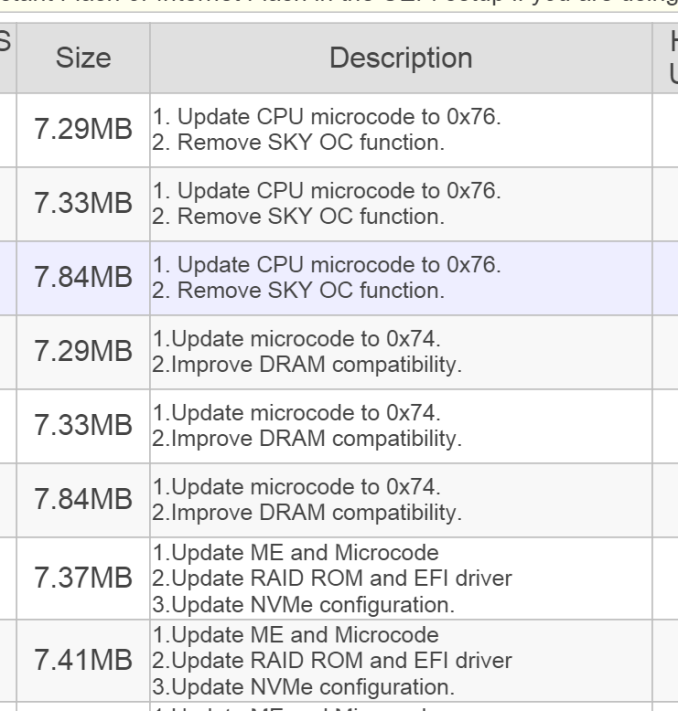

In the past week or so, it has emerged that this feature is being removed for non-overclocking focused CPUs. The most obvious indication that this feature is no longer part of the ecosystem comes from ASRock – their BIOS update listings show new firmware for all of the affected models, with the feature removed and the following phrase:

The marketing for this feature has all been removed as well, from ASRock’s websites and advertising.

When we (and other media) spoke to the other motherboard manufacturers, noting how reserved they were at the time this ‘feature’ came to prominence, we were told that it was still a work in progress for them. Some were uneasy about guaranteeing stability, or were not in a position to issue direct updates, as some of their products did not have the required hardware and it would have left a confusing product stack with some having the feature and others not. As a result we expected to see new motherboards with the feature over time, either as ‘revision/mark 2’ variants or held back for the Kaby Lake platform later this year and introduced there.

Since ASRock began removing the feature, there have been plenty of comments on forums as to the reason behind this. The removal of the feature also comes with a CPU microcode update, which is notable because it could mean that both updates are linked. Most are pointing the finger at Intel, wondering if they are flexing some muscle by requiring the manufacturer to change the firmware, or whether it's being done via microcode, while some are blaming the media for featuring it as a big wow factor and bringing it onto the radar more prominently. I want to address some of these points and take a wider look at Intel’s strategy here.

Firstly, no matter which way you slice it, Intel has been actively promoting overclocking as a big feature of their processors. It was a big part of the Skylake launch back in August last year.

To put some history in here, overclocking the processor via the base frequency was commonplace with Conroe, and then with Nehalem there were special SKUs that opened up the multiplier. With Sandy Bridge, the microarchitecture was designed very differently and more parts of the silicon were integrated into the same clock domain, which restricted any base frequency overclocking quite severely. Intel also restricted overclocking via the multiplier to a couple of parts with K in the name (typically high end i5 and i7 parts) such that overclocking could be focused on the high margin processors. This meant that users had to focus on getting more out of the better silicon, rather than pushing a mid-range part into a better performance chip. Some may argue this was to increase high end processor sales, while others saw it as Intel having a performance lead and being able to structure their product stack in such a way to maximize that lead.

In July 2014, with Devil’s Canyon, Intel adjusted course slightly. Partly due to an increase in heat generation with the integrated voltage regulator on Haswell and a decrease in thermal interface quality, Devil’s Canyon was released offering more thermal headroom and potentially better overclocking performance – we tested the i7-4790K and i5-4690K and came to this conclusion. Alongside the two Devil’s Canyon processors they also released an overclockable Pentium processor, the G3258, to mark the 20th anniversary of Pentium. This was a dual core part without hyperthreading which offered a 30-40% overclock, but as we found out in the review of the G3258, even with this OC the fact that it was dual core limited its usefulness in a world where software/gaming is designed to handle more than two threads. At the time, for most enthusiasts, it was clear that if Intel wanted to relaunch the mid-range market, then an unlocked Core i3 needed to be made. It has been clear that while Intel holds the competitive advantage, that was not on the cards – releasing an unlocked Core i3 would give users performance of an i5/i7 at a much lower price point, and would cannibalize sales of their high end parts. While they had no competition for raw CPU horsepower, it wasn’t going to happen, regardless of how heavily Intel was promoting overclocking and how good overclocked processors were for gaming.

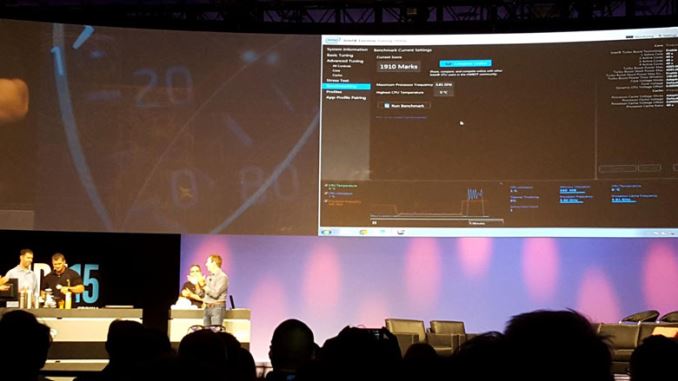

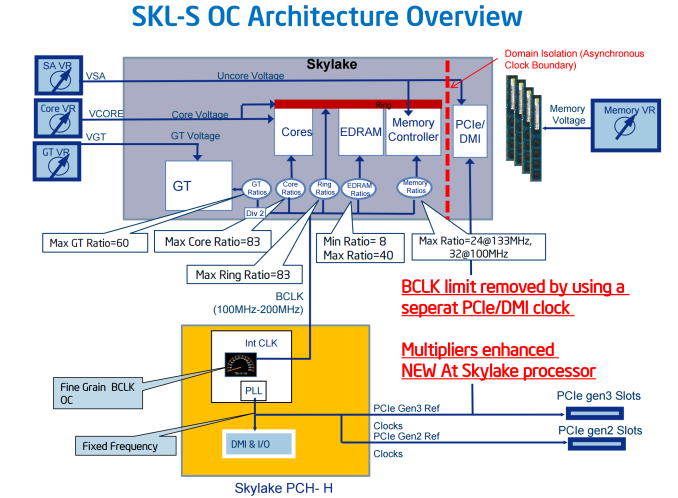

Fast forward to Skylake, and the first processors released were the two overclockable chips – the i7-6700K and i5-6600K, which we reviewed on day one. These were released at Gamescom in August 2015, a primarily gaming focused event, and were marketed as unlocked parts ideal for gaming. These processors were arguably as rare as hen’s teeth until September. It was at IDF, a couple of weeks later, that we were given the architectural details of the new CPUs, which allowed us to explain why we were seeing the performance numbers we did. The reason why they were released at Gamescom was simply for the gaming crowd, as gaming is one of the few growth markets in the PC industry, but it meant that the overclocking discussions happened later at IDF. Intel invited experienced overclockers on stage during the presentations to show off overclocking on the new parts – it was clear that overclocking was on the agenda. We found out at IDF that the new Skylake microarchitecture uses separate frequency domains for the IO and PCIe, allowing the base frequency of these new unlocked parts to be adjusted as well as the multiplier.

Splave and L0ud_sil3nc3 at IDF 2015 overclocking live with Intel's Kirk Skaugen (source)

Brian Krzanich, CEO of Intel, with Splave, L0ud_sil3nc3 and Fugger during IDF 2015 (source)

In September 2015, the other members of the Skylake family were released: the 65W parts, the lower power parts, the Core i3 and the Pentium processors. Despite what was being said about being committed to overclocking as a concept/feature, these parts were, as we expected, locked down in terms of multiplier, but surprisingly locked down in base frequency as well. We had a chance to test some 65W parts, but were only able to move the base frequency by 3% or so, and this was a hard wall rather than a gradual decline.

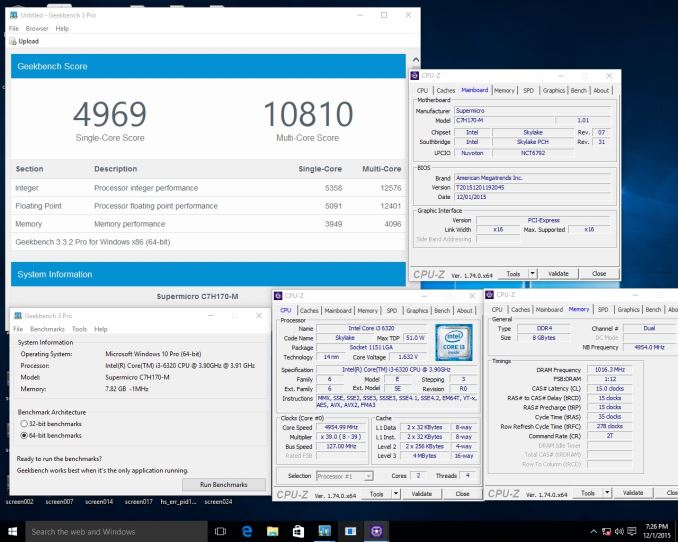

Core i3-6320 overclocked to 127 MHz on a Supermicro C7H170-M

So again, move forward to November 2015, when we wrote about Supermicro working around this 3% limitation using an external clock generator and modified firmware. It essentially opened the floodgates – not only could you overclock by adjusting the base frequency on a non Z-series chipset, but also on processors that were previously locked or only moved 3%. There was still the limitation of the DRAM increasing in frequency along with the base clock, but it was good enough for enthusiasts to start asking about the motherboard, and for other motherboard manufacturers to look at doing something similar. Very quickly we were speaking to all the major players about their plans, with ASRock leading the way in that regard. They were quick to roll out the new feature on motherboards that could support it, and were taking motherboards already at the design stage up a notch to support it. We tested the i3-6100TE and got to 140 MHz very easily without any voltage increases, giving a 40% overclock.
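The arithmetic behind these figures is straightforward: the core clock is the base clock multiplied by the multiplier, so on a locked multiplier the percentage overclock is simply the percentage increase in base clock. A minimal Python sketch, assuming Skylake's nominal 100 MHz base clock; the multiplier of 27 and the DDR4-2133 memory speed are illustrative assumptions rather than figures from our testing, and the function names are hypothetical:

```python
NOMINAL_BCLK_MHZ = 100.0  # Skylake's stock base clock


def overclock_percent(bclk_mhz: float) -> float:
    """Percentage gain over the nominal base clock."""
    return (bclk_mhz - NOMINAL_BCLK_MHZ) / NOMINAL_BCLK_MHZ * 100.0


def core_clock_mhz(bclk_mhz: float, multiplier: int) -> float:
    """Core frequency = base clock x (locked) multiplier."""
    return bclk_mhz * multiplier


def scaled_dram_rate(stock_rate: float, bclk_mhz: float) -> float:
    """DRAM speed rises in step with the base clock if the memory ratio is unchanged."""
    return stock_rate * bclk_mhz / NOMINAL_BCLK_MHZ


if __name__ == "__main__":
    # 103 MHz: the ~3% wall; 127 MHz and 140 MHz: the overclocks mentioned above.
    for bclk in (103.0, 127.0, 140.0):
        print(f"{bclk:.0f} MHz base clock: +{overclock_percent(bclk):.0f}%, "
              f"core {core_clock_mhz(bclk, 27):.0f} MHz (assumed x27), "
              f"DRAM {scaled_dram_rate(2133, bclk):.0f} MT/s (assumed DDR4-2133)")
```

The last helper shows why the DRAM limitation matters: unless a lower memory ratio is selected, the memory is pushed just as far past its rated speed as the CPU.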

So in this past week, ASRock has rolled back this feature on the latest BIOS updates. I am in contact with Supermicro as to their perspective on all this. Because of the scale of the rollback and how sudden it was, it is understandable that many users are pointing the finger at Intel, and wondering if there is some muscle being flexed to make this rollback occur. There is obvious finger pointing – if motherboard manufacturers had this feature, and an overclocked Core i3 performed as well as a Core i5-6600K in games, then users might spend $100 less on the processor. Not only end users, but system integrators as well would take advantage of this, offering cheaper pre-overclocked systems that gave higher performance. It would mean that users would upgrade today and keep their system longer, which might be contrary to any strategy for reinvigorating the PC market. Not only the CPU, but saving money on chipsets by buying H or B series would also affect the bottom line.

If you believe that Intel is worth pointing the finger at here, there are plenty of signs that show the two conflicting sides of interest – while promoting overclocking as a major part of the platform on one hand, not allowing overclocking on the low end SKUs with the other seems at odds with the overclocking strategy. There is something to be said about controlling the user experience, making sure the user gets what they paid for rather than a burning pile of rubble due to misconfiguration, or we could look to the fact that if base frequency overclocking is occurring now, then it would invariably end up with Kaby Lake as well. Depending on how Kaby Lake turns out, this might (or might not) be a good (or bad) thing for Intel.

Of course, there could be a few dangers given it was enabled mid-cycle. Allowing overclocking on an H-series or B-series chipset might not be a good thing, especially if the motherboard is only designed for 65W parts from a power delivery perspective. If the CPU is designed for 35W/65W and starts to draw 120W+ or 200W+, with a motherboard that was only expecting 65W, then it would not last very long. That would mean some motherboards would have to be engineered to do so, but as mentioned before, having a blanket upgrade regardless of the motherboard design would leave the company product stack with some parts that could and others that couldn’t, potentially confusing end-users.
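To put rough numbers on that concern, here is a back-of-the-envelope sketch. It assumes the common first-order approximation that dynamic power scales with frequency and with the square of voltage, ignores leakage, and treats the rated TDP as the stock power draw; the function names and the voltage increase are hypothetical illustrations, not measurements:

```python
def estimated_power_w(base_power_w: float, freq_ratio: float,
                      volt_ratio: float = 1.0) -> float:
    """First-order estimate: dynamic power ~ frequency * voltage^2.

    Assumption: leakage is ignored and base_power_w is the stock draw.
    """
    return base_power_w * freq_ratio * volt_ratio ** 2


def exceeds_board_budget(power_w: float, board_budget_w: float) -> bool:
    """True if the estimated draw is above what the board was designed for."""
    return power_w > board_budget_w


if __name__ == "__main__":
    budget_w = 65.0  # a board designed around 65W parts
    # Hypothetical cases: a 40% overclock at stock voltage and at +12.5% voltage.
    for volt_ratio in (1.0, 1.125):
        watts = estimated_power_w(65.0, 1.40, volt_ratio)
        print(f"voltage x{volt_ratio}: ~{watts:.0f} W, "
              f"over budget: {exceeds_board_budget(watts, budget_w)}")
```

Even under these simplified assumptions, a frequency-only overclock pushes a 65W part well past a 65W power delivery design, and any added voltage compounds the problem quadratically.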

Also of potential concern/confusion here are warranty matters for overzealous overclocks. As part of their overclocking strategy Intel does in fact offer overclocking warranties in some regions via their optional 'Performance Tuning Protection' plans, but again this is only for SKUs that are unlocked. With lower-end processors I think it's safe to say that Intel doesn't want to open the door again to replacing lower-margin processors that died under "mysterious circumstances" while trying to balance that with legitimate consumer warranty needs.

Arguably the best way to encourage these CPUs to be opened up is some strong competition. It's at this point that I should add that despite the opening up of the clock domains with Skylake, Intel has been clear in talking about their overclock strategy only in relation to the unlocked parts. This makes sense given their market position.

Back on the motherboard side, assuming that Supermicro will also have to roll back their feature (or limit it to that single motherboard only, which might be difficult to get hold of), then there are two options here for anyone who had invested in the base frequency ecosystem. Either stay on the older BIOS and not update as time goes on, or update and lose the feature. We’re not sure if ASRock will keep the BIOSes that allow base frequency on their website, or if they will be removed so new users cannot roll back the BIOS. I assume that some forums have taken a copy while they were all still available and hosted them elsewhere, such as the overclocking forum HWBot or at XtremeSystems.

Not to mention, there's the consideration for reviewers as well. For those that have an OC capable system for these locked parts, creating data at an overclocked speed and base speed means double the time to test, although there will be fewer users able to buy the hardware necessary to do so as time goes on. From a personal perspective, I still want to see those OC numbers on Core i3 or Pentiums, or even the Core i5-6400/6500 where a user could have saved $60 compared to the i5-6600K, or comparing that to what the competitors have to offer. How OC makes a difference allows us to predict performance. I assume our readers want to see as well?

Relevant Reading

Devil's Canyon Review: Core i7-4790K and Core i5-4690K - CPU Review

The Overclockable Pentium G3258 Review - CPU Review

Skylake-K Review: Core i7-6700K and Core i5-6600K - CPU Review

Comparison between the i7-6700K and i7-2600K in Bench - CPU Comparison

Overclocking Performance Mini-Test to 4.8 GHz - Overclocking

Skylake Architecture Analysis - Architecture

Z170 Chipset Analysis and 55+ Motherboards at Launch - Motherboard Overview

Discrete Graphics: An Update for Z170 Motherboards - PCIe Firmware Update

73 Comments

wolfemane - Wednesday, February 10, 2016 - link

I could understand your desire to see a 4ghz Haswell chip on the charts if there was a reason other than 4ghz. There is little to no performance gains that would show relevance in the i7 class on a comparison chart. As for clock speeds, there are 3.2ghz and 3.4ghz chips that outperform the 4770k. And let me remind you there is a 5ghz chip that doesn't fare well against the 4770k.

TSX was part of the Haswell architecture and Intel disabled TSX in all its chips, not just the 4790k. Regardless it would have provided no additional performance boosts in Anandtech's day to day benchmark suite to begin with. Now whether Intel has re-enabled TSX I have no idea. Nor would VTd to throw that in as well.

Again. I see no reason why Anandtech should replace an already high end i7 with a slightly better i7 on the same socket. Just doesn't make any sense. So I ask again... WHY replace one cpu in with another chip that will offer little gains in either performance or comparison results?

xrror - Wednesday, February 10, 2016 - link

That's the thing, I have no other reason than it being an "official" 4Ghz Haswell datapoint. But I'm making an assumption that anyone buying a 4770K (or any K sku) would at the very least run it at 4Ghz.

TomWomack - Wednesday, February 10, 2016 - link

I have a 4790K which I don't overclock - I bought the K to get the extra 400MHz stock speed on a four-core machine with hyperthreading.

xrror - Wednesday, February 10, 2016 - link

Thanks for the reply. That makes sense since the stock speed difference between the 4790K and the 4790 is really large compared to earlier generations. Normally it was only 100mhz from the 2500/2600 days even up to the 4770 vs 4770K.

Heh, that kinda makes 4790K ironically the first K sku that actually made sense to not overclock. ouch my head ;P

willis936 - Wednesday, February 10, 2016 - link

"here are 3.2ghz and 3.4ghz chips that outperform the 4770k"

Uhh no there isn't. Broadwell and Skylake IPC increase are not greater than 20% over Haswell. Frequency is still king in single threaded performance (what really matters for client applications and many server ones). Yeah if you compare a 14 core xeon to a consumer part in threaded workloads the consumer part is going to look like a joke.

wolfemane - Wednesday, February 10, 2016 - link

Ok, agreed. I'll give you that. But we are talking about a chip to replace a benchmark CPU. General benchmark suites. And yes there are 3.2 and 3.4ghz chips that will operate better than the 4770k. Here are three examples: on benchmarks where they didn't surpass it they were right on the heels of the 4770k. In real world application the margins would be minimal.

They aren't practical, but it goes to my point that clock speeds aren't the sole importance of a chip.

http://www.anandtech.com/bench/product/836?vs=1320

http://www.anandtech.com/bench/product/836?vs=1316

http://www.anandtech.com/bench/product/836?vs=1317

jasonelmore - Wednesday, February 10, 2016 - link

I agree with xrror, we need the 4790k in the charts, simply to make it easy to see architecture and IPC gains, without having a clock speed variable. Pitting a 4770K against a skylake 6700K makes the skylake look better than it really is.

Ian Cutress - Wednesday, February 10, 2016 - link

"It's always infuriated me that AnandTech never uses the 4970K in it's main articles."

It was noted at the time when we were doing generational analysis of Skylake that users wanted more 4790K results to be shown. When I lock down my test suite for 2016 and retest the lot of processors I have (which will take time), I'll be doing stock and OC results in future for the big launches.

Though I'm surprised you imply that we've left it out of many articles. I can only really think of one where it ultimately mattered, the Skylake 6700K review, or perhaps a second with the Skylake OC but that was just a scaling piece. What others were there?

xrror - Wednesday, February 10, 2016 - link

"Though I'm surprised you imply that we've left it out of many articles. I can only really think of one where it ultimately mattered"

I mean this in the best possible way, you've earned your Ph.D with that non-committal sentence =) (Thesis committees are evil). But to be specific, my request is replacing the 4770(K) with the 4790K.

Bear with me here, I'll try and put my reasoning on this, this is some convoluted Venn diagram I'm trying to form here...

My argument is that a person running a 4 core, 8 virtual thread Haswell (thus. i7) who is reading an Anandtech article, and is interested in processor performance, AND who actually has the ability to upgrade their machine (ie: not corporate customer, NOT a K-sku), is likely to already be running their 47x0K processor over 4Ghz.

I keep harping about 4790K not because it's somehow better than the 4770. It's because it's the only way to have an official, Intel released SKU of Haswell at 4.0Ghz base speed. I am not suggesting making overclocked speeds an official part of the article benches even if personally I'd love that. Mostly since it could imply that Anandtech is officially endorsing overclocking (for other readers - they don't want to open that can of worms) and it also makes more work for an older platform in your reviews. Plus then you open the door for people to complain that you didn't overclock every platform and/or you didn't overclock one enough etc.

wolfemane has a point, in that the difference between a normal 4770 at 3.4, and a 4790K at 4.0 isn't really "that much" but... Is there a way to query readers that have a 4xx0K processor what they run them at?

I guess my argument rests on the assumption that the majority of 4770K and 4790K users are running those processors at least over 4Ghz. And that is an assumption. I'm totally open for more input on this.

My full assumption is that the vast majority of people who knowingly bought a 4770K would not seriously run it at the stock 3.5Ghz by choice, thus making it an unrealistic datapoint. Anyone who bought a 4770 non-K (3.4) hopefully would have realized what they were giving up by buying it instead of the K, and (another assumption) aren't interested in overclocking - and likely would just upgrade the entire platform if they need more performance. The computer is an appliance, they aren't looking at Anandtech articles to see if they need to upgrade their processor, they are just looking for a big enough leap for the entire platform to replace it all.

I'm not thinking the person with the 4770 in their Dell Optiplex 7010 really cares about these articles. Again though... I'm now building assumptions on top of more assumptions.

So people using a 4770, or somehow have a 4770K and are not overclocking it... the data you collect on those is wasted on those people? And everyone else who actually cares about processor performance specifically, has to go through the effort to extrapolate the data every time.

I'm saying that the specific audience who would be reading the chart data, trying to extract if they should upgrade or move away from a socket 1150 system, are the ones who are always most inconvenienced by the stock 4770(K) numbers. Because the stock numbers are only for the person with the Dell, and those people don't care anyway.

Well that was all hugely rambley. If I'm totally off the rocker that's fine. Also before people assume I have some vested interest as a socket1150 owner I'm not.

Everyone please feedback here though. If there are tons of you that do use a 4770 or 4770K at stock, but DO read Anandtech articles for upgrade info, please chime in! I'm old school, and I fully admit I'm likely not in touch with the "modern builder" ... what scenarios are your machines in? Where would you buy a 4770 over a 4770K in a home build? And I mean that honestly! The only scenario I can think of is if you had the lowest cost B85 board but needed 8 threads - but then you're trying to replicate the Dell?

Lastly, Ian thanks for your time responding. And sorry for making a big buggeroo about a now dead socket. In the end I doubt it matters what you use for 1150 results, since... I doubt the majority of readers really cares about the performance of old sockets =(

cobalt42 - Wednesday, February 10, 2016 - link

Totally agree with this reasoning. It's a little weird because even though there's some movement in peak stock speeds, there's almost none in peak OC, so even though I largely agree that a 4GHz stock 4790k (or 4GHz OC 4770k) is probably the right data point in the reviews, I also see value in a 4GHz OC 2500k/2600k, because that's what many enthusiasts (like me) are still running.