OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST. Posted in Storage

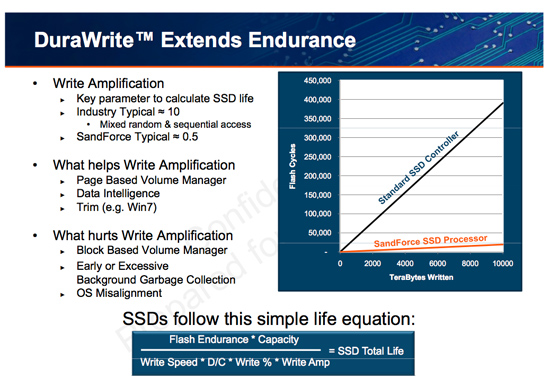

The Secret Sauce: 0.5x Write Amplification

The downfall of all NAND flash based SSDs is the dreaded read-modify-write scenario. I’ve explained this a few times before. Basically your controller goes to write some amount of data, but because of a lot of reorganization that needs to be done it ends up writing a lot more data. The ratio of how much you write to how much you wanted to write is write amplification. Ideally this should be 1. You want to write 1GB and you actually write 1GB. In practice this can be as high as 10 or 20x on a really bad SSD. Intel claims that the X25-M’s dynamic nature keeps write amplification down to a manageable 1.1x. SandForce says its controllers write a little less than half what Intel does.
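To make the read-modify-write penalty concrete, here is a toy sketch in Python. The page size, the block size and the `read_modify_write` helper are all hypothetical values chosen for illustration; they do not describe any particular drive or controller.

```python
PAGE_SIZE_KB = 4        # hypothetical page size
PAGES_PER_BLOCK = 128   # hypothetical erase-block size, in pages


def read_modify_write(block, updates):
    """Apply page updates to a full block the naive way.

    block:   list of page contents (one entry per page)
    updates: dict mapping page index -> new contents
    Returns (new_block, pages_written_to_flash).
    """
    staged = list(block)                 # 1. read the whole block into a buffer
    for index, data in updates.items():
        staged[index] = data             # 2. modify only the pages the host changed
    # 3. erase the block, then 4. program every page back, changed or not
    return staged, len(staged)


block = [f"old-{i}" for i in range(PAGES_PER_BLOCK)]
updates = {i: f"new-{i}" for i in range(16)}   # the host rewrites 16 pages (64KB)

new_block, flash_pages = read_modify_write(block, updates)
host_pages = len(updates)
amplification = flash_pages / host_pages

print(f"host wrote {host_pages * PAGE_SIZE_KB}KB, "
      f"flash wrote {flash_pages * PAGE_SIZE_KB}KB, "
      f"write amplification = {amplification:.1f}x")
# host wrote 64KB, flash wrote 512KB, write amplification = 8.0x
```

In this toy model the penalty grows as the host's update shrinks relative to the block, which is why small random writes are the hard case.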

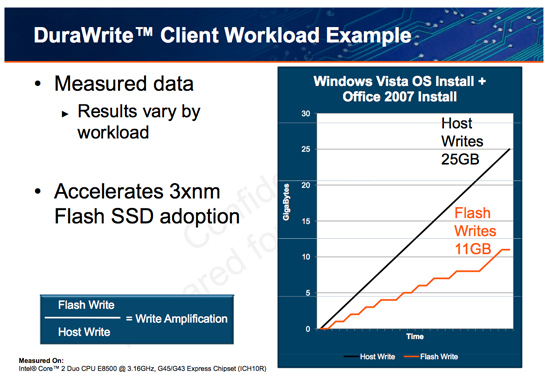

SandForce states that a full install of Windows 7 + Office 2007 results in 25GB of writes from the host, yet only 11GB of writes are passed on to the flash. In other words, 25GB of files are written and available on the SSD, but only 11GB of flash is actually occupied. Clearly it’s not bit-for-bit data storage.
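Plugging the quoted figures into the definition of write amplification gives a quick sanity check. The function name below is just a label for this sketch; the 25GB, 11GB and 1.1x values are the vendor claims cited above, not measurements.

```python
def write_amplification(flash_gb, host_gb):
    """Ratio of data physically written to flash to data the host asked to write."""
    return flash_gb / host_gb


# SandForce's Windows 7 + Office 2007 install example
sandforce = write_amplification(flash_gb=11, host_gb=25)

# Intel's claimed figure for the X25-M
intel_claimed = 1.1

print(f"SandForce example: {sandforce:.2f}x")                     # 0.44x
print(f"Relative to Intel: {sandforce / intel_claimed:.2f}")      # 0.40
```

The install example works out to roughly 0.44x, in line with the "about 0.5x" headline figure, and to about 40% of the flash writes implied by Intel's 1.1x claim.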

What SF appears to be doing is some form of real-time compression on data sent to the drive. SandForce told me that it’s not strictly compression but a combination of several techniques that are chosen on the fly depending on the workload.

SandForce referenced data deduplication as a type of data reduction algorithm that could be used. The principle behind data deduplication is simple. Instead of storing every single bit of data that comes through, store only the bits that are unique, and replace any additional duplicates with references to the stored copy. Now presumably your hard drive isn’t full of copies of the same file, so deduplication isn’t exactly what SandForce is doing - but it gives us a hint.
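The deduplication idea can be sketched in a few lines of Python. This is only an illustration of the general principle, not SandForce's implementation: the `DedupStore` class, the 4KB chunk size and the use of SHA-256 to identify chunks are all assumptions made for the example.

```python
import hashlib


class DedupStore:
    """Toy block store that keeps one copy of each unique chunk."""

    def __init__(self):
        self.chunks = {}    # digest -> chunk bytes, stored once
        self.lba_map = {}   # logical block address -> digest (the "reference")

    def write(self, lba, chunk):
        digest = hashlib.sha256(chunk).hexdigest()
        # Only store the chunk if we have not seen identical contents before
        self.chunks.setdefault(digest, chunk)
        self.lba_map[lba] = digest

    def read(self, lba):
        return self.chunks[self.lba_map[lba]]

    def stored_bytes(self):
        return sum(len(chunk) for chunk in self.chunks.values())


store = DedupStore()
blocks = [b"A" * 4096, b"B" * 4096, b"A" * 4096, b"A" * 4096]
for lba, chunk in enumerate(blocks):
    store.write(lba, chunk)

host_bytes = sum(len(chunk) for chunk in blocks)
print(host_bytes, store.stored_bytes())   # 16384 8192
```

Four logical blocks are readable, but only two unique chunks are actually kept. A real design would also need to free chunks that no address references any more, which this sketch leaves out.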

Straight up data compression is another possibility. The idea behind lossless compression is to use fewer bits to represent a larger set of bits. There’s additional processing required to recover the original data, but with a fast enough processor (or dedicated logic) that part can be negligible.
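As a minimal illustration of lossless compression, the sketch below uses Python's standard `zlib` module as a stand-in. Whatever SandForce does in hardware is not public, so the choice of algorithm and the sample data are assumptions for the example only.

```python
import zlib

# Repetitive sample data, standing in for easily compressible files
original = b"The quick brown fox jumps over the lazy dog. " * 200

compressed = zlib.compress(original)     # fewer bits representing the same data
restored = zlib.decompress(compressed)   # extra processing to recover the original

assert restored == original              # lossless: every byte comes back
print(f"{len(original)} bytes in, {len(compressed)} bytes stored")
```

The decompression step is the "additional processing" mentioned above; the round-trip check is what makes the scheme safe for storage.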

Assuming this is how SandForce works, it means that there’s a ton of complexity in the controller and firmware. Much more than what even a good SSD controller needs to deal with. Not only does SandForce have to manage bad blocks, block cleaning/recycling, LBA mapping and wear leveling, but it also needs to manage this tricky write optimization algorithm. It’s not a trivial matter: SandForce must ensure that the data remains intact while tossing away nearly half of it. After all, the primary goal of storage is to store data.

The whole write-less philosophy has tremendous implications for SSD performance. The less you write, the less you have to worry about garbage collection/cleaning and the less you have to worry about write amplification. This is how the SF controllers get by without having any external DRAM; there’s just no need. There are fairly large buffers on chip though, most likely on the order of a couple of MB (more on this later).

Manufacturers are rarely honest enough to tell you the downsides to their technologies. Representing a collection of bits with fewer bits works well if you have highly compressible data or a ton of duplicates. Data that is already well compressed, however, shouldn’t work so nicely with the DuraWrite engine. That means compressed images, videos or file archives will most likely exhibit higher write amplification than SandForce’s claimed 0.5x. Presumably that’s not the majority of writes your SSD will see on a day to day basis, but it’s going to be some portion of it.
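The difference between compressible and already-compressed data is easy to demonstrate with a general-purpose compressor. In the sketch below, `zlib` again stands in for whatever DuraWrite actually does, random bytes stand in for already-compressed files, and `stored_ratio` is a hypothetical helper, so treat the result as a qualitative illustration only.

```python
import os
import zlib


def stored_ratio(data):
    """Bytes stored after compression divided by bytes the host wrote."""
    return len(zlib.compress(data)) / len(data)


# Highly repetitive data, e.g. logs or sparse files
repetitive = b"config_value=0\n" * 10000

# Random bytes behave like compressed images, video or archives:
# there is no redundancy left for the compressor to remove
random_like = os.urandom(len(repetitive))

print(f"repetitive data:  {stored_ratio(repetitive):.3f}")
print(f"random-like data: {stored_ratio(random_like):.3f}")
```

The repetitive buffer shrinks to a small fraction of its size, while the random buffer stays at roughly 1.0, so a drive relying on this kind of data reduction would see no benefit on such writes.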

100 Comments


Shark321 - Monday, January 25, 2010 - link

Kingston has released a new SSD series (V+) with the Samsung controller. I hope Anandtech will review it soon. Other sites are not reliable, as they test only sequential read/writes.

Bobchang - Wednesday, January 20, 2010 - link

Great article! It's awesome to have an SSD with new features, and I like the performance.

But regarding your test, I don't get the same random read performance from IOMeter.

Can you let me know what version of IOMeter and configuration you used for the result? I never get more than around 6000 IOPS.

AnnonymousCoward - Wednesday, January 13, 2010 - link

Anand,

Your SSD benchmarking strategy has a big problem: there are zero real-world-applicable comparison data. IOPS and PCMark are stupid. For video cards do you look at IOPS or FLOPS, or do you look at what matters in the real world: framerate?

As I said in my post here (http://tinyurl.com/yljqxjg), you need to simply measure time. I think this list is an excellent starting point, for what to measure to compare hard drives:

1. Boot time
2. Time to launch applications
   a) Firefox
   b) Google Earth
   c) Photoshop
3. Time to open huge files
   a) .doc
   b) .xls
   c) .pdf
   d) .psd
4. Game framerates
   a) minimum
   b) average
5. Time to copy files to & from the drive
   a) 3000 200kB files
   b) 200 4MB files
   c) 1 2GB file
6. Other application-specific tasks

What your current strategy lacks is the element of "significance"; is the performance difference between drives significant or insignificant? Does the SandForce cost twice as much as the others and launch applications just 0.2s faster? Let's say I currently don't own an SSD: I would sure like to know that an HDD takes 15s at some task, whereas the Vertex takes 7.1s, the Intel takes 7.0s, and the SF takes 6.9! Then my purchase decision would be entirely based on price! The current benchmarks leave me in the dark regarding this.

rifleman2 - Thursday, January 14, 2010 - link

I think the point made is a good one for an additional data point for the buying decision process. Keep all the great benchmarking data in the article and just add a couple of time measurements so people can get a feel for how the benchmark numbers translate to time waiting in the real world, which is what everyone really wants to know at the end of the day.

Also, Anand, did you fill the drive to its full capacity with already compressed data, and if not, then what happens to performance and reliability when the drive is filled up with already compressed data? From your report it doesn't appear to have enough spare flash capacity to handle a worst-case 1:1 ratio and still get decent performance or an endurance lifetime that is acceptable.

AnnonymousCoward - Friday, January 15, 2010 - link

Real world top-level data should be the primary focus and not just "an additional data point".

This old article could not be a better example:

http://tinyurl.com/yamfwmg

In IOPS, RAID0 was 20-38% faster! Then the loading *time* comparison had RAID0 giving equal and slightly worse performance! Anand concluded, "Bottom line: RAID-0 arrays will win you just about any benchmark, but they'll deliver virtually nothing more than that for real world desktop performance."

AnnonymousCoward - Friday, January 15, 2010 - link

Icing on the cake is this latest Vertex 2 drive, where IOPS don't equal bandwidth.

It doesn't make sense to not measure time. Otherwise what you get is inaccurate results to real usage, and no grasp of how significant differences are.

jabberwolf - Friday, August 27, 2010 - link

The better way to test, rather than hopping on your mac and thinking that's the end-all be-all of the world, is to throw this drive into a server, vmware or xenserver... and create multiple VD sessions.

1- see how many you can boot up at the same time and run heavy loads.

The boot ups will take the most IOPS.

Sorry but IOPS do matter so very much in the business world.

For stand-alone drives, your reads and writes will be what you are looking for.

Wwhat - Wednesday, January 6, 2010 - link

This is all great, finally a company that realizes the current SSD's are too cheap and have too much capacity and that people have too much money.

Oh wait..

Wwhat - Wednesday, January 6, 2010 - link

Double post was caused by anadtech saying something had gone wrong, prompting me to retry.