Further Anti-Aliasing Investigations

When it comes to anti-aliasing in AC, the heart of the matter is the difference between the two graphics architectures. ATI's current GPUs do not have dedicated anti-aliasing hardware. Instead, they rely on the pixel shaders to achieve the same result. Depending on the type of graphics work being done, this approach can have a negative impact on performance. ATI seems to have gotten around this via some of the extensions in DirectX 10.1. NVIDIA graphics chips do include anti-aliasing hardware, but that hardware cannot be properly utilized in some situations. Specifically, there are post-rendering effects that interfere with the use of anti-aliasing hardware -- that's why some games don't support anti-aliasing at all.

If you haven't figured it out already, there is a universal "solution" for applying anti-aliasing that doesn't depend on the use (or non-use) of other shader effects: use pixel shaders. ATI has apparently decided that's the most practical solution as we move forward and SM3.0/SM4.0 usage increases, but it does require work on the part of software developers. Another downside to this approach is that it requires more work from the pixel shader hardware, which can result in lower performance. However, it seems the only other option is to omit anti-aliasing in certain types of graphics rendering.
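To make the idea of shader-based anti-aliasing a little more concrete, here is a minimal sketch of the arithmetic behind a simple "resolve" step, in which the sub-samples stored for each pixel are averaged into one final color. This is an illustration only: it assumes a plain box filter, the function name `resolve_box` and the sample data are hypothetical, and it is not a description of what ATI's drivers or AC actually do.

```python
# Hypothetical illustration of a box-filter resolve, written as plain Python
# rather than shader code. A real resolve would run per pixel on the GPU.

def resolve_box(samples_per_pixel):
    """Average the sub-samples of each pixel into one final color.

    samples_per_pixel: list of pixels, each a list of (r, g, b) sub-samples.
    Returns a list with one (r, g, b) tuple per pixel.
    """
    resolved = []
    for samples in samples_per_pixel:
        n = len(samples)
        # Average each color channel across all sub-samples of this pixel.
        resolved.append(tuple(sum(s[c] for s in samples) / n for c in range(3)))
    return resolved


# Assumed 4xAA example: an edge pixel where two sub-samples hit a white wall
# and two hit black background, plus an interior pixel fully on the wall.
edge_pixel = [(1.0, 1.0, 1.0), (1.0, 1.0, 1.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
interior_pixel = [(1.0, 1.0, 1.0)] * 4

print(resolve_box([edge_pixel, interior_pixel]))
```

The edge pixel ends up as a blend of the two surfaces, which is what softens the jagged edge. The point of the sketch is that every pixel costs extra arithmetic, which is why doing this work in the pixel shaders can lower performance.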

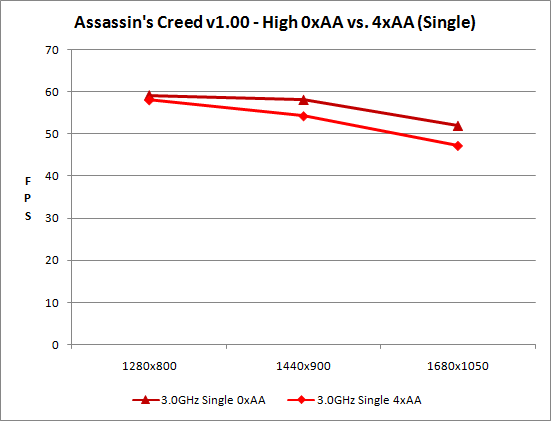

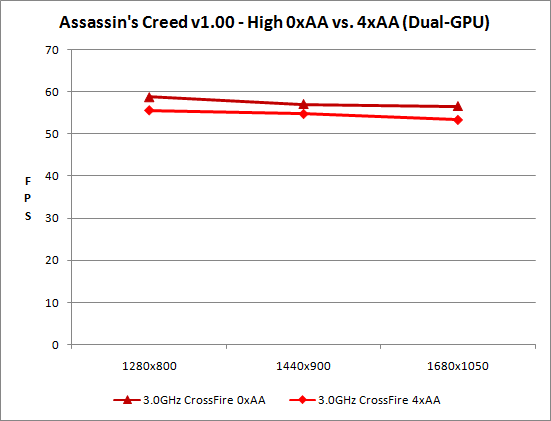

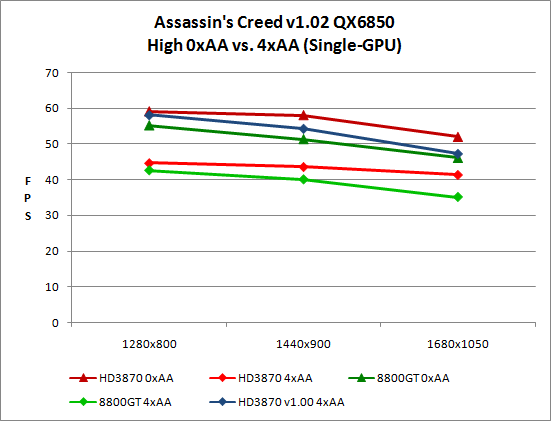

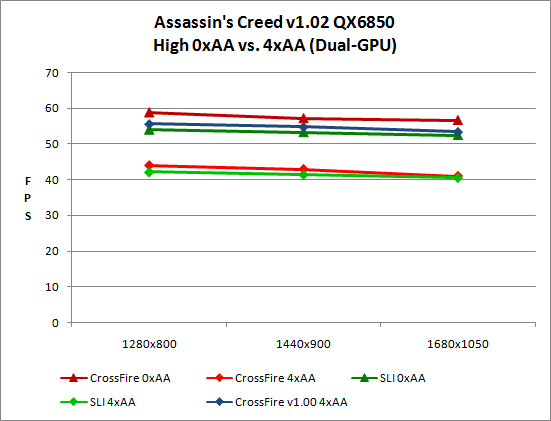

With all that out of the way, let's look at the performance of AC with and without anti-aliasing at several different resolutions. Unfortunately, as previously mentioned, we are unable to test anti-aliasing at higher resolutions because the option is disabled inside the game.

The cost of using pixel shaders to do anti-aliasing shows up as a performance drop. What's noteworthy is that the drop isn't nearly as bad on ATI hardware running AC version 1.00. In other words, the 1.02 patch levels the playing field and forces ATI and NVIDIA to both use an extra rendering pass in order to do anti-aliasing. That probably sounds fair if you're NVIDIA -- or you own NVIDIA hardware -- but ATI users should be rightly upset.

Something else that's interesting to see is how performance is definitely CPU limited even with a quad-core 3.0GHz Intel chip. We will look at that next, but right now we are more interested in the steady drop caused by anti-aliasing. If the CPU is the bottleneck, putting more of a load on the GPU should not impact performance much. For whatever reason, it seems that the extra rendering pass required for anti-aliasing causes a steady drop in performance even when we're CPU limited. Have we mentioned yet that you really need some beefy hardware in order to play AC at higher detail settings?
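The reasoning about bottlenecks can be sketched with a toy model. In the idealized case, CPU and GPU work overlap completely and the slower of the two sets the frame time, so extra GPU load is "free" while the CPU is the limit. The second function adds a purely hypothetical `overlap` factor to show one way a steady drop could still appear; all of the millisecond figures are made-up inputs, not measurements from our testing.

```python
# Toy model only: the timings below are assumed values, not benchmark data.

def frame_rate(cpu_ms, gpu_ms):
    """Idealized case: CPU and GPU overlap fully, the slower one sets the pace."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def frame_rate_partial(cpu_ms, gpu_ms, overlap):
    """Hypothetical variant: only a fraction `overlap` (0.0 to 1.0) of the
    shorter task hides behind the longer one; the rest adds to frame time."""
    longer, shorter = max(cpu_ms, gpu_ms), min(cpu_ms, gpu_ms)
    return 1000.0 / (longer + (1.0 - overlap) * shorter)


cpu_ms = 20.0      # assumed CPU time per frame (the bottleneck)
gpu_no_aa = 12.0   # assumed GPU time per frame without anti-aliasing
gpu_4xaa = 16.0    # assumed GPU time per frame with the extra AA pass

# Fully overlapped: both cases are limited by the 20 ms of CPU work.
print(frame_rate(cpu_ms, gpu_no_aa), frame_rate(cpu_ms, gpu_4xaa))

# Partially overlapped: the extra GPU pass now lowers the frame rate.
print(frame_rate_partial(cpu_ms, gpu_no_aa, 0.75),
      frame_rate_partial(cpu_ms, gpu_4xaa, 0.75))
```

Under the idealized model the two frame rates are identical, which is the behavior we would expect when CPU limited. The partial-overlap variant is only one possible explanation for a steady drop; we have no evidence that it describes what the game engine is actually doing.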

32 Comments

Zak - Tuesday, June 3, 2008 - link

I'm usually against AnandTech straying away from their core hardware reviews they've become famous for in the first place, but this is the best, most thorough, in-depth game review I have ever read! Very well done, most enjoyable reading. Thanks :)

Zak

mustardman - Tuesday, June 3, 2008 - link

I'm curious why AnandTech recommended Vista without comparing the performance of Windows XP. They didn't even have a test box running XP, or did I miss it?

From my experience and experience from friends, Vista is still behind XP in gaming performance. In some cases, far behind.

Am I missing something?

JarredWalton - Tuesday, June 3, 2008 - link

With modern DX10 GPUs, Vista is required to even get DX10 support. Having looked at DX9 Assassin's Creed, I can't say the difference is all that striking, but the DX10 mode did seem to run faster. (I could test if there's desire, but it will have to wait as I'm traveling this week and don't have access to the test systems used in this article.)

Personally, while Vista had some issues out of the gate, drivers and performance are now much better. XP may still be faster in some situations, but if you're running a DX10 GPU I can't see any reason to stick with XP. In fact, there are plenty of aspects of Vista that I actually prefer in general use.

Since this was primarily a game review, and I already spent 3x as much time benchmarking as I actually did beating the game, I just wanted to get it wrapped up. Adding in DX9 Vista vs. DX9 XP would have required another 20-30 hours of benchmarking, and I didn't think the "payoff" was worthwhile.

Justin Case - Monday, June 2, 2008 - link

[quote]Unlike Oblivion, however, all of the activity you see is merely a façade. The reality is that all the people are in scripted loops, endlessly repeating their activities.[/quote]

...which is exactly what Oblivion NPCs do (compounded by the fact that they all have the same handful of voices, that all voices use exactly the same sentences, and that some characters change voice completely depending on which scripted line they're repeating).

If anything, Oblivion's world feels even more artificial than Morrowind. One thing is the AI we were promised for Oblivion while the game was in development, another is what actually shipped. Most of the behaviors shown in the "preview videos" simply aren't in the game at all.

Even with the (many, and very good) 3rd party mods out there, Oblivion NPCs feel like robots.

JarredWalton - Tuesday, June 3, 2008 - link

Oblivion NPCs can actually leave town, they sleep at night, they wander around a much larger area.... Yes, they feel scripted, but compared to the AC NPCs they are geniuses. The people in AC walk in tight loops - like imagine someone walking a path of about 500-1000 feet endlessly, with no interruptions for food, bed, etc. I'm not saying Oblivion is the best game ever, but it comes a lot closer to making you feel like it's a "real" world than Assassin's Creed.

But I still enjoyed the game overall.

erwendigo - Monday, June 2, 2008 - link

This is very old news. The DX10.1 support in this game eliminates one render pass, BUT at a cost: inferior image quality.

The rendered image isn't equal to the DX10 version, so Ubisoft dropped support for DX10.1 in the 1.02 patch.

A very simple story, with nothing about conspiracy theories or phantoms.

AnandTech guys, if you believe in these phantoms, then do a review with the 1.01 patch (it is still out there, guys; download and test the f***ing patch). Otherwise, your credibility will diminish thanks to this conspiracy theory.

JarredWalton - Tuesday, June 3, 2008 - link

I tested with version 1.00 and 1.02 on NVIDIA and ATI hardware. I provided images of 1.00 and 1.02 on both sets of hardware. The differences in image quality that I see are at best extremely trivial, and yet 1.02 in 4xAA runs about 25% slower on ATI hardware than 1.00.

What is version 1.01 supposed to show me exactly? They released 1.01, pulled it, and then released 1.02. Seems like they felt there were some problems with 1.01, so testing with it makes no sense.

erwendigo - Tuesday, June 3, 2008 - link

Well, you wrote several pages about the suspicious reasons for the dropped support of DX10.1.

If you sow the seeds of doubt, then you should have done a test for it.

The story of this dropped support has an official version (graphical bugs), and in many forums users reported this with the 1.01 patch (and DX10.1). Another version is the conspiracy theory, but this version has no proof.

Is this the truth? I don't know, I can't test this with my computer, but if you publish the conspiracy theory and test the performance and quality of the 1.0 and 1.02 versions, why don't you do the same with the 1.01 patch?

This is not about performance, this is to support your version of the story. With the test, your words earn respect; without it, your words turn into bad rumors.

JarredWalton - Tuesday, June 3, 2008 - link

I still don't get what you're after. Version 1.00 has DirectX 10.1 support; version 1.02 does not. Exactly what is version 1.01 supposed to add to that mix? Faulty DX10.1? Removed DX10.1 with graphical errors? I don't even know where to find it (if it exists), so please provide a link.

The only official word from Ubisoft is that DX10.1 "removed a rendering pass, which is costly." That statement doesn't even make sense, however, as what they really should have said is DX10.1 allowed them to remove a rendering pass, which was beneficial. Now, if it was beneficial, why would they then get rid of this support!?

As an example of what you're saying, Vista SP1 brings together a bunch of updates in one package and offers better performance in several areas relative to the initial release of the OS. So imagine we test networking performance with the launch version of Vista, and then we test it with SP1 installed, and we conclude that indeed somewhere along the way network performance improved. Then you waltz in and suggest that our findings are meaningless because we didn't test Vista without SP1 but with all the other standard updates applied. What exactly would that show? That SP1 was a conglomerate of previous updates? We already know that.

So again, what exactly is version 1.01 supposed to show? Version 1.02 appears to correct the errors that were seen with version 1.00. Unless version 1.01 removed DX10.1 and offered equivalent performance to 1.00 or kept DX10.1 and offered equivalent performance to 1.02, there's no reason to test it.

Maybe the issue is the version numbers we're talking about. I'm calling version 1.0.0.1 of the game - what the DVD shipped with - version 1.00. The patched version of the game is 1.0.2.1, so I call that 1.02. Here's what the 1.02 patch officially corrects:

------------------

* Fixed a rare crash while riding the horse in Kingdom

* Fixed a corruption of Altair’s robe on certain graphics hardware

* Cursor is now centered when accessing the Map

* Fixed a few problems with Alt-Tab

* Fixed a graphical bug in the final fight

* Fixed a few graphical problems with dead bodies

* Fixed pixellation with post-FX enabled on certain graphics hardware

* Fixed a small bug in the DNA Menu that would cause the image to disappear if the arrow was clicked rapidly

* Fixed some graphical corruption in Present Room with low Level Of Detail

* Character input is now canceled if the controller is unplugged while moving

* Added support for x64 versions of Windows

* Fixed broken post-effects on DirectX 10.1 enabled cards

------------------

I've heard more about rendering errors on NVIDIA hardware with v1.00 than I have of ATI hardware having problems. I showed a (rare) rendering error in the images that happens with ATI and 4xAA, but all you have to do is lock onto a target or enter Eagle Vision to get rid of the error (and I never saw it come back until I restarted the game).

Bottom line is I have PROOF that v1.00 and v1.02 differ in performance, specifically in the area of anti-aliasing on ATI 3000 hardware. If a version 1.01 patch ever existed, it doesn't matter in this comparison. The conspiracy "theory" part is why Ubisoft removed DX10.1 support. If you're naive enough to think NVIDIA had nothing to do with that, I wish you best of luck in your life. That NVIDIA and Ubisoft didn't even respond to our email on the subject speaks volumes - if you can't say anything that won't make you look even worse, you just ignore the problem and go on your merry way.

erwendigo - Tuesday, June 3, 2008 - link

Well, you asked me for links, so here are some of them:

The first and foremost:

http://www.rage3d.com/articles/assassinscreed%2Dad...

In this one, Rage3D (a pro-ATI website) analyzes the reason for the dropped support of DX10.1, with a comparison of images from the different rendering modes.

In this article the Rage3D people found several graphical bugs in the DX10.1 path. They described them as minor bugs, BUT I don't think that the lack of some effects in DX10.1 is a minor bug.

DX10.1 with AA activated lacks the dust effect, and the HDR rendering is different from the DX10 version.

Rage3D thinks that this shows a DX10 bug in HDR rendering, because they said that Ubisoft declared that HDR rendering in the DX9 and DX10 paths is identical, and they found in testing that DX10 and DX9 HDR rendering are different. This point could be true, but it's somewhat strange that the DX10 HDR rendering path would be buggy in the release version of the game, and in the 1.01 patch too.

It's more logical that the DX10 HDR was correct and that the difference with DX10.1 HDR reflects a different and buggy render path (do you remember the lack of one render pass?).

The performance speedup of the 1.01 patch in the game (in the Rage3D test) looks like your test results. Then, the lack of DX10.1 support in the 1.02 patch doesn't affect the performance. Yes, in DX10.1 it looks like the AA is better than in the other paths, but with this version you lack the dust effect and get different (buggy or not?) HDR rendering. Good reasons for the dropped support, I think.

Consequences of your rumors about sinister dropped support:

http://forums.vr-zone.com/showthread.php?t=283935

Some people believe this version because you defend it in your review, but you didn't test the veracity of this. The truth is that the DX10.1 render path had bugs, and when you made the review, you didn't know if the reason for the dropped support was the conspiracy theory or something else, but YOU chose one by personal choice.

That Ubisoft and NVIDIA didn't respond to your email proves nothing. At most, they were bad-mannered with you.