G.Skill Phoenix Blade (480GB) PCIe SSD Review

by Kristian Vättö on December 12, 2014 9:02 AM EST

Performance Consistency

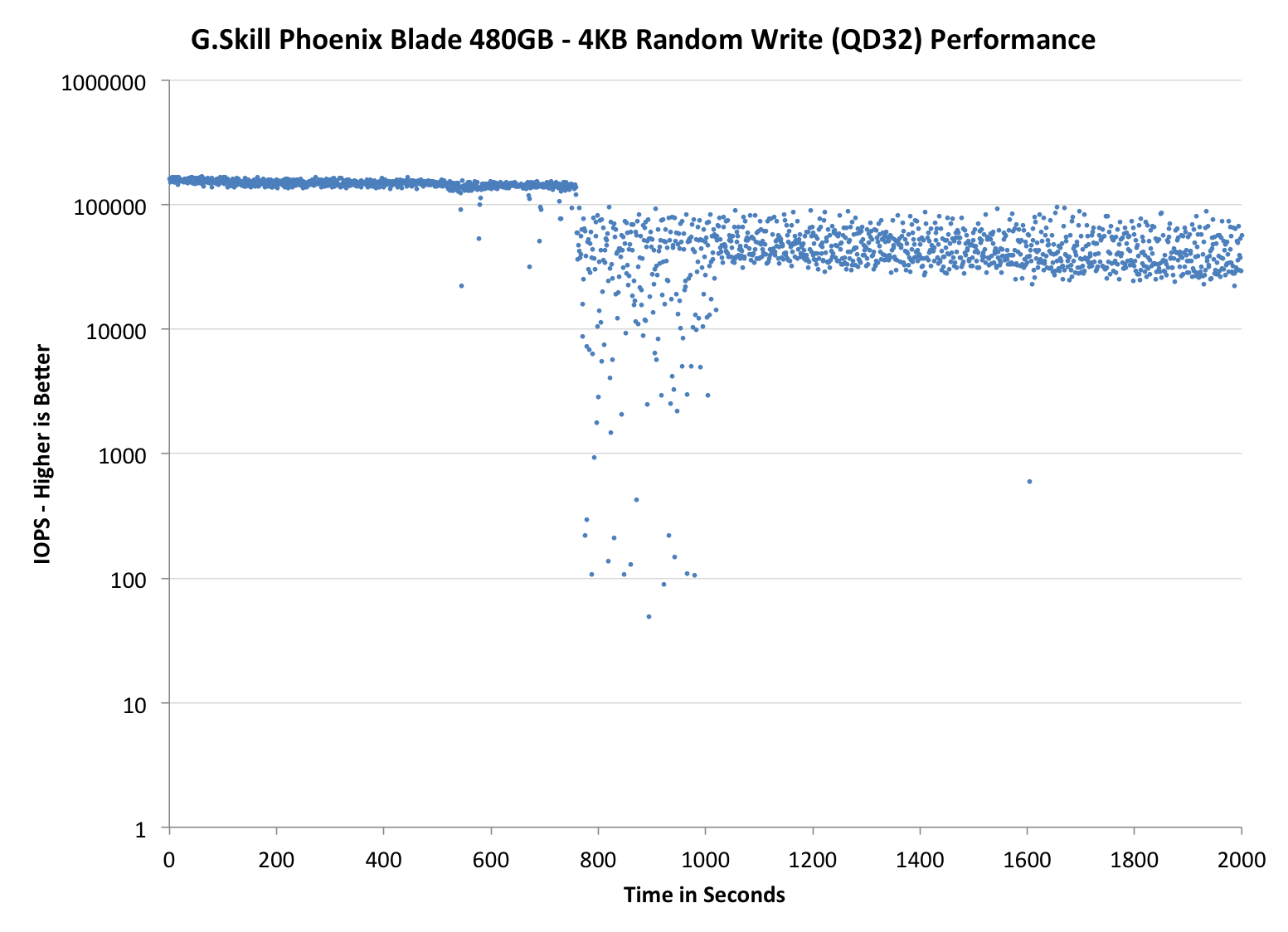

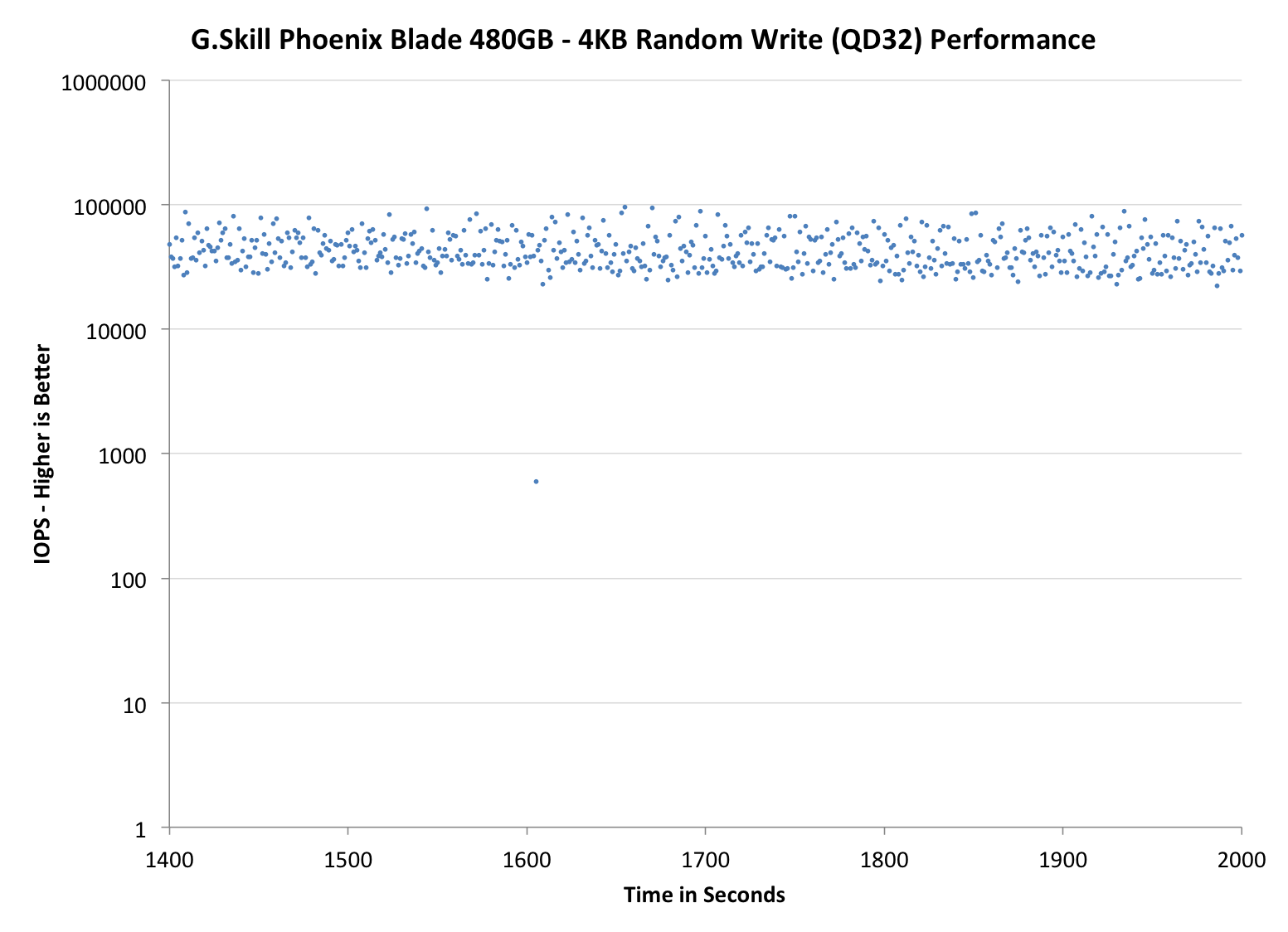

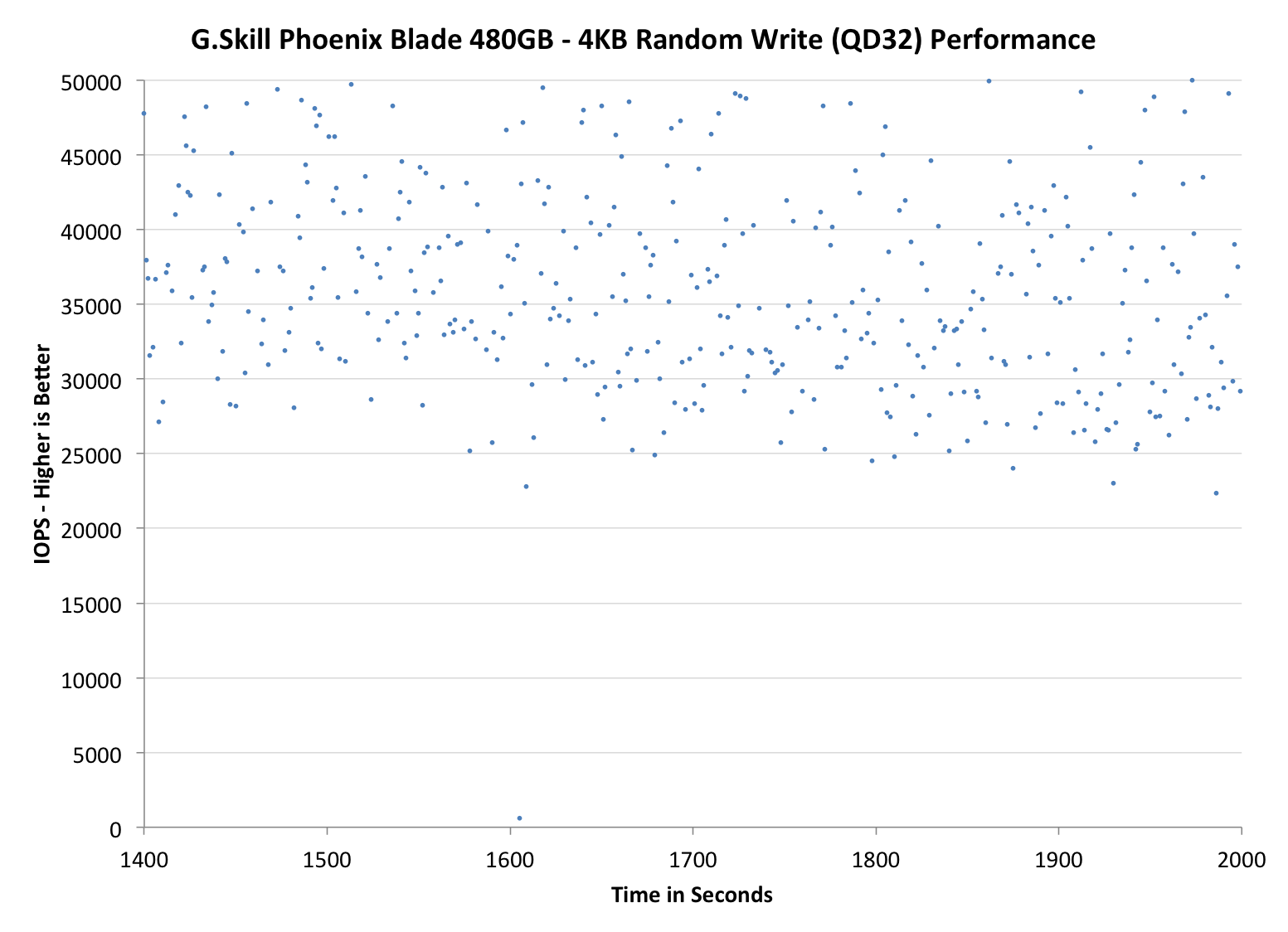

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. SSDs do not deliver consistent IO latency because every controller inevitably has to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.
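As a rough illustration of how the per-second IOPS samples from such a run could be summarized, here is a minimal Python sketch. It is not the tooling used for this review: the function names, the coefficient-of-variation metric and the synthetic sample data are all assumptions made for the example. The default window start of 1400 seconds mirrors the point where the graphs zoom into steady-state.

```python
from statistics import mean, pstdev


def steady_state_summary(iops_per_second, start_s=1400):
    """Summarize per-second IOPS samples from start_s onward.

    iops_per_second: one IOPS reading per second of the test.
    start_s: assumed start of steady-state (hypothetical default).
    """
    window = iops_per_second[start_s:]
    if not window:
        raise ValueError("no samples in the steady-state window")
    avg = mean(window)
    return {
        "mean": avg,
        "min": min(window),
        "max": max(window),
        # Coefficient of variation: lower means more consistent IOPS.
        "cv": pstdev(window) / avg if avg else float("inf"),
    }


def fraction_below(iops_per_second, threshold, start_s=1400):
    """Share of steady-state seconds that fall below an IOPS threshold."""
    window = iops_per_second[start_s:]
    if not window:
        raise ValueError("no samples in the steady-state window")
    return sum(1 for v in window if v < threshold) / len(window)


if __name__ == "__main__":
    # Synthetic data, not measurements: a fast start, then an
    # oscillating steady-state for the remaining 600 seconds.
    samples = [100_000] * 1400 + [30_000, 10_000] * 300
    print(steady_state_summary(samples))
    print(fraction_below(samples, threshold=20_000))
```

A drive that frequently dips under a given IOPS level would show a higher `fraction_below` value and a higher `cv` than a drive that holds a steady rate.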

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
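The arithmetic behind limiting the LBA range can be sketched as follows. The article does not spell out how the percentage is defined, so this example assumes the spare area is counted as a fraction of raw NAND capacity; the 512GiB raw capacity and the 512-byte sector size are hypothetical values chosen for illustration, not specifications of any drive reviewed here.

```python
def limited_lba_count(raw_bytes, spare_fraction, sector_bytes=512):
    """Number of LBAs to expose so that spare_fraction of raw NAND stays unused.

    Assumes spare area is defined relative to raw NAND capacity
    (other definitions exist, e.g. relative to user capacity).
    """
    if not 0 <= spare_fraction < 1:
        raise ValueError("spare_fraction must be in [0, 1)")
    usable_bytes = int(raw_bytes * (1 - spare_fraction))
    return usable_bytes // sector_bytes


if __name__ == "__main__":
    raw = 512 * 2**30  # hypothetical raw NAND capacity: 512GiB
    lbas = limited_lba_count(raw, spare_fraction=0.25)
    print(lbas, "LBAs,", lbas * 512 / 2**30, "GiB addressable")
```

The test workload would then be confined to LBAs below the returned count, leaving the rest of the drive untouched.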

Each of the three graphs has its own purpose. The first one shows the whole duration of the test in log scale. The second and third zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second uses log scale for easy comparison whereas the third uses linear scale for better visualization of differences between drives. Click the dropdown selections below each graph to switch the source data.

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.


Even though the Phoenix Blade and RevoDrive 350 share the same core controller technology, their steady-state behaviors are quite different. The Phoenix Blade delivers substantially higher peak IOPS (~150K) and is also more consistent in steady-state: the RevoDrive frequently drops below 20K IOPS, while the Phoenix Blade doesn't.


TRIM Validation

To test TRIM, I turned to our regular TRIM test suite for SandForce drives. First I filled the drive with incompressible sequential data, which was followed by 60 minutes of incompressible 4KB random writes (QD32). To measure performance before and after TRIM, I ran a one-minute incompressible 128KB sequential write pass.

Iometer Incompressible 128KB Sequential Write

| Drive | Clean | Dirty | After TRIM |
| --- | --- | --- | --- |
| G.Skill Phoenix Blade 480GB | 704.8MB/s | 124.9MB/s | 231.5MB/s |

The good news here is that the drive receives the TRIM command. Unfortunately it doesn't fully restore performance, although that is a known problem with SandForce drives. What's notable is that the first LBAs after the TRIM command were fast (over 600MB/s), so in due time the performance across all LBAs should recover at least to a certain point.
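To put the table in relative terms, the short Python sketch below expresses the dirty and post-TRIM throughput as a percentage of the clean figure. The helper name is made up for this example; the three input numbers are the ones from the table above. It works out to roughly 18% of clean performance in the dirty state and roughly 33% after TRIM.

```python
def percent_of_clean(throughput_mbps, clean_mbps):
    """Express a throughput figure as a percentage of the clean-state result."""
    if clean_mbps <= 0:
        raise ValueError("clean_mbps must be positive")
    return 100.0 * throughput_mbps / clean_mbps


if __name__ == "__main__":
    # 128KB sequential write results from the table (MB/s).
    clean, dirty, after_trim = 704.8, 124.9, 231.5
    print(f"Dirty:      {percent_of_clean(dirty, clean):.1f}% of clean")
    print(f"After TRIM: {percent_of_clean(after_trim, clean):.1f}% of clean")
```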

62 Comments


Duncan Macdonald - Friday, December 12, 2014

How does this compare to 4 240GB Sandforce SSDs in software RAID 0 using the Intel chipset SATA interfaces?

Kristian Vättö - Friday, December 12, 2014

Intel chipset RAID tends not to scale that well with more than two drives. I have to admit that I haven't tested four drives (or any RAID 0 in a while) to fully determine the performance gains, but it's safe to say that the Phoenix Blade is better than any Intel RAID solution since it's more optimized (specific hardware and custom firmware).

nathanddrews - Friday, December 12, 2014

Sounds like you just set yourself up for a Capsule Review.

Havor - Saturday, December 13, 2014

I don't get the high praise for this drive. Sure, it has value for people who need high sequential speed, or people who use it to host a database on a budget that gets tons of requests and can utilize high QD; all others are better off with a SATA SSD that performs much better at a QD of 2 or less.

As desktop users almost never go over QD2 in real-world use, they would be much better off with an 8x0 EVO or so, both performance-wise and price-wise.

I am actually one of the few that could use the drive, if I had space for it (running quad SLI), as I use a RAMdrive and copy programs and games that are stored on the SSD in a RAR file, through a script, from an R0 set of SSDs to the RAMdisk, so high sequential speed is king for me.

But I count myself in the 0.1% of nerds that does things like that because I like doing stuff like that; any other sane person would just use an SSD to run their programs off.

Integr8d - Sunday, December 14, 2014

The typical self-centered response: "This product doesn't apply to me. So I don't understand why anyone else likes it or why it should be reviewed," followed by, "Not that my system specs have ANYTHING to do with this, but here they are... 16 video cards, raid-0 with 16 ssd's, 64TB ram, blah blah blah..." They literally just look for an excuse to brag...

It's like someone typing a response to a review of Crest toothpaste. "I don't really know anything about that toothpaste. But I saw some, the other day, when I went to the store in my 2014 Dodge Charger quad-Hemi supercharged with Borla exhaust, 20" BBS with racing slicks, HID headlights, custom sound system, swimming pool in the trunk and with wings on the side so I can fly around."

It's comical.

dennphill - Monday, December 15, 2014

Thanks, Integr8d, you put a smile on my face this morning! My feelings exactly.

pandemonium - Tuesday, December 16, 2014

Hah. Nicely done, Integr8d.

alacard - Friday, December 12, 2014

The DMI interface between the chipset and the processor maxes out at about 1800~1850MB/s and this bandwidth has to be split between all the devices connected to the PCH, which also incorporates an 8x PCIe 2.0 link. Simply put, there's not enough bandwidth to go around with more than two drives attached to the chipset in RAID, not to mention that the scaling beyond 2 drives is fairly bad in general through the PCH even when nothing else is going on. And to top it all off, 4k performance is usually slightly slower in RAID than on a single SSD (i.e. it doesn't scale at all).

I know Tomshardware had an article or two on this subject if you want to google it.

personne - Friday, December 12, 2014

It takes three SSDs to saturate DMI. And 4k writes are nearly double on long queue depths. So you get more capacity, higher cost, and much of the performance benefit for many operations. Certainly tons more than a single SSD at a linear cost. If you research your statements.

alacard - Friday, December 12, 2014

To your first point about saturating DMI, we're in agreement. Reread what i said.

To your second point about 4k, you are correct but i've personally had three separate sets of RAID 0 on my performance machine (2 vertex 3s, 2 vertex 4s, 2 vectors), and i can tell you that those higher 4k results were not impactful in any way when compared to a single SSD. (Programs didn't load faster for instance.)

http://www.tomshardware.com/reviews/ssd-raid-bench...

That leaves me curious as to what you're doing that allows you to get the benefits of high queue depth RAID0? What's your setup, what programs do you run? I ask because for me it turned out not to be worth the bother, and this is coming from someone who badly wanted it to be. In the end the higher low queue depth 4k of 1 SSD was a better option for me so i switched back.

http://www.hardwaresecrets.com/article/Some-though...