NVIDIA GeForce4 - NV17 and NV25 Come to Life

by Anand Lal Shimpi on February 6, 2002 8:51 AM EST - Posted in GPUs

The NV17 comes home

We really tried our best to hint at where we thought this one was going around the Comdex timeframe with our article on the NV17M. If you remember, the NV17M was announced on the first day of last year's Comdex and interestingly enough the mobile GPU was announced without an official name.

It turned out that the NV17M was eventually going to be a desktop part as well under the codename NV17, which is what we had always known as the GeForce3 MX. The major problem with this name is that the NV17 core lacks all of the DirectX 8 pixel and vertex shader units that made the original GeForce3 what it was. Instead, the NV17 would basically be a GeForce2 MX with an improved memory controller, multisample AA unit, and updated video features; another way of looking at it would be the GeForce3 without two pixel pipelines or DirectX 8 compliance. The problem most developers will have with this is that the uneducated end user would end up purchasing the GeForce3 MX with the idea that it had at least the basic functionality of the regular GeForce3, only a bit slower. In reality, with a GeForce3 MX on the market, developers could not assume that a great portion of their users had DX8 compliant cards.

Luckily, NVIDIA decided against calling the desktop NV17 the GeForce3 MX. Unfortunately, they settled on the name GeForce4 MX instead. This is even more misleading to those who aren't well informed, as it gives the impression that the card has at least the minimal set of features that the GeForce3 had, which it doesn't.

More specifically, the GeForce4 MX features no DirectX 8 pixel shaders and only limited support for vertex shaders. The chip does support NVIDIA's Shading Rasterizer (NSR) from the original GeForce2 but that's definitely a step back from the programmable nature of the GeForce3 core.

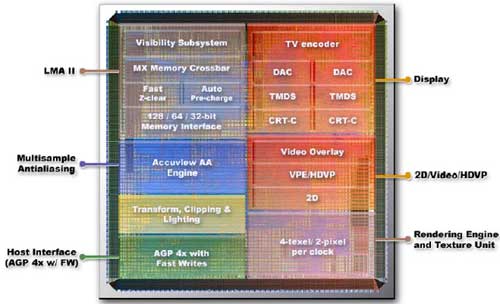

NVIDIA did, however, make the GeForce4 MX very powerful at running present day titles. While the core still only features two pixel pipelines, each able to apply two textures (thus offering half the theoretical fill rate of an equivalently clocked GeForce4), it does feature the Lightning Memory Architecture II from its elder brother as well as a few other features.
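
The "half the theoretical fill rate" figure is simple arithmetic, and a minimal sketch makes it concrete. The function name is hypothetical, the 300MHz clock is the MX 460 core clock quoted later in this article, and the 4 pipelines x 2 textures layout for the full GeForce4 is an assumption inferred from the "half" comparison above. These are idealized peak numbers, not measured performance.

```python
def fill_rates(core_mhz, pipelines, textures_per_pipeline):
    """Return idealized (Mpixels/s, Mtexels/s) for a given pipeline layout.

    Assumes every pipeline outputs one pixel per clock and every texture
    unit applies one texel per clock, which real workloads rarely sustain.
    """
    pixel_rate = core_mhz * pipelines
    texel_rate = pixel_rate * textures_per_pipeline
    return pixel_rate, texel_rate


# GeForce4 MX layout from the article: 2 pipelines, 2 textures each.
mx_pixels, mx_texels = fill_rates(300, pipelines=2, textures_per_pipeline=2)

# Assumed full GeForce4 layout at the same clock: 4 pipelines, 2 textures each.
ti_pixels, ti_texels = fill_rates(300, pipelines=4, textures_per_pipeline=2)

print(f"GeForce4 MX @ 300MHz: {mx_pixels} Mpixels/s, {mx_texels} Mtexels/s")
print(f"GeForce4    @ 300MHz: {ti_pixels} Mpixels/s, {ti_texels} Mtexels/s")
print(f"MX / full ratio: {mx_texels / ti_texels}")
```

At equal clocks the ratio depends only on the pipeline count, which is why the comparison holds regardless of the clock speed chosen.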

The GeForce4 MX's memory controller isn't borrowed unchanged from the GeForce4; the one change is that only two independent memory controllers make up the LMA II on the GeForce4 MX. So instead of having 4 x 32-bit load-balanced memory controllers, the GeForce4 MX has 2 x 64-bit load-balanced memory controllers. While it's unclear what performance difference exists, if any, between the two options (and it is also very difficult to measure), it is very clear why NVIDIA chose such a setup for the GeForce4 MX's memory controllers. Remember that the nForce chipset features a dual channel 64-bit DDR memory interface, and also remember that the GeForce2 MX was the integrated graphics core of the first-generation nForce chipset. You can just as easily expect the GeForce4 MX core to be used in the next-generation nForce platform, especially since the memory controller will work so well in it without modification.
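
To see why the 2 x 64-bit split lines up with nForce, here is a rough sketch that compares the layouts. The `MemoryLayout` class and `same_partitioning` helper are hypothetical names used only for illustration, and treating nForce's two 64-bit DDR channels as "2 controllers x 64 bits" is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryLayout:
    """Hypothetical description of how a memory interface is partitioned."""
    name: str
    controllers: int
    bits_per_controller: int

    @property
    def total_bits(self) -> int:
        # Aggregate interface width across all independent controllers.
        return self.controllers * self.bits_per_controller


def same_partitioning(a: MemoryLayout, b: MemoryLayout) -> bool:
    """True when both layouts use the same number and width of controllers."""
    return (a.controllers, a.bits_per_controller) == (
        b.controllers,
        b.bits_per_controller,
    )


geforce4 = MemoryLayout("GeForce4", controllers=4, bits_per_controller=32)
geforce4_mx = MemoryLayout("GeForce4 MX", controllers=2, bits_per_controller=64)
# Assumption: model nForce's dual channel 64-bit DDR interface as 2 x 64-bit.
nforce = MemoryLayout("nForce", controllers=2, bits_per_controller=64)

for layout in (geforce4, geforce4_mx, nforce):
    print(f"{layout.name}: {layout.controllers} x {layout.bits_per_controller}-bit "
          f"= {layout.total_bits}-bit total")

print("GeForce4 MX matches nForce:", same_partitioning(geforce4_mx, nforce))
print("GeForce4 matches nForce:", same_partitioning(geforce4, nforce))
```

Both GPU layouts add up to the same 128-bit aggregate width, so the peak bandwidth is identical; only the granularity of the independent controllers differs.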

The rest of the GeForce4 MX is virtually identical to that of the GeForce4; it features the same Accuview AA engine, the same nView support, and the same improved Visibility Subsystem. The only remaining difference is that the GeForce4 MX features dual integrated TMDS transmitters for dual DVI output of resolutions up to 1280 x 1024 per monitor. The inclusion of nView makes the GeForce4 MX a very attractive card, especially at the lower price points for corporate users looking for good dual monitor support.

Today the GeForce4 MX line consists of three cards: the MX 460, the MX 440 and the MX 420. While all three feature a 128-bit memory bus with a 64MB frame buffer, only the 460 and 440 use DDR SDRAM; the 420 features conventional SDR SDRAM.

The GeForce4 MX 460 will be priced at $179 and it will have a 300MHz GPU clock and a 275MHz memory clock. The MX 440 will be clocked internally at 270MHz with a 200MHz memory clock and it will be priced at $149. Finally the entry-level GeForce4 MX 420 will be clocked at 250MHz core with 166MHz memory and it will retail for $99.
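
The memory clocks and bus width above translate directly into peak memory bandwidth. The sketch below works it out for all three cards; the function and dictionary names are hypothetical, and the calculation assumes the quoted memory clocks are the physical clocks, with DDR transferring twice per clock and SDR once. These are theoretical peaks, using decimal gigabytes.

```python
def peak_bandwidth_gbs(bus_bits, mem_mhz, ddr):
    """Theoretical peak memory bandwidth in GB/s (1 GB = 1000 MB).

    bus_bits: total memory bus width in bits
    mem_mhz:  physical memory clock in MHz
    ddr:      True for DDR (two transfers per clock), False for SDR
    """
    bytes_per_transfer = bus_bits // 8
    transfers_per_clock = 2 if ddr else 1
    mb_per_second = bytes_per_transfer * mem_mhz * transfers_per_clock
    return mb_per_second / 1000


# Memory clock (MHz) and memory type for each card, as quoted in the article.
GEFORCE4_MX_CARDS = {
    "MX 460": (275, True),
    "MX 440": (200, True),
    "MX 420": (166, False),
}

for name, (mem_mhz, ddr) in GEFORCE4_MX_CARDS.items():
    kind = "DDR" if ddr else "SDR"
    bandwidth = peak_bandwidth_gbs(128, mem_mhz, ddr)
    print(f"GeForce4 {name}: 128-bit {kind} @ {mem_mhz}MHz = {bandwidth:.2f} GB/s")
```

Under these assumptions the MX 460 works out to 8.8 GB/s, the MX 440 to 6.4 GB/s, and the SDR-based MX 420 to roughly 2.7 GB/s, which shows how much of the gap between the 420 and its siblings comes from the memory type rather than the clock alone.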

Now it's time to address the obvious tradeoffs that NVIDIA made on the GeForce4 MX. First of all there is the naming convention which developers have already been complaining to NVIDIA about. No longer can developers assume that going forward, their user base will have a DX8 compliant video card installed. You have to realize that well over 80% of all graphics cards sold are priced under $200. Since that is the market that the GeForce4 MX is after, should the product succeed then a great deal of that 80%+ of the market will not have DX8 support which means that developers will be less inclined to support those features in their games. For anyone that's ever seen what can be done with programmable pixel shaders, it's very clear that we want developers to use those features as soon as possible.

From NVIDIA's standpoint, there are no major titles that require those features, and the cost in die size of implementing DX8 pixel and vertex shaders is too great for a 0.15-micron part priced as low as $99. The GeForce4 MX will do quite well at today's titles while offering great features such as nView for those that care about more than just gaming.

The situation we're placed in is a difficult one; provided that you want cheap but excellent performance in today's games, it would seem that you're limited to the GeForce4 MX. Yet by purchasing the GeForce4 MX you're actually working against developers bringing DX8 features to the mass market. This leaves you with three options. The first two are to stick with a GeForce3 Ti 200 or to go with ATI's Radeon 8500LE 128MB.

Remember the GeForce4 Ti 4200 we mentioned earlier? That's your third option. NVIDIA realizes that at the $199 price point the clear recommendation would be to stay far, far away from a GeForce4 MX as you wouldn't be buying any future performance at all. At the same time, ATI realized the weakness in NVIDIA's product line (they've known about it for a while now) and positioned the 128MB Radeon 8500LE at a price point very similar to that of the GeForce4 MX 460. When picking between the two, the Radeon 8500LE clearly offers better support for the future and would be the better option. In order to prevent the Radeon 8500LE from completely taking over the precious $150 - $199 market, NVIDIA will be providing the GeForce4 Ti 4200 at the $199 price point. This part will offer full DX8 pixel and vertex shader support, yet it will be priced at a very competitive level.

Although this effectively makes the GeForce4 MX 460 pointless, as it is priced only $20 less, it does solve the issue of getting too many new non-DX8 compliant cards into the hands of end users. The important task now is to make sure that all serious gamers opt for at least the GeForce4 Ti 4200 and not a GeForce4 MX card. Again, the GeForce4 Ti 4200 won't be available for another 8 weeks or so, but it's definitely worth waiting for if you're going to be purchasing a sub-$200 gaming card.
