IBM and NVIDIA Announce Data Analytics & Supercomputer Partnership

by Ryan Smith on November 18, 2013 9:02 AM EST

Our other piece of significant NVIDIA news to coincide with the start of SC13 comes via a joint announcement from NVIDIA and IBM. Together the two are announcing a fairly broad partnership that will see GPUs and GPU acceleration coming to both IBM’s data analytics software and to the IBM Power supercomputers running some of those applications.

First and foremost, on the software side of matters IBM and NVIDIA are announcing that the two are collaborating on adding GPU acceleration to IBM’s various enterprise software packages, most notably IBM’s data analytics packages (InfoSphere, etc.). Though generally not categorized as a supercomputer-scale project, data analytics can at times be a significantly compute-bound task, making it a potential growth market for NVIDIA’s Tesla business.

NVIDIA has already landed somewhat similar deals for data analysis in the past, but any potential deal with IBM would represent a significant step up for the Tesla business given the vast scale of IBM’s own business dealings. NVIDIA is still in the phase of business development where they’re looking to vastly grow their customer base, and IBM in turn has no shortage of potential customers. But with that said this is an early announcement of collaboration, and while we’d expect IBM and NVIDIA to find some ways to improve their performance via GPUs – primarily on a power efficiency basis – it’s far too early for either party to be talking about performance numbers.

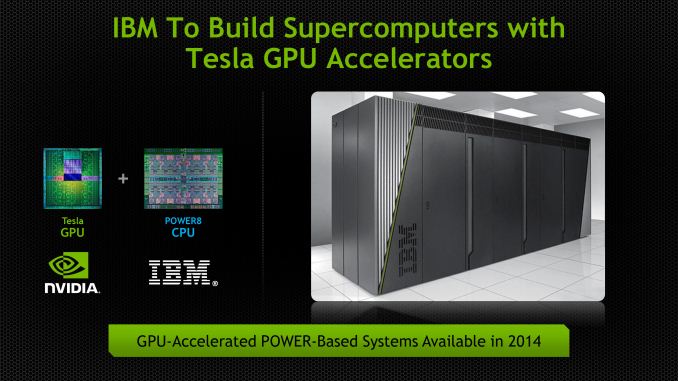

Of course IBM is very much a vertically integrated company, offering mixes of software, hardware, and services to their customers. So any announcement of GPU acceleration for IBM’s software would go hand in hand with a hardware announcement, and that’s what’s occurring today.

So along with IBM and NVIDIA’s software collaboration, the two are announcing that IBM will begin building Tesla-equipped Power computers in 2014. IBM announced POWER8 back at Hot Chips 2013, and along with a suite of architectural improvements to the processor itself, it’s the first POWER chip to be specifically designed for use with external processors via its CAPI port. As such, it’s the first POWER chip well suited for being used alongside a Tesla card.

At this point NVIDIA and IBM are primarily talking about their hardware partnership as a way to provide hardware for the aforementioned software collaboration for use in data centers and the like, itself a new market for Tesla. But of course there are longer term implications for the supercomputer market. POWER CPUs on their own have been enough to hold high spots on the Top500 list for years – BlueGene/Q systems being the most recent example – so the combination of POWER CPUs and Tesla GPUs could be very potent in the right hands. However well the x86 market treats NVIDIA, there are a number of users of POWER and other less common architectures that NVIDIA cannot currently reach, so being able to get their GPUs inside of an IBM supercomputer is a huge achievement for the company in that respect. And if nothing else, this announcement gets NVIDIA a close CPU partner in the server/supercomputer market, which is something they didn't have before.

On a final note, it’s worth pointing out that all of this comes only a few months after IBM formed the OpenPOWER Consortium, of which NVIDIA is also a member. For the moment we’re just seeing the barest levels of integration between NVIDIA’s GPUs and IBM’s POWER CPUs, but a successful collaboration would leave the door open to closer work between the two in the future. Both companies offer licensing of their respective core IP, so if NVIDIA and IBM wanted to make a run at the server/compute market with a fully integrated APU, this would be the first step towards accomplishing that.

11 Comments

Kevin G - Monday, November 18, 2013

The problem with this arrangement is that I see nVidia stabbing IBM in the back almost immediately on the hardware side. Recall that nVidia is developing their own ARM core and will be integrating that into future GPUs. There is still a need for fast serial code but nVidia is simply looking to provide that themselves over the long term. However, IBM currently rules the performance race as they're one of the few companies who can design chips that outperform Intel's best (i.e. POWER7 vs. Ivy Bridge-EP). POWER + nVidia will be there as a best serial and best parallel combo but I see this as a small niche subset of an already small niche.

On the IBM side of things, this does enable them to grab lots of cheap compute. BlueGene was their solution to this area and while I suspect that it'll carry on, some of BlueGene's customers will be moved over to big POWER + nVidia GPUs for cost concerns.

IBM does sell nVidia hardware already but mainly inside of their x86 based servers. There have been some rumors over the past year that IBM was looking to sell off their x86 lineup. If that is true, offering nVidia hardware on the POWER side makes more sense in the long run.

HighTech4US - Monday, November 18, 2013

Quote: The problem with this arrangement is that I see nVidia stabbing IBM in the back almost immediately on the hardware side.

Very CharLIEish.

dragonsqrrl - Monday, November 18, 2013

Yep

Kevin G - Tuesday, November 19, 2013

Well, nVidia is developing their own ARM based architecture and long term appears to be moving away from the need for a host processor/system. So what would be nVidia's motivation to continue to develop POWER based support long term when they're clearly supporting ARM?

mmrezaie - Monday, November 18, 2013

Don't worry about IBM. They are big boys. The thing is IBM's sales were declining. nVidia is also being threatened by Intel MIC. They needed to work together. Let's hope something good and sustainable comes from it.

Kevin G - Tuesday, November 19, 2013

Servers thus far have been immune from the decline on the consumer side. The wonderful thing about the mobile sector is that backend servers are needed for many of the applications. Though those servers are typically x86 based, typically VMs on a cluster. The Unix and mainframe markets have started to collapse as the x86 solutions have approached 'good enough' for the majority of use-cases. (I do expect a bit of rebound next year as IBM's POWER8 chip and a new mainframe chip are due then, right in line with expected upgrade cycles.)

I would like to see an IBM POWER + nVidia GPU for the HPC market. It'd look a bit different from anything else we've seen on the consumer side due to IBM's focus on interconnects and bandwidth. Going crazy like a 1024 bit wide memory interface would be overkill for a chip that'd eventually make its way to consumers but for IBM they wouldn't even blink. The interconnects between these SoCs would likely be something unique due to the massive amount of bandwidth being consumed per node. Though as I alluded to above, I can't see this relationship lasting long term as nVidia clearly has plans involving ARM.

fteoath64 - Wednesday, November 20, 2013

A great win for Nvidia, but why did it take so long? It is clear that analytics requires huge floating-point power, and those GPUs have plenty of it at low latencies, with huge parallelism to achieve huge speed gains. I guess there comes a time where upgrading costs are prohibitive for those companies, hence the need for hybrid CPU/GPU compute systems. Having had experience in supercomputers makes it easier for Nvidia, I guess (in the GPU context, not the CPU context as with AMD).

Hector2 - Monday, November 18, 2013

It would seem that IBM is reacting to stiff competition. In the related supercomputer world, "Intel continues to provide the processors for the largest share (82.4 percent) of Top500 systems". 13 of the Top500 systems now have the Intel PHI (MIC) technology including #1, Tianhe-2 (MilkyWay-2).

errorr - Tuesday, November 19, 2013

I wonder how this works on a software pricing level considering how cores are currently priced. From a customer point of view hardware is a fairly insignificant expense for these types of data analytics licenses. It will all depend on if you can get better performance per license.

I also would dispute the idea that IBM is as vertically integrated as they like to pretend. Software and hardware certainly are but GBS (services) isn't integrated at all. The only benefit GBS has over Accenture or Deloitte or any other major integrator is that it is easier to get special pricing without the need for lawyers and NDA agreements beforehand. Otherwise the 2 sides of IBM are strangers by design as the Services side has to convince customers that they can give you Oracle or SAP and do just as good of a job. I know this is beside the point of the post but it is something that I like to point out.