Choosing a Gaming CPU October 2013: i7-4960X, i5-4670K, Nehalem and Intel Update

by Ian Cutress on October 3, 2013 10:05 AM EST

Dirt 3

Dirt 3 is a rallying video game, developed and published by Codemasters, and the third entry in the Dirt series of Colin McRae Rally games. Dirt 3 also falls under the list of ‘games with a handy benchmark mode’. In previous testing, Dirt 3 has always seemed to love cores, memory, GPUs, PCIe lane bandwidth, everything. The small issue with Dirt 3 is that, depending on the benchmark mode tested, the benchmark launcher is not indicative of gameplay per se, citing numbers higher than those actually observed in play. On top of this, the benchmark mode includes an element of uncertainty, because it actually drives a race rather than replaying a predetermined sequence of events as Metro 2033 does. In essence this should make the benchmark more variable, so we take repeated runs to smooth this out. Using the benchmark mode, Dirt 3 is run at 1440p with Ultra graphical settings. Results are reported as the average frame rate across four runs.
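Because each Dirt 3 benchmark pass drives a slightly different race, the reported figure is an average over four runs. The short Python sketch below illustrates that step, assuming each run yields a single average FPS value. The helper names `average_fps` and `run_spread` and the run values are hypothetical illustrations, not our actual tooling or measured data.

```python
from statistics import mean


def average_fps(run_fps):
    """Return the mean of per-run average frame rates.

    Hypothetical helper: each entry is assumed to be the average FPS
    reported by one pass of the Dirt 3 benchmark mode.
    """
    if not run_fps:
        raise ValueError("need at least one benchmark run")
    return mean(run_fps)


def run_spread(run_fps):
    """Return max - min across runs, a rough view of run-to-run variability."""
    return max(run_fps) - min(run_fps)


# Illustrative numbers only, not measured results from this article.
runs = [101.2, 98.7, 100.4, 99.7]
print(f"reported: {average_fps(runs):.1f} FPS, "
      f"spread across runs: {run_spread(runs):.1f} FPS")
```

The spread is included only to show why a single pass is not trusted on its own: individual runs can land a few FPS apart, while the four-run mean is a steadier number to compare between CPUs.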

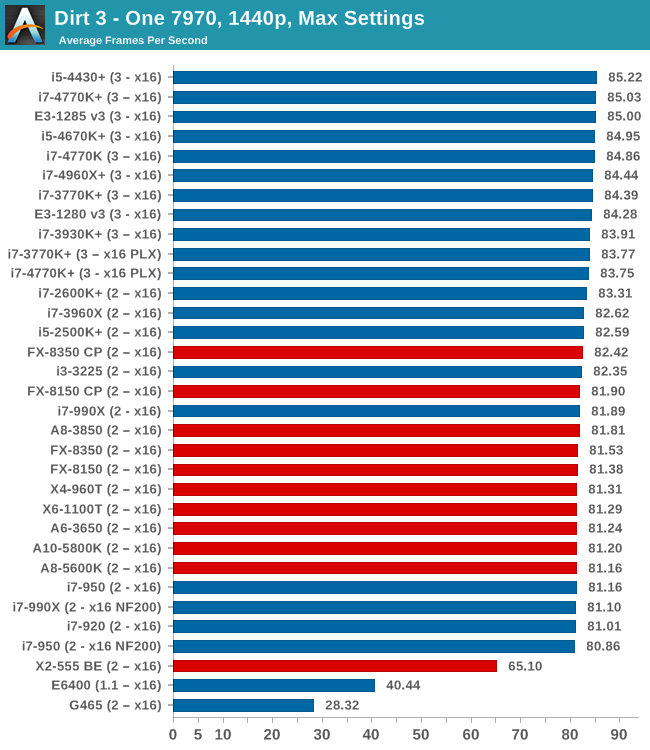

One 7970

Similar to Metro, pure dual core CPUs seem best avoided when pushing a high resolution with a single GPU. The Haswell CPUs seem to be near the top due to their IPC advantage.

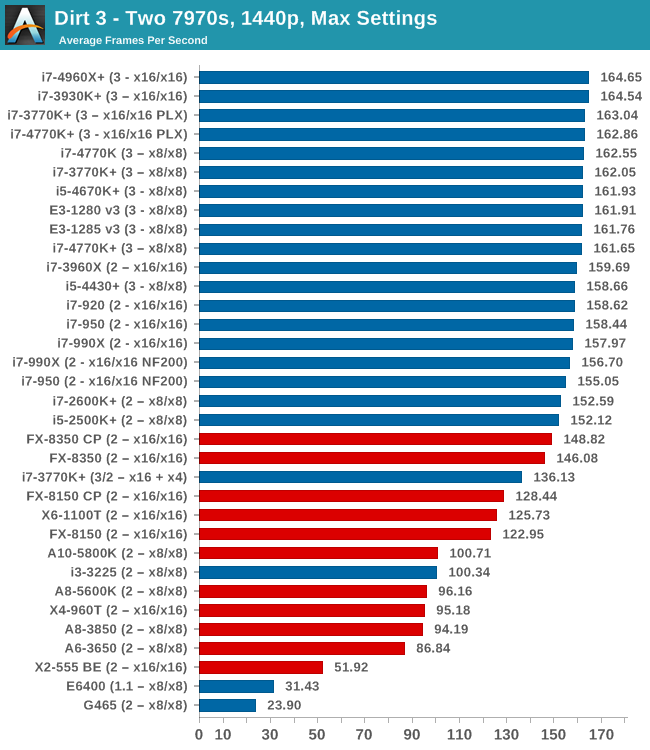

Two 7970s

When running dual AMD GPUs, only the top AMD chips seem to latch on to the tail of the Intel results, with the hex-core CPUs taking the top spots. Again there's no real change moving from the 4670K to the 4770K, and even the Nehalem CPUs keep up within 4% of the top spots.

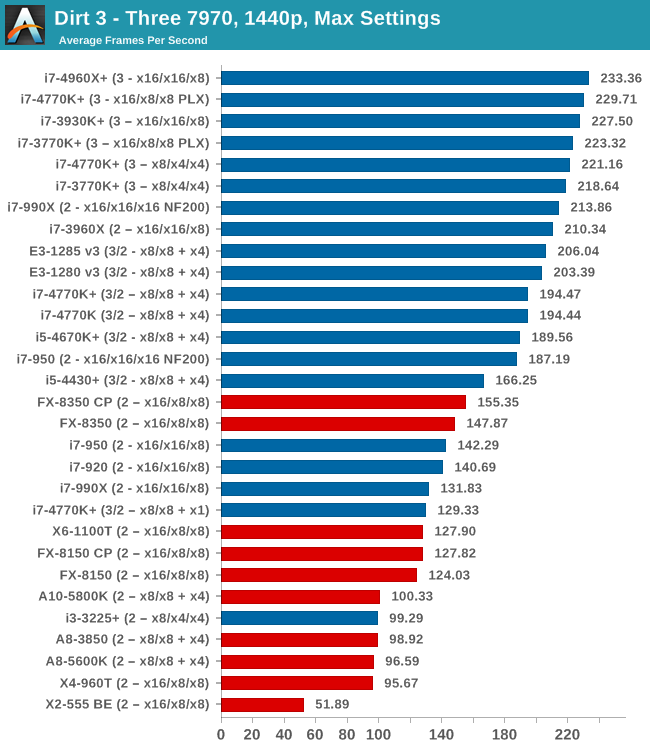

Three 7970s

At three GPUs the 4670K seems to provide the equivalent grunt to the 4770K, though more cores and more lanes seem to be the order of the day. Moving from a hybrid CPU/PCH lane allocation (x8/x8 from the CPU plus x4 from the PCH) to a pure CPU allocation (x8/x4/x4) is worth a 30 FPS rise by itself. The Nehalem CPUs, without NF200 support, seem to be on the back foot, performing worse than Piledriver.

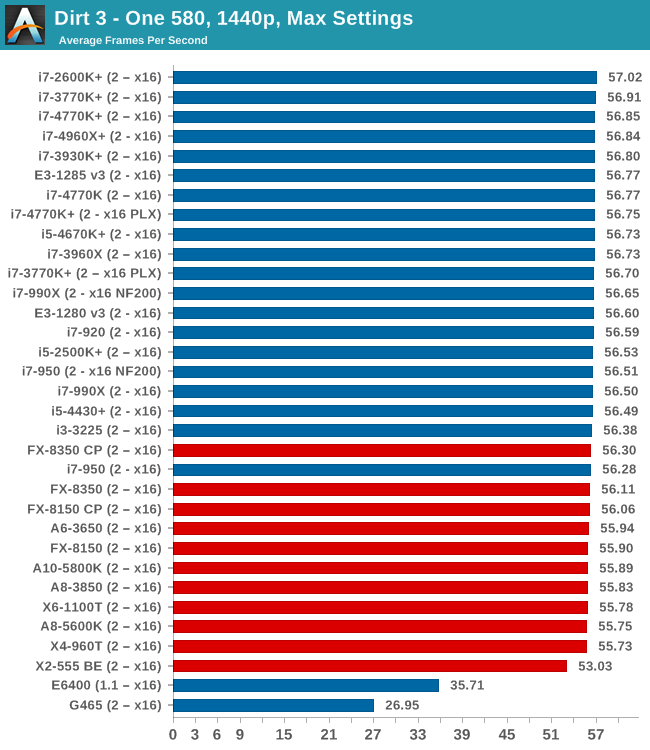

One 580

On the NVIDIA side, one GPU performs similarly across the board in our test.

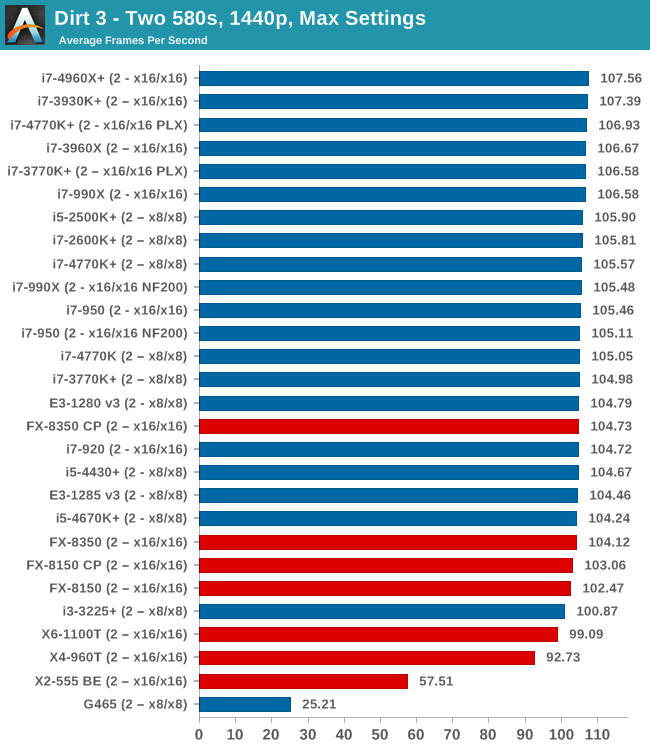

Two 580s

When it comes to dual NVIDIA GPUs, the latest AMD architecture or anything above a dual-core Intel Sandy Bridge processor is enough to hit 100 FPS.

Dirt 3 Conclusion

Our big variations occurred on the AMD GPU side, where it was clear that with more than two GPUs, moving on from Nehalem might bring a boost to frame rates. The 4670K is still on par with the 4770K in our testing, and the i5-4430 followed a similar line most of the way but was down a peg with three GPUs.

137 Comments


tim851 - Thursday, October 3, 2013

You know, once you go Quad-GPU, you're spending so much money already that not going with Ivy Bridge-E seems stupid. In the same vein, I'd argue that a person buying 2 high end graphics cards should just pay 100 bucks more to get the 4770K and some peace of mind.

Death666Angel - Thursday, October 3, 2013

I'd gladly take a IVB-E, even hex core, but that damned X79 makes me throw up when I just think about spending that much on a platform. :/

von Krupp - Thursday, October 3, 2013

It's not that bad. I picked up an X79 ASRock Extreme6 for $220, which is around what you'll pay for the good Z68/Z77 boards and I still got all of the X79 features.

cpupro - Sunday, October 6, 2013

"I'd gladly take a IVB-E, even hex core, but that damned X79 makes me throw up when I just think about spending that much on a platform. :/"

And be screwed.

"von Krupp - Thursday, October 03, 2013

It's not that bad. I picked up an X79 ASRock Extreme6 for $220, which is around what you'll pay for the good Z68/Z77 boards and I still got all of the X79 features."

Tell that to owners of the original, not-so-cheap Intel DX79SI motherboards. They need to buy a new motherboard for an IVB-E CPU; there's no UEFI update like other manufacturers provide.

HisDivineOrder - Thursday, October 3, 2013

Not if they actually bought one when it was more expensive, then waited until these long cycles allowed you to go and buy a second one on the cheap (i.e., a 670 when they were $400, then another when they were $250).

althaz - Thursday, October 3, 2013

Except that you might need the two or four graphics cards to get good enough performance, whereas there's often no real performance benefit to more than four cores (for gaming). Take StarCraft 2, a game which can bring any CPU to its knees: the game runs on one core, with AI and some other stuff offloaded to a second core. This is a fairly common way for games to work, as it's easier to make them this way.

Jon Tseng - Thursday, October 3, 2013

<sigh> it was so much easier back in the day when you could just overclock a Q6600 and job done. :-p

JlHADJOE - Thursday, October 3, 2013

You can still do the same thing today with the 3/4930k. Back in the day the Q6600 was basically the 2nd tier HEDT SKU, much like the 4930k is today, perhaps even higher considering the $851 launch price.

rygaroo - Thursday, October 3, 2013

I still run an O.C. Q6600 :) but my GPU just died (8800GTS 512MB). Do you suspect that the lack of fps on Civ V for the Q9400 is due more to the motherboard limitations of PCIE 1.1 or more to the shortcomings of an old architecture? I don't want to spend a lot of money on a new high end GPU if my Q6600 would be crippling it... but my mobo has PCIE 2.0 x16 so it's not a real apples to apples comparison w/ the shown Q9400 results.

JlHADJOE - Friday, October 4, 2013

I tested for that in the FFIV benchmark. I had PrecisionX running and logging stuff in the background while I ran the benchmark. It turned out the biggest FPS drops coincided with the lowest GPU utilization, and that pretty much nailed the fact that my Q6600 @ 3.0 was severely bottlenecking the game.

Tried it again with CPU-Z, and indeed the FPS drops aligned with high CPU usage.