Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST

Posted in: Smartphones, camera, Android, Mobile

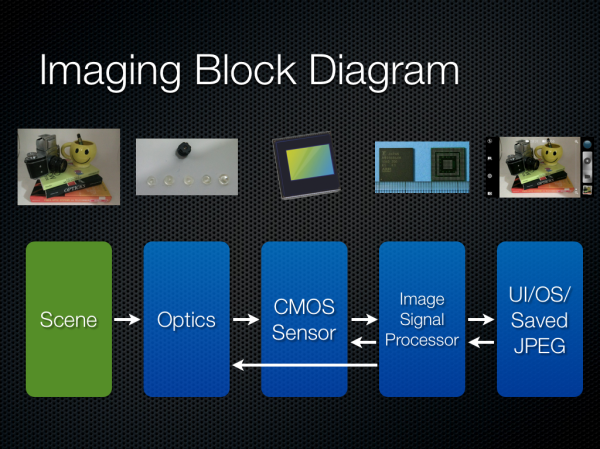

The Imaging Chain

Since we’re talking about a smartphone, we must understand the imaging chain, shown here as a block diagram, and how the blocks work together. There’s a multiplicative effect on quality as we move through the system from left to right. Good execution on the optical system can easily be undone by poor execution on the ISP, for example. I put arrows going left to right from some blocks since there’s a closed loop between ISP and the rest of the system.
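
To make the multiplicative effect concrete, here is a minimal sketch that treats each block as a linear stage with its own contrast transfer (MTF) at one spatial frequency, so the system value is the product of the stage values. That linear model is an assumption for illustration (real ISPs are nonlinear), and the stage names and numbers are hypothetical, not measurements from any device.

```python
from math import prod


def system_mtf(stage_mtfs):
    """Cascade per-stage MTF values (0..1) at one spatial frequency.

    Assumes each stage behaves as a linear, shift-invariant system, in which
    case the end-to-end MTF is the product of the stage MTFs.
    """
    for name, value in stage_mtfs.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"MTF for {name!r} must be between 0 and 1")
    return prod(stage_mtfs.values())


# Hypothetical values: same optics and sensor, different ISP quality.
good_chain = {"optics": 0.80, "sensor": 0.70, "isp": 0.90}
poor_isp_chain = {"optics": 0.80, "sensor": 0.70, "isp": 0.40}

print(f"good ISP: {system_mtf(good_chain):.3f}")
print(f"poor ISP: {system_mtf(poor_isp_chain):.3f}")
```

With these made-up numbers, the same optics and sensor deliver less than half the end-to-end contrast once the ISP stage is weak, which is the point of the paragraph above.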

The video block diagram is much the same, but includes an encoder in the chain as well.

Smartphone Cameras: The Constraints

The constraints for a smartphone camera are pretty unique, and I want to emphasize just how difficult a problem this is for OEMs. Industrial design and size constraints are pretty much the number one concern: everyone wants a thin device with no camera bump or protrusion, yet the camera module is often the thickest part of the device. There’s no getting around physics here, unfortunately. There’s also the matter of cost, since in a smartphone the camera is just one of many functions. Material constraints due to the first bullet point and manufacturing (plastic injection molded aspherical shapes) also make smartphone optics unique. All of this then has to image onto tiny pixels.

The first set of constraints is material choice. With some exceptions from Nokia, the vast majority of optics that go into these tiny camera modules are plastic. Generally there are around 2 to 5 elements in the system, and you’ll see a P afterwards for plastic. There aren’t too many optical plastics around to choose from either, but luckily enough one can form a doublet with PMMA as something of a crown (low dispersion) and polystyrene as a flint (high dispersion) to cancel chromatic aberration. You almost always see some doublet get formed in these systems. Other features of a smartphone camera are obvious but worth stating: they are almost always fixed focal length and fixed aperture, with no shutter, sometimes with an ND (neutral density) filter, and generally not very low F-number. In addition, to keep modules thin, focal length is usually very short, which results in wide angle images with lots of distortion. Ideally I think most users want something between 35 mm and 50 mm in 35mm equivalent numbers.
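
As a rough illustration of how a crown and flint pair cancels chromatic aberration, the sketch below applies the textbook thin-lens achromat condition: for two thin lenses in contact with powers φ1 and φ2 and Abbe numbers V1 and V2, the pair is achromatic when φ1/V1 + φ2/V2 = 0. The Abbe numbers used (about 57.4 for PMMA and 30.9 for polystyrene) are approximate catalog figures and the 4 mm focal length is hypothetical. Real smartphone doublets are thick, aspherical, and air-spaced, so treat this only as a first-order sketch.

```python
def achromat_powers(total_focal_length, abbe_crown, abbe_flint):
    """Split a target focal length between a crown and a flint element.

    Thin-lenses-in-contact model: the powers sum to the total power and
    satisfy crown_power / abbe_crown + flint_power / abbe_flint == 0.
    Returns (crown_power, flint_power) in inverse units of the focal length.
    """
    if abbe_crown == abbe_flint:
        raise ValueError("an achromat needs two different Abbe numbers")
    total_power = 1.0 / total_focal_length
    delta = abbe_crown - abbe_flint
    crown_power = total_power * abbe_crown / delta
    flint_power = -total_power * abbe_flint / delta
    return crown_power, flint_power


# Approximate Abbe numbers (assumed): PMMA ~57.4, polystyrene ~30.9.
# The 4 mm system focal length is a hypothetical example value.
crown_power, flint_power = achromat_powers(4.0, 57.4, 30.9)
print(f"PMMA element focal length:        {1.0 / crown_power:.2f} mm")
print(f"polystyrene element focal length: {1.0 / flint_power:.2f} mm")
```

The result is a positive PMMA element that is stronger than the whole system needs to be, followed by a weaker negative polystyrene element, the same positive-then-negative pattern you see at the front of many of these designs.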

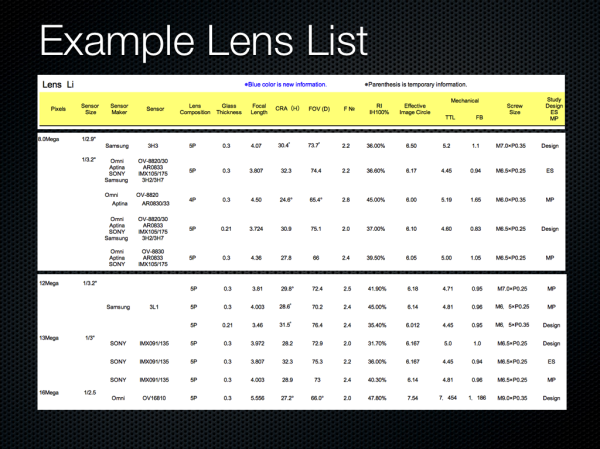

I give an example lens catalog from a manufacturer; you can order these systems premade and designed for a particular sensor. We can see the different metrics of interest: thickness, chief ray angle, field of view, image circle, and so on.
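
Two of those catalog metrics relate directly to focal length. The sketch below uses the ideal rectilinear (distortion-free) relation, full field of view = 2·atan(d / 2f) for image circle diameter d and focal length f, and scales focal length to 35mm equivalent by the ratio of the full-frame diagonal (36 mm by 24 mm) to d. The 4 mm focal length and 6 mm image circle are hypothetical values, not taken from the catalog shown, and real modules with heavy distortion will deviate from this.

```python
import math

# Diagonal of a 36 mm x 24 mm full-frame sensor, about 43.27 mm.
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)


def diagonal_fov_deg(focal_length_mm, image_circle_mm):
    """Full diagonal field of view in degrees for an ideal rectilinear lens."""
    return math.degrees(2.0 * math.atan(image_circle_mm / (2.0 * focal_length_mm)))


def equivalent_focal_length_mm(focal_length_mm, image_circle_mm):
    """35mm equivalent focal length, scaling by the ratio of diagonals."""
    return focal_length_mm * FULL_FRAME_DIAGONAL_MM / image_circle_mm


# Hypothetical module: 4 mm focal length imaging onto a 6 mm image circle.
print(f"diagonal FOV:    {diagonal_fov_deg(4.0, 6.0):.1f} degrees")
print(f"35mm equivalent: {equivalent_focal_length_mm(4.0, 6.0):.1f} mm")
```

For these example numbers the result is roughly a 74 degree diagonal field of view and a 35mm equivalent just under 29 mm, noticeably wider than the 35 mm to 50 mm range mentioned earlier.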

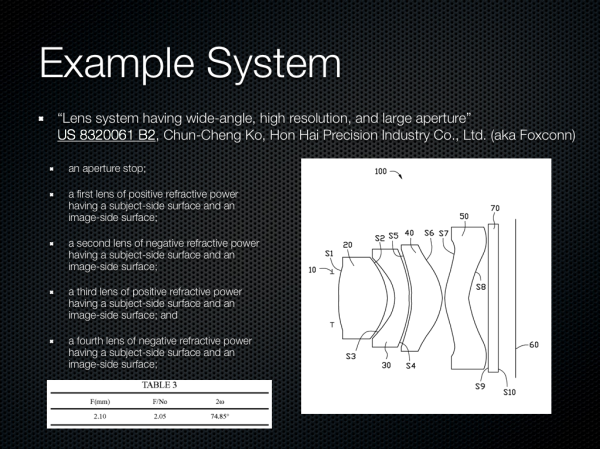

During undergrad a typical homework problem for optical design class would include a patent lens, and then verification of claims about performance. Say what you want about the patent system, but it’s great for getting an idea about what’s out there. I picked a system at random which looks like a front facing smartphone camera system, with wide field of view, F/2.0, and four very aspherical elements.

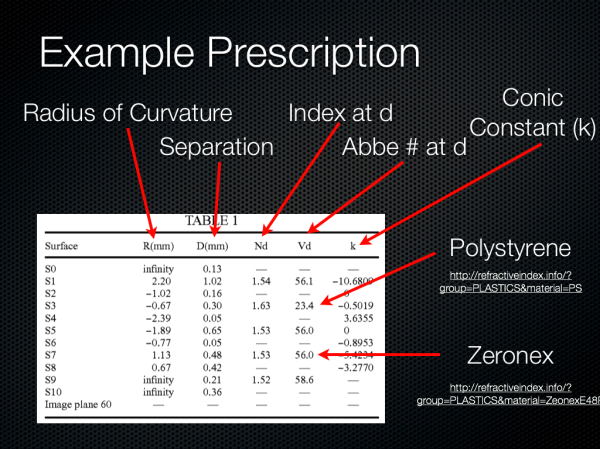

Inside a patent is a prescription for each surface, and the specification here follows the same format as almost all others. The radius of curvature for each surface, distance between surfaces, index, Abbe number (dispersion), and conic constant are supplied. We can see again lots of very aspherical surfaces. There’s also a doublet formed by the first and second elements (a difference in dispersion, and a positive lens followed by a negative one) to correct some chromatic aberration.
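
For readers who want to turn a prescription into a surface shape, the usual even-asphere sag equation is z(r) = c·r² / (1 + sqrt(1 − (1 + k)·c²·r²)) + A4·r⁴ + A6·r⁶ + …, where c = 1/R is the curvature, k is the conic constant, and the A terms are the aspheric coefficients. The sketch below is a minimal implementation of that standard form. Whether a given patent starts its polynomial at r⁴ (as assumed here) or includes other terms varies, so check the patent's own definition; the sample values are made up and not taken from the prescription shown.

```python
import math


def aspheric_sag(r, radius, conic=0.0, coeffs=()):
    """Sag z(r) of an even asphere at radial height r.

    radius: vertex radius of curvature R (use math.inf for a flat surface).
    conic:  conic constant k (0 sphere, -1 paraboloid).
    coeffs: assumed ordering (A4, A6, A8, ...) multiplying r**4, r**6, ...
    """
    c = 1.0 / radius  # 1 / inf evaluates to 0.0, giving a flat base surface
    arg = 1.0 - (1.0 + conic) * c * c * r * r
    if arg < 0.0:
        raise ValueError("r lies outside the surface for this radius and conic")
    sag = c * r * r / (1.0 + math.sqrt(arg))
    for i, a in enumerate(coeffs):
        sag += a * r ** (4 + 2 * i)
    return sag


# Sanity checks against closed forms, with made-up dimensions:
# a sphere of R = 10 at r = 6 has sag R - sqrt(R^2 - r^2) = 2,
# and a paraboloid (k = -1) has sag r^2 / (2R) = 1.8.
print(aspheric_sag(6.0, 10.0))
print(aspheric_sag(6.0, 10.0, conic=-1.0))
```

Evaluating this at a range of r values for each surface, then offsetting by the listed distances between surfaces, is enough to draw a cross section of the lens from the patent table.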

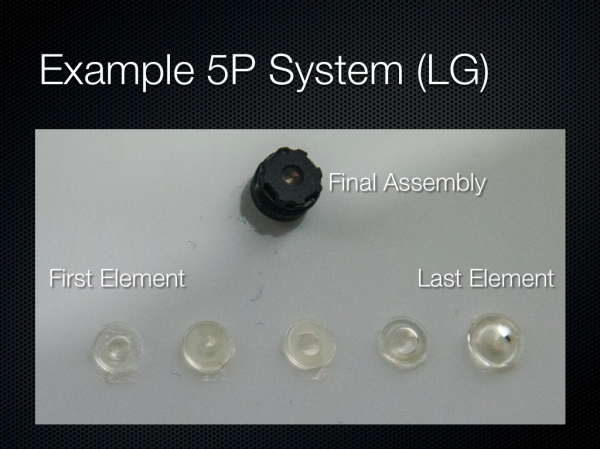

What do these elements look like? Well, LG had a nice breakdown of the 5P system used in its Optimus G, and you can see just what the lenses in the system look like.

60 Comments


Sea Shadow - Friday, February 22, 2013 - link

I am still trying to digest all of the information in this article, and I love it!

It is because of articles like this that I check Anandtech multiple times per day. Thank you for continuing to provide such insightful and detailed articles. In a day and age where other "tech" sites are regurgitating the same press releases, it is nice to see anandtech continues to post detailed and informative pieces.

Thank you!

arsena1 - Friday, February 22, 2013 - link

Yep, exactly this.

Thanks Brian, AT rocks.

ratte - Friday, February 22, 2013 - link

Yeah, got to echo the posts above, great article.

vol7ron - Wednesday, February 27, 2013 - link

Optics are certainly an area the average consumer knows little about, myself included.

For some reason it seems like consumers look at a camera's MP like how they used to view a processor's Hz; as if the higher number equates to a better quality, or more efficient device - that's why we can appreciate articles like these, which clarify and inform.

The more the average consumer understands, the more they can demand better products from manufacturers and make better educated decisions. In addition to being an interesting read!

tvdang7 - Friday, February 22, 2013 - link

Same here they have THE BEST detail in every article.

Wolfpup - Wednesday, March 6, 2013 - link

Yeah, I just love in depth stuff like this! May end up beyond my capabilities but none the less I love it, and love that Brian is so passionate about it. It's so great to hear on the podcast when he's ranting about terrible cameras! And I mean that, I'm not making fun, I think it's awesome.

Guspaz - Friday, February 22, 2013 - link

Is there any feasibility (anything on the horizon) to directly measure the wavelength of light hitting a sensor element, rather than relying on filters? Or perhaps to use a layer on top of the sensor to split the light rather than filter the light? You would think that would give a substantial boost in light sensitivity, since a colour filter based system by necessity blocks most of the light that enters your optical system, much in the way that 3LCD projector produces a substantially brighter image than a single-chip DLP projector given the same lightbulb, because one splits the white light and the other filters the white light.

HibyPrime1 - Friday, February 22, 2013 - link

I'm not an expert on the subject so take what I'm saying here with a grain of salt.

As I understand it you would have to make sure that no more than one photon is hitting the pixel at any given time, and then you can measure the energy (basically energy = wavelength) of that photon. I would imagine if multiple photons are hitting the sensor at the same time, you wouldn't be able to distinguish how much energy came from each photon.

Since we're dealing with single photons, weird quantum stuff might come into play. Even if you could manage to get a single photon to hit each pixel, there may be an effect where the photons will hit multiple pixels at the same time, so measuring the energy at one pixel will give you a number that includes the energy from some of the other photons. (I'm inferring this idea from the double-slit experiment.)

I think the only way this would be possible is if only one photon hits the entire sensor at any given time, then you would be able to work out its colour. Of course, that wouldn't be very useful as a camera.

DominicG - Saturday, February 23, 2013 - link

Hi Hlby

photodetection does not quite work like that. A photon hitting a photodiode junction either has enough energy to excite an electron across the junction or it does not. So one way you could make a multi-colour pixel would be to have several photodiode junctions one on top of the other, each with a different "energy gap", so that each one responds to a different wavelength. This idea is now being used in the highest efficiency solar cells to allow all the different wavelengths in sunlight to be absorbed efficiently. However for a colour-sensitive photodiode, there are some big complexities to be overcome - I have no idea if anyone has succeeded or even tried.

HibyPrime1 - Saturday, February 23, 2013 - link

Interesting. I've read about band-gaps/energy gaps before, but never understood what they mean in any real-world sense. Thanks for that :)