3DMark for Windows Launches; We Test It with Various Laptops

by Jarred Walton on February 5, 2013 5:00 AM EST

Initial 3DMark Notebook Results

While we don’t normally run 3DMark for our CPU and GPU reviews, we do like to run the tests for our system and notebook reviews. The reason is simple: we don’t usually have long-term access to these systems, so in six months or a year when we update benchmarks we don’t have the option to go back and retest a bunch of hardware to provide current results. That’s not the case on desktop CPUs and GPUs, which explains the seeming discrepancy. 3DMark has been and will always be a synthetic graphics benchmark, which means the results are not representative of true gaming performance; instead, the results are a ballpark estimate of gaming potential, and as such they will correlate well with some titles and not so well with others. This is the reason we benchmark multiple games—not to mention mixing up our gaming suite means that driver teams have to do work for the games people actually play and not just the benchmarks.

The short story here (TL;DR) is that just as Batman: Arkham City, Elder Scrolls: Skyrim, and Far Cry 3 have differing requirements and performance characteristics, 3DMark results can’t tell you exactly how every game will run—the only thing that will tell you how game X truly scales across various platforms is of course to specifically benchmark game X. I’m also more than a little curious to see how performance will change over the coming months as 3DMark and the various GPU drivers are updated, so with version 1.00 and current drivers in hand I ran the benchmarks on a selection of laptops along with my own gaming desktop.

I tried to include the last two generations of hardware, with a variety of AMD, Intel, and NVIDIA hardware. Unfortunately, there's only so much I can do in a single day, and right now I don't have any high-end mobile NVIDIA GPUs available. Here’s the short rundown of what I tested:

System Details for Initial 3DMark Results

| System | CPU (Clocks) | GPU (Core/RAM Clocks) | RAM (Timings) |
| --- | --- | --- | --- |
| Gaming Desktop | Intel Core i7-965X 4x3.64GHz (no Turbo) | HD 7950 3GB 900/5000MHz | 6x2GB DDR2-800 675MHz@9-9-9-24-2T |
| Alienware M17x R4 | Intel Core i7-3720QM 4x2.6-3.6GHz | HD 7970M 2GB 850/4800MHz | 2GB+4GB DDR3-1600 800MHz@11-11-11-28-1T |
| AMD Llano | AMD A8-3500M 4x1.5-2.4GHz | HD 6620G 444MHz | 2x2GB DDR3-1333 673MHz@9-9-9-24 |
| AMD Trinity | AMD A10-4600M 4x2.3-3.2GHz | HD 7660G 686MHz | 2x2GB DDR3-1600 800MHz@11-11-12-28 |
| ASUS N56V | Intel Core i7-3720QM 4x2.6-3.6GHz | GT 630M 2GB 800/1800MHz; HD 4000@1.25GHz | 2x4GB DDR3-1600 800MHz@11-11-11-28-1T |
| ASUS UX51VZ | Intel Core i7-3612QM 4x2.1-3.1GHz | GT 650M 2GB 745-835/4000MHz | 2x4GB DDR3-1600 800MHz@11-11-11-28-1T |
| Dell E6430s | Intel Core i5-3360M 2x2.8-3.5GHz | HD 4000@1.2GHz | 2GB+4GB DDR3-1600 800MHz@11-11-11-28-1T |
| Dell XPS 12 | Intel Core i7-3517U 2x1.9-3.0GHz | HD 4000@1.15GHz | 2x4GB DDR3-1333 667MHz@9-9-9-24-1T |
| MSI GX60 | AMD A10-4600M 4x2.3-3.2GHz | HD 7970M 2GB 850/4800MHz | 2x4GB DDR3-1600 800MHz@11-11-12-28 |
| Samsung NP355V4C | AMD A10-4600M 4x2.3-3.2GHz | HD 7670M 1GB 600/1800MHz; HD 7660G 686MHz (Dual Graphics) | 2GB+4GB DDR3-1600 800MHz@11-11-11-28 |
| Sony VAIO C | Intel Core i5-2410M 2x2.3-2.9GHz | HD 3000@1.2GHz | 2x2GB DDR3-1333 666MHz@9-9-9-24-1T |

A quick note on the above laptops: I did run several overlapping results (e.g. HD 4000 with dual-core, quad-core, and ULV; A10-4600M with several dGPU options), but I’ve taken the best result on items like the quad-core HD 4000 and Trinity iGPU. The Samsung laptop also deserves special mention as it supports AMD Dual Graphics with HD 7660G and 7670M; my last encounter with Dual Graphics was on the Llano prototype, and things didn’t go so well. 3DMark is so new that I wouldn’t expect optimal performance, but I figured I’d give it a shot. Obviously, some of the laptops in the above list haven’t received a complete review, and in most cases those reviews are in progress.

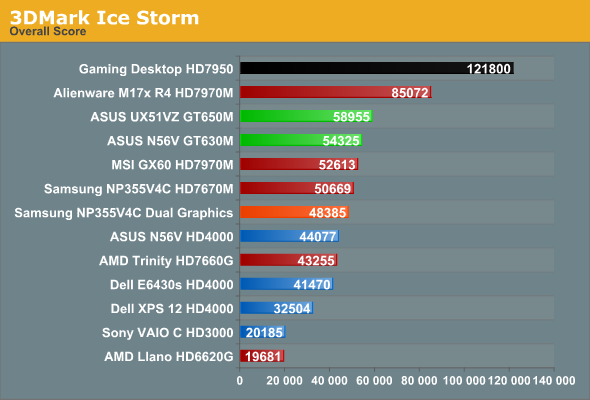

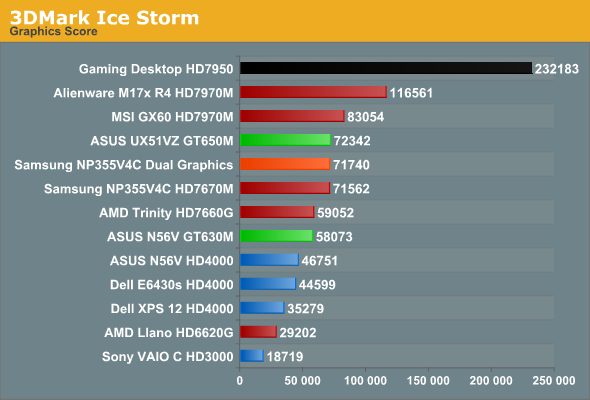

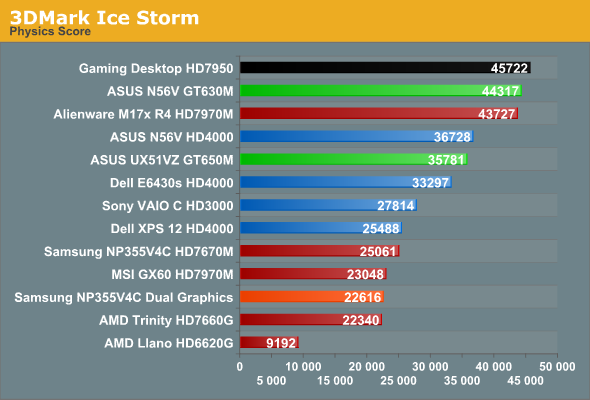

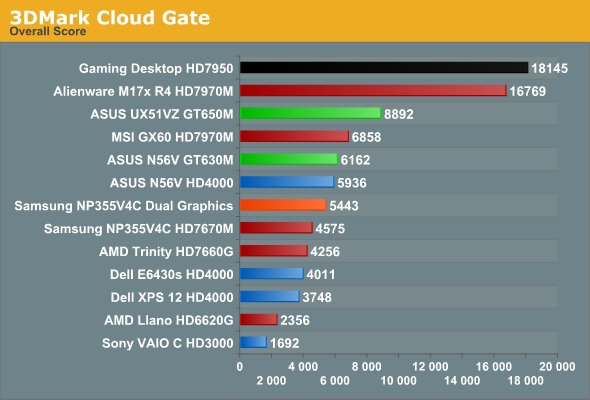

And with that out of the way, here are the results. I’ll start with the Ice Storm tests, followed by Cloud Gate and then Fire Strike.

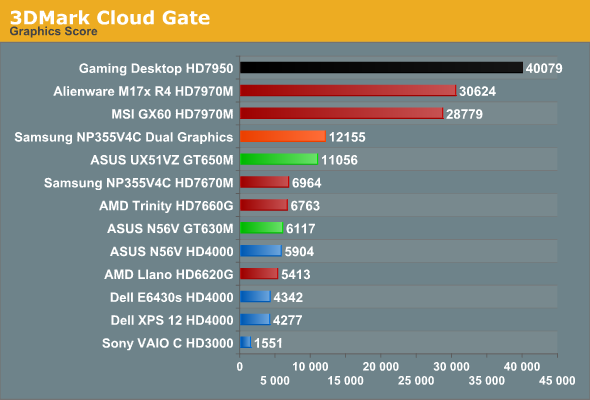

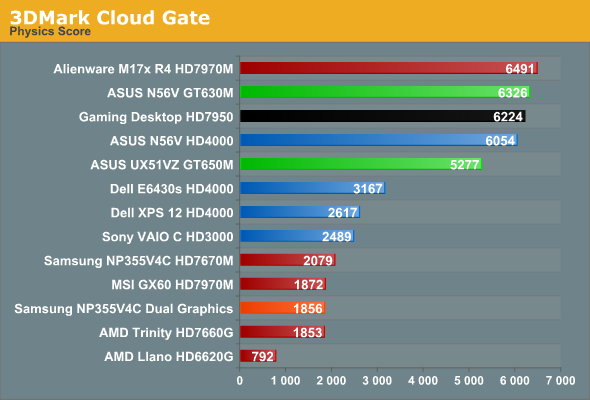

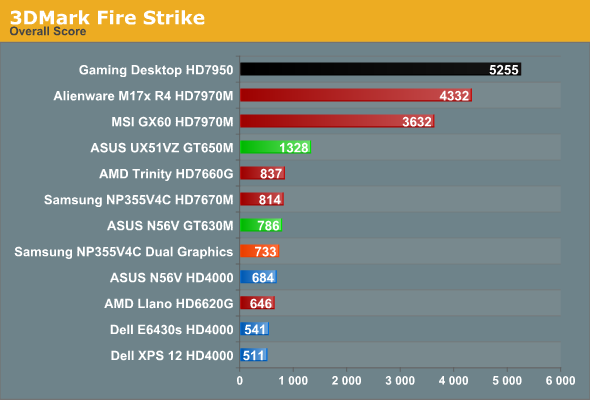

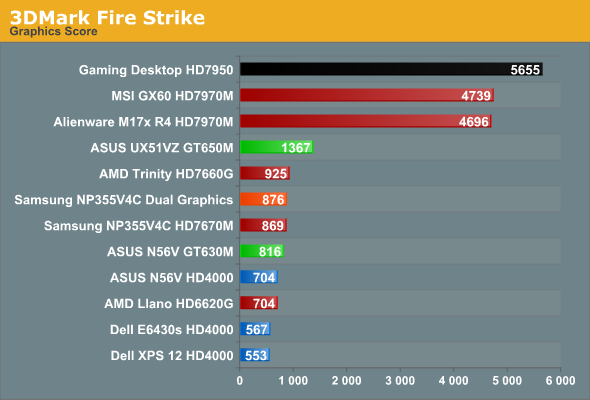

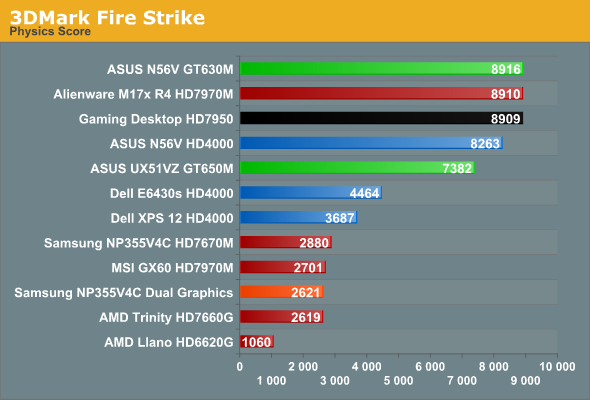

As expected, the desktop typically outpaces everything else, but the margins are a bit closer than what I experience in terms of actual gaming. Generally speaking, even with an older Bloomfield CPU, the desktop HD 7950 is around 30-60% faster than the mobile HD 7970M. Thanks to Ivy Bridge, the CPU side of the equation is actually pretty close, so the overall scores don’t always reflect the difference but the graphics tests do. The physics tests even have a few instances of mobile CPUs besting Bloomfield, which is pretty accurate—with the latest process technology, Ivy Bridge can certainly keep up with my i7-965X.

Moving to the mobile comparisons, at the high end we have two laptops with HD 7970M, one with Ivy Bridge and one with Trinity. I made a video a while back showing the difference between the two systems running just one game (Batman), and 3DMark again shows that with HD 7970M, Trinity APUs are a bottleneck in many instances. Cloud Gate has the Trinity setup get closer to the IVB system, and on the Graphics score the MSI GX60 actually came out just ahead in the Fire Strike test, but in the Physics and Overall scores it’s never all that close. Physics in particular shows very disappointing results for the AMD APUs, which is why even Sandy Bridge with HD 3000 is able to match Llano in the Ice Storm benchmark (though not in the Graphics result).

A look at the ASUS UX51VZ also provides some interesting food for thought: thanks to the much faster CPU, even a moderate GPU like the GT 650M can surpass the 3DMark results of the MSI GX60 in two of the overall scores. That’s probably a bit much, but there are titles (Skyrim for instance) where CPU performance is very important, and in those cases the 3DMark rankings of the UX51VZ and the GX60 are likely to match up; in most demanding games (or games at higher resolutions/settings), however, you can expect the GX60 to deliver a superior gaming experience that more closely resembles the Fire Strike results.

The Samsung Series 3 with Dual Graphics is another interesting story. In many of the individual tests, the second GPU goes almost wholly unused—note that I’d expect updated drivers to improve the situation, if/when they become available. The odd man out is the Cloud Gate Graphics test, which scales almost perfectly with Dual Graphics. Given how fraught CrossFire can be even on a desktop system, the fact that Dual Graphics works at all with asymmetrical hardware is almost surprising. Unfortunately, with Trinity generally being underpowered on the CPU side and with the added overhead of Dual Graphics (aka Asymmetrical CrossFire), there are many instances where you’re better off running with just the 7670M and leaving the 7660G idle. I’m still working on a full review of the Samsung, but while Dual Graphics is now at least better than what I experienced with the Llano prototype, it’s not perfect by any means.

Wrapping things up, we have the HD 4000 in three flavors: i7-3720QM, i5-3360M, and i7-3517U. While in theory the iGPU is clocked similarly, as I showed back in June, on a ULV platform the 17W TDP is often too little to allow the HD 4000 to reach its full potential. Under a full load, it looks like HD 4000 in a ULV processor can consume roughly 10-12W, but the CPU side can also use up to 15W. Run a taxing game where both the CPU and iGPU are needed and something has to give; that something is usually iGPU clocks, but the CPU tends to throttle as well. Interestingly, 3DMark only really seems to show this limitation with the Ice Storm tests; the other two benchmarks give the dual-core i5-3360M and i7-3517U very close results. In actual games, however, I don’t expect that to be the case very often (meaning, Ice Storm is likely the best representation of how HD 4000 scales across various CPU and TDP configurations).
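To put rough numbers on that power squeeze, here is a minimal sketch of the arithmetic using only the approximate figures quoted above (17W TDP, roughly 10-12W for the iGPU, up to 15W for the CPU). The function and constant names are purely illustrative, and the model simply adds peak draws; it assumes nothing about how the chip actually divides its budget.

```python
# Approximate figures quoted in the text for a 17W ULV Ivy Bridge part.
TDP_W = 17.0
IGPU_PEAK_W = (10.0, 12.0)  # rough HD 4000 draw under full load (low, high)
CPU_PEAK_W = 15.0           # rough CPU-side draw under full load


def overshoot(tdp_w: float, cpu_w: float, igpu_w: float) -> float:
    """Watts by which combined peak demand exceeds the TDP (0 if it fits)."""
    return max(0.0, cpu_w + igpu_w - tdp_w)


# Evaluate both ends of the quoted iGPU range.
low = overshoot(TDP_W, CPU_PEAK_W, IGPU_PEAK_W[0])
high = overshoot(TDP_W, CPU_PEAK_W, IGPU_PEAK_W[1])
print(f"Combined peak demand exceeds TDP by {low:.0f}-{high:.0f} W")
```

With those inputs the combined demand lands 8-10W over budget, which is why clocks on one side or the other have to come down when a game loads both the CPU and the iGPU.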

HD 4000 also tends to place quite well with respect to Trinity and some of the discrete GPUs, but in practice that’s rarely the case. GT 630M for instance was typically 50% to 100% (or slightly more) faster than HD 4000 in the ASUS N56V Ivy Bridge prototype, but looking at the 3DMark results it almost looks like a tie. Don’t believe those relative scores for an instant; they’re simply not representative of real gaming experiences. And that is one of the reasons why we continue to look at 3DMark as merely a rough estimate of performance potential; it often gives reasonable rankings, but unfortunately there are times (optimizations by drivers perhaps) where it clearly doesn’t tell the whole story. I’m almost curious to see what sort of results HD 4000 gets with some older Intel drivers, as my gut is telling me there may be some serious tuning going on in the latest build.

69 Comments


IanCutress - Tuesday, February 5, 2013

It is worth noting that FM have integrated a native rendering that scales to your monitor. So Fire Strike on extreme mode is natively rendered at 2560x1440 and then scaled to 1366x768 of the monitor as required. (source: FM_Jarvis on hwbot.org forums)

Time to fire up some desktop four way, see if it scales :D

dj christian - Tuesday, February 5, 2013

Thanks for a great walkthrough! However i am wondering about the Llano and Trinity systems. Are those mobile and if what brand and model do they run on?

JarredWalton - Tuesday, February 5, 2013

All of the systems other than the desktop are laptops. As for the AMD Llano and Trinity, those are prototype systems from AMD. Llano is probably not up to snuff, as I can't update drivers, but the Trinity laptop runs well -- it was the highest scoring A10 iGPU of the three I have right now (the Samsung and MSI being the other two).

Alexvrb - Wednesday, February 6, 2013

I'm annoyed with Samsung for their memory configuration. 2GB+4GB? I'd like to see tests with that same laptop running a decent pair of 4GB DDR3-1600 sticks. On top of this, even if you can configure one yourself online... they gouge so bad on storage and RAM upgrades that it makes more sense for me to buy it poorly preconfigured and upgrade it myself. I could throw the unwanted parts in the trash and STILL come out way cheaper, not that I would do so.

Tuvok86 - Tuesday, February 5, 2013

so, does the 3rd test look "next gen" and how does it look compared to the best engines?

JarredWalton - Tuesday, February 5, 2013

In terms of the graphics fidelity, these aren't games so it's difficult to compare. I actually find even the Ice Storm test looks decent and makes me yearn for a good space simulation, even though it's clearly the least demanding of the three tests. I remember upgrading from a 286 to a 386 just so I could run the original Wing Commander with Expanded Memory [EMS] and upgrade graphics quality! Tie Fighter, X-Wing, Freespace, and Starlancer all graced my hard drive over the years. Cloud Gate and Fire Strike are more strict graphics demos, though I suppose I could see Fire Strike as a fighting game.

The rendering effects are good, and I'm also glad we're not seeing any rehashing of old benchmarks with updated graphics (3DMark05 and 3DMark06 come to mind). Really, though, if we're talking about games it's really the experience as much as the graphics that matter--look at indie games like FTL where the graphics are simplistic and yet plenty of people waste hours playing and replaying the game. Ultimately, I see 3DMark more as a way of pushing hardware to extremes that we won't see in most games for a few years, but as graphics demos they don't have all the trappings of real games.

If I were to compare to an actual game, though, even the world of something like Batman: Arkham City looks better in some ways than the overabundant use of particle effects and shaders in Fire Strike. Not that it looks bad (well, it does at single digit frame rates on an HD 4000, but that's another matter), but making a nice looking demo is far different from making a good game. Shattered Horizon is a great example of this, IMO.

Not sure if any of this helps, but of course you can grab the Basic Edition for free and run it on your own system. Or if you don't have a decent GPU, Futuremark posted videos of all three tests on YouTube I think.

euler007 - Tuesday, February 5, 2013

Reminds me of the days where I had a bunch of batch files to replace my config.sys and autoexec.bat to change my setup depending on what I was doing. I used QEMM back in the days, dunno why I remember that.

JarredWalton - Tuesday, February 5, 2013

QEMM or similar products were necessary until DOS 5.0 basically solved most of the issues. Hahaha... I remember all the Config.SYS tweaking as well. It was the "game before the game"!

HisDivineOrder - Tuesday, February 5, 2013

Kids today have it easy.

Remember when buying anything not Sound Blaster meant PC gaming hell? I mean, midi was the best it got if you didn't have a Sound Blaster. And that's if you were lucky. Sometimes, you'd just nothing. Total non-functional hell.

I remember my PC screen being given its first taste of (bad) AA with smeared graphics because you had to put your 2d card through a pass-through to get 3dfx graphics and the signal degraded some doing the pass-through.

I remember having to actually figure out IRQ conflicts. Without much help from either the system or the motherboard. Just had to suss them out. Or tough luck, dude.

I remember back when you had all these companies working on x86 processors. Or when AMD and Intel chips could be used on the same motherboards. I remember when Intel told us we just HAD to have CPU's that slotted in via slot. Then told us all the cool kids didn't use slot any more a few years later.

I can remember a day way back when that AMD used PR performance ratings to make up for the fact that Intel wanted to push speed over performance per clock. Ironic compared to how the two play out now on this front.

I can remember when Plextor made fantastic optical drives on their own of their own design. And I can remember when we had three players in the GPU field.

I remember the joy of the Celeron 300a and boosting that bad boy on an Abit BH6 to 450mhz. That thing flew faster than the fastest Pentium for a brief time there. I remember Abit.

I remember...

Dear God, at some point there, I started imagining myself as Rutger Hauer in Blade Runner at the end.

"I've seen things you wouldn't believe. Attack ships on fire off the shoulder of Orion. I watched C-beams glitter in the dark near the Tannhäuser Gate. All those moments will be lost in time, like tears in rain. Time to die."

Parhel - Tuesday, February 5, 2013

I remember I bought the Game Blaster sound card maybe 6 months before the Sound Blaster came out, and how disappointed I was when I saw the Sound Blaster at the College of Dupage computer show. God, how I miss going to the computer show with my grandfather. I looked forward to it all month long. And the Computer Shopper magazine, in the early days. And reading newsgroups . . . Gosh, I'm getting old.