The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

Intel HD 4000 Explored

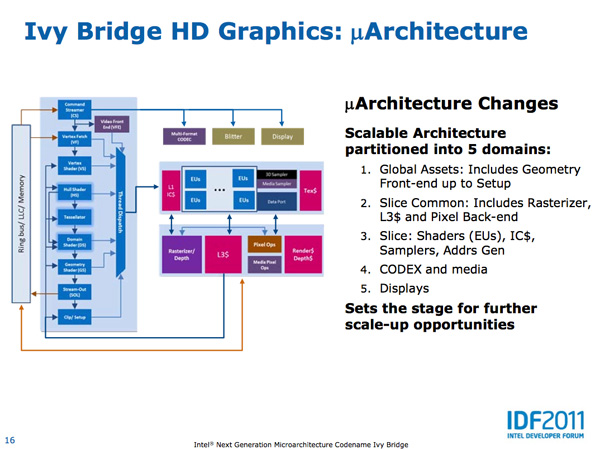

What makes Ivy Bridge different from your average tick in Intel's cycle is the improvement to the on-die GPU. Intel's HD 4000, the new high-end offering, is now equipped with 16 EUs, up from 12 in Sandy Bridge (soon to be 40 in Haswell). Intel's HD 2500 is the replacement for the old HD 2000, and it retains the same number of EUs (6). Efficiency is up at the EU level, as Ivy Bridge is able to dual-issue more instruction combinations than its predecessor. There are a number of other enhancements that we've already detailed in our architecture piece, but a quick summary is below:

— DirectX 11 Support

— More execution units (16 vs 12) for GT2 graphics (Intel HD 4000)

— 2x MADs per clock

— EU can now co-issue more operations

— GPU specific on-die L3 cache

— Faster QuickSync performance

— Lower power due to 22nm
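To put the EU counts quoted above in proportion, here is a minimal Python sketch of the arithmetic. It only compares unit counts taken from the text; the helper names `eu_scaling` and `percent_increase` are made up for illustration, and the ratios say nothing about clock speeds, the co-issue improvements, or measured frame rates.

```python
# EU counts as quoted in the text above. These are unit counts only,
# not measured performance.
EU_COUNTS = {
    "Sandy Bridge GT2": 12,
    "Ivy Bridge GT2 (HD 4000)": 16,
    "Haswell (as quoted)": 40,
}


def eu_scaling(new: int, old: int) -> float:
    """Return the ratio of execution-unit counts, new over old."""
    if old <= 0:
        raise ValueError("old EU count must be positive")
    return new / old


def percent_increase(new: int, old: int) -> float:
    """Return the increase in EU count as a percentage of the old count."""
    return (eu_scaling(new, old) - 1.0) * 100.0


if __name__ == "__main__":
    baseline = EU_COUNTS["Sandy Bridge GT2"]
    for name, count in EU_COUNTS.items():
        ratio = eu_scaling(count, baseline)
        print(f"{name}: {count} EUs, {ratio:.2f}x the Sandy Bridge GT2 count")
```

On paper, the move from 12 to 16 EUs is a one-third increase in unit count; any real-world gain depends on everything else in the list, which is why the benchmarks matter more than this ratio.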

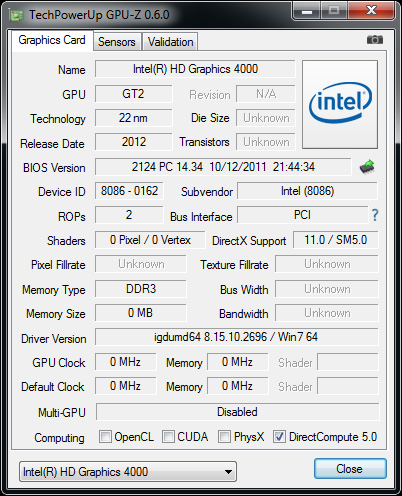

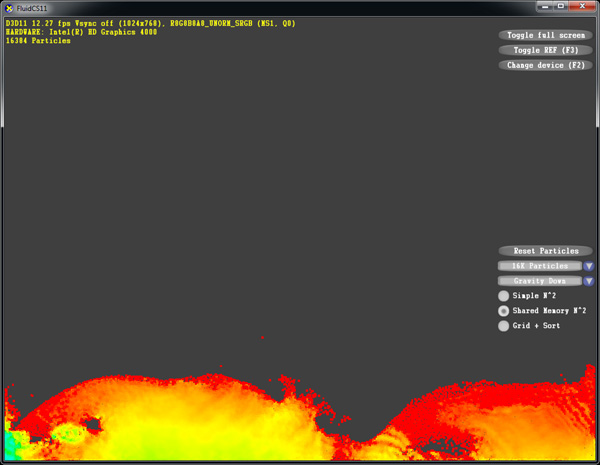

Although OpenCL is supported by the HD 4000, Intel has not yet delivered an OpenCL ICD, so we cannot test functionality and performance. Update: OpenCL is supported in the launch driver; we are looking into why OpenCL-Z thought otherwise. DirectX 11 is alive and well, however.

Image quality is actually quite good, although there are a few areas where Intel falls behind the competition. I don't believe Ivy Bridge's GPU performance is high enough yet for us to start nitpicking image quality, but Intel isn't too far away from being there.

Current state of AF in IVB

Anisotropic filtering quality is much improved compared to Sandy Bridge. There's currently a low precision issue in DirectX 9 that results in the imperfect image above; it has already been fixed in a later driver revision that is awaiting validation. The issue also doesn't exist under DX10/DX11.

IVB with improved DX9 AF driver

Game compatibility is also quite good: not perfect, but still on the right path for Intel. It's also worth noting that Intel has been extremely responsive in finding and eliminating bugs in its drivers whenever we've pointed them out. One problem Intel does currently struggle with is game developers specifically targeting Intel graphics and treating the GPU as a second-class citizen. I suspect this issue will eventually resolve itself as Intel works to improve the perception of its graphics in the market, but until then Intel will have to suffer a bit.

173 Comments


wingless - Monday, April 23, 2012 - link

I'll keep my 2600K.....just kidding

formulav8 - Monday, April 23, 2012 - link

I hope you give AMD even more praise when Trinity is released, Anand. IMO you way overblew how great Intel's IGP stuff is. It's their 4th gen that can't even beat AMD's first gen. Just my opinion :p

Zstream - Monday, April 23, 2012 - link

I agree..

dananski - Monday, April 23, 2012 - link

As much as I like the idea of decent Skyrim framerates on every laptop, and even though I find the HD4000 graphics an interesting read, I couldn't care less about it in my desktop. Gamers will not put up with integrated graphics - even this good - unless they're on a tight budget, in which case they'll just get Llano anyway, or wait for Trinity. As for IVB, why can't we have a Pentium III sized option without IGP, or get 6 cores and no IGP?

Kjella - Tuesday, April 24, 2012 - link

Strategy, they're using their lead in CPUs to bundle it with a GPU whether you want it or not. When you take your gamer card out of your gamer machine it'll still have an Intel IGP for all your other uses (or for your family or the second-hand market or whatever), that's one sale they "stole" from AMD/nVidia's low end. Having a separate graphics card is becoming a niche market for gamers. That's better for Intel than lowering the expectation that a "premium" CPU costs $300, if you bring the price down it's always much harder to raise it again...

Samus - Tuesday, April 24, 2012 - link

As amazing as this CPU is, and as much as I'd love it (considering I play BF3 and need a GTX560+ anyway), I have to agree the GPU improvement is pretty disappointing... After all that work, Intel still can't even come close to AMD's integrated graphics. It's 75% of AMD's performance at best.

Cogman - Thursday, May 3, 2012 - link

There is actually a good reason for both AMD and Intel to keep a GPU on their CPUs no matter what. That reason is OpenCV. This move makes the assumption that OpenCV or programming languages like it will eventually become mainstream. With a GPU coupled to every CPU, it saves developers from writing two sets of code to deal with different platforms.

froggr - Saturday, May 12, 2012 - link

OpenCV is Open Source Computer Vision and runs either way. I think you're talking about OpenCL (Open Computing Language), and even that runs fine without a GPU. OpenCL can use all cores, CPU + GPU, and does not require separate code bases. OpenCL runs faster with a GPU because it's better parallelized.

frozentundra123456 - Monday, April 23, 2012 - link

Maybe we could actually see some hard numbers before heaping so much praise on Trinity?? I will be convinced about the claims of 50% IGP improvements when I see them, and also they need to make a lot of improvements to Bulldozer, especially in power consumption, before it is a competitive CPU. I hope it turns out to be all the AMD fans are claiming, but we will see.

SpyCrab - Tuesday, April 24, 2012 - link

Sure, Llano gives good gaming performance. But it's pretty much at Athlon II X4 CPU performance.