ASUS Eee Pad Transformer Prime & NVIDIA Tegra 3 Review

by Anand Lal Shimpi on December 1, 2011 1:00 AM EST

HDMI Output, Controller Compatibility & Gaming Experience

NVIDIA sent along a Logitech Wireless Gamepad F710 with the Eee Pad Transformer Prime to test game controller compatibility. NVIDIA claims the Nintendo Wiimote, wireless PS3, wired Xbox 360 and various other game controllers will work with Tegra 3 based devices courtesy of NVIDIA's own driver/compatibility work. The Logitech controller worked perfectly: all I had to do was put batteries in the device and plug the USB receiver into the Prime's dock; no other setup was necessary. Note that this same controller actually worked with the original Transformer as well, although there seemed to be some driver/configuration issues that caused unintended inputs there.

By default the Logitech controller navigates the Honeycomb UI just fine. You can use the d-pad to move between icons or home screens, and the start button brings up the apps launcher. The X button acts as a tap/click on an icon (yes, NVIDIA managed to pick a button that's not what Sony or Microsoft use as the accept button - I guess it avoids confusion or adds more confusion depending on who you ask).

Game compatibility with a third-party controller varies. NVIDIA preloaded a ton of Tegra Zone games on the Prime for me to get a good experience of what the platform has to offer. Shadowgun worked just like you'd expect it to, with the two thumbsticks independently controlling movement and aiming. Unfortunately the triggers aren't used in Shadowgun; instead you rely on the A button to fire and the B button to reload. Other games would use the d-pad instead of the thumbsticks for movement or use triggers instead of buttons for main actions. It's not all that different from the console experience, but there did seem to be more variation between control configurations than you find on the Xbox 360 or PS3.

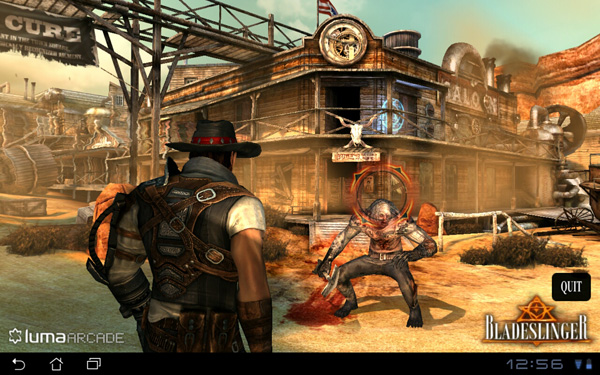

The actual gaming experience ranges from meh to pretty fun depending on the title as you might expect. I'd say I had the most fun with Sprinkle and Riptide, with Bladeslinger looking the best (aside from NVIDIA's own Glowball demo).

Sprinkle is a puzzle game that we've written about in the past. You basically roll around with a fire truck putting out fires before they spread and set huts on fire. It's like a more chill Angry Birds if you're not sick of that comparison. Sprinkle doesn't make use of external controllers; it's touch only.

Riptide is a jetski racing game that does have controller support. There's not a whole lot of depth to the game but it is reminiscent of simple racing games from several years ago. The Tegra version gets an image quality upgrade and overall the game doesn't look too shabby. I probably wasted a little too much time playing this one during the review process. It runs and plays very smoothly on Tegra 3.

Bladeslinger is the best looking title NVIDIA preloaded on the Prime - it's basically a Western themed Infinity Blade knockoff. Image quality and performance are both good, although the tech demo wasn't deep enough to really evaluate the game itself.

For games that support an external controller, the Logitech pad usually just worked. The only exception was Riptide, where I had to enable controller support in the settings menu before I could use the Logitech in game. I don't believe that better third-party controller support alone is going to make Android (or the Prime) a true gaming platform, but it's clear this is an avenue that needs continued innovation. NVIDIA wants to turn these tablets and smartphones into a gaming platform, and letting you hook up a wide variety of controllers to them is a good idea in my book.

HDMI output was easy to enable; I just plugged the Prime into my TV and I got a clone of my display. I didn't have to fiddle with any settings or do anything other than attach a cable. The holy grail? Being able to do this wirelessly. The controller is there, it's time to make it happen with video output as well.

204 Comments

abcgum091 - Thursday, December 1, 2011

After seeing the performance benchmarks, it's safe to say that the iPad 2 is an efficiency marvel. I don't believe I will be buying a tablet until Windows 8 is out.

ltcommanderdata - Thursday, December 1, 2011

I'm guessing the browser and most other apps are not well optimized for quad cores. The question is will developers actually bother focusing on quad cores? Samsung is going with a fast dual core A15 in its next Exynos. The upcoming TI OMAP 4470 is a high clock speed dual core A9 and OMAP5 seems to be a high clock speed dual core A15. If everyone else standardizes on fast dual cores, Tegra 3 and its quad cores may well be a check box feature that doesn't see much use, putting it at a disadvantage.

Wiggy McShades - Thursday, December 1, 2011

If the developer is writing something in Java (most likely native code applications too) it would be more work for them to ensure they are at most using 2 threads instead of just creating as many threads as needed. The number of threads a Java application can create and use is not limited to the number of cores on the CPU. If you created 4 threads and there are 2 cores then the 4 threads will be split between the two cores. The 2 threads per core will take turns executing, with the higher-priority thread getting more execution time than the other. All non-real-time operating systems are constantly pausing threads to let another run; that's how multitasking existed before we had dual core CPUs. The easiest way to write an application that takes advantage of multiple threads is to split up the application into pieces that can run independently of each other, the number of pieces being dependent on the type of application it is. Essentially, if a developer is going to write a threaded application, the number of threads he will use will be determined by what the application is meant to do rather than the cores he believes will be available. The question to ask is what kind of application could realistically use more than 2 threads, and can that application be used on a tablet.

Operaa - Monday, January 16, 2012

Making a responsive UI today most certainly requires you to use threads, so that shouldn't be a big problem. I'd say 2 threads per application is absolutely a minimum. For example, talking about browsing the web, I would imagine it useful to handle the UI in one thread, load the page in another, load pictures in a third and run Flash in a fourth (or more), etc.

UpSpin - Thursday, December 1, 2011

ARM introduced big.LITTLE which only makes sense in Quad or more core systems.

NVIDIA is the only company with a Quad core right now because they integrated this big.LITTLE idea already. Without such a companion core, a quad core consumes too much power.

So I think Samsung released an A15 dual core because it's easier and they are able to release an A15 SoC earlier. They'll work on a Quad core or six or eight core, but then they have to use the big.LITTLE idea, which probably takes a few more months of testing.

And as we all know, time is money.

metafor - Thursday, December 1, 2011

/boggle

big.Little can work with any configuration and works just as well. Even in quad-core, individual cores can be turned off. The companion core is there because even at the lowest throttled level, a full core will still produce a lot of leakage current. A core made with lower-leakage (but slower) transistors can solve this.

Also, big.Little involves using different CPU architectures. For example, an A15 along with an A7.

nVidia's solution is the first step, but it only uses A9's for all of the cores.

UpSpin - Friday, December 2, 2011

I haven't said anything different. I just added that Samsung wants to be one of the first to release an A15 SoC. To speed things up they released a dual core only, because there the advantage of a companion core isn't that big and the leakage current is 'ok'. It just makes the dual core more expensive (additional transistors needed, without such a huge advantage).

But if you want to build a quad core, you must, just as Nvidia did, add such a companion core, else the leakage current is too high. But integrating the big.LITTLE idea probably takes additional time, thus they wouldn't be the first to produce an A15 based SoC.

So to be one of the first, they chose to take the easiest design, a dual core A15. After a few months and additional R&D time they will release a quad core with big.LITTLE and probably a dual core and six core and eight core with big.LITTLE, too.

hob196 - Friday, December 2, 2011

You said: "ARM introduced big.LITTLE which only makes sense in Quad or more core systems"

big.LITTLE would apply to single core systems if the A7 and A15 pairing was considered one core.

UpSpin - Friday, December 2, 2011

Power consumption wise it makes sense to pair an A7 with a single and dual core already.

Cost wise it doesn't really make sense.

I really doubt that we will see some single core A15 SoC with a companion core. And dual core, maybe, but not at the beginning.

GnillGnoll - Friday, December 2, 2011

It doesn't matter how many "big" cores there are, big.LITTLE is for those situations where turning on even a single "big" core is a relatively large power draw.

A quad core with three cores power gated has no more leakage than a single core chip.
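
The leakage argument running through this thread can be put in back-of-the-envelope form. The sketch below is a toy Java model, not a measurement: the leakage figures are made-up units, the class and method names are hypothetical, and it assumes an idealized power-gated core that leaks nothing.

```java
public class LeakageSketch {
    // Hypothetical leakage figures in arbitrary units (assumptions, not data).
    static final double FAST_CORE_LEAKAGE = 10.0;      // one powered "big"/fast core
    static final double COMPANION_CORE_LEAKAGE = 1.0;  // one powered low-leakage core

    /**
     * Total leakage of a chip in this toy model.
     * Power-gated fast cores are assumed to contribute zero leakage.
     */
    static double leakage(int fastCores, int gatedFastCores, boolean companionActive) {
        if (gatedFastCores < 0 || gatedFastCores > fastCores) {
            throw new IllegalArgumentException("gated cores must be between 0 and fastCores");
        }
        int poweredFastCores = fastCores - gatedFastCores;
        double total = poweredFastCores * FAST_CORE_LEAKAGE;
        if (companionActive) {
            total += COMPANION_CORE_LEAKAGE;
        }
        return total;
    }

    public static void main(String[] args) {
        // Quad core with three cores gated versus a single core chip.
        System.out.println("quad, 3 gated:   " + leakage(4, 3, false));
        System.out.println("single core:     " + leakage(1, 0, false));
        // All fast cores gated, only the companion core running.
        System.out.println("companion only:  " + leakage(4, 4, true));
    }
}
```

Under these assumptions, the quad core with three cores gated matches the single core chip, and the only way to go lower is to gate every fast core and run on the companion core, which is the case the companion core exists for.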