Bulldozer for Servers: Testing AMD's "Interlagos" Opteron 6200 Series

by Johan De Gelas on November 15, 2011 5:09 PM EST

TrueCrypt 7.1 Benchmark

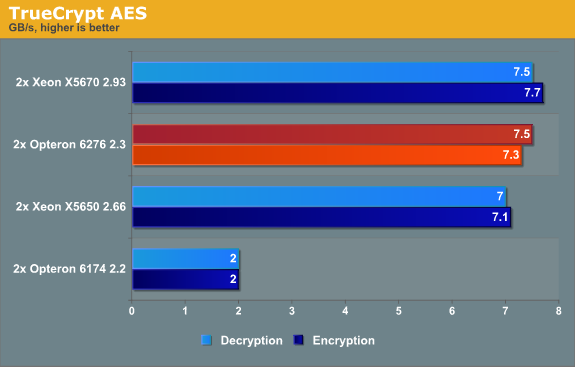

TrueCrypt is a software application used for on-the-fly encryption (OTFE). It is free, open source and offers full AES-NI support. The application also features a built-in encryption benchmark that we can use to measure CPU performance. First we test with the AES algorithm (256-bit key, symmetric).

You can compare those numbers directly with Anand's benchmark here. The Core i7-2600K at 3.4GHz delivers 3.4GB/s and the AMD FX-8150 at 3.6GHz about the same 3.3GB/s. We get about 2.3 times the performance here with four times as many "cores", but at 2.3GHz instead of 3.6GHz.
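The scaling claim above can be checked with a few lines of arithmetic. This is a rough sketch that assumes throughput scales linearly with core count times base clock and ignores Turbo Core and memory effects; the function name is ours, not part of any benchmark tool.

```python
def scaling_efficiency(observed_speedup, core_ratio, clock_new_ghz, clock_ref_ghz):
    """Return (ideal_speedup, efficiency), assuming linear scaling with cores x clock."""
    ideal = core_ratio * (clock_new_ghz / clock_ref_ghz)
    return ideal, observed_speedup / ideal


# Figures from the text: 2.3x the FX-8150's throughput, four times the "cores",
# 2.3GHz base clock instead of 3.6GHz.
ideal, eff = scaling_efficiency(2.3, 4, 2.3, 3.6)
print(f"ideal speedup: {ideal:.2f}x, scaling efficiency: {eff:.0%}")
```

Under those assumptions the ideal speedup is about 2.56x, so the measured 2.3x corresponds to roughly 90% scaling efficiency.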

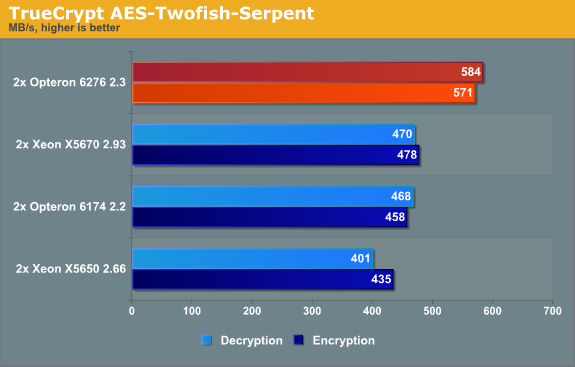

We also test with the heaviest combination of the cascaded algorithms available: Serpent-Twofish-AES.

The combination benchmark is limited by the slowest algorithms: Twofish and Serpent. The huge advantage that the architectures supporting AES-NI (Opteron "Bulldozer" and Xeon "Westmere") had in the AES test has evaporated: the Opteron 6174 keeps up with the best Xeons. The Opteron 6276 can leverage its higher thread count, as this benchmark scales extremely well.

It is good to realize that these benchmarks are not real-world but rather synthetic. It would be better to test a website that does some encrypting in the background or a fileserver with encrypted partitions. In that case the encryption software is only a small part of the total code being run. A large performance (dis)advantage might translate into a much smaller performance (dis)advantage in that real-world situation.

For example, eight times faster encryption resulted in a website with 23% higher throughput and a 40% faster encrypted file (see here). The advantage that the Xeon had in the first benchmark will not be noticeable, and the Opteron's 24% higher performance will translate into a few percentage points. But this is a benchmark where AMD's efforts to get 16 integer cores inside a 115W TDP pay off.
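The reasoning here is essentially Amdahl's law, and a short sketch makes the "few percentage points" estimate concrete. It assumes the website example and this benchmark spend the same fraction of their time in encryption, which is our simplification and not something measured here; the function names are illustrative.

```python
def overall_speedup(fraction, component_speedup):
    """Amdahl's law: total speedup when `fraction` of the runtime gets faster."""
    return 1.0 / ((1.0 - fraction) + fraction / component_speedup)


def implied_fraction(overall, component_speedup):
    """Invert Amdahl's law: runtime fraction implied by an observed overall speedup."""
    return (1.0 - 1.0 / overall) / (1.0 - 1.0 / component_speedup)


# From the text: 8x faster encryption gave a website 23% higher throughput.
f = implied_fraction(1.23, 8.0)

# Apply the same fraction to a 24% encryption advantage.
gain = overall_speedup(f, 1.24) - 1.0
print(f"encryption share of runtime: {f:.1%}, overall gain: {gain:.1%}")
```

With these inputs, encryption accounts for roughly a fifth of the runtime, and a 24% encryption advantage shrinks to an overall gain of about 4%.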

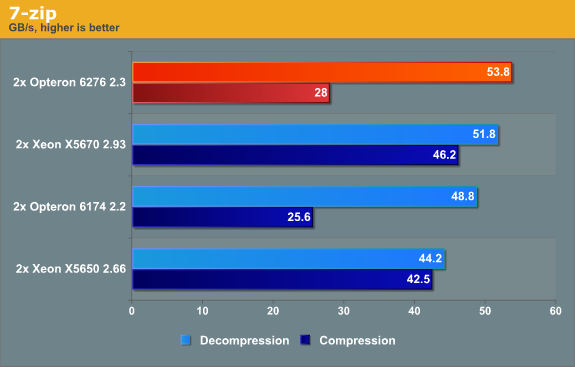

7-Zip 9.2

7-Zip is a file archiver with a high compression ratio. It is open source software, and most of the source code is under the GNU LGPL license.

Compression is more CPU intensive than decompression, and the latter depends a little more on memory bandwidth. When it comes to loads/stores and memory bandwidth, the Opteron 6276 is unbeatable. We've also seen indications that Bulldozer's cache does very well in reads but not so well in writes, and that could account for some of the gap between the compress/decompress results.
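The asymmetry between compression and decompression is easy to observe on any machine. The sketch below uses Python's standard `lzma` module, which wraps liblzma rather than 7-Zip's own code and runs single-threaded, so it is not a substitute for the 7-Zip benchmark; the sample data is made up for illustration.

```python
import lzma
import os
import time


def time_lzma(data: bytes, preset: int = 6):
    """Time one LZMA compress/decompress round trip; return (compress_s, decompress_s, packed_size)."""
    start = time.perf_counter()
    packed = lzma.compress(data, preset=preset)
    compress_s = time.perf_counter() - start

    start = time.perf_counter()
    unpacked = lzma.decompress(packed)
    decompress_s = time.perf_counter() - start

    assert unpacked == data  # the round trip must be lossless
    return compress_s, decompress_s, len(packed)


# Illustrative input: repetitive text plus some incompressible random bytes.
sample = b"Bulldozer for Servers " * 40000 + os.urandom(64 * 1024)
c, d, size = time_lzma(sample)
print(f"compress: {c:.3f}s, decompress: {d:.3f}s, {len(sample)} -> {size} bytes")
```

On typical hardware the compress time should come out well above the decompress time, but the exact ratio depends on the data and the preset.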

Compression performance is partly determined by the quality of the branch predictor: compression code produces more branch mispredictions than usual on mediocre predictors. The Opteron 6276 has a better branch predictor than the Opteron 6174, but the branch misprediction penalty has grown from 12 to 20 cycles. As a result, a single branch-intensive thread runs slower on the newest AMD architecture (see Anand's tests). Luckily, the Opteron 6276 can compensate for this with its 16 threads (vs. 12 threads for the Opteron 6174) and a little bit of help from Turbo Core.
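To see why a better predictor can still lose to a longer pipeline, consider a simple stall model: cycles lost per instruction equal branch frequency times misprediction rate times penalty. The 12 and 20 cycle penalties come from the text; the branch fraction and the 5% misprediction rate below are hypothetical placeholders, not measurements of either Opteron.

```python
def mispredict_cost(branch_fraction, mispredict_rate, penalty_cycles):
    """Average stall cycles per instruction caused by branch mispredictions."""
    return branch_fraction * mispredict_rate * penalty_cycles


def break_even_rate(old_rate, old_penalty, new_penalty):
    """Misprediction rate the new core needs to match the old core's stall cost."""
    return old_rate * old_penalty / new_penalty


# Hypothetical workload: 20% of instructions are branches, 5% mispredicted on the old core.
old_rate = 0.05
new_rate = break_even_rate(old_rate, old_penalty=12, new_penalty=20)
print(f"break-even misprediction rate: {new_rate:.1%} (down from {old_rate:.1%})")
print(f"old stall cost: {mispredict_cost(0.2, old_rate, 12):.3f} cycles/instruction")
```

Whatever the starting rate, the ratio is fixed at 12/20: the new predictor has to eliminate 40% of the old mispredictions just to break even on stall cycles.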

Intel still has the best branch predictors in the industry, and as a result the Xeon is by far the fastest compressor. Overall, the Xeon is the more rounded CPU in this discipline.

106 Comments

DigitalFreak - Tuesday, November 15, 2011 - link

Good to see that CPU-Z correctly reports the 6276 as 8 core, 16 thread, instead of falling for AMD's marketing BS.

N4g4rok - Tuesday, November 15, 2011 - link

If each module possesses two integer cores to a shared floating point core, what's to say that it can't be considered as a practical 16 core?

phoenix_rizzen - Tuesday, November 15, 2011 - link

Each module includes 2x integer cores, correct. But the floating point core is "shared-separate", meaning it can be used as two separate 128-bit FPUs or as a single 256-bit FPU.

Thus, each Bulldozer module can run either 3 or 4 threads simultaneously:

- 2x integer + 2x 128-bit FP threads, or

- 2x integer + 1x 256-bit FP threads

It's definitely a dual-core module. It's just that the number of threads it can run is flexible.

The thing to remember, though, is that these are separate hardware pipelines, not mickey-moused hyperthreaded pipelines.

JohanAnandtech - Tuesday, November 15, 2011 - link

You can get into a long discussion about that. The way that I see it, is that part of the core is "logical/virtual", the other part is real in Bulldozer. What is the difference between an SMT thread and CMT thread when they enter the fetch-decode stages? Nothing AFAIK, both instructions are interleaved, and they both have a "thread tag".

The difference is when they are scheduled: the instructions enter a real core with only one context in the CMT Bulldozer. With SMT, the instructions enter a real core which still interleaves two logical contexts. So the core still consists of two logical cores.

It gets even more complicated when you look at the FP "cores". AFAIK, the FP cores of Interlagos are nothing more than 8 SMT enabled cores.

alpha754293 - Tuesday, November 15, 2011 - link

I think that Johan is partially correct.

The way I see it, the FPU on the Interlagos is this:

It's really a 256-bit wide FPU.

It can't really QUITE separate the ONE physical FPU into two 128-bit wide FPUs, but more probably, in reality, it interleaves them (which is really just code for "FPU-starved").

Intel's original HTT had this as a MAJOR problem, because the test back then can range from -30% to +30% performance increase. Floating-point intensive benchmarks have ALWAYS suffered mostly because suppose you're writing a calculator using ONLY 8-byte (64-bit) double precision.

NORMALLY, that should mean that you should be able to crunch through four DWORDs at the same time. And that's kinda/sorta true.

Now, if you are running two programs, really...I don't think that the CPU, the compiler (well..maybe), the OS, or the program knows that it needs to compile for 128-bit-wide FPUs if you're going to run two instances or two (different) calculators.

So it's resource starved in trying to do the calculation processes at the same time.

For non-FPU-heavy workloads, you can get away with that. For pretty much the entire scientific/math/engineering (SME) community; it's an 8-core processor or a highly crippled 16-core processor.

Intel's latest HTT seems to have addressed a lot of that, and in practical terms, you can see upwards of 30% performance advantage even with FPU-heavy workloads.

So in some cases, the definition of core depends on what you're going to be doing with it. For SME/HPC; it's good cuz it can do 12-actual-cores worth of work with 8 FPUs (33% more efficient), but sucks because unless they come out with a 32-thread/16-core monolithic die; as stated, it's only marginally better than the last. It's just cheaper. And going to get incrementally faster with higher clock speeds.

alpha754293 - Tuesday, November 15, 2011 - link

P.S. Also, like Anand's article about nVidia Optimus:

Context switching even at the CPU level, while faster, is still costly. Perhaps maybe not nearly as costly as shuffling data around; but it's still pretty costly.

Samus - Wednesday, November 16, 2011 - link

Ouch, this is going to be AMD's Itanium. That is, it has architecture adoption problems that people simply won't build around. Maybe less substantial than IA64, but still a huge performance loss because of underutilized integer units.

leexgx - Wednesday, November 16, 2011 - link

think the way CPU-Z is reporting it for BD cpus is correct, each core has 2 FP, so 8 cores and 16 threads is correct

too bad windows does not understand how to spread the load correctly on an amd cpu (windows 7 with HT cpus Intel works fine, spreads the load correctly, SP1 improves that more but for Intel cpus only)

windows 7 sp1 makes bigger use of core parking and gives better cpu use on Intel cpus, as i have been seeing on 3 systems most work loads now stay on the first 2 cores and the other 2 stay parked, on amd side it's still broke with Cool'n'Quiet enabled

Stuka87 - Tuesday, November 15, 2011 - link

So, what is your definition of a core?

Bulldozers do not utilize hyper threading, which takes a single integer core and can at times put two threads into that single integer core. A Bulldozer core has actual hardware to run two threads at the same time. This would suggest there are two physical cores.

Does it perform like an intel 16 core (if there was such a thing), no. But that does not mean that it is not in fact a 16 core device. As the hardware is there. Yes they share an FPU, but that doesn't mean they are not cores.

Filiprino - Tuesday, November 15, 2011 - link

Actually, Bulldozer is 16 cores. It has two dedicated integer units and a floating point unit which can act as two 128 bit units or one 256 bit unit for AVX. So, you can have 2 and 2 per module.

Bulldozer does not use hyperthreading.