NVIDIA's GeForce GTX 580: The SLI Update

by Ryan Smith on November 10, 2010 10:00 AM EST

Normalized Clocks: Separating Architecture & SMs from Clockspeed Increases

While we were doing our SLI benchmarking we got several requests for GTX 580 results with normalized clockspeeds, in order to better separate which performance improvements are due to NVIDIA’s architectural changes and the enabling of the 16th SM, and which are due to the 10% higher clocks. So we’ve quickly run a GTX 580 at 2560 with GTX 480 clockspeeds (700MHz core, 924MHz memory) in order to capture this data. Games that benefit most from the clockspeed bump are going to be memory bandwidth or ROP limited, while games showing the biggest improvements in spite of the normalized clockspeeds are games that are shader/texture limited or benefit from the texture and/or Z-cull improvements.

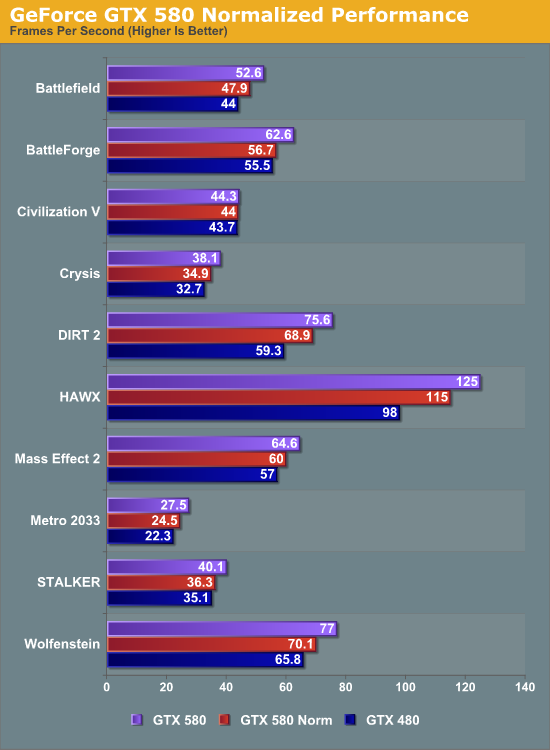

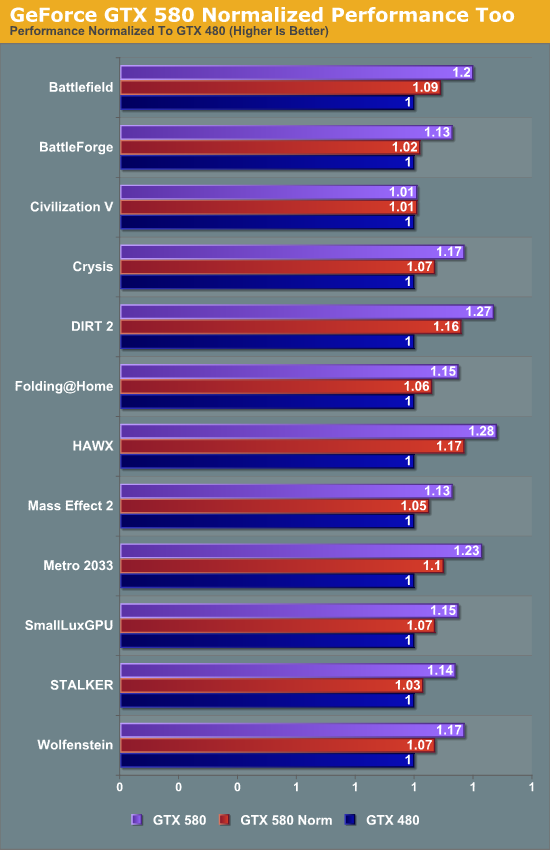

We have two charts here: one with the actual framerates, and a second with all performance numbers normalized to the GTX 480’s performance.

Games showing the lowest improvement in performance with normalized clockspeeds are BattleForge, STALKER, and Civilization V (which is CPU limited anyhow). At the other end are HAWX, DIRT 2, and Metro 2033.

STALKER and BattleForge hold consistent with our theory that games that benefit the least when normalized are ROP or memory bandwidth limited, as both games only see a pickup in performance once we ramp up the clocks. And on the other end HAWX, DIRT 2, and Metro 2033 still benefit from the clockspeed boost on top of their already hefty boost thanks to architectural improvements and the extra SMs. Interestingly Crysis looks to be the paragon game for the average situation, as it benefits some from the arch/SM improvements, but not a ton.
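For readers who want to do the same split on their own numbers, here is a minimal sketch of how the three results per game (GTX 480, GTX 580 at GTX 480 clocks, GTX 580 at stock) separate into an architecture/SM factor and a clockspeed factor. The function name `split_gain` and the framerates passed to it are hypothetical placeholders, not values read off our charts.

```python
def split_gain(fps_480, fps_580_at_480_clocks, fps_580):
    """Split the GTX 580's gain over the GTX 480 into two multiplicative parts.

    Returns (arch_sm, clocks, total) as ratios, where total == arch_sm * clocks.
    """
    # Gain with clocks held equal: architecture changes plus the 16th SM.
    arch_sm = fps_580_at_480_clocks / fps_480
    # Additional gain from running the GTX 580 at its stock clocks.
    clocks = fps_580 / fps_580_at_480_clocks
    # Overall gain, which is the product of the two factors above.
    total = fps_580 / fps_480
    return arch_sm, clocks, total


# Placeholder framerates for illustration only, NOT measured results.
arch_sm, clocks, total = split_gain(40.0, 44.0, 48.4)
print(f"arch/SM: {arch_sm - 1:+.1%}, clocks: {clocks - 1:+.1%}, total: {total - 1:+.1%}")
```

A game whose arch/SM factor sits near 1.0 while its clocks factor carries most of the gain fits the ROP or memory bandwidth limited pattern described above; the reverse points to a shader/texture limited game.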

A subset of our compute benchmarks is much more straightforward here; Folding@Home and SmallLuxGPU improve 6% and 7% respectively from the increase in SMs (theoretical improvement, 6.7%), and then after the clockspeed boost reach 15% faster. From this it’s a safe bet that when GF110 reaches Tesla cards, the performance improvement for Tesla won’t be as great as it was for GeForce, since the architectural improvements were purely for gaming purposes. On the flip side, with so many SMs currently disabled, if NVIDIA can get a 16 SM Tesla out, the performance increase should be massive.
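The theoretical figures are simple enough to check by hand. The sketch below assumes performance scales perfectly with both SM count and clockspeed, which is an idealization rather than something we measured, and it takes the clock gain as the round 10% quoted earlier.

```python
# SM counts: the GTX 580 enables the 16th SM, so the GTX 480 has 15.
SMS_GTX480 = 15
SMS_GTX580 = 16

# Assumption: the round "10% higher clocks" figure from the text.
CLOCK_GAIN = 1.10

# Ideal gain from the extra SM alone, at identical clocks.
sm_gain = SMS_GTX580 / SMS_GTX480

# Ideal gain if SM count and clockspeed both scaled perfectly.
ideal_total = sm_gain * CLOCK_GAIN

print(f"SM gain: {sm_gain - 1:.1%}")
print(f"ideal combined gain: {ideal_total - 1:.1%}")
```

Under these assumptions the combined ceiling comes out a little above the 15% we measured, which is what you would expect if neither benchmark scales perfectly with clockspeed.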

82 Comments


TonyB - Wednesday, November 10, 2010 - link

But can it play Crysis?

Ryan Smith - Wednesday, November 10, 2010 - link

Yes, and with full screen supersample anti-aliasing! ;-)

ImSpartacus - Wednesday, November 10, 2010 - link

*Sigh*

Ryan, what did I tell you about feeding the BCIPC trolls?

This is why we can't have nice things.

But on a more serious note, nice update. I love reading AT's articles! .)

By the way, was there a reason the original article was posted at exactly 9:00 and the SLI update was posted at 10:00? I looked at a few other articles by you and others and most were not posted exactly on the hour. Just curious, thanks! .)

Ryan Smith - Wednesday, November 10, 2010 - link

It's nothing in particular. When I put an article stub in the system it gives the article the time the stub was created, which means when I publish it the system calls it several hours old when it's not. So I rewrite the article's time to match up with the current time or something close to it.

For the GTX 580 article the time listed on it is when the NDA expired and it went up, while for this article it went up a few minutes after the hour so I just put it down on the hour. I could have published this article at any time.

wavetrex - Wednesday, November 10, 2010 - link

Yea, it f***ing finally can!

Minimum frames 50 in full res+AA. Amazing!

Oxford Guy - Wednesday, November 10, 2010 - link

Check out the minimum frame rates in Unigine Heaven at anything lower than 2K res. Worse than 480, even dramatically.

Oxford Guy - Wednesday, November 10, 2010 - link

links:

http://techgage.com/reviews/nvidia/geforce_gtx_580...

http://techgage.com/reviews/nvidia/geforce_gtx_580...

wtfbbqlol - Thursday, November 11, 2010 - link

Well you posted this in the original GTX580 review as well. Thought I'd reply once again here.

The GTX480 minimum framerates, as high as they are at the lower resolutions, are likely a measurement error or anomaly. One only needs to look at the GTX470 to compare. There is not a good reason that the GTX480 can outpace the GTX470 by 100% at 1680x1050.

Oxford Guy - Thursday, November 11, 2010 - link

If you notice, one of the minimum frame rate results posted on this site shows the 480 beating the 580. So much for anomalies.

I'd like to see this examined. Anandtech can easily post minimum frame rates for Unigine and others and answer the question...

wtfbbqlol - Thursday, November 11, 2010 - link

"If you notice, one of the minimum frame rate results posted on this site shows the 480 beating the 580. So much for anomalies."

Well that's yet another reason I think the 480's minimum framerate at 1680x1050 is a bit suspect. The GTX480 can't possibly be faster than the GTX470 AND the GTX580 by such a large amount, right?

But I do agree that it would be good to get more than one source of data for this.