Hybrid Clouds: are we there yet?

by Johan De Gelas on October 18, 2010 2:05 PM EST - Posted in IT Computing

Cloud computing for startups: no infrastructure people necessary

The simplest form of cloud computing is renting virtual machines to avoid hiring infrastructure people. It is very tempting for developer-only startups that simply want to build a software service and rent it out. Buying hardware and getting support from a third-party integrator is a costly and time-consuming endeavour: you do not know how successful your application will be, so you might oversize or undersize the hardware. If you have no infrastructure knowledge in house and have to call in a third-party integrator for every little problem or upgrade, consulting costs will explode quickly. After a few years, your integrator is the only one with intimate knowledge of your server, networking and OS configuration. So even if the integrator is too expensive or gives you lousy service, you cannot switch quickly to another one.

Developers just want an OS to run their software on, and that is what the public clouds deliver. In Amazon EC2 you simply choose an instance: a combination of virtual hardware and an OS template. In a few minutes you have a fully installed Windows or Linux VM in front of you and you can start uploading your software. Making your application available on the internet could not be simpler. The number of instances on EC2 is growing at an incredible pace, so they must be doing something right. According to Rightscale, 10,000 instances were launched per day at the end of 2007. By the end of 2009, that number had multiplied by five!

In theory, using a public cloud should be very cheap. Although the public cloud provider has to make a healthy profit, it can leverage economies of scale. Examples are buying servers in bulk and investing in expensive technologies (cooling, high-efficiency UPS) that only make sense in large deployments.

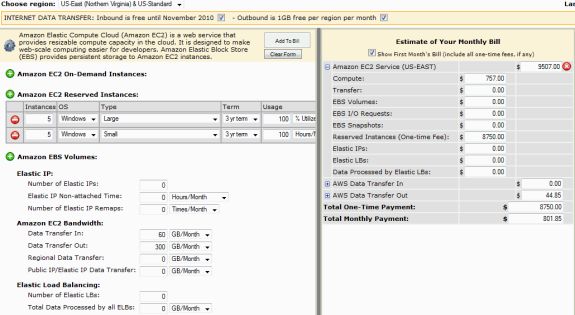

The reality, however, is that renting instances at Amazon EC2 is far from cheap. A quick calculation showed us that, for example, reserving 10 (5 large + 5 small) Windows-based Amazon EC2 instances costs about $19,000 per year (Tip: Linux instances cost a lot less!). The one-time fee ($8,750) alone costs more than a fast dual-socket server plus its yearly electricity bill. As you can easily run 10 VMs on one server, it is clear that adopting an Amazon-based infrastructure is far from a no-brainer if you have the expertise in house.


The cost savings must come from reducing the number of infrastructure professionals or third-party consultants that you hire.
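To make that trade-off concrete, here is a minimal sketch of the break-even arithmetic. Only the $19,000 yearly EC2 figure comes from the text above. The server price, server lifetime and electricity bill are hypothetical placeholders, and the function name `required_staff_savings` is invented for this illustration. The model is deliberately crude: it spreads the hardware purchase evenly over its lifetime and ignores everything else.

```python
def required_staff_savings(cloud_cost_per_year: float,
                           server_price: float,
                           server_lifetime_years: float,
                           electricity_per_year: float) -> float:
    """Return the yearly staffing savings needed for the cloud to break even.

    Owning hardware is modelled as the purchase price spread evenly over
    the server's lifetime, plus the yearly electricity bill. The result is
    the gap between the cloud bill and that yearly ownership cost.
    """
    if server_lifetime_years <= 0:
        raise ValueError("server_lifetime_years must be positive")
    own_cost_per_year = server_price / server_lifetime_years + electricity_per_year
    return cloud_cost_per_year - own_cost_per_year


# From the article: about $19,000 per year for 10 reserved Windows instances.
CLOUD_COST_PER_YEAR = 19_000.0

# Hypothetical placeholders, NOT taken from the article.
SERVER_PRICE = 6_000.0          # assumed price of one dual-socket server
SERVER_LIFETIME_YEARS = 3.0     # assumed depreciation period
ELECTRICITY_PER_YEAR = 1_000.0  # assumed yearly power cost

savings = required_staff_savings(CLOUD_COST_PER_YEAR, SERVER_PRICE,
                                 SERVER_LIFETIME_YEARS, ELECTRICITY_PER_YEAR)
print(f"Staffing savings needed per year: ${savings:,.0f}")
```

With these placeholder inputs the gap is $16,000 per year, which is the amount the cloud would have to save on staff or consultants before it pays off. Plug in your own hardware and power figures; the conclusion depends heavily on them.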

26 Comments


pjkenned - Monday, October 18, 2010 - link

Stuff is still new but is pretty wow in real life. Clients are based on Android and make that Mitel stuff look like 1990's tech.

Gilbert Osmond - Monday, October 18, 2010 - link

I enjoy and benefit from Anandtech's articles on the larger-picture network & structural aspects of contemporary IT services. I wonder if, as Anandtech's readership age-cohort "grows up" and matures into higher management- and executive-level IT job positions, the demand for articles with this kind of content & focus will increase. I hope so.

AstroGuardian - Tuesday, October 19, 2010 - link

FYI it does to some extent... :) "You can't stop the progress" right?

JohanAnandtech - Tuesday, October 19, 2010 - link

While we get fewer comments on our enterprise articles, they do pretty well. For example, the Server Clash article was in the same league as the latest GeForce and SSD reviews. We can't beat Sandy Bridge previews of course :-).

And while in the beginning of the IT section we got a lot of AMD vs Intel flames, nowadays we get some very solid discussions, right on target.

HMTK - Tuesday, October 19, 2010 - link

Like back then at Ace's? ;-)

rbarone69 - Tuesday, October 19, 2010 - link

You couldn't have said it better! As an IT Director, I find the information that this site gives invaluable to my decision making. Articles like this give me a jumping-off point for thinking outside the box or adding tech I had never heard of to our existing infrastructure.

What's amazing is that we put very little into new equipment and are able to do what cost millions just 10 years ago. We can now offer 99.999% normal availability with only a maximum of 30 minutes of downtime during a full datacenter switch from Toronto to Chicago!

The combination of fast multi-core processors, virtualization tech and cheaper bandwidth has made this type of service available to companies of all sizes. Very exciting times!

FunBunny2 - Monday, October 18, 2010 - link

The problem with Clouding is that systems are built to the lowest common denominator (which is to say, Cheap) hardware. The cutting edge is with SSD storage, and it's not likely that public Clouds are going to spend the money.

Mattbreitbach - Monday, October 18, 2010 - link

I actually see this going forward. I would put money on public cloud hosts offering different storage options, and pricing brackets to match. I also do not believe that many of the emerging cloud environments are being built with the cheapest hardware available. I would be more inclined to think that some of the providers out there are going for high-end clients who are willing to shell out the cash for performance.

mlambert - Monday, October 18, 2010 - link

3PAR, HDS (VSP's) and soon EMC will all have some form of block/page/region level dynamic optimization for auto-tiering between SSD/FC-SAS/SATA. When the majority of your storage is 2TB SATA drives but you still have the hot 3-5% on SSD the costs really come down.

HDS and 3PAR both do it very well right now... with HDS firmly in the lead come next April...

The problem I see is the 100-120km dark fiber sync limitation. Once someone figures out how to stay in sync with 20-40ms latency (or the internets somehow figure out how to reduce latency) we will have some pretty cool "clouds".

rd_nest - Monday, October 18, 2010 - link

Not willing to start another vendor war here :)

Wanted to make a minor correction: EMC already has dynamic sub-LUN block optimization. It is also called FAST (Fully Automated Storage Tiering), like you mentioned. This is in both CLARiiON and V-Max. The implementation is different, but it works almost the same.

Don't you feel 20-40ms is a bit too much? Most database applications, or any famous MS applications, don't like this amount of latency. Though quite subjective, I tend to believe that 10-20ms is what most people want.

Well, I am sure if it is reduced to 10-20ms, people will start asking for 5ms :)