Server Clash: DELL's Quad Opteron DELL R815 vs HP's DL380 G7 and SGI's Altix UV10

by Johan De Gelas on September 9, 2010 7:30 AM EST - Posted in IT Computing, AMD, Intel, Xeon, Opteron

Server number 3: the Quanta QSSC-S4R or SGI Altix UV 10

| Component | Specification |
|---|---|
| CPU | Four Xeon X7560 at 2.26GHz |
| RAM | 16 x 4GB Samsung 1333MHz CH9 |
| Motherboard | QCI QSSC-S4R 31S4RMB0000 |
| Chipset | Intel 7500 |
| BIOS version | QSSC-S4R.QCI.01.00.0026.04052010655 |
| PSU | 4 x Delta DPS-850FB A S3F E62433-004 850W |

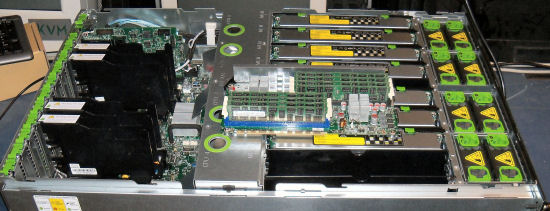

Quanta's 50kg 4U beast, which we reviewed a month ago, represents the quad Xeon 7500 platform. The interesting thing about this server is that the hardware is identical to the SGI Altix UV10. SGI confirms that the motherboard inside is designed by QSSC and Intel, and SGI's own product pictures indeed show an identical server.

Maximum expandability and scalability are the focus of this server: 10 PCIe slots, quad gigabit Ethernet onboard, and 64 DIMM slots. The disadvantage of the enormous number of DIMM slots is the use of eight separate memory boards.

Each easily accessible memory board has two memory buffers. All these buffers require power, as shown by the heatsinks on top of them. The PSUs use a 2+2 configuration, but that is not necessarily a disadvantage. The PSU management logic is smart enough to make sure that the redundant PSUs do not waste any power at all: "Cold Redundancy".

The QSSC-S4R server uses 130W TDP processors, but the X7560 is probably the best, albeit most expensive, choice for this server. The lower power 105W TDP Xeon E7540 has only six cores, less L3 cache (18MB), and runs at 2GHz. So it is definitely questionable whether the performance/watt of the E7540 is better than that of the Xeon X7560, which has 33% more cores and runs at a 13% higher clock.
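To put the comparison above into rough numbers, here is a minimal Python sketch. It is a back-of-the-envelope estimate and not a measurement. It assumes that throughput scales with cores times clock speed and that power draw scales with TDP, neither of which the review claims. The eight-core figure for the X7560 is inferred from the "more cores" comparison against the six-core E7540, and the `Cpu` class and its property names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    """Spec-sheet figures only; none of these are measured values."""
    name: str
    cores: int
    clock_ghz: float
    tdp_w: int

    @property
    def core_ghz(self) -> float:
        # Assumption: aggregate throughput is proportional to cores x clock.
        return self.cores * self.clock_ghz

    @property
    def core_ghz_per_watt(self) -> float:
        # Assumption: TDP stands in for power draw (it is a ceiling, not a reading).
        return self.core_ghz / self.tdp_w


x7560 = Cpu("Xeon X7560", cores=8, clock_ghz=2.26, tdp_w=130)
e7540 = Cpu("Xeon E7540", cores=6, clock_ghz=2.0, tdp_w=105)

# Relative advantages of the X7560 over the E7540, as fractions.
core_advantage = x7560.cores / e7540.cores - 1
clock_advantage = x7560.clock_ghz / e7540.clock_ghz - 1
tdp_increase = x7560.tdp_w / e7540.tdp_w - 1
per_watt_ratio = x7560.core_ghz_per_watt / e7540.core_ghz_per_watt

print(f"More cores:       {core_advantage:.0%}")
print(f"Higher clock:     {clock_advantage:.0%}")
print(f"Higher TDP:       {tdp_increase:.0%}")
print(f"Core-GHz per TDP watt, X7560 vs E7540: {per_watt_ratio:.2f}x")
```

Under these crude assumptions the X7560 comes out ahead per TDP watt, which is consistent with the doubt expressed above about the E7540. Real workloads, the cache size difference, and actual power draw under load could shift the result in either direction.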

51 Comments


jdavenport608 - Thursday, September 9, 2010 - link

Appears that the pros and cons on the last page are not correct for the SGI server.

Photubias - Thursday, September 9, 2010 - link

If you view the article in 'Print Format' then it shows correctly. Seems to be an Anandtech issue ... :p

Ryan Smith - Thursday, September 9, 2010 - link

Fixed. Thanks for the notice.

yyrkoon - Friday, September 10, 2010 - link

Hey guys, you've got to do better than this. The only thing that drew me to this article was the name "SGI", and your explanation of their system is nothing. Why not just come out and say "Hey, look what I've got pictures of"? That's about all the use I have for the "article". Sorry if you do not like that, Johan, but the truth hurts.

JohanAnandtech - Friday, September 10, 2010 - link

It is clear that we do not focus on the typical SGI market. But you have noticed that from the other competitors and you know that HPC is not our main expertise, virtualization is. It is not really clear what your complaint is, so I assume that it is the lack of HPC benchmarks. Care to make your complaint a little more constructive?

davegraham - Monday, September 13, 2010 - link

I'll defend Johan here... SGI has basically cornered themselves into the cloud scale market place where their BTO-style of engagement has really allowed them to prosper. If you wanted a competitive story there, the Dell DCS series of servers (C6100, for example) would be a better comparison.

cheers,

Dave

tech6 - Thursday, September 9, 2010 - link

While the 815 is great value where the host is CPU bound, most VM workloads seem to be limited by memory rather than processing power. Another consideration is server (in particular memory) longevity, where the 810 inherits the 910's RAS features while the 815 misses out.

I am not disagreeing with your conclusion that the 815 is great value, but only if your workload is CPU bound and if you are willing to take the risk of not having RAS features in a data center application.

JFAMD - Thursday, September 9, 2010 - link

True that there is a RAS difference, but you do have to weigh the budget differences and power differences to determine whether the RAS levels of the R815 (or even a Xeon 5600 system) are sufficient for your application. Keep in mind that the Xeon 7400 series did not have these RAS features, so if you were comfortable with the RAS levels of the 7400 series for these apps, then you have to question whether the new RAS features are a "must have". I am not saying that people shouldn't want more RAS (everyone should), but it is more a question of whether it is worth paying the extra price up front and the extra price every hour at the wall socket.

For virtualization, the last time I talked to the VM vendors about attach rate, they said that their attach rate to platform matched the market (i.e. ~75% of their software was landing on 2P systems). So in the case of virtualization you can move to the R815 and still enjoy the economics of the 2P world but get the scalability of the 4P products.

tech6 - Thursday, September 9, 2010 - link

I don't disagree but the RAS issue also dictates the longevity of the platform. I have been in the hosting business for a while and we see memory errors bring down 2 year+ old HP blades in alarming numbers. If you budget for a 4 year life cycle, then RAS has to be high on your list of features to make that happen.

mino - Thursday, September 9, 2010 - link

Generally I would agree except that 2yr old HP blades (G5) are the worst way to ascertain commodity x86 platform reliability.

Reasons:

1) inadequate cooling setup (you better keep c7000 input air well below 20C at all costs)

2) FBDIMMs love to overheat

3) G5 blade mobos are a BIG MESS when it comes to memory compatibility => they clearly underestimated the tolerances needed

4) All the points above hold true at least compared to the HS21* and, except for 1), also against the bl465*

Speaking from 3yrs of operating all three boxen in similar conditions. This became clearest to us when building power got cut off and all our BladeSystems went dead within minutes (long before the UPS ran out) while our 5yr old BladeCenter (hosting all infrastructure services) remained online even at 35C (where the temperature plateaued thanks to the dead HPs)

Ironically, thanks to production being dead, we did not have to kill the infrastructure at all, as the UPSes easily lasted the 3 hours needed ...