The World's First 3TB HDD: Seagate GoFlex Desk 3TB Review

by Anand Lal Shimpi on August 23, 2010 12:39 AM EST - Posted in Storage, Seagate, HDDs, GoFlex Desk

The Heat Problem

When I first ripped the hard drive out of its enclosure I realized that there’s very little in the GoFlex design that promotes airflow over the drive. In fact, there only appear to be three places for air to get into or out of the enclosure: a small opening at the top, the two separations that run down the case and the openings at the bottom. The bottom, however, is mostly covered by the dock.

The poorly cooled enclosure becomes a real problem when you stick a high density, 5 platter, 3.5” 7200RPM hard drive in it. I measured the surface temperature of the drive out of the chassis, under moderate load over SATA (sequential writes) at 96F. With the drive in the enclosure, the plastic never got more than warm - 85F. Taking the drive off the dock and pointing an IR thermometer at the SATA connectors I measured 126F.

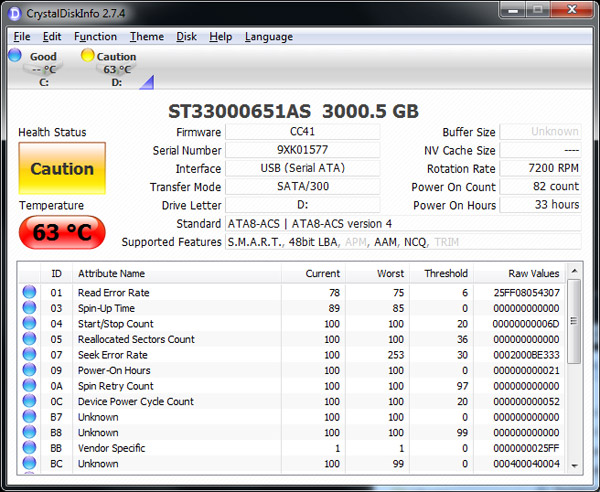

After 2 hours of copying over USB 2.0 I hit 63C on the Seagate GoFlex Desk

The drive’s internal temperatures were far worse. After 3 hours of copying files to the drive over USB 2.0 (a real world scenario since some users may want to move their data over right away) its internal temperature reached 65C, that’s 149F. The maximum internal drive temperature I recorded was 69C or 156.2F.
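The readings in this section mix Fahrenheit (the IR thermometer measurements) and Celsius (the drive's internal sensor). As a minimal sketch, the standard conversion formulas put them all on one scale; the labels in the dictionaries below are just shorthand for the measurements quoted above, not names from any tool.

```python
def c_to_f(celsius):
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9 / 5 + 32


def f_to_c(fahrenheit):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (fahrenheit - 32) * 5 / 9


# Surface readings quoted in Fahrenheit in the article
surface_f = {
    "bare drive, moderate load": 96,
    "enclosure plastic": 85,
    "SATA connectors": 126,
}

# Internal readings quoted in Celsius in the article
internal_c = {
    "after 3 hours over USB 2.0": 65,
    "maximum recorded": 69,
}

for label, temp_f in surface_f.items():
    print(f"{label}: {temp_f}F = {f_to_c(temp_f):.1f}C")

for label, temp_c in internal_c.items():
    print(f"{label}: {temp_c}C = {c_to_f(temp_c):.1f}F")
```

Seen in Celsius, even the hottest external reading (the SATA connectors) sits well below the internal sensor's peak, which is why the warm-but-not-hot plastic is a poor guide to what the drive itself is experiencing.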

Hard drives aren’t fond of very high temperatures, which tend to reduce their lifespan. But in this case, the temperatures got high enough that performance went down as well. Over a USB 3.0 connection you can get > 130MB/s write speed to the drive in the 3TB GoFlex Desk. Once the drive temperature hit the mid-60s (Celsius), sequential write speed dropped to ~50MB/s. The drop in write speed has to do with the increased number of errors while operating at high temperatures. I turned the drive off, let it cool and turned it back on, which restored drive write speeds to 130MB/s. Keep writing to the drive long enough in this reduced performance state and you’ll eventually see errors. I ran a sequential read/write test overnight (HDTach, full test) and by the morning the drive was responding at less than 1MB/s.
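To put those write speeds in perspective, here is a rough back-of-the-envelope sketch of how long a full 3TB sequential write would take at each rate. It assumes decimal units (3TB = 3 x 10^12 bytes, 1MB = 10^6 bytes) and a constant sustained rate across the whole drive, which is a simplification; the function name is purely illustrative.

```python
def hours_to_write(capacity_bytes, rate_mb_per_s):
    """Hours needed to write capacity_bytes at a constant rate in MB/s (decimal MB)."""
    seconds = capacity_bytes / (rate_mb_per_s * 1_000_000)
    return seconds / 3600


CAPACITY_BYTES = 3 * 10**12  # 3TB, decimal (assumption)

# Rates taken from the measurements described above
rates_mb_per_s = {
    "cool drive over USB 3.0": 130,
    "drive in the mid-60s C": 50,
}

for label, rate in rates_mb_per_s.items():
    hours = hours_to_write(CAPACITY_BYTES, rate)
    print(f"{label}: {rate}MB/s -> {hours:.1f} hours to fill 3TB")
```

Under these assumptions a full write takes roughly 6.4 hours at 130MB/s and about 16.7 hours at 50MB/s, so the throttled state more than doubles the time the drive spends under the very load that heats it up.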

Quick copies, occasional use and even live backup of small files worked fine. I never saw the internal drive temperature go above 50C in those cases. It’s the long, sustained sequential reads/writes that really seem to wreak havoc on the 3TB GoFlex Desk.

And unfortunately for Seagate, there’s no solution. You can put a cooler drive in the enclosure but then you lose the capacity sell. Alternatively Seagate could redesign the enclosure, which admittedly looks good but is poorly done from a thermal standpoint.

I asked Seagate about all of this. Their response was that the temperatures seemed high and they were expecting numbers in the 60s or below, but that anything below 70C is fine. Seagate did concede that HDD reliability is impacted at higher temperatures, but the company didn’t share any specifics beyond that.

The GoFlex Desk comes with a 2 year warranty from Seagate. Given that Seagate said the temperatures were acceptable, I’m guessing you’ll have no problems during that 2 year period. Afterwards however, I’d be very curious to see how long this thing will last at those temperatures.

81 Comments


gigahertz20 - Monday, August 23, 2010 - link

The heat problem mentioned in this article makes me wonder why engineers fail to correct issues like these. It can't be that much more expensive to put a fan in the unit along with more ventilation. If it was me, I would have installed a fan inside the enclosure that would only turn on when the unit reaches a certain temperature. That way it still stays quiet, but when it gets heated up to the point where it can affect its life span, the fan will cool it down.

MarkLuvsCS - Monday, August 23, 2010 - link

lol so first they have a bit of an issue with some firmware and such, but now they decide their 3TB drives should double as coffee warmers?!?!?

I used to consider Seagate a pretty good mfg but honestly ever since their 1TB fiasco days I don't even consider them. I certainly don't want to see fewer competitors out there but they really need to get their act in order.

siuol11 - Monday, August 23, 2010 - link

I used to use Seagate exclusively... I had a RAID 0 array of 7200.10 320's, and one failed completely, erasing most of my papers and photos I'd saved from college. I also had a 500GB 7200.11, one of the few to not suffer from the .11's random fail bug - 5 months in to using it, the SATA connector snapped off (there was nothing putting pressure on it, it just snapped. I booted up my computer one morning and it couldn't find the drive). My last Seagate was a 1TB 7200.12, which started getting massive amounts of bad clusters 10 months in to using it. Thankfully it lasted long enough for me to transfer my files.

Since then I've switched to Maxtor... I know you can't really use the retail drives in RAID arrays, but at least none of them have blown up on me.

Belard - Monday, August 23, 2010 - link

RAID-0 is pretty much pointless... and is more susceptible to failures.

If your data was that important, then a backup drive should have been used, ESPECIALLY with a RAID-0 setup.

- MAXTOR is owned by Seagate and both "brands" come off the same assembly lines... Never heard of "Can't raid a retail drive" before. Most OEMs are single drive setups... a drive is a drive.

xded - Monday, August 23, 2010 - link

> Never heard of "Can't raid a retail drive" before. Most OEMs are single drive setups... a drive is a drive.

Not entirely true. The problem is that, in case of errors, the firmware on retail drives will keep trying to read the faulty sector for too long. This delay will make the RAID controller assume that the drive is gone and it will drop it out of the chain. This unnecessarily increases the load on the array due to the subsequent rebuild phase. If then another drive should fail under the increased load, you will most likely lose the whole array, while correcting the unreadable sector in the first place would have been trivial.

This is why most manufacturers also sell "RAID edition" HDDs which, other than a tweaked firmware, also have a considerably higher MTBF.

For further information, see here http://en.wikipedia.org/wiki/Time-Limited_Error_Re...


Belard - Monday, August 23, 2010 - link

Oh... okay. I've not forgotten about enterprise class drives; for a REAL RAID setup, I wouldn't use consumer grade drives.

But for most home users, using off the shelf is usually fine. But still RAID-0 is useless compared to the speed of today's drives. The complexity, the overhead and errors aren't worth it.

Want to improve BOOT up time and startup of your apps, spend $150~$200 for an SSD.

pcfxer - Tuesday, August 24, 2010 - link

Complexity? You must be retarded.

You can geom Mirror, ZFS RAID-Z, HFS+ RAID or use the onboard software RAID.

If you know what you are doing RAID is fine, but thinking RAID will improve game load speeds is lol-eriffic.

siuol11 - Monday, October 11, 2010 - link

Man, I had completely forgotten about this comment till I came back to this thread today. Thanks for the comments guys, I'm aware of all of this. The 2 .10's in RAID 0 were 320's that I was using as data drives, I'm aware that RAID 0 on physical disks doesn't help latency.

I'm fairly sure Maxtor and Seagate have different QC mechanisms, which make all the difference in the world... And after failures of 3 successive generations of their drives, I think I'll pass. I'm still pissed that I lost all that stuff (and yes, yes, I know I should have had a backup. It just wasn't possible at that time).

Wolfpup - Thursday, September 16, 2010 - link

Yikes, this gives me yet MORE reason to avoid "RAID" 0.

adamdz - Monday, August 23, 2010 - link

But you had a backup, right? So you were able to get all your papers and photos back, right?

And, yeah Maxtor is owned by Seagate and it was always garbage.