The RV870 Story: AMD Showing up to the Fight

by Anand Lal Shimpi on February 14, 2010 12:00 AM EST - Posted in GPUs

Process vs. Architecture: The Difference Between ATI and NVIDIA

Ever since NV30 (GeForce FX), NVIDIA hasn’t been first to transition to any new manufacturing process. Instead of dedicating engineers to process technology, NVIDIA chooses to put more of its resources into architecture design. The flipside is true at ATI. ATI is much less afraid of new process nodes and thus devotes more engineering resources to manufacturing. Neither approach is the right one; they both have their tradeoffs.

NVIDIA’s approach means that on a mature process, it can execute frustratingly well. It also means that between major process boundaries (e.g. 55nm to 40nm), NVIDIA won’t be as competitive so it needs to spend more time to make its architecture more competitive. And you can do a lot with just architecture alone. Most of the effort put into RV770 was architecture and look at what it gave ATI compared to the RV670.

NVIDIA has historically believed it should let ATI take all of the risk jumping to a new process. Once the process is mature, NVIDIA would switch over. That’s great for NVIDIA, but it does mean that when it comes to jumping to a brand new process, ATI has more experience. Because ATI puts itself in this situation of having to jump to an unproven process earlier than its competitor, ATI has to dedicate more engineers to process technology in order to mitigate the risk.

In talking to me, Carrell was quick to point out that moving between manufacturing processes is not a transition. A transition implies a smooth gradient from one technology to another. But moving between any major transistor nodes (e.g. 55nm to 45nm, not 90nm to 80nm) is less of a transition and more of a jump. You try to prepare for the jump, you try your best to land exactly where you want to, but once your feet leave the ground there’s very little you can do to control where you end up.

Any process node jump involves a great deal of risk. The trick as a semiconductor manufacturer is how you minimize that risk.

At some point, both manufacturers have to build chips on a new process node; otherwise they run the risk of becoming obsolete. If you’re more than one process generation behind, it’s game over for you. The question is, what type of chip do you build on a brand new process?

There are two schools of thought here: big jump or little jump. The size refers to the size of the chip you’re using in the jump.

Proponents of the little jump believe the following. In a new process, the defect density (number of defects per unit area on the wafer) isn’t very good. You’ll have a high number of defects spread out all over the wafer. In order to minimize the impact of high defect density, you should use a little die.

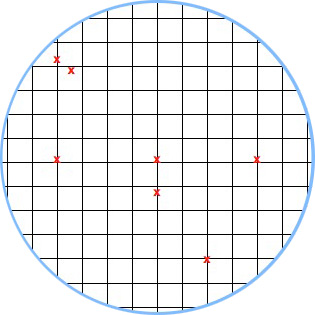

If we have a wafer that has 100 defects across the surface of the wafer and can fit 1000 die on the wafer, the chance that any one die will be hit with a defect is only 10%.

A hypothetical wafer with 7 defects and a small die. Individual die are less likely to be impacted by defects.

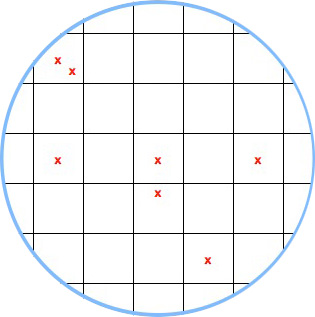

The big jump is naturally the opposite. You use a big die on the new process. Now instead of 1000 die sharing 100 defects, you might only have 200 die sharing 100 defects. If there’s an even distribution of defects (which isn’t how it works), the chance of a die being hit with a defect is now 50%.

A hypothetical wafer with 7 defects and a large die.
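To make the arithmetic above concrete, here is a minimal Python sketch of the two wafer scenarios. It is an illustration only, not how a foundry models yield: the function names are hypothetical, and the second function assumes each defect lands on a uniformly random die, independently of the others. The first function reproduces the simple defects-per-die ratio used in the text, which is really an upper bound because it assumes no two defects ever land on the same die.

```python
def naive_hit_fraction(defects: int, dies: int) -> float:
    """Ratio used in the text: assumes every defect hits a different die."""
    return min(1.0, defects / dies)


def random_hit_fraction(defects: int, dies: int) -> float:
    """Chance that a given die catches at least one defect.

    Assumption (not from the article): each defect independently lands
    on a uniformly random die, so a die escapes one defect with
    probability (1 - 1/dies) and escapes all of them with that value
    raised to the number of defects.
    """
    return 1.0 - (1.0 - 1.0 / dies) ** defects


if __name__ == "__main__":
    DEFECTS = 100  # defect count from the example in the text
    for label, dies in (("little die", 1000), ("big die", 200)):
        naive = naive_hit_fraction(DEFECTS, dies)
        rand = random_hit_fraction(DEFECTS, dies)
        print(f"{label}: naive {naive:.1%}, random placement {rand:.1%}")
```

Under these assumptions the little die comes out at roughly 10% either way, while the big die drops from the naive 50% to about 39%, because some defects pile up on die that were already dead. The gap between little and big die remains large in both models, which is the point of the comparison.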

Based on yields alone, there’s no reason you’d ever want to do a big jump. But there is good to be had from the big jump approach.

The obvious reason to do a big jump is if the things you’re going to be able to do by making huge chips (e.g. outperform the competition) will net you more revenue than selling a larger number of smaller chips would.

The not so obvious, but even more important reason to do a big jump is actually the reason most don’t like the big jump philosophy. Larger die are more likely to expose process problems because they will fail more often. With more opportunity to fail, you get more opportunity to see shortcomings in the process early on.

This is risky to your product, but it gives you a lot of learning that you can then use for future products based on the same process.

132 Comments


simtex - Sunday, February 21, 2010 - link

Excellent article ;) Insider info is always interesting, makes me a little more happy about my -50% AMD stocks, hopefully they will one go up again.

NKnight - Wednesday, February 17, 2010 - link

Great read.

- Friday, February 19, 2010 - link

you should write a (few)book(s); of course in multi eReader formats

asH

- Saturday, February 20, 2010 - link

Nvidia blames sales shortfall on TSMC: http://www.eetimes.com/news/latest/showArticle.jht...

one dot you didnt connect in the article was AMD's foundry experience, which gives AMD a big advantage over NVIDIA; must have been an oversight?

asH

truk007 - Tuesday, February 16, 2010 - link

These articles are why I keep coming here. The other sites could learn a lesson here.

dstigue1 - Tuesday, February 16, 2010 - link

I think the title says it all. But to add I also like the technical level. You can understand it with some cursory knowledge in graphics technology. Wonderfully written.

sotoa - Tuesday, February 16, 2010 - link

Excellent work and very insightful indeed!

juzz86 - Tuesday, February 16, 2010 - link

An amazing read Anand, I can wholeheartedly understand why you'd enjoy your dinners so much. One reader said that these were among the best articles on the site, and I have to agree. Inside looks into developments in the industry like this one are the real hidden gems of reviewing and analysis today. You should be very proud. Thanks again. Justin.

talon262 - Tuesday, February 16, 2010 - link

Hell of an article...congrats to Anand for putting it out there and to the team at ATI for executing on some hard-learned lessons. Since I have a 4850 X2, I'm most likely going to sit Evergreen out (the only current ATI card that specs higher than my 4850 X2 (other than the 4970 X2) is Hemlock/5970 and Cypress/5870 would be a lateral move, more or less); while I run Win7, DX11 compatibility is not a huge priority for me right this moment, but I will use the mid-range Evergreen parts for any systems I'll build/refurb over the next few months.

Northern Islands, that has got me salivating...

(Crossposted at Rage3D)

Peroxyde - Monday, February 15, 2010 - link

Thanks for the great article. Do you have any info regarding ATI's commitment to the Linux platform? I used to see in Linux forums about graphics driver issues that ATI is the brand to avoid. Is it still the case?