AMD’s Radeon HD 5450: The Next Step In HTPC Video Cards

by Ryan Smith on February 4, 2010 12:00 AM EST. Posted in: GPUs

The Almost Perfect HTPC Card

When AMD told us that the Radeon 5000 series would offer bitstreaming audio support, our first thought was “this will make an excellent HTPC card.” Unfortunately, since AMD started the launch at the high end, we’ve had to wait some time for bitstreaming audio to cascade down to the passive cards best suited for an HTPC. With the launch of the 5450, that has finally come to fruition.

This in turn has led us to reexamine the HTPC situation in order to see if the 5450 is really powerful enough for video-only HTPCs. While the Radeon 4350 and 4550 raised the bar for HTPC cards by offering 8-channel LPCM audio, problems ultimately surfaced with their video capabilities. Since deinterlacing and other AVIVO post-processing actions are done by the shader hardware, the limited shading capabilities of these cards meant that AMD couldn’t offer the full suite of AVIVO abilities at once. It was still enough to get perfect scores on the HD HQV test, but these deficiencies became apparent under the wrong situations.

The chief complaint here was that AMD’s best and most computationally intensive deinterlacing mode - Vector Adaptive Deinterlacing - wasn’t always made available for HD resolution video. This has waffled back and forth depending on the driver version, but right now AMD’s drivers lock out this feature (and most of the other AVIVO features) if Smooth Video Playback is enabled. So 4350/4550 owners had to choose between Vector Adaptive Deinterlacing and other features without being able to have it all.

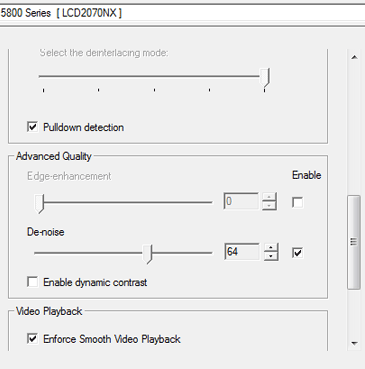

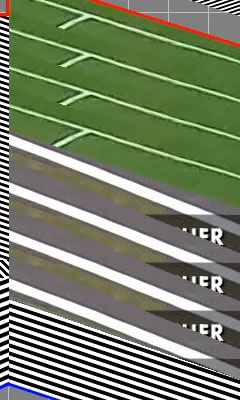

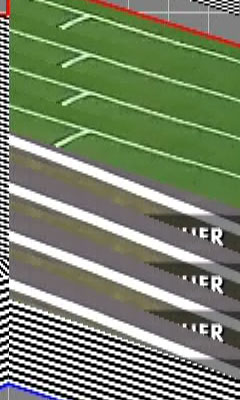

AVIVO Control Panel

When doing the research for this review we came across these complaints, and also a very interesting test for the issue: a specially crafted, heavily interlaced 1080i MPEG-2 file called Cheese Slices, made by blaubart of the AV Science Forum. Cheese Slices amounts to a stress test that has more noise and interlacing artifacts in it than any real video would have, and is more than today’s deinterlacers can handle. It’s an unfair test, but that’s by design, as it does a very good job of highlighting when Vector Adaptive Deinterlacing is in use. With Cheese Slices, we can easily tell whether Vector Adaptive mode or a lesser mode like Motion Adaptive is in use by looking at what happens to the angled lines inside the geometric figures. Smooth lines are Vector Adaptive deinterlaced; jagged lines are deinterlaced with a lesser mode.

Reference quality: non-interlaced

Radeon 5670 Deinterlacing

Radeon 5450 Deinterlacing
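
To illustrate why angled lines are such a sensitive indicator, here is a minimal Python sketch. It is an illustration only and not AMD’s or NVIDIA’s actual algorithm: it assumes the crudest possible approach, rebuilding a full frame from a single field by repeating each line, and every function name in it is hypothetical.

```python
def make_frame(height, width):
    """Progressive frame with a 45-degree line: one lit pixel per row, at x == y."""
    return [[1 if x == y else 0 for x in range(width)] for y in range(height)]


def top_field(frame):
    """Keep only the even-numbered rows, as one field of an interlaced signal does."""
    return frame[0::2]


def line_double(field):
    """Rebuild a full-height frame by repeating each field row twice (assumed fallback)."""
    out = []
    for row in field:
        out.append(list(row))
        out.append(list(row))
    return out


def line_positions(frame):
    """Column of the lit pixel in each row."""
    return [row.index(1) for row in frame]


def row_to_row_steps(positions):
    """Horizontal shift of the line between each pair of consecutive rows."""
    return [b - a for a, b in zip(positions, positions[1:])]


original = make_frame(8, 8)
rebuilt = line_double(top_field(original))

print(row_to_row_steps(line_positions(original)))  # [1, 1, 1, 1, 1, 1, 1]
print(row_to_row_steps(line_positions(rebuilt)))   # [0, 2, 0, 2, 0, 2, 0]
```

In the original frame the line shifts one pixel on every row. After line doubling it alternates between not moving at all and jumping two pixels, which is the stair-step pattern that shows up as jagged edges. Real deinterlacers are far more sophisticated than this, but the sketch shows why a diagonal line is the first thing to break when a deinterlacer has less information to work with.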

For the sake of reference, the Radeon 5670 and higher pass this test. Cards at that performance level have more than enough shader power to process all of the AVIVO effects and provide Vector Adaptive Deinterlacing at the same time, and the same is true of the 4600 series. The 4550, on the other hand, fails this test so long as Smooth Video Playback is enabled, because the driver outright disables Vector Adaptive Deinterlacing.

As for the 5450, the results aren’t quite what we were hoping for. AMD believes that the 5450 is powerful enough to use all of the AVIVO processing features at once, so the drivers allow us to turn on things like Vector Adaptive Deinterlacing and denoising even when Smooth Video Playback is enabled. However, in our tests with Smooth Video Playback enabled, Vector Adaptive Deinterlacing isn’t used even when we force it through the drivers. Everything else works with Smooth Video Playback enabled, just not Vector Adaptive Deinterlacing. If we disable Smooth Video Playback, then Vector Adaptive Deinterlacing will kick in.

We’ve informed AMD of our findings, and they’re still looking into the issue. However, we believe that the problem is ultimately a lack of resources on the 5450 to handle all of this, so we’ll see what they come up with.

It also wouldn’t be fair on our part to harp on this too much, as by no means is the 5450 a bad HTPC card. Everything else works correctly and AMD’s Motion Adaptive mode is quite good. In fact the only practical reason that Vector Adaptive mode matters is that it handles one thing better than Motion Adaptive: angled lines. The lines on sports fields suffer badly from interlacing artifacts, and Motion Adaptive mode can’t completely reconstruct them correctly; you need Vector Adaptive to accomplish this. So the only issue with the 5450 as an HTPC card is that it’s not a great choice for watching sports on a large TV where Motion Adaptive Deinterlacing would result in jagged lines on the field.

Sports field lines: Non-interlaced on the left, 5450 Deinterlaced on the right

It’s a small edge case, but it’s something that keeps the 5450 from being the perfect HTPC card. Most people should be fine with Motion Adaptive deinterlacing, but if you need perfect AVIVO performance from an AMD card, you’re going to need a Redwood card such as the 5670 at this point in time. This stands as something AMD can improve for videophiles for their next series of low-end cards, similar to how they improved things for audiophiles by adding bitstreaming audio support for the 5000-series.

We also quickly tested NVIDIA’s lower-end cards here to see how they fare. NVIDIA doesn’t offer as many post-processing controls as AMD does, instead leaving it up to the drivers to decide on most things. NVIDIA’s hardware has deinterlacing abilities similar to AMD’s; they just aren’t user-selectable.

GeForce 210 Deinterlacing

GeForce GT 220 Deinterlacing

The GeForce 210 fares better than the 5450 with Smooth Video Playback here, but it still produces a rough output. Once we move up to the GT 220, NVIDIA’s deinterlacer fully catches up and perfectly deinterlaces the angled lines on Cheese Slices.

Finally, if you’d like to see the full 1920x1080 resolution screenshots of our tests, you can get them here.

77 Comments


Purri - Monday, March 8, 2010 - link

Ok, so i read a lot of comments that the cheap passive DP-Adapters wont work for a EyeFinity 3 Monitor setup. But, can i use this card for a 3 monitor windows-desktop setup without eyefinity - or do i need an expensive adapter for this too?

I'm looking for a cheapish, passivly(silent) cooled card that supports 3 monitors for windows applications, that has enough performance to play a few old games now and then (like quake3) on 1 monitor.

Will this card work?

waqarshigri - Wednesday, December 4, 2013 - link

yes of course it has amd eyefinity technology .... i played new games on it like nfs run,call of duty MW3, battlefield 3,

plopke - Friday, February 5, 2010 - link

:o what about the 5830 , wasn't it delayed until the 5th. It is suddenly very quiet about it on all techsite. And not launched today.

yyrkoon - Thursday, February 4, 2010 - link

Your charts are all buggered up. Just looking over the charts, in Crysis: Warhead, you test the nvidia 9600GT for performance. Ok fine. Then we move a long to the Power consumption charts, and you omit the 9600GT for the 9500GT ? Better still, we move to both heat tests, and both of these card are omitted. WTH ?! Come on guys, is there something wrong with a bit of consistency ?

Ryan Smith - Friday, February 5, 2010 - link

Some of those cards are out of Anand's personal collection, and I don't have a matching card. We have near-identical hardware that produces the same performance numbers; however we can't replicate the power/noise/temperature data due to differences in cases and environment. So I can put his cards in our performance tests, but I can't use his cards for power/temp/noise testing. It's not perfect, but it allows us to bring you the most data we can.

yyrkoon - Friday, February 5, 2010 - link

Well, the only real gripe that I have here is that I actually own a 9600GT. Since we moved last year, and are completely off grid ( solar / wind ), I would have liked to compare power consumption between the two. Without having to actually buy something to find out. Oh well, nothing can be done about it now I suppose.

I can say however that a 9600GT in a P35 system with a Core 2 E6550, 4GB of ram, and 4 Seagate barracudas uses ~167-168W idle. While gaming, the most CPU/GPU intensive games for me were world in conflict, and Hellgate: London. The two games "sucked down" 220-227W at the wall. This system was also moderately over clocked to get the memory and "FSB" at 1:1. Also these numbers are pretty close, but not super accurate, But as close as I can come eyeballing a kill-a-watt while trying to create a few numbers. The power supply was an 80Plus 500W variant. Manufactured by Seasonic if anyone must know( Antec EarthWATTS 500 ).

yyrkoon - Friday, February 5, 2010 - link

Ah I forgot. The numbers I gave for the "complete" system at the wall included powering a 19" WS LCD that consistently uses 23W.

dagamer34 - Thursday, February 4, 2010 - link

Where's the low-profile 5650?? I don't want to downgrade my 4650 to a 5450 just for HD bitstreaming. =/

Roy2001 - Thursday, February 4, 2010 - link

Video game is on XBOX360 and Wii, so i3-530 for $117 is a better solution for me. It supports bitstream through HDMI too. My 2 cents.

Taft12 - Thursday, February 4, 2010 - link

I apologize if this has been confirmed already, but does this mean we won't see a chip from ATI that falls between 5450 and 5670? There were four GPUs in this range last gen (4350, 4550, 4650, 4670)