Anand's Thoughts on Intel Canceling Larrabee Prime

by Anand Lal Shimpi on December 6, 2009 8:00 PM EST - Posted in GPUs

Larrabee is Dead, Long Live Larrabee

Intel just announced that the first incarnation of Larrabee won't be a consumer graphics card. In other words, next year you're not going to be able to purchase a Larrabee GPU and run games on it.

You're also not going to be able to buy a Larrabee card and run your HPC workloads on it either.

Instead, the first version of Larrabee will exclusively be for developers interested in playing around with the chip. And honestly, though disappointing, it doesn't really matter.

The Larrabee Update at Fall IDF 2009

Intel hasn't said much about why it was canceled other than that it was behind schedule. Intel recently announced that an overclocked Larrabee was able to deliver peak performance of 1 teraflop, something AMD was able to do in 2008 with the Radeon HD 4870. (Update: the two aren't exactly comparable; the point is that Larrabee is outgunned given today's GPU offerings.)

With the Radeon HD 5870 already at 2.7 TFLOPS peak, chances are that Larrabee wasn't going to be remotely competitive, even if it came out today. We all knew this, no one was expecting Intel to compete at the high end. Its agents have been quietly talking about the uselessness of > $200 GPUs for much of the past two years, indicating exactly where Intel views the market for Larrabee's first incarnation.

Thanks to AMD's aggressive rollout of the Radeon HD 5000 series, even at lower price points Larrabee wouldn't have been competitive - delayed or not.
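For reference, a peak figure like the 2.7 TFLOPS quoted above is just arithmetic: the number of shader ALUs, times the floating point operations each can retire per clock, times the clock speed. Below is a minimal sketch of that calculation. The ALU counts and clocks are the commonly published specs for the two Radeon cards and are not taken from this article, and the helper name `peak_tflops` is made up for illustration. No Larrabee number is computed because Intel hasn't published the core clock or configuration behind its 1 teraflop figure.

```python
def peak_tflops(alus: int, clock_mhz: float, flops_per_alu_per_clock: int = 2) -> float:
    """Theoretical peak single-precision throughput in TFLOPS.

    Assumes every ALU retires one multiply-add (counted as 2 FLOPs) per clock,
    which is how vendors typically quote peak numbers. Real workloads land
    well below this ceiling.
    """
    return alus * flops_per_alu_per_clock * clock_mhz * 1e6 / 1e12


# Assumed published specs (not from the article):
# Radeon HD 5870: 1600 stream processors at 850 MHz
# Radeon HD 4870: 800 stream processors at 750 MHz
hd5870 = peak_tflops(1600, 850)
hd4870 = peak_tflops(800, 750)

print(f"Radeon HD 5870 peak: {hd5870:.2f} TFLOPS")
print(f"Radeon HD 4870 peak: {hd4870:.2f} TFLOPS")
```

Peak numbers like these say nothing about how efficiently a given architecture keeps its ALUs busy, which is why the comparison is only a rough guide.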

I've got a general rule of thumb for Intel products. Around 4 - 6 months before an Intel CPU officially ships, Intel's partners will have it in hand and running at near-final speeds. Larrabee hasn't been let out of Intel hands, chances are that it's more than 6 months away at this point.

By then Intel wouldn't have been able to release Larrabee at any price point other than free. It'd be slower at games than sub-$100 GPUs from AMD and NVIDIA, and there's no way that the first drivers wouldn't have some incompatibility issues. To make matters worse, Intel's 45nm process would stop looking so advanced by mid-2010. Thus the only option is to forgo making a profit on the first chips altogether rather than pull an NV30 or R600.

So where do we go from here? AMD and NVIDIA will continue to compete in the GPU space as they always have. If anything this announcement supports NVIDIA's claim that making these things is, ahem, difficult; even if you're the world's leading x86 CPU maker.

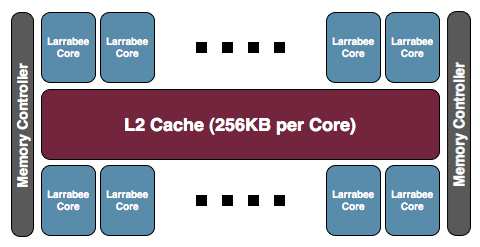

Do I believe the 48-core research announcement had anything to do with Larrabee's cancelation? Not really. The project came out of a different team within Intel. Intel Labs have worked on bits and pieces of technologies that will ultimately be used inside Larrabee, but the GPU team is quite different. Either way, the canceled Larrabee was a 32-core part.

A publicly available Larrabee graphics card at 32nm isn't guaranteed, either. Intel says they'll talk about the first Larrabee GPU sometime in 2010, which means we're looking at 2011 at the earliest. Given the timeframe I'd say that a 32nm Larrabee is likely but again, there are no guarantees.

It's not a huge financial loss to Intel. Intel still made tons of money while Larrabee's development was underway. Its 45nm fabs are old news and paid off. Intel wasn't going to make a lot of money off of Larrabee had it sold the chips on the market, definitely not enough to recoup the R&D investment, and as I just mentioned, using Larrabee sales to pay off the fabs isn't necessary either. Financially it's not a problem, yet. If Larrabee never makes it to market, or fails to eventually be competitive, then it's a bigger problem. If heterogeneous multicore is the future of desktop and mobile CPUs, Larrabee needs to succeed; otherwise Intel's future will be in jeopardy. It's far too early to tell if that's worth worrying about.

One reader asked how this will impact Haswell. I don't believe it will; from what I can tell Haswell doesn't use Larrabee.

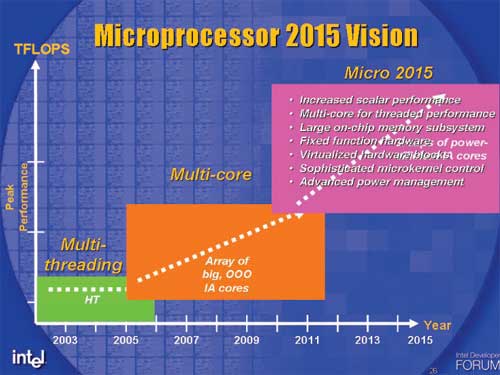

Intel has a different vision of the road to the CPU/GPU union. AMD's Fusion strategy combines CPU and GPU compute starting in 2011. Intel will have a single die with a CPU and GPU on it, but the GPU isn't expected to be used for much compute at that point. Intel's roadmap has the CPU and AVX units being used for the majority of vectorized floating point throughout 2011 and beyond.

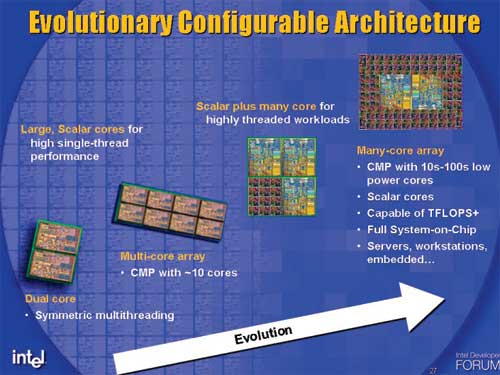

Intel's vision for the future of x86 CPUs announced in 2005, surprisingly accurate

It's not until you get in the 2013 - 2015 range that Larrabee even comes into play. The Larrabee that makes it into those designs will look nothing like the retail chip that just got canceled.

Intel's announcement wasn't too surprising or devastating; it just makes things a bit less interesting.

75 Comments


filotti - Wednesday, December 16, 2009

Guess now we know why Intel cancelled Larrabee...

Coolmike890 - Thursday, December 10, 2009

I find this whole thing laughable. I remember Intel's previous attempts at graphics. I remember people saying the same things back then: "Intel is going to dominate the next gen graphics" etc. It is for this reason I have pretty much ignored everything to do with Larrabee. I figured it would be as successful as their previous attempts were.

The nVidia guy is right. GPU's are hard to make. Look at all of the work they and AMD have put into graphics. Intel doesn't have the patience to develop a real GPU. After awhile they are forced to give up.

Scali - Friday, December 11, 2009

A lot has changed though. Back in the early days, everything was hardwired, and a GPU had very little to do with a CPU. GPUs would only do very basic integer/fixedpoint math, and a lot of the work was still done on the CPU.

These days, GPUs are true processors themselves, and issues like IEEE floating point processing, branch prediction, caching and such are starting to dominate the GPU design.

These are exactly the sort of thing that Intel is good at.

nVidia is now trying to close the gap with Fermi... delivering double-precision performance that is more in line with CPUs (where previous nVidia GPUs and current AMD GPUs have a ratio of about 1:5 for single-precision vs double-precision, rather than 1:2 that nVidia is aiming for), adding true function calling, unified address space, and that sort of thing.

With the introduction of the GeForce 8800 and Cuda, nVidia clearly started to step on Intel's turf with GPGPU. Fermi is another step closer to that.

If nVidia can move towards Intel's turf, why can't Intel meet nVidia halfway? Because some 15 years ago, Intel licensed video technology from Real3D which didn't turn out to be as successful as 3dfx' Voodoo? As if that has ANYTHING at all to do with the current situation...

Coolmike890 - Saturday, December 12, 2009

You make excellent points. I will happily acknowledge that things have changed a lot. However, Intel has not. Like I say, I doubt they have the patience to develop a fully-functional competitive GPU. It takes too long. A couple of business cycles, after all of the grand press releases, someone up in the business towers decides that it's taking too long, and it's not worth the investment.

I think when they decide to do these things, the boardroom people think they'll be #1 inside of a year, and when it doesn't turn out that way, they pull the plug. THAT's why they won't pull it off.

Scali - Monday, December 14, 2009

Well, it's hard to say at this point.

Maybe it was a good decision to not put Larrabee into mass production in its current form. That saves them a big investment on a product that they don't consider to have much of a chance of being profitable.

They haven't cancelled the product, they just want another iteration before they are going to put it on the market.

Who knows, they may get it right on the second take. I most certainly hope so, since it will keep nVidia and AMD on their toes.

erikejw - Thursday, December 10, 2009

"Larrabee hasn't been let out of Intel hands, chances are that it's more than 6 months away at this point."

Some developers have had working silicon (slow) for 6 months.

That is all I will say.

erikejw - Thursday, December 10, 2009

3 months.

I love the editing feature.

WaltC - Tuesday, December 8, 2009

___________________________________________________________

"Do I believe the 48-core research announcement had anything to do with Larrabee's cancelation? Not really. The project came out of a different team within Intel. Intel Labs have worked on bits and pieces of technologies that will ultimately be used inside Larrabee, but the GPU team is quite different."

___________________________________________________________

It wasn't the Larrabee team or any other product development team that canceled Larrabee, from what I gather. It was management, and management sits above the development teams and is the entity responsible for making product cancellations as well as deciding which products go to market. I don't think the 48-core research announcement had anything directly to do with Larrabee's cancellation, either, because that 48-core chip doesn't itself exist yet. But I surely think the announcement was made just prior to the Larrabee cancellation in order to lessen the PR impact of Larrabee getting the axe, and I also don't think Larrabee being canceled just before the week-end was an accident, either.

________________________________________________________

"With the Radeon HD 5870 already at 2.7 TFLOPS peak, chances are that Larrabee wasn't going to be remotely competitive, even if it came out today. We all knew this, no one was expecting Intel to compete at the high end. Its agents have been quietly talking about the uselessness of > $200 GPUs for much of the past two years, indicating exactly where Intel views the market for Larrabee's first incarnation.

Thanks to AMD's aggressive rollout of the Radeon HD 5000 series, even at lower price points Larrabee wouldn't have been competitive - delayed or not."

_______________________________________________________

Well, if Larrabee wasn't intended by Intel to occupy the high end, and wasn't meant to cost >$200 anyway, why would the 5870's 2.7 TFLOPS benchmark peak matter at all? It shouldn't have mattered at all, should it, as Larrabee was never intended as a competitor? Why bring up the 5870, then?

And, uh, what were the "real-time ray tracing" demos Intel put on with Larrabee software simulators supposed to mean? Aside from the fact that the demos were not impressive in themselves, the demos plainly said, "This is the sort of thing we expect to be able to competently do once Larrabee ships," and I don't really think this fits the "low-middle" segment of the 3d-gpu market. Any gpu that *could* do RTRT @ 25-30 fps consistently would be, by definition, anything but "low-middle end," wouldn't it?

That's why I think Larrabee was canceled simply because the product would have fallen far short of the expectations Intel had created for it. To be fair, the Larrabee team manager never said that RTRT was a central goal for Larrabee--but this didn't stop the tech press from assuming that anyway. Really, you can't blame them--since it was the Intel team who made it a part of the Larrabee demos, such as they were.

_______________________________________________________

"It's not until you get in the 2013 - 2015 range that Larrabee even comes into play. The Larrabee that makes it into those designs will look nothing like the retail chip that just got canceled."

_______________________________________________________

Come on, heh...;) If the "Larrabee" that ships in 2013-2015 (eons of time in this business) looks "nothing like the retail chip that just got canceled"--well, then, it won't be Larrabee at all, will it? I daresay it will be called something else entirely.

Point is, if the Larrabee that just got canceled was so uncompetitive that Intel canceled it, why should the situation improve in the next three to five years? I mean, AMD and nVidia won't be sitting still in all that time, will they?

What I think is this: "Larrabee" was a poor, hazy concept to begin with, and Intel canceled it because Intel couldn't make it. If it is uncompetitive now, I can't see how a Larrabee by any other name will be any more competitive 3-5 years from now. I think Intel is aware of this and that's why Intel canceled the product.

ash9 - Wednesday, December 9, 2009

Larrabee Demo:

Did anyone note the judder effects of the flying objects in the distance?

Penti - Monday, December 7, 2009

I really think it's a shame. I was hoping for a discrete Intel Larrabee card. It would be perfect for someone like me who isn't a die-hard gamer but still wants decent performance. I don't think the "software" route is a bad one; people don't seem to realize that that's exactly what AMD/ATi and nVidia are doing. Video cards don't understand DX and OGL. Plus, video cards are for so much more than DX and OGL nowadays. It's shaders that help in other apps, it's OpenCL, it's code written for specific GPUs and so on. Also, Intel's Linux support is rock solid. A discrete GPU from Intel with open source drivers would really be all it can be, something the others wouldn't spend the same amount of resources trying to accomplish. Even the AMD server boards use Intel network chips because Intel can actually write software. But at least we can look forward to Arrandale/Clarkdale / H55. I was also looking forward to something more resembling workstation graphics, but I hope Arrandale/Clarkdale at least helps to bring multimedia to alternative systems, even though that will never fully happen in a patent-ridden world. At least it's easier to fill the gap with good drivers that can handle video. I also see no reason why Apple wouldn't go back to Intel chipsets including IGPs.