Understanding the iPhone 3GS

by Anand Lal Shimpi on July 7, 2009 12:00 AM EST. Posted in Smartphones, Mobile

Superscalar to the Rescue

If deepening the pipeline gives us higher clock speeds and more instructions being worked on at a time, but at the expense of lower performance when things aren’t working optimally, what other options do we have for increasing performance?

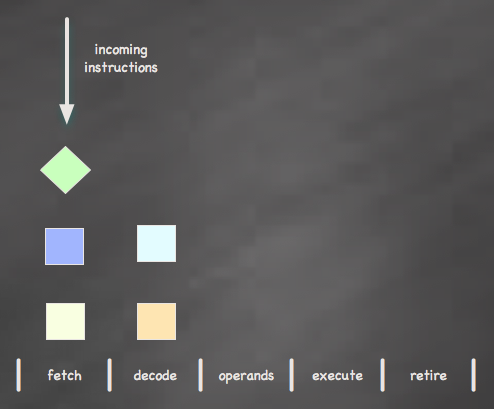

Instead of going deeper, what about making our chip wider? In our previous example only a single instruction could be active at any given stage in the pipeline - what if we removed that limitation?

A superscalar processor is one that allows multiple instructions to be active at any given stage in the pipeline. Through some duplication of resources you can now have two or more instructions at the same stage at the same time. The simplest superscalar implementation is dual-issue, where two instructions can go down the pipe in parallel. Today’s Core 2 and Core i7 processors are four-issue (four instructions go down the pipe in parallel); the high end hasn’t been dual-issue since the days of the original Pentium processor.

The benefits of a superscalar chip are obvious: with a dual-issue design you can potentially double the number of instructions completed per clock. Combine that with a reasonably pipelined, high clock speed architecture and you have the makings of a high performance processor.

The drawbacks are also obvious: enabling a multi-issue architecture requires more transistors, which drives up die size (cost) and power (heat). Only recently have superscalar designs made their way into mobile devices thanks to smaller and cooler switching transistors (e.g. 45nm). You also have to worry even more about keeping the CPU fed with instructions, which means larger caches, faster memory buses and clever architectural tricks to extract as much instruction level parallelism as possible. A dual-issue chip is a waste if you can’t keep it fed consistently.
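To make the "keeping it fed" point concrete, here is a minimal sketch of an in-order core that can issue up to `width` instructions per cycle. The function name `cycles_to_issue`, the one-cycle result latency and the dependency lists are all illustrative assumptions, not a model of any real CPU.

```python
def cycles_to_issue(deps, width):
    """Count cycles for a simplified in-order core to issue every instruction.

    deps[i] is the set of earlier instruction indices whose results
    instruction i needs. Assumption: a result only becomes usable in the
    cycle after its producer issued.
    """
    issue_cycle = {}   # instruction index -> cycle in which it issued
    next_instr = 0
    cycle = 0
    while next_instr < len(deps):
        cycle += 1
        issued = 0
        while issued < width and next_instr < len(deps):
            # In-order issue: stop at the first instruction that still
            # waits on a result produced in this same cycle.
            if any(issue_cycle[d] >= cycle for d in deps[next_instr]):
                break
            issue_cycle[next_instr] = cycle
            next_instr += 1
            issued += 1
    return cycle


# Eight instructions with no dependencies between them.
independent = [set() for _ in range(8)]
# Eight instructions where each one needs the result of the one before it.
chain = [set()] + [{i - 1} for i in range(1, 8)]

print(cycles_to_issue(independent, width=1))  # 8
print(cycles_to_issue(independent, width=2))  # 4
print(cycles_to_issue(chain, width=2))        # 8
```

With independent instructions the dual-issue configuration halves the cycle count. With a fully dependent chain the second issue slot sits idle and nothing is gained, which is the "waste" case described above.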

Raw Clock Speed

The previous two examples of architectural enhancements are major improvements in design. Designing a modern CPU with more pipeline stages, or going from a single-issue to a dual-issue design, takes a team years; these are not trivial improvements.

A simpler path to improving performance is to just increase the clock speed of the CPU. In the first example I provided, our CPU could only run as fast as the most complex pipeline stage allowed it. In the real world however, there are other limitations to clock speed.

Manufacturing issues alone can severely limit clock speed. Even though an architecture may be capable of running at 1GHz, the transistors used in making the chip may only be yielding well at 600MHz. Power is also a major concern. A transistor usually has a range of switching speeds. Our hypothetical 45nm process may be able to run at 300MHz at 0.9500V or 600MHz at 1.300V; higher frequencies generally mean higher voltage, which results in higher power consumption - a big issue for mobile devices.
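A rough sketch of why the higher voltage hurts so much, using the standard first-order approximation that CMOS dynamic power scales with frequency times voltage squared. That approximation is an assumption brought in here (it ignores leakage and is not taken from this article), and the function name `relative_dynamic_power` is made up for illustration. The operating points are the hypothetical ones from the paragraph above.

```python
def relative_dynamic_power(freq_mhz, volts, base_freq_mhz, base_volts):
    """Dynamic power relative to a baseline, assuming P is proportional to f * V^2.

    First-order approximation only: leakage power and changes in switching
    activity are ignored.
    """
    return (freq_mhz / base_freq_mhz) * (volts / base_volts) ** 2


# Hypothetical 45nm operating points: 300MHz at 0.95V versus 600MHz at 1.30V.
ratio = relative_dynamic_power(600, 1.30, 300, 0.95)
print(f"2.0x the clock speed costs roughly {ratio:.1f}x the dynamic power")
```

Under these assumptions, doubling the clock costs well over three times the dynamic power, so performance per watt gets worse rather than better.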

The iPhone’s processor is based on a SoC that can operate at up to 600MHz; for power (and battery life) reasons, Apple/Samsung limit the CPU core to running at 412MHz. The architecture can clearly handle more, but power and battery life are the limiting factors. In general, increasing clock speed alone isn’t a desirable option to improve performance in a mobile device like a smartphone because your performance per watt doesn’t improve tremendously, if at all.

In terms of sheer performance, however, just increasing clock speed is preferred to deepening your pipeline and increasing clock speed. With no increase in pipeline depth you don’t have to worry about keeping any more stages full; everything just works faster if you increase your clock speed.

The key takeaway here is that you can’t just look at clock speed when it comes to processors. We learned this a long time ago in the desktop space, but it seems that it’s getting glossed over in the smartphone market. A 400MHz dual-issue core is going to be a better performer than a 500MHz single-issue core with a deeper pipeline, and the 528MHz processor in the iPod Touch is nowhere near as fast as the 600MHz processor in the iPhone 3GS.
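The comparison can be put into numbers by treating throughput as clock speed times instructions completed per clock (IPC). The IPC figures in this sketch are hypothetical values picked only for illustration, and the helper names are made up; real IPC depends heavily on the workload.

```python
def relative_throughput(clock_mhz, ipc):
    """Millions of instructions completed per second: clock rate times IPC."""
    return clock_mhz * ipc


def break_even_ipc_ratio(slow_clock_mhz, fast_clock_mhz):
    """How much higher the slower-clocked core's IPC must be to match the faster one."""
    return fast_clock_mhz / slow_clock_mhz


# Hypothetical sustained IPC values, not measurements of any real chip.
wide_core = relative_throughput(400, ipc=1.4)  # 400MHz dual-issue
deep_core = relative_throughput(500, ipc=0.8)  # 500MHz single-issue, deeper pipeline

print(wide_core > deep_core)            # True
print(break_even_ipc_ratio(400, 500))   # 1.25
```

The 400MHz core only needs to sustain 1.25 times the IPC of the 500MHz core to pull ahead, which is a low bar for a dual-issue design that is kept reasonably well fed.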

60 Comments


lightzout - Saturday, July 11, 2009

My wife actually offered to give me her 3g if she got the 3gs but I didn't think it was worth it. She asked me this morning how it was better and I didn't know (didn't admit it of course).

Now I want her 3G "free" and she really does need the 3gs since she is always multitasking/social/mail..me, including aim.

I thought the 3gs would have some radical new gps stuff but the compass is not impressive. Nothing to get me geeked on to the tune of $200. For my purposes having the older iphone would make travel and remodeling job estimating easier over my tattered razr.

My media mogul mamacita however needs that sleek new 3gs like yesterday as every gripe she has about the 3g phone seems to have been addressed somehow.

Great write-up!

Only regret is when I saw the new screen and sleek size of the 3gs at the apple store a couple days ago it does scream "aren't I beautiful?" but that is what apple does so well right?

MrBowmore - Saturday, July 11, 2009

Give the magic, or hero another chance!

Your numbers for those phones are whacked, it's faster than the 3G at a lot of things. Try to kill all the background apps. (yes, it multitasks)

RadnorHarkonnen - Friday, July 10, 2009

Very good analysis.

I was just surprised ARM CPUs are still made on 90nm and 65nm. With the performance and power saving of 55nm and 45nm processes I would imagine they would jump on the bandwagon fast.

nubie - Thursday, July 9, 2009

Some people can't drop $600 in a lump or $2600 over 3 years on something as stupid as a cellphone. No matter what it can do.

Besides the fact that Apple is killing all support for proper hardware acceleration and access to OpenGL 2.0, whatever.

Can we get more Android and G1 coverage? Please?

psonice - Friday, July 10, 2009

Like the guy above said, you buy a phone, you either pay a lot upfront, or you get it with a contract. Either way you'll still need to pay a ton of money each month for your voice and data. You could get a cheap phone that only makes calls and costs almost nothing, but that's not the same, is it?

And what's this about apple not supporting hardware acceleration / opengl es 2.0??? Almost everything in the gui is hardware accelerated. And there's very good opengl es 1.1/2.0 support in the sdk, hence the ton of hardware accelerated games. There may not be much supporting es2.0 yet, but that's because the first 2.0 capable device has only just been released.

Affectionate-Bed-980 - Friday, July 10, 2009

You know what? The cost is:

$199 up front

$70 / month * 24 months

= $1680 + $199

But let's face it, most of you already have cell phones. A quick look at a WinMo phone like the HTC Touch Pro is $70 / month too at minimum ($39.99 voice + $30 data). Same with a Blackberry.

SO WHY THE HELL ARE YOU COMPLAINING?

So if $1880 is too much for you, don't get a cell phone period.

Stop complaining. The iPhone is actually pretty damn cheap. You're locked in a contract, but even if you had another phone WHY WOULD YOU GO DATALESS?

araczynski - Thursday, July 9, 2009

i'll care about the iphone/ipod when they start sporting VGA screens. if my digital camera can have a 3" 640x480 display, so should these overpriced toys.

psonice - Friday, July 10, 2009

Higher res screens look pretty, but 640x480 needs 2x more power to fill than 480x320. The screen is more than acceptable already, so I'd take faster running apps/games and longer battery life over more pixels any day.

Kougar - Thursday, July 9, 2009

Thanks for the informative crash course in CPU instructions, that filled in some gaps I didn't understand. It's nice to now understand how some aspects of the design fit into or affect the rest of the design.

Unfortunately, you've only drummed up the excitement factor for Intel's Sandy Bridge... from some general info that's been around and based on what you've given it sounds like the potential is very much there for some very significant performance jumps. So much for Gulftown's allure!

christinme7890 - Thursday, July 9, 2009

I love the attention to detail when describing the CPUs and the graphics processor and stuff. Very cool. I hate that other people are dissing the iphone hardware. If you don't like Macs rules get a pre. Plain and simple. I for one support these people that want to sell their apps for a good price and are trying to make it big in the dev world. Kudos and I will buy your apps.

I will be honest, I am sick of the multitasking argument. You do hit on a point that needs to be addressed imho by Apple and that is that there is no good app for chatting. I really think that Apple needs to include their own IM App that stays on in the background (if you want it to) and collects all your SMS, MMS, IM, facebook, Twitter, etc messages. This would be great. While it would be great I recognize that this would totally sap the power on the iphone. If you had all this info push to your phone, the servers would be constantly sending you messages every second. As for multitasking, I don't really care to have it. There are areas where I wish I had it but it is not necessary. Not to mention that the palm pre has a horrible battery life...plain horrible. I hear people talk like they need 3 backup batteries just to get through the day.

I have noticed myself that the compass is a little sketchy. There was a time on 07/04 that a friend and I were lost in the city walking around and we used my maps app to find where we are and I tried to get the compass to work to make reading the map easy and it wouldn't work. The map wouldn't rotate and it was frustrating. Oh well.

Your review of the camera was spot on. It will never replace my uber camera but when I am out and about doing whatever it does great for quick and easy pics. And the movie functions are awesome as well. Now if only you could cut out middle pieces of a movie. Hopefully soon.

I love the speed of the 3gs. I notice, not tested but notice, a large speed increase and I absolutely love it.

The one major place the 3GS has over the pre is the App store. No company has been able to implement an app store like Apple. I get all my multimedia from one source (itunes) which is great....Movies, podcasts, video, audio, apps, etc...all in one place is the best thing that apple has done in forever. I will not argue prices or app submission ethics because I truly believe that apple keeps the People as their top priority.

Great article.