Optimizing for Virtualization, Part 2

by Liz van Dijk on June 29, 2009 12:00 AM EST - Posted in IT Computing

Layout changes: noticing a drop in sequential read performance?

When considering virtualization of a storage system, an important step is researching how the actual LUN layout changes when migrating to a virtualized environment. Adding an extra layer of abstraction and consolidating everything into VMDK files can make it hard to keep track of how it all maps to actual physical storage.

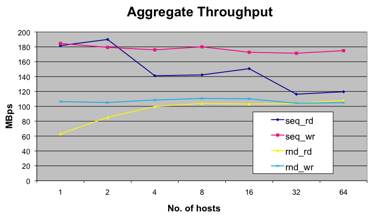

By consolidating previously separate storage systems into a single array that services multiple VMs, we change the access patterns the array sees. Because of queueing at every level (guest, ESX and the array itself), reads that are sequential from the point of view of a single system get interleaved with the reads of other VMs, so the array ends up with a largely random access pattern. Keep this in mind when you notice a drop in otherwise solidly performing sequential read operations.
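To make the interleaving effect concrete, here is a minimal sketch in Python. It is a toy model, not a description of how ESX or any array actually schedules requests: the round-robin merge, the three VMs and their start addresses are all assumptions chosen for illustration.

```python
from itertools import zip_longest


def sequential_stream(start_block, length):
    """Block addresses one VM requests when reading sequentially."""
    return list(range(start_block, start_block + length))


def interleave(streams):
    """Round-robin merge, a simplified stand-in for what shared queues do."""
    merged = []
    for group in zip_longest(*streams):
        merged.extend(block for block in group if block is not None)
    return merged


def sequential_fraction(blocks):
    """Share of requests that directly follow the previous block address."""
    if len(blocks) < 2:
        return 1.0
    hits = sum(1 for prev, cur in zip(blocks, blocks[1:]) if cur == prev + 1)
    return hits / (len(blocks) - 1)


# Three hypothetical VMs, each reading 8 consecutive blocks from its own
# region of the same LUN (start addresses are made up for illustration).
vm_streams = [sequential_stream(start, 8) for start in (0, 1000, 2000)]

single_vm = sequential_fraction(vm_streams[0])
shared_array = sequential_fraction(interleave(vm_streams))

print(f"one VM alone:      {single_vm:.0%} sequential")
print(f"three VMs, merged: {shared_array:.0%} sequential")
```

Each VM on its own issues a perfectly sequential stream, yet in this model none of the requests arriving at the shared array directly follows its predecessor. Real queues batch and reorder requests, so the actual loss is usually less extreme than this worst case.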

An important aspect of these queues is that they can be tuned. If, for example, you want to run a test on a single VM that needs to get as much out of the LUN as possible, ESX can temporarily resize its queues to fit that requirement. By default, the queue size is 32 outstanding I/Os per VM, which should be optimal for a standard VM layout.
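As a rough way to reason about what the queue size means for a single VM, Little's law (concurrency = throughput x latency) gives an upper bound on I/O operations per second for a given number of outstanding I/Os. The sketch below uses the default of 32 mentioned above; the 5 ms latency and the alternative depth of 64 are assumed figures for illustration, not measurements.

```python
def max_iops(outstanding_ios, latency_ms):
    """Upper bound on IOPS from Little's law: concurrency = throughput x latency."""
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return outstanding_ios * 1000.0 / latency_ms


DEFAULT_QUEUE_DEPTH = 32   # per-VM default quoted in the article
ASSUMED_LATENCY_MS = 5.0   # hypothetical average service time per I/O

for depth in (DEFAULT_QUEUE_DEPTH, 64):
    ceiling = max_iops(depth, ASSUMED_LATENCY_MS)
    print(f"queue depth {depth}: at most {ceiling:.0f} IOPS")
```

This is a ceiling rather than a prediction: latency generally rises as the queue gets deeper, and a deeper queue for one VM leaves less room for the others sharing the LUN.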

Linux database consideration

Specifically for Linux database machines, there is another important factor to consider (Windows takes care of this automatically). This is something we need to be extra careful about during the development of vApus Mark II, the Linux version of our benchmark suite, but anyone using databases under Linux should be familiar with it. It is generally recommended to cache as much of the database as possible in the database's own cache, rather than letting the more general OS buffer cache take care of it. This recommendation matters even more when virtualizing the workload, as managing file system buffer pages is more costly for the hypervisor.

This should be set from inside the database system, however, and usually comes down to configuring O_DIRECT as the preferred method of accessing storage (in MySQL, this means setting innodb_flush_method to O_DIRECT).
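For MySQL, the setting lives in the server's option file. The sketch below embeds a hypothetical my.cnf fragment and checks it with Python's standard configparser module; only the innodb_flush_method name and the O_DIRECT value come from the text above, while the [mysqld] section placement and the helper function are assumptions for illustration.

```python
import configparser

# Hypothetical my.cnf fragment; only innodb_flush_method = O_DIRECT is taken
# from the article, the rest is an assumed minimal layout.
SAMPLE_MY_CNF = """
[mysqld]
innodb_flush_method = O_DIRECT
"""


def innodb_uses_o_direct(cnf_text):
    """Return True if the [mysqld] section sets innodb_flush_method to O_DIRECT."""
    parser = configparser.ConfigParser(
        allow_no_value=True,  # option files may contain bare flags
        strict=False,         # tolerate repeated options
        interpolation=None,   # treat '%' in values literally
    )
    parser.read_string(cnf_text)
    value = parser.get("mysqld", "innodb_flush_method", fallback="")
    return (value or "").strip().upper() == "O_DIRECT"


print(innodb_uses_o_direct(SAMPLE_MY_CNF))
```

The check only looks for this exact option name in one section, so it is a starting point for auditing a configuration rather than a complete parser for MySQL option files.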

13 Comments


vorgusa - Monday, June 29, 2009 - link

Just out of curiosity will you guys be adding KVM to the list?

JohanAnandtech - Wednesday, July 1, 2009 - link

In our upcoming hypervisor comparison, we look at Hyper-V, Xen (Citrix and Novell) and ESX. So far KVM has got a lot of press (in the OS community), but I have yet to see anything KVM in a production environment. We are open to suggestions, but it seems that we should give priority to the 3 hypervisors mentioned and look at KVM later.

It is only now, June 2009, that Red Hat announces a "beta-virtualization" product based on KVM. When running many VMs on a hypervisor, robustness and reliability are by far the most important criteria, and it seems to us that KVM is not there yet. Opinions (based on some good observations, not purely opinions :-) ?

Grudin - Monday, June 29, 2009 - link

Something that is becoming more important as higher I/O systems are virtualized is disk alignment. Make sure your guest OS's are aligned with the SAN blocks.

yknott - Monday, June 29, 2009 - link

I'd like to second this point. Mis-alignment of physical blocks with virtual blocks can result in two or more physical disk operations for a single VM operation. It's a quick way to kill I/O performance!

thornburg - Monday, June 29, 2009 - link

Actually, I'd like to see an in-depth article on SANs. It seems like a technology space that has been evolving rapidly over the past several years, but doesn't get a lot of coverage.

JohanAnandtech - Wednesday, July 1, 2009 - link

We are definitely working on that. Currently Dell and EMC have shown interest. Right now we are trying to finish off the low power server (and server CPUs) comparison and the quad socket comparison. After the summer break (mid-August) we'll focus on a SAN comparison.

I personally have not seen any test on SANs. Most sites that cover it seem to repeat press releases... but I may have missed some. It is of course a pretty hard thing to do as some of this stuff is costing 40k and more. We'll focus on the more affordable SANs :-).

thornburg - Monday, June 29, 2009 - link

Some Linux systems using the 2.6 kernel make 10x as many interrupts as Windows?

Can you be more specific? Does it matter which specific 2.6 kernel you're using? Does it matter what filesystem you're using? Why do they do that? Can they be configured to behave differently?

The way you've said it, it's like a blanket FUD statement that you shouldn't use Linux. I'm used to higher standards than that on Anandtech.

LizVD - Monday, June 29, 2009 - link

As yknott already clarified, this is not in any way meant to be a jab at Linux, but is in fact a real problem caused by the gradual evolution of the Linux kernel. Sure enough, fixes have been implemented by now, and I will make sure to have that clarified in the article.

If white papers aren't your thing, you could have a look at http://communities.vmware.com/docs/DOC-3580 for more info on this issue.

thornburg - Monday, June 29, 2009 - link

Thanks, both of you.

thornburg - Monday, June 29, 2009 - link

Now that I've read the whitepaper, and looked at the kernel revisions in question, it seems that only people who don't update their kernel should worry about this.

Based on a little search and a Wikipedia entry, it appears that only Red Hat (or the major distros) is still on the older kernel version.