Phenom II X3 720BE & CrossFire X Performance - Does it Compete?

by Gary Key on March 28, 2009 12:00 AM EST- Posted in

- Motherboards

Company of Heroes: Opposing Fronts

The oldest title in our test suite is still the most played. CoH has aged like fine wine and we still find it to be one of the best RTS games on the market. We look forward to the Tales of Valor standalone expansion in a couple of weeks. In the meantime, we crank all the options up to their highest settings, enable AA at 2x, and run the game under DX9. The DX10 patch offers some improved visuals but with a premium penalty in frame rates. We track a custom replay of Able Company’s assault at Omaha Beach with FRAPS and average three test runs for our results.
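The three-run averaging described above can be sketched in a few lines of Python. This is only an illustration: the per-second FPS samples are invented placeholders, not figures from this review, and the helper name `summarize_runs` is hypothetical rather than part of FRAPS or any other tool.

```python
from statistics import mean


def summarize_runs(runs):
    """Return (average FPS, minimum FPS), each averaged across the runs.

    `runs` is a list of runs; each run is a list of per-second FPS samples.
    """
    per_run_avg = [mean(run) for run in runs]  # average FPS of each run
    per_run_min = [min(run) for run in runs]   # lowest sample of each run
    return mean(per_run_avg), mean(per_run_min)


# Hypothetical samples for three runs of the same replay (not measured data).
runs = [
    [112, 98, 45, 120, 130],
    [110, 101, 47, 118, 129],
    [114, 97, 46, 121, 127],
]

avg_fps, min_fps = summarize_runs(runs)
print(f"average: {avg_fps:.1f} fps, minimum: {min_fps:.1f} fps")
```

Averaging the per-run minimums, rather than taking the single lowest sample across all runs, keeps one bad run from defining the reported minimum; whether that matches the method used for the charts here is an assumption.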

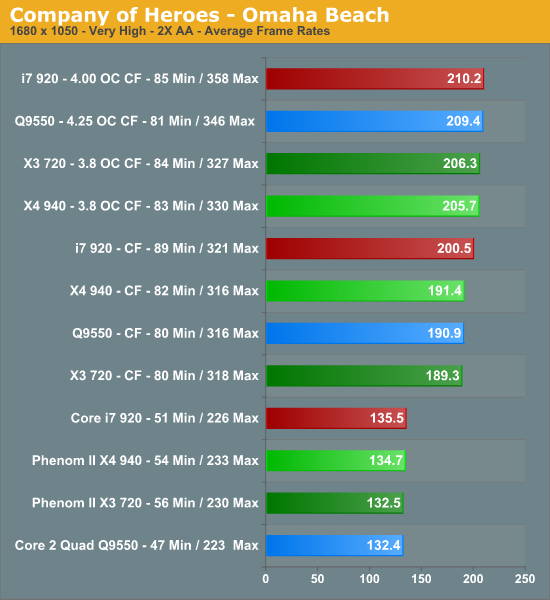

Single card scores at 1680x1050 are fairly close across the platforms. In this particular game, the Phenom II X4 940 offers slightly better performance than the X3 720BE in the single card and CrossFire results, with a 1% advantage thanks to its higher clock speed. When overclocked, the 720BE finishes ahead ever so slightly, but within our margin of error on the benchmark. Installing a second card for CrossFire operation improves average frame rates by 42% and minimum frame rates by 43% for the X3 720BE. Overclocking the 720BE resulted in a 9% improvement in average frame rates and 5% in minimum frame rates, indicating we are largely GPU limited at this point.

In our previous testing with the 8.12 drivers, the Intel systems would generate minimum frame rates in the 23~24fps range on a couple of runs and then jump to their current results or higher on the others. Guess what? We still noticed that problem with the 9.3 drivers. However, the hitching and pausing we encountered previously were mitigated somewhat in our new tests. It was only in intensive ground scenes with numerous units that we really noticed the problem, and it was primarily with the Q9550 platform. Both Phenom II systems had extremely stable frame rates along with very fluid game play during the heavy action sequences.

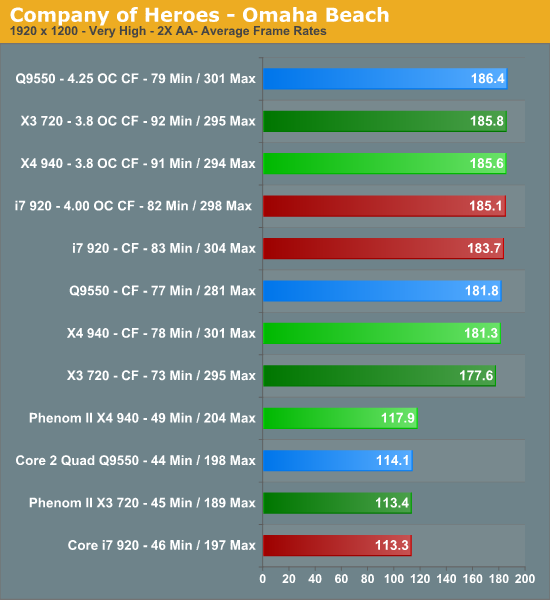

The Phenom II X4 940 leads the group at 1920x1200 with a single card. The X4 940 is 4% faster than the 720BE in average frame rates in single card mode and 2% faster in CrossFire. The X4 940 has an 8% advantage in minimum frame rates in single card results and 7% in CrossFire. When overclocked, both Phenoms are equal for all intents and purposes. Adding a second card for CrossFire operation improves average frame rates by 57% and minimum frame rates by 62% for the 720BE. Overclocking the 720BE only improved frame rates by 5%, as we continue to be GPU bound at this resolution.
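For readers who want to reproduce scaling percentages like these from their own results, here is a minimal sketch of the arithmetic. The frame rates are made-up placeholders chosen only to illustrate the formula, not values read from the charts, and `percent_gain` is a hypothetical helper name.

```python
def percent_gain(baseline_fps, new_fps):
    """Percentage improvement of new_fps over baseline_fps."""
    return (new_fps - baseline_fps) / baseline_fps * 100.0


# Hypothetical average frame rates (placeholders, not data from this review).
single_card_fps = 70.0
crossfire_fps = 110.0

gain = percent_gain(single_card_fps, crossfire_fps)
print(f"CrossFire scaling: {gain:.1f}%")
```

The same function applies to the overclocking comparison: a small gain from a large clock speed increase is what suggests the GPU, not the CPU, is the limiting factor.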

What about the game play experience? As we mentioned earlier, the Intel Q9550 platform had some problems with minimum frame rates throughout testing - not just in the benchmarks, but also during game play in various levels and online. The i7 platform would behave in the same manner at times, but the game play experience with it has certainly improved with the 9.3 driver set and BIOS upgrades. The problem is very likely driver related in some manner (as the man who helped to start DirectX once put it, "the drivers are always broken"), but nevertheless this continues to be a problem on the two Intel platforms.

We could not discern any differences between the X3 720BE and the X4 940 during game play. Actually, how could we? The frame rates were basically even in all situations. Even the slight gap in minimum frame rates between the two processors did not create any problems during our gaming sessions.

59 Comments


marsspirit123 - Sunday, May 31, 2009 - link

For $160 less with the AMD 720 you can get 4890s in CF and beat the i7 for the same money in those games.

Royal13 - Saturday, April 11, 2009 - link

Which system will perform better in games? I cannot OC my E6600 beyond 3GHz with the box cooler, but was hoping for at least 3.5GHz with the X3 720. Can it be done without any alternative cooling?

GFX does not really matter. I have a 9800GX2, 3870X2 and 4870 at the moment.

Or should I just go for the PII 940 instead? It costs around $100 more, but I don't have a lot to spend, so the CPU should last 2 years, the same as my E6600 did.

What do you think?

I want to OC, with less power usage, more like a standard internet PC but with good GFX, because I have a SyncMaster T260HD, so I am forced to run games at 1920x1200.

7Enigma - Tuesday, March 31, 2009 - link

Guys, I love CoH. I'm currently playing OF and will probably be getting the latest expansion in a couple weeks but for the love of God please use DX10!

You've used this same introduction for ages now:

"In the meantime, we crank all the options up to their highest settings, enable AA at 2x, and run the game under DX9. The DX10 patch offers some improved visuals but with a premium penalty in frame rates."

That premium penalty is what exactly? 20%? 50%? In this test you have a MINIMUM frame rate of 45 with a single card at the stock cpu frequencies, while the average is >110fps. That's like running at 800X600 resolution so you can have 400fps. I get that at some crazy high resolutions or with very lopsided hardware (fast cpu with slow gpu or vice-versa) you may have some frame rate issues, but please this is a RTS and not a FPS. If you want it to age better and have it actually stress the system properly (regardless of whether it looks tremendously better) use DX10.

All you have to do is change the introduction to say, "In the meantime, we crank all the options up to their highest settings, enable AA at 2x, and run the game under DX10. DX9 offers virtually the same graphical experience minus some improved visuals but at a significantly increased framerate".

Great article btw!

Plyro109 - Tuesday, March 31, 2009 - link

Well, my memory isn't the best sometimes, but if I remember, on my computer going from DX9 to DX10 on CoH resulted in about a 70% performance hit. This equates from about 120FPS to about 30-35 for the average, and on the minimum end, from 35 to about 10.

I'd rather have the MUCH higher framerate than the SLIGHTLY improved visuals.

7Enigma - Wednesday, April 1, 2009 - link

So I did a bit of googling and you are correct; seems to be about a 70% performance penalty which is significant. For gaming I agree with you, but for benchmarking I still think DX10 should be used, if only to stress the gpu/system more in line with the other titles used.

I didn't realize it was so bad since I'm gaming on a 19" LCD and cranked everything up to max with 4X AA...but I did just build my gaming rig in January with a 4870 so the combination of good hardware and low res is probably what hid the huge drop in framerate.
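The frame rates quoted in this exchange (about 120 FPS dropping to 30-35 on average, and 35 dropping to about 10 at the minimum) can be checked against the "about 70%" figure with a quick calculation. This is just the arithmetic on the commenters' recollected numbers, not a measurement, and `percent_drop` is a hypothetical helper name.

```python
def percent_drop(before_fps, after_fps):
    """Percentage of frame rate lost going from before_fps to after_fps."""
    return (before_fps - after_fps) / before_fps * 100.0


# Figures as recalled in the comments above (DX9 -> DX10), not benchmark data.
avg_drop_best = percent_drop(120, 35)   # average FPS, milder end of the range
avg_drop_worst = percent_drop(120, 30)  # average FPS, harsher end of the range
min_drop = percent_drop(35, 10)         # minimum FPS

print(f"average: {avg_drop_best:.1f}% to {avg_drop_worst:.1f}% lower")
print(f"minimum: {min_drop:.1f}% lower")
```

All three results land in the low-to-mid 70s, so the recalled numbers are consistent with a roughly 70% penalty.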

Niteowler - Monday, March 30, 2009 - link

I had to make the decision between a Phenom 2 quad-core 940 or a Phenom 3 720 triple-core cpu about 3 weeks ago. I went with the 940 because it's a stronger performer across the board and for one obvious reason that I figured out that wasn't mentioned in this article: the cost of each system. Phenom 3 motherboards cost more in general and DDR3 is certainly more expensive. There was only about $10 to $15 difference between the two systems. It basically came down to whether I wanted DDR3 or an extra core more. Phenoms don't really take full advantage of DDR3 yet in any of the reviews that I have read. The 720 is a decent performer in its own right and I wouldn't have felt too bad buying one until the black edition quad core Phenom 3's come out.

Visual - Monday, March 30, 2009 - link

What you call "Phenom 3" is more properly "Phenom X3", or triple-core. You make it sound confusingly like third-generation or something.

Also, it runs perfectly fine on AM2+ motherboards, with DDR2. You are right that AM3 and DDR3 are more expensive, but that is not related to the CPU choice.

Not that there's anything wrong with going for the quad 940 - I think it is worth the extra cash... just pointing out, it is indeed some extra cash, quite more than the $10-$15 you state, compared to a X3 AM2+ setup.

XiZeL - Sunday, March 29, 2009 - link

Great article... really makes you think twice before spending extra cash on an Intel rig.

tshen83 - Sunday, March 29, 2009 - link

There used to be a time when reviewers would properly review a platform. It is funny to read articles that say "Phenom is a great gaming platform" because the so-called "equivalent gaming performance" compared to last-gen Intel Core 2 based CPUs is simply GPU bound, even with CrossFire.

X3 processors are junk. They are broken POS that AMD couldn't sell unless one core is disabled. The question is why would you want to buy a triple core 95W processor, when you can buy a quad core 75W ACP (95W TDP) Opteron 1352 for about $110 now on newegg. Pay more and get less core :)

I can hardly recommend Phenoms when the Q8200 is a much better performer from a previous generation. Then again, the i7-920 simply trumps anything AMD has right now.

If you are a casual gamer, even Atom 330 on Nvidia 9400M can be a great platform for only 20W total platform power consumption. AMD better pick up their pathetic engineering effort and start doing some thinking, because the time bomb is ticking for them. I actually like Dirk Myers, compared to that POS Hector Ruiz.

waffle911 - Sunday, March 29, 2009 - link

I fail to see a single valid and substantiated argument in your poorly written post, other than the fact that the PII 720BE costs more than the Opty 1352, and has a higher TDP.

The X3 is an X4 with one core disabled either because it has a defect or because AMD needed to boost their quota of X3's to meet demand, which is actually becoming the more frequent of the two situations. Your arguments for "energy efficiency", if that is what you're arguing, are comparing apples to oranges. You have performance, or you have efficiency. But overall efficiency is also affected by how much data can be processed for each joule of energy expended, and in that vein the 720 still trumps the 1352, because it can process a given amount of information enough faster than the 1352 that it expends less energy overall despite having higher peak energy use. And that 95W rating isn't very indicative of how much energy it will actually use for any given task, either (nor, for that matter, is the 75W rating of the Opteron).

PII 720:

2.8GHz

4000MHz HT

L1 2x128kB

L2 3x512kB

L3 6MB

45nm

$135.99

Opt 1352:

2.1GHz

2000MHz HT

L2 4x512kB

L3 2MB

65nm

$114.99

You are definitely getting less processor for less money. Not only is the Opteron (a server/workstation processor!) using technology that's approaching 2 generations old, but price/performance wise the 720 is a better performer for most desktop applications. Not many (if any) games use 4 cores, so they benefit more from the added speed than the number of cores. Plus, you get an unlocked multiplier and actual room to overclock. For my $20 extra, I would gladly take a 720 clocked at 3.6 or a conservative 3.4 over an Opty 1352 at an optimistic 2.6, if you can get it to overclock at all. It's all about quality over quantity. I would rather have one BMW 335i over 2 Honda Civic Si's if I'm going to be the only one driving them. And it would only be me, because games only use 2 cores at best, not 4, and I can only drive one car at a time. You have 2 cores left over doing nothing, just like I've got one Civic Si sitting parked in my driveway while I drive the other.

And no, the Q8200 is not necessarily a better performer. In most applications, the PII 920 will outperform it (it'll even compete with the Q9300, which is more recent), and now it is priced similarly ($164), making it a much better value. But the PII 720 has longevity on its side, because it's compatible with AM3. The Q8200 has nowhere to go, and LGA775 is a dying breed. I like knowing I can upgrade my system in bits and pieces further on down the road as I can afford them, rather than having to fully replace my motherboard, CPU, and RAM all at once. I can do the CPU now, the motherboard and RAM later when prices come down, and then when a better high-end CPU comes along I can upgrade to that as well.

Plus, while the i7-920 may beat anything AMD has right now, it's a terrible value for the money. Between an $800 PII 720 gaming rig and an $800 i7-920 gaming rig, the 720 allows room in the budget for better graphics, more RAM, more HD space... the i7 just doesn't allow for a very balanced system on a budget.

And I have yet to see a single commercially available example of an Atom paired with a 9400M. All of the ones out there are engineering/testing samples. But when that does come along, I will gladly get it and put it in my car PC.