The RV770 Story: Documenting ATI's Road to Success

by Anand Lal Shimpi on December 2, 2008 12:00 AM EST - Posted in GPUs

The Bet, Would NVIDIA Take It?

In the spring of 2005 ATI had R480 on the market (Radeon X850 series), a 130nm chip that was a mild improvement over R420, another 130nm chip (Radeon X800 series). The R420-to-R480 transition is an important one because it’s these sorts of trends that NVIDIA would look at to predict ATI’s future actions.

ATI was still working through execution on the R520 (Radeon X1800), but, as you may remember, that part was delayed. At the time ATI was having a problem with a particular piece of IP in the chip. The R520 delay ended up causing a ripple that affected everything in the pipeline, including the R600, which itself was delayed for other reasons as well.

When ATI looked at the R520 in particular, it was a big chip that didn’t seem to deliver good bang for the buck, so ATI made an unexpected architectural change going from the R520 to the R580: it broke the 1:1:1:1 ratio.

The R520 had a 1:1:1:1 ratio of ALUs:texture units:color units:z units, but in the R580 ATI changed this relationship to 3:1:1:1, increasing arithmetic power without increasing texture/memory capabilities. ATI had noticed that the shading complexity of applications was going up while their bandwidth requirements weren’t, which justified the architectural shift.
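To make the ratio arithmetic concrete, the minimal Python sketch below reduces per-chip unit counts to the simplest integer ratio. The unit counts are an assumption on our part, not figures from ATI: they are the commonly cited flagship configurations (16 pixel shader units on R520 and 48 on R580, each paired with 16 texture, color and z units). The helper names `unit_ratio` and `ratio_str` are hypothetical.

```python
from functools import reduce
from math import gcd


def unit_ratio(counts):
    """Reduce a tuple of unit counts to its simplest integer ratio."""
    divisor = reduce(gcd, counts)
    return tuple(c // divisor for c in counts)


def ratio_str(counts):
    """Format a reduced ratio as 'a:b:c:d'."""
    return ":".join(str(n) for n in unit_ratio(counts))


# Assumed configurations: (shader ALU units, texture units, color units, z units)
R520 = (16, 16, 16, 16)
R580 = (48, 16, 16, 16)

print("R520", ratio_str(R520))  # R520 1:1:1:1
print("R580", ratio_str(R580))  # R580 3:1:1:1

# Shader units available per texture unit, relative to R520
print((R580[0] / R580[1]) / (R520[0] / R520[1]))  # 3.0
```

Under these assumed counts, tripling the shader units while holding the texture, color and z units fixed triples the arithmetic available per texture fetch, which is the trade-off ATI made when shading complexity rose faster than bandwidth needs.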

This made the R520-to-R580 transition a much larger one than anyone, including NVIDIA, would’ve expected. While the Radeon X1800 wasn’t really competitive (partially due to its delay, but also due to how good G70 was), the Radeon X1900 put ATI on top for a while. It was an unexpected move that undoubtedly ruffled feathers at NVIDIA; used to being on top, NVIDIA doesn’t exactly like it when ATI takes its place.

Inside ATI, Carrell made a bet. He bet that NVIDIA would underestimate R580: that it would look at what ATI did with R480 and expect R580 to be in a similar vein. He bet that NVIDIA would be surprised by R580 and that, because NVIDIA wouldn’t want to lose again, the chip to follow G70 would be huge. G80 would be a monster.

ATI had hoped to ship the R520 in early summer 2005; it ended up shipping in October, almost 6 months later, and, as I already mentioned, it delayed the whole stack. The negative ripple effect made it all the way into the R600 family. ATI speculated that NVIDIA would design its next part (G71, 7900 GTX) to be around 20% faster than R520 and not expect much out of R580.

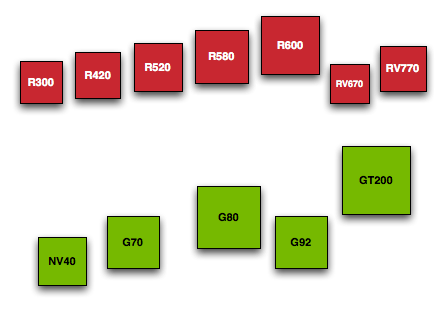

A comparison of die sizes for ATI and NVIDIA GPUs over the years; the boxes are drawn to scale. Red is ATI, green is NVIDIA.

ATI was planning the R600 at the time and knew it was going to be big: it started at 18mm x 18mm, then grew to 19mm, then 20mm. Engineers kept asking Carrell, “Do you think their chip is going to be bigger than this?” “Definitely! They aren’t going to lose; after the 580 they aren’t going to lose.” Whether or not G80’s size and power were a direct result of ATI getting too good with R580 is up for debate. I’m sure NVIDIA will argue that it was by design and had nothing to do with ATI, and we obviously know where ATI stands, but the fact of the matter is that Carrell’s prediction was correct: the generation after G70 was going to be a huge chip.

If ATI was responsible, even in part, for NVIDIA’s G80 (GeForce 8800 GTX) being as good as it was, then ATI ensured its own demise. Not only was G80 good, but R600 was late, very late. Still impacted by the R520 delay, R600 also had a serious problem with its AA resolve hardware that took a while to work through, and it ended up being a part that wasn’t very competitive; without AA resolve hardware, R600 had an even tougher time against a very good G80. ATI had lost the halo: ATI’s biggest chip ever couldn’t compete with NVIDIA’s big chip, and for the next year ATI’s revenues and market share would suffer. While this was going on, Carrell was still trying to convince everyone working on the RV770 that they were doing the right thing, that winning the halo didn’t matter... just as ATI was suffering from not winning the halo. He must’ve sounded like a lunatic at the time.

When Carrell and crew were speccing the RV770, the prediction was that not only would it be good against similarly sized chips, but it would also be competitive because NVIDIA would still be in overshoot mode after G80. Carrell believed that whatever followed G80 would be huge and that RV770 would have an advantage because NVIDIA would have to charge a lot for that chip.

Carrell and the rest of ATI were in for the surprise of their lives...

116 Comments

Sahrin - Monday, January 25, 2010

Anand, I love this piece. Not sure if you'll get notified, but while doing some research on the performance of Hybrid Crossfire, I came back. It was interesting to see the tone of the piece and hear about the guys at ATI talking vaguely about what would become the 5870. Fascinating stuff; I've got to put a bookmark in my calendar to remind me to come back to this next year when RV970 is released (pending no further difficulties).

Any chance of a follow-up piece with the guys in SC?

caldran - Wednesday, December 24, 2008

The GPU industry is squeezing in more and more transistors (SPs or whatever). It would be more energy efficient to disable some cores when there is less load, rather than just reducing the clock frequency and switching to 2D mode, just like in the latest AMD processors. An HD 4350 would consume less power than an HD 4850 at idle, right?

bupkus - Wednesday, December 10, 2008

I couldn't put it down until I had finished. Extremely enjoyable write!

yacoub - Tuesday, December 9, 2008

"that R580 would be similar in vain" You want vein. Not vain, not vane. Vein. =)

CEO Ballmer - Sunday, December 7, 2008

You people don't mention their alliance with MS! http://fakesteveballmer.blogspot.com

BoFox - Sunday, December 7, 2008

LOL!!!!!

BoFox - Sunday, December 7, 2008

Great article, a nice read! However...

From how I remember history:

In 2006, when the legendary X1900XTX took the world by surprise, actually beating the scarce and coveted 7800GTX-512, I bought it. It was king of the hill from January 2006 until the 7950GX2 stole the crown back as the fastest "single-slot" solution about 6 months later, around June 2006, only a few months after the smaller 90nm 7900GTX was *finally* released in April 2006. Everybody started hailing Nvidia again, although it was really an SLI dual-GPU solution sandwiched into one PCI-E slot. Perhaps it was the quad-GPU thingy that sounded so cool. It was obviously over-hyped, but it really took the attention away from ATI.

GDDR4 on the X1950XTX hardly did any good, since it came a bit late (Sept 2006) with only about a 3-4% performance increase over the X1900. Then the 8800GTX came in Nov 2006 and had an impact similar to the one the 9700 Pro had.

As everybody waited to see how the R600 would do, it was delayed, and then it disappointed hugely in June 2007. The 8800GTX/Ultra kept on selling for around $600 for nearly 12 months straight, making history. 80nm just did not cut it for the R600, so ATI wanted to get its dual-GPU single-card REVENGE against Nvidia. And it would be even better this time, since it's done on a single PCB, not a sandwiched solution like Nvidia's 7900GX2. Hence the tiny RV670 chips made on an unexpected 55nm process! The 3870X2 did beat the 8800GTX in most reviews, but it had to rely on Crossfire, just as the GX2 cards relied on SLI. Also, the 3870X2 only used GDDR3, unlike the single 3870 with fast GDDR4.

But Nvidia still took the attention away from the 3870 series by tossing an 8800GT up for grabs. When the 3870X2 came out in Jan 2008, Nvidia touted its upcoming 9800GX2 (to be released one month afterwards). So, Nvidia stopped ATI with an ace up its sleeve.

Round 2 for ATI's revenge: the 4870X2. And it worked this time! There was no way that Nvidia could expect the 4870 to be *that much* better than the 3870. Everybody was saying the 4870 would be 50% faster, and Nvidia yawned at that, thinking that the 4870 still couldn't touch the 9800GTX or 9800GX2 when crossfired. Plus, Nvidia expected the 4870 to still have the "AA bug", since the 3870 did not fix it from the 2900XT, and the 4870 had a similar architecture. Boy, Nvidia was all wrong there! The 4870 actually ended up being *50%* faster than the 9800GTX in some games.

So, now ATI has earned its vengeance with its single-slot dual-GPU solution that Nvidia had with its 7900GX2 and 9800GX2 a while ago. With the 4870X2 destroying the GTX 280, ATI does indeed have its crown or "halo".

Unfortunately, quad-Crossfire hardly does well against GTX 280 SLI. We now know that quad-GPU solutions give a far lower "bang-per-GPU" due to poor driver optimizations, etc. So most enthusiast gamers with the money and a 2560x1600 monitor are running two GTX 280s right now instead of two 4870X2s... oh well!

One thing not mentioned about GDDR5 is that it eats power like mad! The memory alone consumes 40W, even at idle, and that is one of the reasons why the 4870 does not idle so well. If ATI reduces the speed low enough, it messes up the Aero graphics in Vista. It would have been nice if ATI released an intermediate 4860 version with GDDR4 memory at 2800+MHz effective.

Now, I cannot even begin to guess what the RV870 will be like. I think Nvidia is going to really want its own revenge this time around, having been so financially hurt by the whole 9800 to GTX 200 range, plus being unable to release a 55nm version of G200 to this day. Nvidia just cannot beat the 4870X2 with a dual G200 on 55nm, and this is the reason for the re-spins (delays): an attempt to reduce power consumption while maintaining the necessary clock speed. Pardon me for pointing out the obvious...

Hope my mini-article was a nice supplement to the main article! :)

CarrellK - Sunday, December 7, 2008

Not bad at all. BTW, 55nm has less to do with how good the RV770 is than the re-architecture & re-design our engineers did post-RV670.

To illustrate, scale the RV770 from 55nm to 65nm (only core scales, not pads & analog) and see how big it is. Now compare that to anything else in 65nm.

Pretty darned good engineers I'd say.

BoFox - Sunday, December 7, 2008

True, and nowhere in the article was it pointed out that since the AA algorithm relied on the shaders, simply upping the shader units from 320 to a whopping 800 completely solved the weak AA performance that plagued the 2900s and 3870s. It did not cost too much in die size or power consumption either. ATI certainly did design the R600 with the future in mind (by moving AA to the shader units, with room for future expansion). Now the 4870 does amazingly well with 8x FSAA, even beating the GTX 280 in some games.

I wanted to edit my above post by saying that the dual G200 needed to have low enough power consumption so that it could still be cooled effectively in a single-slot sandwich cooling solution. The 4870X2 has a dual-slot cooler, but Nvidia just cannot engineer the G200 on a single PCB with the architecture it is currently using (monster die size, and 16 memory chips that scale with a 448-bit to 512-bit bus instead of 8 memory chips with 512-bit bandwidth). That is why Nvidia must move to GDDR5 memory, or else redesign the memory architecture to a greater degree. Just my thoughts... I still have no idea what we'll be seeing in 2009!

papapapapapapapababy - Saturday, December 6, 2008

More like uber mega retards, right? If they are so smart... why do they keep making such terrible, horrible, shitty drivers? Why?

I really, really, really want to buy a 4850, I really do. But I'm not going to do it. I'm going to go and buy the 9800GT. And I know it's just a rebranded 8800GT. And I know Nvidia is making shitty & explosive hardware (my 8600GT just died). And I know that GPU is slower, older, OC'd 65nm tech. And that Nvidia is pushing gimmicky tricks ("physics") and buying devs. But guess what? NVIDIA = good, clean drivers. New game? New drivers there. Fast. Un-bloated drivers that work: is that so hard, ATI? Really. Or maybe you guys just suck?

I'm going to pick all tech@ because of that. That's how much I fkn hate your bloated and retarded drivers, ATI. Install the MS .NET Framework for a broken control panel? Stupid. And what's up with all those unnecessary services eating my memory and CPU cycles? ATI Hotkey Poller? ATI Smart? ATI2EVXX.exe, ATI2EVXX.exe, .NET 2.0? Always there, and the damn thing takes forever to load? Nvidia doesn't use any bloated crap, so why do you feel entitled to pollute my PC with your bloated drivers?

AGAIN, HORRIBLE DRIVERS, ATI! I DON'T WANT A SINGLE EXTRA SERVICE! I just built a PC for a friend. I chose the HD 4670: beautiful card, really cool, fast, efficient. I love it. I want one for myself. But the drivers? Argh. I ended up using just the display driver, and still the memory consumption was utterly retarded compared to my Nvidia card.

So, geniuses? Move your asses and fix your drivers.

Thanks, and good job with your hardware.