AMD's B3 Stepping Phenom Previewed, TLB Hardware Fix Tested

by Anand Lal Shimpi on March 12, 2008 12:00 AM EST - Posted in CPUs

The "TLB Bug" Explained

Phenom is a monolithic quad-core design: each of the four cores has its own internal L2 cache, and the die has a single L3 cache that all of the cores share. As programs run, instructions and data are brought into the L2 cache, and page table entries are cached there as well.

Virtual memory translation is commonplace in all modern OSes, and the premise is simple: each application thinks it has contiguous access to all memory locations, so it doesn't have to worry about complex memory management. When an application attempts to load or store something in memory, the address it thinks it's accessing is simply a virtual address - the actual location in memory can be something very different.

The OS stores a table of all of these mappings from virtual to physical addresses, and the CPU can cache frequently used mappings so that memory accesses complete much more quickly.

If the CPU didn't cache page table entries, each memory access would proceed as follows:

1) Read from a page table directory

2) Read a page table entry

3) Then read the translated address and access memory
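The three steps above can be sketched in a few lines of Python. This is only an illustration under assumptions that are not from AMD: a two-level table with 10-bit indices and 4 KiB pages (the classic 32-bit x86 layout), and the hypothetical names `split`, `translate` and `page_directory`. Phenom's real 64-bit page tables have more levels, but the idea is the same.

```python
PAGE_SHIFT = 12   # assumption: 4 KiB pages, so the low 12 bits are the offset
INDEX_BITS = 10   # assumption: 10-bit index at each of the two levels

def split(vaddr):
    """Break a virtual address into (directory index, table index, offset)."""
    offset = vaddr & ((1 << PAGE_SHIFT) - 1)
    table_idx = (vaddr >> PAGE_SHIFT) & ((1 << INDEX_BITS) - 1)
    dir_idx = (vaddr >> (PAGE_SHIFT + INDEX_BITS)) & ((1 << INDEX_BITS) - 1)
    return dir_idx, table_idx, offset

def translate(page_directory, vaddr):
    """Walk the two-level table; each lookup stands in for one memory read."""
    dir_idx, table_idx, offset = split(vaddr)
    page_table = page_directory[dir_idx]    # 1) read from the page table directory
    frame = page_table[table_idx]           # 2) read the page table entry
    return (frame << PAGE_SHIFT) | offset   # 3) form the physical address to access

# Hypothetical mapping: directory slot 1, table slot 2 -> physical frame 0x1234
page_directory = {1: {2: 0x1234}}
vaddr = (1 << 22) | (2 << 12) | 0xABC
paddr = translate(page_directory, vaddr)
```

Every call to `translate` costs two extra reads before the access the program actually asked for, which is why caching these entries matters so much.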

Then there's the Translation Lookaside Buffer (TLB), which stores completed virtual-to-physical translations directly, so on a hit you don't even need to look up page table entries in the cache. The TLB is much smaller than the cache so it can only hold a limited number of translations, but with a good replacement algorithm you can still get good hit rates. If the mapping isn't contained in the TLB then you have to do a lookup in cache, and if it's not there then you have to go out to main memory to figure out the actual memory address you want to access.
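To make the hit/miss behaviour concrete, here is a minimal sketch of a TLB as a tiny cache sitting in front of the page table walk. The class name `TinyTLB`, the two-entry capacity and the least-recently-used replacement policy are all assumptions for illustration; the actual replacement algorithm used in Phenom's TLBs isn't described here.

```python
from collections import OrderedDict

class TinyTLB:
    """Toy TLB: maps virtual page numbers to physical frames, LRU replacement."""

    def __init__(self, capacity, walk):
        self.capacity = capacity
        self.walk = walk              # fallback: the slow page table walk
        self.entries = OrderedDict()  # least recently used entry comes first
        self.hits = 0
        self.misses = 0

    def lookup(self, vpn):
        if vpn in self.entries:
            self.entries.move_to_end(vpn)     # mark as most recently used
            self.hits += 1
            return self.entries[vpn]
        self.misses += 1
        frame = self.walk(vpn)                # miss: go to cache / main memory
        self.entries[vpn] = frame
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used
        return frame

# Hypothetical flat page table standing in for the walk
page_table = {vpn: vpn + 100 for vpn in range(8)}
tlb = TinyTLB(capacity=2, walk=page_table.__getitem__)
for vpn in [0, 1, 0, 2, 1]:
    tlb.lookup(vpn)
```

With only two entries, the access pattern above gets a single hit (the second access to page 0); the later access to page 1 misses because page 1 was pushed out when page 2 came in.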

Page table entries eventually have to be updated. For example, there are situations when the OS decides to move a set of data to another physical location, so all of the affected mappings need to be updated to reflect the new address.

When page table entries are updated, the cached entries stored in a core's L2 cache also need to be updated. Page table entries are a special case in the L2: not only does the cache controller have to modify the data in the entries to reflect their new values, but it also needs to set a couple of status bits in the page table entries to mark that the data has been modified.

Page table entries in cache are very different from normal data. With normal data you simply modify it and your cache coherency algorithms take care of making sure everything knows that the data is modified. With page table entries the cache controller must manually mark the entry by setting its accessed and dirty bits, because page tables and TLBs are actually managed by the OS. The cache line has to be modified, have a couple of bits set and then be put back into the cache - an exception to the standard operating procedure. And herein lies the infamous TLB erratum.

When a page table entry is modified, the core's cache controller is supposed to take the cached entry, place it in a register, modify it and then put it back in the cache. There is a corner case, however. Suppose the core is in the middle of making this modification and about to set the accessed/dirty bits when some other activity goes into the cache and hits the same index that the page table entry is stored in. If the page table entry is marked as the next thing to be evicted, it will be evicted from the L2 cache and put into the L3 cache in the middle of the modification process. The line that's evicted from L2 now sits in L3 without its accessed and dirty bits set, and is technically incorrect.

The update operation is still taking place, though, and when it finishes setting the appropriate bits, the same page table data will be placed into the core's L2 cache again. Now the L2 and L3 caches both hold the same line, which shouldn't happen given AMD's exclusive cache hierarchy.
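The sequence is easier to follow as a toy model. The sketch below is not how the hardware is implemented; it simply replays the order of events described above using plain dictionaries for one core's L2 and the shared L3. The names `run_erratum_sequence`, `l2_core0` and `l3`, and the representation of a page table entry as two flags, are assumptions for illustration.

```python
def run_erratum_sequence():
    """Replay the described corner case with dictionaries as caches."""
    # Core 0's L2 holds a page table entry whose status bits are not yet set.
    l2_core0 = {"pte": {"accessed": False, "dirty": False}}
    l3 = {}

    # 1) The cache controller copies the entry into a register to modify it.
    register = dict(l2_core0["pte"])

    # 2) Mid-update, other activity hits the same index and the entry is the
    #    next line to be evicted: the stale copy moves from L2 to L3.
    l3["pte"] = l2_core0.pop("pte")

    # 3) The update finishes and the modified entry goes back into L2.
    register["accessed"] = True
    register["dirty"] = True
    l2_core0["pte"] = register

    return l2_core0, l3

l2, l3 = run_erratum_sequence()
duplicated = set(l2) & set(l3)   # lines present in both levels at once
stale = l2["pte"] != l3["pte"]   # the two copies disagree on their status bits
```

In an exclusive hierarchy `duplicated` should always be empty. Here it contains the page table entry, and the L3 copy is missing the bits that the L2 copy has set.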

If the line in L2 gets evicted once more, it'll be sent off to the L3, where it conflicts with the copy already there and creates an L3 protocol error. But the more dangerous situation is what happens if another core requests the data.

If another core requests the data, it will first check for it in L3 and of course find it there, not knowing that an adjacent core also has the data in its L2. The second core will now pull the data from L3 and place it in its own L2 cache. Keep in mind that this copy is marked as unmodified, while the first core has it marked as modified in its L2.

At this point everything is OK since the data in both L2 caches is identical. If the second core modifies the page table data, however, you end up in a dangerous situation where two cores hold different data that is expected to be the same. This could result in a hard lock of the system or, even worse, silent data corruption.

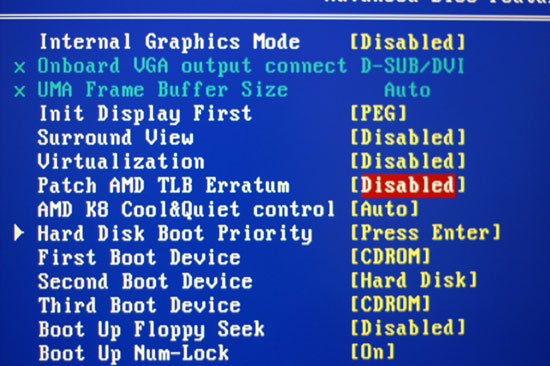

The BIOS fix

The workaround in B2 stepping Phenoms is a BIOS fix that tells the TLB it can't look in the cache for page table entries upon lookup. Obviously this drives memory latencies up significantly as it adds additional memory requests to all page table accesses.

The hardware fix implemented in B3 Phenoms is that whenever a page table entry is modified, it's evicted out of L2 and placed in L3. There's a very minor performance penalty because of this, but it's nowhere near as bad as the software/BIOS TLB fix mentioned above.

AMD gave us two confirmed situations where the TLB erratum would rear its ugly head in real world usage:

1) Windows Vista 64-bit running SPEC CPU 2006

2) Xen Hypervisor running Windows XP and an unknown configuration of applications

AMD insisted that the TLB erratum was a highly random event that would not occur during normal desktop usage, and we've never encountered it during our testing of Phenom. Regardless, the two scenarios listed above aren't that rare and there could be more that trigger the problem, which makes a great case for fixing it.

29 Comments

eok - Thursday, March 20, 2008

The article, while great news, still leaves me guessing at what they actually tested and how. They say they got their hands on a 2.2GHz B3. The CPUZ data confirms that. But in the final "extreme" test, they show the B3 @ 2.3GHz. So, they overclocked?? Via unlocked multiplier or by increasing the FSB???

eye smite - Saturday, March 15, 2008

I don't typically read your site anymore as the articles since phenom launch, particularly the phenom launch article, read more like a rant than a review. Every AMD article reads the same, it's a good cpu but........and then this laundry list of issues and why you see them as critical or distasteful and so on. Why the hell can't you just do a straightforward review based on the facts and leave the colorful commentary for the political videos on youtube? You lot suffer from some idiotic perception issues.

Narg - Friday, March 14, 2008

Personally I think the problem lies within the 3 levels used. When I first read the Phenom specs, I was sad to see there were 3 levels of cache. The complexity that adds to a processor is exponential! I would have been far more happy to see a single L2 cache between all processors of much larger size and better access. I can't imagine why they opted for 3 levels. Seems to be a hurried solution to get the Phenom to market, since so many of the single core chips AMD has built in the past have 3 levels of cache, which of course helped those chips. They need to design an effective 2 level cache only chip.

BernardP - Friday, March 14, 2008

About the article, I am thankful for the most complete and understandable explanation of the TLB error and fixes I have seen since Phenom was released.

bradley - Wednesday, March 12, 2008

We finally have more concrete instances of the bug being induced and documented. Though maybe we have different opinions of what constitutes a rare bug. If it took AMD to inform us, perhaps they are fairly rare instances. In hindsight, the coverage on the TLB issue does appear vastly disproportionate to the actual threat itself.

DigitalFreak - Wednesday, March 12, 2008

On the desktop, yes. My understanding is that it's a huge issue with the Opterons, which are more likely to be used in situations where the bug crops up (VMWare, etc.) It also explains why you still can't buy a quad-core AMD server from HP, Dell, etc.

Griswold - Thursday, March 13, 2008

It's not so much of an issue with opterons if you use a unix/linux derivative and apply AMD's own kernel patch which solves the problem with almost zero performance loss (it doesn't just disable the TLB like the BIOS option does). Windows Servers on the other hand... Still, understandable that some vendors just stay away from it until B3.

JumpingJack - Friday, March 14, 2008

http://www.amd.com/us-en/assets/content_type/white...

As most have stated, errata are a fact of life for every CPU, the fact that Intel or AMD publish errata is because they found something so obscure, the typical quality assurance testing (which must be rigorous and thorough) never expressed the bug. The occurrence is so rare that the problems it may cause would likely go unnoticed to the average user. This is fine for DT, a crash or lock up would probably result in a few choice, colorful words with regard to Microsoft and they reboot and are on their way.

In the enterprise space, though, uptime is everything, and more importantly the sanctity of the data.... from AMD's own publication on Errata 298 ...

" One or more of the following events may occur:

• Machine check for an L3 protocol error. The MC4 status register (MSR 0000_0410) will be equal to B2000000_000B0C0F or BA000000_000B0C0F. The MC4 address register (MSR 0000_0412) will be equal to 26h.

• Loss of coherency on a cache line containing a page translation table entry.

• Data corruption. "

It is the last possibility that most likely resulted in AMD's decision to hold off servicing the mainstream server market, and they made the absolute right decision doing so...

bradley - Wednesday, March 12, 2008

Yes, that goes without saying. I guess most assumed the same held true for Phenom as Barcelona, when taking the TLB errata into consideration. And there wasn't one clear voice to dissent or say otherwise, which is unfortunate. For whatever reason Intel's own C2D TLB bug, which could also cause system instability, didn't receive nearly as much press. Every chip has bugs, but only when documented does it become errata and revised.

larson0699 - Wednesday, March 12, 2008

SPEC CPU 2006 in Vista x64 may be real world for enough to warrant the fix (though IMO it should have been right the first time), but it's really not that common. Only labrats and enthusiasts run benchmarks (but at least they have my respect), and only complete tools run the version of an already heavy OS that further bottlenecks most of today's apps. As a tech, I have no sympathy for anyone who chooses to run down MS's path and patronize their every mistake--yes, it may be a hasty opinion, but it is backed by common sense. There is nothing XP or an Xbox 360 cannot do better.

Anyway... *sigh*

High fives to Anand for another awesome in-depth review, for making me one article smarter, and to AMD for more practical results as of late.

P.S. These guys run their own show--not plagiarize others. Please cite your evidence to them directly instead of FUD the forums.