NVIDIA Acquires AGEIA: Enlightenment and the Death of the PPU

by Derek Wilson on February 12, 2008 11:00 AM EST - Posted in

- GPUs

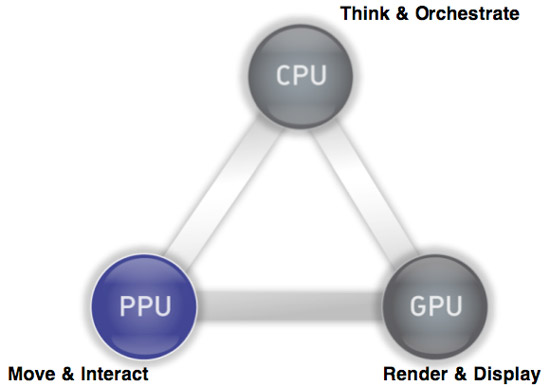

Last week, NVIDIA announced that they have agreed to acquire AGEIA. As most here probably know, AGEIA is the company that makes the PhysX physics engine and acceleration hardware. The PhysX PPU (physics processing unit) is designed to accelerate physics calculations, giving developers the potential to deliver more realistic and immersive worlds. The PhysX SDK lets developers write game engines that can take advantage of either a CPU or dedicated hardware.

While this has been a terrific idea in theory, the benefits of the hardware are currently a tough sell. The problem stems from the fact that game developers can't rely on gamers having a physics card, and thus they are unable to base fundamental aspects of gameplay on the assumption that physics hardware is present. It is similar to the issues we saw when hardware 3D graphics acceleration first came onto the scene, only the impact of hardware 3D was more readily apparent. The long-term benefit of physics hardware lies less in what you see and more in the basic principles of how a game world works.

Currently, developers use PhysX based on lowest-common-denominator performance: how fast it can run on a CPU. With added hardware, effects can scale (more particles, more objects, faster processing, etc.), but you can't get much beyond "eye candy" style enhancements; game developers can't yet be expected to build worlds that depend on hardware-accelerated physics.
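To make the lowest-common-denominator point concrete, here is a minimal Python sketch of how an engine might budget physics work under these constraints. Everything in it is hypothetical: the tier names, budgets, multipliers, and function names are illustrative assumptions, not part of the PhysX SDK. Gameplay-relevant rigid bodies stay capped at what a CPU alone can handle, and only cosmetic effects such as particles and debris grow when acceleration hardware is detected.

```python
from dataclasses import dataclass

# Hypothetical baseline budgets: what the game must run on a CPU alone.
CPU_GAMEPLAY_BODIES = 200      # rigid bodies that affect gameplay
CPU_EFFECT_PARTICLES = 1_000   # purely cosmetic particles/debris

# Hypothetical multipliers for cosmetic effects per hardware tier.
EFFECT_SCALE = {"cpu": 1, "ppu": 8, "gpu": 8}


@dataclass
class PhysicsBudget:
    gameplay_bodies: int
    effect_particles: int


def plan_budget(accelerator: str) -> PhysicsBudget:
    """Return the physics budget for the detected accelerator tier."""
    scale = EFFECT_SCALE.get(accelerator, 1)  # unknown hardware falls back to CPU
    return PhysicsBudget(
        # Gameplay physics never depends on optional hardware, so every
        # player sees the same world rules.
        gameplay_bodies=CPU_GAMEPLAY_BODIES,
        # Only the "eye candy" grows when extra hardware is present.
        effect_particles=CPU_EFFECT_PARTICLES * scale,
    )


if __name__ == "__main__":
    for hw in ("cpu", "ppu", "gpu"):
        print(hw, plan_budget(hw))
```

Because `gameplay_bodies` is identical on every tier, extra hardware in this sketch can only buy more `effect_particles`, never new gameplay, which is exactly the limitation described above.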

The NVIDIA acquisition of AGEIA would serve to change all that by bringing physics hardware to everyone via a software platform already tailored to scale physics capabilities and performance to the underlying hardware. How is NVIDIA going to be successful where AGEIA failed? After all, not everyone has or will have NVIDIA graphics hardware. That's the interesting bit.

PPU/GPU, What's the Difference?

Why Dedicated Hardware?

Ever since AGEIA hit the scene, GPU makers have been jumping up and down saying "we can do that too." Sure, physics can run on GPUs. Both graphics and physics lend themselves to a parallel architecture. There are differences, though, and AGEIA claimed to be able to handle massively parallel and dependent computations much better than anything else out there. And their claim is probably true. They built hardware to do lots of physics really well.

The problem with that is the issue we mentioned above: developers aren't going to push this thing to its limits by creating games centered on dedicated physics hardware. The type of "effects" physics that developers are currently using the PhysX hardware for is also well suited to a GPU. Certainly complex systems with collisions between rigid and soft bodies happening everywhere would drown a GPU, but adding particles, more fragments from explosions, or more gibs or debris is not a problem for either NVIDIA or AMD.
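The difference between "effects" physics and densely interacting simulations can be sketched in a few lines of Python. This is an illustrative assumption about how the two workloads differ, not code from PhysX or any GPU driver, and all names are hypothetical. Each debris particle is advanced using only its own state, so the update is an independent per-particle map that could be spread across many parallel units; a naive collision check, by contrast, has to compare every pair of bodies, so its results depend on more than one object at a time.

```python
from dataclasses import dataclass
from itertools import combinations

GRAVITY = -9.81  # m/s^2, applied along y


@dataclass
class Particle:
    x: float
    y: float
    vx: float
    vy: float


def step_particle(p: Particle, dt: float) -> Particle:
    """Semi-implicit Euler step for one particle (velocity first, then position).

    Reads only this particle's state, so every particle can be updated
    independently of all the others.
    """
    vy = p.vy + GRAVITY * dt
    return Particle(p.x + p.vx * dt, p.y + vy * dt, p.vx, vy)


def step_effects(particles: list[Particle], dt: float) -> list[Particle]:
    # Independent per-particle work: the kind of data-parallel map a GPU handles well.
    return [step_particle(p, dt) for p in particles]


def colliding_pairs(bodies: list[Particle], radius: float) -> list[tuple[int, int]]:
    """Naive O(n^2) overlap test: every body is checked against every other.

    This only detects overlaps. A real solver would then resolve the
    contacts, and resolving one contact can change the inputs to others,
    which is what makes dense rigid-body scenes harder to parallelize.
    """
    limit = (2 * radius) ** 2
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(bodies), 2)
        if (a.x - b.x) ** 2 + (a.y - b.y) ** 2 <= limit
    ]


if __name__ == "__main__":
    debris = [Particle(0.0, 10.0, 1.0, 0.0), Particle(0.5, 10.0, -1.0, 0.0)]
    debris = step_effects(debris, dt=0.1)
    print(colliding_pairs(debris, radius=0.5))  # [(0, 1)]
```

Adding more debris only lengthens the independent map in `step_effects`, while the pairwise work in `colliding_pairs` grows quadratically and, once contacts are resolved, introduces dependencies between bodies.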

The Saga of Havok FX

Of course, that's why Havok FX came along. Using shaders to implement a physics engine, Havok FX would have enabled developers to start using more horsepower for effects physics without regard for dedicated hardware. While contemporary GPUs might not have been able to match the physics processing power of the PhysX hardware, that really didn't matter, because developers were never going to push PhysX to its limits if they wanted to sell games.

But now that Intel has acquired Havok, it seems that Havok FX is no longer a priority. From what we can find, it may no longer exist at all. Obviously Intel would prefer that all physics processing stay on the CPU at this point. We can't really blame them; it's good business sense. But it is certainly not the most beneficial thing for the industry as a whole or for gamers in particular.

And now, with no promise of a physics SDK to support graphics cards, and slow adoption of PhysX hardware, NVIDIA saw itself with an opportunity.

Seriously: Why Dedicated Hardware?

In light of the Intel / Havok situation, NVIDIA's acquisition of AGEIA makes sense. They get the PhysX physics engine, which they can port over to their graphics hardware. The SDK is already used in many games across many platforms. Adding NVIDIA GPU acceleration to PhysX means that anyone playing a PhysX game on an NVIDIA GPU instantly has hardware physics acceleration.

As we pointed out, compared to current GPUs, dedicated physics hardware has more potential physics power. But we also are not going to see a high level of relative physics complexity implemented until developers can be sure consumers have the hardware to handle it. The GPU is just as good as the PhysX card at this stage in hardware accelerated physics. At this point in time there is no benefit to all the power that sits dormant in a PhysX card, and the GPU offers a good solution to the kinds of effects developers are actually using PhysX to implement.

The PhysX software engine is capable of scaling complexity and performance if there is hardware present, and with NVIDIA GPUs essentially becoming that hardware, PhysX instantly gains a much larger installed base. This totally tips the scales away from the need for dedicated hardware and towards replacing the PPU with the GPU at this point in time. We'll look at the future in a second.

32 Comments


Soubriquet - Monday, February 18, 2008

Ageia was always a dog without a home. They were simply looking for an exit strategy and they must have made nVidia a tempting offer.

IMHO nVidia didn't need Ageia, but thought it might come in handy for reasons mentioned hereabouts. Not nearly as expensive as some speculative acquisitions which spring to mind (AMD+ATI) but not likely to be particularly revolutionary either, IMHO.

Physics processing for the mainstream has to be API-led. So we can expect that to come from MS, and Intel, AMD and nVidia will all need to get in touch with them and develop their own hardware to suit.

I get the sense that virtualisation may assist here, since physics on a GPU is not like physics on a CPU unless you have that layer in between software and hardware that can make the distinction insignificant to the software. In which case AMD have a decision to make, do they add their own version to the GPU (& compete with nVidia) or the CPU (and compete with Intel) or both ?

In any case virtualisation is just jargon for the time being and we are heading down the multicore CPU route, so the CPU seems the obvious place for physics. But nVidia don't make CPUs and I wonder if not a little of their motivation in getting Ageia was to prevent anyone else getting it. A dog in the manger, as it were!

goku - Saturday, February 16, 2008

Thanks for destroying the best thing that could've happened to gaming. Now I'll be waiting 10 years for a feature to be added to a game, while I could've had that feature in 2 years had there been dedicated physics cards.

I don't want to have to buy an nvidia GPU just to get add-on physics. At least with the PPU card, it didn't matter what video card I had, and if I don't want or need to improve the visual effects of the game I'm playing but would like more interactivity, all I have to do is buy a new PPU and not a whole new video card.

perzy - Thursday, February 14, 2008

Everyone knows that the CPU is dead; it's not developing because of the frequency/heat wall.

So the foreseeable future is discrete processors: GPU, FPU and maybe SPU. Whatever x-PU you can imagine or come up with.

The GPU makers want the FPU market too, for sure, but they want to do it on their own product, in the channel they know and trust.

This is like when GM and Ford bought the bus-companies in the USA and closed them down.

Kill the competition, and a cheap low-selling...no brainer.

I'm just waiting for Intel to release their high-performance GPUs and later FPUs.

They are in desperate need to branch out.

mlambert890 - Thursday, February 14, 2008

Everyone knows that the CPU is dead? Really? Have you sent a note off to Intel and AMD? I don't think they got the memo.

A GPU, FPU or PPU are all processing units. Any efficiency implemented in those parts can be implemented in a CPU. Any physical challenges in terms of die size, heat, and signaling noise faced by the CPU are also faced by those other, transistor-based, parts. What are you getting on about?

All of these parts are a collection of a ton of transistors arranged on a die and coupled with some defined microcode. How they are arranged is a shell game where various sides basically bet on the most commercially viable packaging for any given market segment.

There is no such thing as "dead" or "alive". With transistor-based electronic ICs, there are simply various ways of solving various problems and an ever-moving target based on what end users want to do. Semiconductor firms can adapt pretty easily and the semantics don't matter at all.

I remember similarly ridiculous comments with the advent of digital media, when people were saying the "CPU is dead" and the future would be "all DSPs". Back then I was equally amazed at just how far some can be from "getting it".

forsunny - Wednesday, February 13, 2008

So basically, from the article it appears that Intel wants PPU hardware to fail so that the CPU is needed for physics processing and they can continue to push (sell) newer CPUs for higher performance.

Therefore it appears that Nvidia may want to compete in the CPU market, or even in the PPU market, so that extra performance can be gained beyond the existing CPU power. Nvidia is already in competition with Intel in the general chipset market. Then they could claim better performance than Intel by adding the PPU technology, unless Intel starts to do the same.

I don't see where AMD fits into the picture?

FXi - Wednesday, February 13, 2008

I'm thinking there might be some near term benefit from adding a few secondary chips to the gpu card. There is bandwidth enough in pci-e 2.0 to handle some additional calculations.

I'm thinking:

physics PPU

sound APU

Nvidia has experience (some a bit dated) in both, but they now own the harder of the two to provide. Now they may run into power and heat budgets that constrain them from pursuing this route, but there is plenty of pci-e bandwidth to handle all these things on a single or even dual (sli) style card(s).

With even Asus going into the sound route that sounds like an easy one to cover (and one that has benefits in the home theater arena as well). Now that they have the physics I'd think the trio would work well. And when the gpu advances enough to cover one or both of the secondary chips you just remove them, let the gpu take over the calculations, lower the transistor count and move along. You continue to keep the same set of calculations all based on same card and all under the same roof.

And with their experience in OpenGL acceleration, I'd take a reasonable bet they could do OpenAL acceleration as well. Who knows, will we see eventually OpenPL? :)

haplo602 - Wednesday, February 13, 2008

I think that the whole PPU market missed its target. Instead of targeting the single-player FPS/RPG etc. market, they should have targeted the MMO developers market.

Any advanced physics needed for a single-player FPS can be handled by a multicore CPU or alternatively on the GPU shaders with a bit of work.

However, imagine a large non-instanced MMORPG with tens of thousands of players and NPCs interacting in combat and other tasks. All the collision handling, hit calculations etc. HAVE to be done on the server side to prevent cheating. This puts a large strain on the server hardware.

Now imagine a server farm, basically a multinode cluster, handling a large world with each server hosting an area of the game. It has to handle all the interaction in addition to network traffic and backend database handling. A single or dual PPU setup with proper software could make worlds (if not universes) of difference to the player experience, offloading the bulk of the operations from the server CPUs.

Huge improvements to player experience here. Maybe I just missed information on these, but I have yet to hear about an MMO that actually uses this kind of technology.

DigitalFreak - Tuesday, February 12, 2008

HA HA HA HA HA HA HA! Chumps!

Zan Lynx - Tuesday, February 12, 2008

Nvidia should start calling their next-gen cards with PhysX support "VRPU"s instead of GPUs. Call it a Virtual Reality Processing Unit.

The CPU feeds it objects. The VRPU can use the vertex and texture data for both graphics and physics. Add some new data to the textures for physics and off it goes. The CPU can sit back and feed in user and network inputs to update the virtual world state.

cheburashka - Tuesday, February 12, 2008

Larrabee