The GPU Advances: ATI's Stream Processing & Folding@Home

by Ryan Smith on September 30, 2006 8:00 PM EST - Posted in GPUs

Enter the GPU

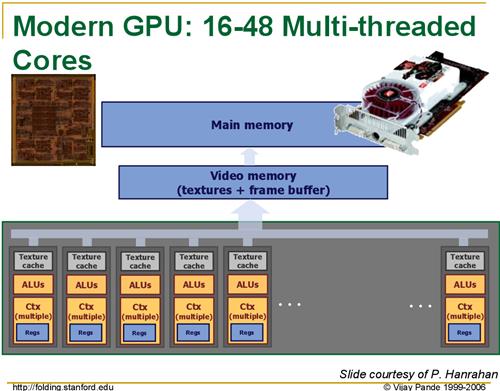

Modern GPUs such as the R580 core powering ATI's X19xx series have upwards of 48 pixel shading units, designed to do exactly what the Folding@Home team requires. With help from ATI, the Folding@Home team has created a version of their client that can utilize ATI's X19xx GPUs with very impressive results. While we do not have the client in our hands quite yet, as it will not be released until Monday, the Folding@Home team is saying that the GPU-accelerated client is 20 to 40 times faster than their clients just using the CPU. Once we have the client in our hands, we'll put this to the test, but even a fraction of this number would represent a massive speedup.
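To put the claimed range in perspective, here is a rough back-of-the-envelope sketch in Python. The 20x and 40x factors are the Folding@Home team's figures; the 30-day CPU baseline and the 5x "fraction" case are our own assumptions, chosen purely for illustration and not measured on any client.

```python
def gpu_time_hours(cpu_hours: float, speedup: float) -> float:
    """Time the GPU client would need for work that takes cpu_hours on the CPU client."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return cpu_hours / speedup


# Assumed baseline (hypothetical): a simulation that keeps a CPU client busy for 30 days.
CPU_HOURS = 30 * 24

# 20x and 40x are the claimed range; 5x stands in for "a fraction of this number".
for speedup in (5, 20, 40):
    hours = gpu_time_hours(CPU_HOURS, speedup)
    print(f"{speedup:>2}x speedup: {hours:.1f} hours")
```

Even in the pessimistic 5x case, a month of CPU time under these assumptions shrinks to about six days, which is why a fraction of the claimed number would still matter.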

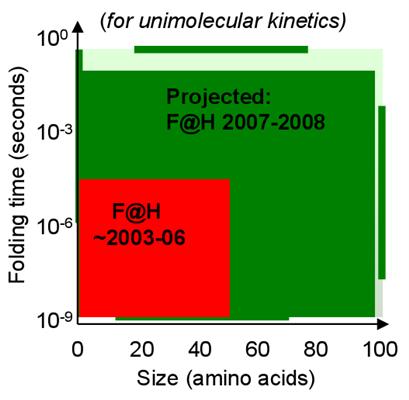

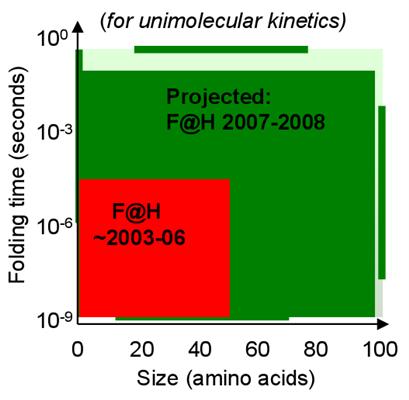

With this kind of speedup, the Folding@Home research group is looking to finally be able to run simulations involving longer folding periods and more complex proteins that they couldn't run before, allowing them to research new proteins that were previously inaccessible. This implementation also allows them to finally do some research on their own, without requiring the entire world's help, by building a cluster of (relatively) cheap video cards to do research, something they've never been able to do before.

Unfortunately for home users, for the time being, the number of those who can help out by donating their GPU resources is rather limited. The first beta client to be released on Monday only works on ATI GPUs, and even then only works on single X19xx cards. The research group has indicated that they are hoping to expand this to CrossFire-enabled platforms soon, along with less-powerful ATI cards.

The situation for NVIDIA users, however, isn't as rosy. While the research group would like to expand the client to the latest GeForce cards, their current attempts at implementing GPU-accelerated processing on those cards have shown that NVIDIA's cards are too slow compared to ATI's to be used. Whether this is due to a subtle architectural difference between the two or a result of ATI's greater emphasis on pixel shading in this generation of cards, we're not sure, but Folding@Home won't be coming to NVIDIA cards as long as the research group can't solve the performance problem.

Conclusion

The Folding@Home project is the first of what ATI is hoping will be many projects and applications, both academic and commercial, that will be able to tap the power of GPUs. Given the results showcased by the Folding@Home project, the impact on applications that would work well on a GPU could be huge. In the future we hope to be testing technologies such as GPU-accelerated physics processing, for which both ATI and NVIDIA have promised support, and other yet-to-be-announced applications that utilize stream processing techniques.

It's been a longer wait than we were hoping for, but we're finally seeing the power of the GPU unleashed as was promised so long ago, starting with Folding@Home. As GPUs continue to grow in abilities and power, it should come as no surprise that ATI, NVIDIA, and their CPU-producing counterparts are looking at how to better connect GPUs and other such coprocessors to the CPU in order to further enable this kind of processing and boost its performance. As we see AMD's Torrenza technology and Intel's competing Geneseo technology implemented in computer designs, we'll no doubt see more applications make use of the GPU, in what could be one of the biggest single performance improvements in years. The GPU is not just for graphics anymore.

As for our readers interested in trying out the Folding@Home research group's efforts in GPU acceleration and contributing towards understanding and finding a cure for Alzheimer's, the first GPU beta client is scheduled to be released on Monday. For more information on Folding@Home or how to use the client once it does come out, our Team AnandTech members over in our Distributed Computing forum will be more than happy to give a helping hand.

43 Comments

BikeAR - Monday, October 2, 2006 - link

I may be living in a vacuum these days, but did anyone notice the following comment on the F@H site's "Help" page?...

Intel has been helping support our project (Stanford/Intel Alzheimer's Research Program), but has announced that it is ending their contribution to distributed computing in general and no longer supports any distributed computing clients, including F@H.

What is up with this?

Staples - Monday, October 2, 2006 - link

I'd love to know how a fully loaded X1900 folding would be compared to an E6600 Core 2 Duo? These GPUs have always been said to be 100s of times faster than CPUs at what they are designed to do so I'd love to see if it is really true or not. If not, it looks like we may have been lied to for so so many years.

Ryan Smith - Monday, October 2, 2006 - link

Unfortunately it looks like they'll be using larger units. We thought we'd be able to use the same units for both the normal and GPU-accelerated clients, but this appears to not be the case. There's no direct way to compare the clients then, the closest we could get is comparing the number of points given for a work unit.

peternelson - Sunday, October 1, 2006 - link

"So far, the only types of programs that have effectively tapped this power other than applications and games requiring 3D rendering have also been video related, such as video decoders, encoders, and video effect processors. In short, the GPU has been underutilized, as there are many tasks that are floating-point hungry while not visual in nature, and these programs have not used the GPU to any large degree so far."

Erm, not so! Try looking at GPGPU.org

Also see the books GPU Gems and GPU Gems 2.

mostlyprudent - Sunday, October 1, 2006 - link

BTW, a type-o in the 1st paragraph of the article: "...manipulate data in ways that goes far beyond" -- should read "in ways that GO far beyond...".

I knew about SETI, but was completely unaware of F@H. Thanks. I will look to get involved!

imaheadcase - Sunday, October 1, 2006 - link

Did anandtech just post an article on the weekend when most PC users are at home so they can read it? Amazing! :P

CupCak3 - Sunday, October 1, 2006 - link

When loading the client, our team number is 198. :)

If anyone has any questions and/or would like to join the TeAm, come and visit us here: http://forums.anandtech.com/categories.aspx?catid=... We have many people who would be more than happy to answer any question.

I'll try to answer some of the questions and comments which have been posted thus far:

NVIDIA support may come later. This is a BETA right now so only a small number of devices will be supported. The supported ATI line will expand and when NVIDIA gets the kinks worked out on using GPGPU processing for their cards, I'm sure the Pande group would be glad to them up :)

No one knows about Crossfire support yet. We'll know more about this tomorrow.

Same goes for using CPU + GPU.

Scalability: A multithreaded client I've heard is in the works but I'm sure it's on hiatus with the GPU client coming into BETA.

That is correct in saying for each core, a separate folding@home client must be loaded. Right now the max amount of RAM one core will use is between 100 and 120 megs of RAM. Other workunits utilize around 5 or 10 megs. The client will only load workunits with this amount of RAM usage if you have the resources to spare. (so not just having 1 256 meg stick in your XP box and then using 110 megs of that for folding) We still yet do not know the RAM requirements for the vid cards.

The Pande Group has posted that 1600 and 1800 series cards will be the next ones supported :) (if all goes well of course)

Linux and Mac OS X clients are also available for those wondering.

I hope this helps!

Messire - Sunday, October 1, 2006 - link

Hi folks

It is surprising to me that this Stanford project works only on the most modern and powerful ATI GPUs, because I know of another Stanford project called BrookGPU. Here is the URL: http://graphics.stanford.edu/projects/brookgpu/

It is only in beta now and seems to be abandoned a little bit, but they've made a very usable GENERAL PURPOSE streaming programming language which works on much older GPUs as well. And it is very fast...

Messire

lopri - Sunday, October 1, 2006 - link

I know this question is somewhat off the discussion at the moment, but I can't help but ask. Would this crank up the GPU usage to 100% as it does to CPU? All the time? Then it could be a problem for average users, because the X1900 is hot as it is even in idle.

GhandiInstinct - Sunday, October 1, 2006 - link

Don't get me wrong, I'd love to contribute, as I have with SETI, but what evidence is there that this will help anything or even at all?

I mean we've had super computers working in science for a while now and I haven't heard of any major breakthroughs because of it, and if my computer is going to exhaust a little extra heat I want some numbers to crunch before I do so.

That's all.