ATI's New High End and Mid Range: Radeon X1950 XTX & X1900 XT 256MB

by Derek Wilson on August 23, 2006 9:52 AM EST. Posted in GPUs.

A Faster, Cheaper High-End

While the X1900 XTX made its debut at over $600USD, this new product launch sees a card with a bigger, better HSF and faster memory debuting at a much lower "top end" price of $450. Quite a few factors play into this, not the least of which is the relatively small performance improvement over the X1900 XTX. We never recommended the X1900 XTX over the X1900 XT due to the small performance gain, but those small differences add up, and with ATI turning their back on the X1900 XTX in favor of its replacement, we can finally say that there is a tangible difference between the top two cards offered by ATI.

This refresh part isn't as different as other refresh parts, but the price and performance are about right for what we are seeing. Until ATI brings out a new GPU, it will be hard for them to offer any volume of chips that run faster than the X1950 XTX. The R5xx series is a very large 384 million transistor slice of silicon that draws power like it's going out of style, but there's nothing wrong with using the brute force method every once in a while. The features ATI packed in the hardware are excellent, and now that the HSF is much less intrusive (and the price is right) we can really enjoy the card.

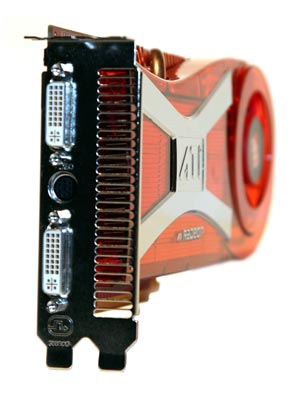

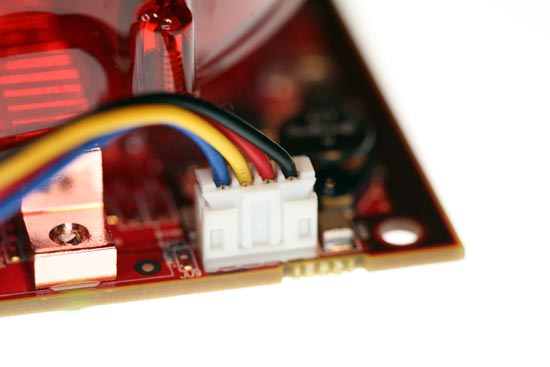

Speaking of the thermal solution, it is worth noting that ATI has put quite a bit of effort into improving the aural impact of its hardware. The X1900 XTX is not only the loudest card around, but it also possesses a shrill and quite annoying sound quality. In contrast, the X1950 XTX is not overly loud even during testing when the fan runs at full speed, and the sound is not as painful to hear. We are also delighted to find that ATI no longer spins the fan at full speed until the drivers load. After the card spins up, it remains quiet until it gets hot. ATI has upgraded their onboard fan header to a 4-pin connection (following in the footsteps of NVIDIA and Intel), allowing them finer-grained control over their fan speed.

While the X1950 XTX is not as quiet as NVIDIA's 7900 GTX solution, it is absolutely a step in the right direction. That's not to say there aren't some caveats to this high end launch.

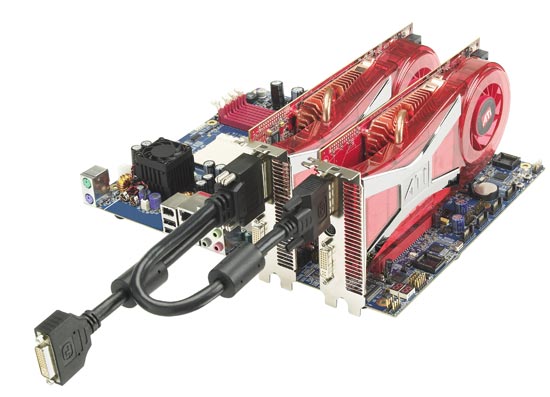

Even before the introduction of SLI, every NVIDIA GPU had the necessary components to support multiple GPU configurations in silicon. With the addition of an "over the top" SLI bridge connector, nearly every NVIDIA card sold is capable of operating in multi-GPU mode. While lower end ATI products don't require anything special to work in tandem, the higher end products have needed a special "CrossFire" branded card with an external connector and dongle capable of receiving data from a slave card.

While this isn't necessarily a bad solution to the problem, it is certainly less flexible than NVIDIA's implementation. In the past, running a high end multi-GPU ATI configuration required a CrossFire card that was both more expensive and clocked lower than ATI's highest speed cards. With the introduction of X1950 CrossFire, we finally have an ATI multi-GPU solution available at the highest available clock speed offered and at the same price as a non-CrossFire card.

While this may not be a problem for us, it might not end up making sense for ATI in the long run. Presumably, they will see higher margins from the non-CrossFire X1950 card, but the consumer will see no benefit from staying away from CrossFire. (Note that the CrossFire cable still offers a second DVI port.) In fact, the benefits of having a CrossFire version are fairly significant in the long run. As we mentioned, two CrossFire cards can be used together in CrossFire with no problem, and each card could also be used as a master in another system, offering greater flexibility and a higher potential resale value in the future.

If the average consumer realizes the situation for what it is, we could see some bumps in the road for ATI. It's very likely that we will see lower availability of CrossFire cards, as the past has shown a lower demand for such cards. Now that ATI has taken the last step in making their current incarnation of multi-GPU technology as attractive and efficient as possible, we wouldn't be surprised if demand for CrossFire cards comes to completely eclipse demand for the XTX. If demand does go up for the CrossFire cards, ATI will either have a supply problem or a pricing problem. It will be very interesting to watch the situation and see which it will be.

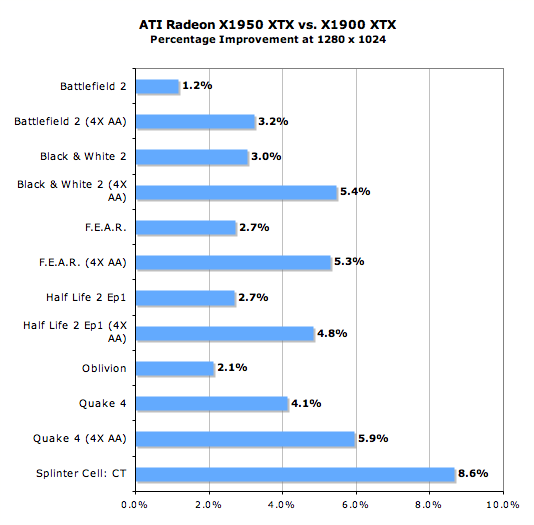

Before we move on to the individual game tests, let's take a look at how the X1950 XTX stacks up against its predecessor, the X1900 XTX. The comparison covers the performance difference between the two cards at 1280 x 1024, 1920 x 1440, and 2048 x 1536.

For our 29% increase in memory clock speed, we are able to gain at most an 8.5% performance increase in SC:CT. This actually isn't bad for just a memory clock speed boost. Battlefield 2 without AA took home the least improvement with a maximum of 2.3% at our highest resolution.
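To put those percentages in perspective, here is a rough sketch of the arithmetic in Python. The memory clocks (775MHz for the X1900 XTX and 1000MHz for the X1950 XTX) are assumptions taken from the cards' published specifications rather than from this page, and the "scaling efficiency" ratio is simply our own illustrative way of relating frame rate gain to memory clock gain, not a standard metric.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


def scaling_efficiency(perf_gain_pct: float, clock_gain_pct: float) -> float:
    """Fraction of the clock increase that shows up as extra performance."""
    return perf_gain_pct / clock_gain_pct


# Assumed memory clocks in MHz (published specs, not stated in this article).
X1900_XTX_MEM_MHZ = 775.0
X1950_XTX_MEM_MHZ = 1000.0

mem_gain = percent_increase(X1900_XTX_MEM_MHZ, X1950_XTX_MEM_MHZ)

# Performance gains quoted in the text above.
best_case_gain = 8.5    # SC:CT, largest improvement seen
worst_case_gain = 2.3   # Battlefield 2 without AA, at the highest resolution

print(f"Memory clock increase: {mem_gain:.1f}%")
print(f"SC:CT scaling efficiency: {scaling_efficiency(best_case_gain, mem_gain):.2f}")
print(f"BF2 (no AA) scaling efficiency: {scaling_efficiency(worst_case_gain, mem_gain):.2f}")
```

Under these assumptions, even the best case turns less than a third of the extra memory clock into extra frame rate, which fits with these games being only partly limited by memory bandwidth on this hardware.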

Our DirectX games seem to show a consistently higher performance improvement with AA enabled due to memory speed. This is in contrast to our OpenGL games (Quake 4 and F.E.A.R.) which show a pretty constant percent improvement at each resolution with AA enabled while scaling without AA improves as resolution increases. Oblivion improvement seems to vary between 2% and 5%, but this is likely due to the variance of our benchmark between runs.

74 Comments

SixtyFo - Friday, September 15, 2006

So do they still use a dongle between the cards? If you had 2 xfire cards then it won't be connecting to a dvi port. Is there an adaptor? I guess what I'm asking is are you REALLY sure I can run 2 crossfire ed. x1950s together? I'm about to drop a grand on video cards so that piece of info may come in handy.

unclebud - Friday, September 1, 2006

"And 10Mhz beyond the X1600 XT is barely enough to warrant a different pair of letters following the model number, let alone a whole new series starting with the X1650 Pro."

nvidia has been doing it for years with the 4mx/5200/6200/7300/whatever and nobody here said boo!

hm.

SonicIce - Thursday, August 24, 2006

How can a whole X1900XTX system use only 267 watts? So a 300w power supply could handle the system?

DerekWilson - Saturday, August 26, 2006

generally you need something bigger than a 300w psu, because the main problem is current supply on both 12v rails must be fairly high.

Trisped - Thursday, August 24, 2006

The crossfire card is not the same as the normal one. The normal card also has the extra video out options. So there is a reason to buy the one to team up with the other, but only if you need to output to a composite, s-video, or component.

JarredWalton - Thursday, August 24, 2006

See discussion above under the topic "well..."

bob4432 - Thursday, August 24, 2006

why is the x1800xt left out of just about every comparison i have read? for the price you really can't beat it....

araczynski - Thursday, August 24, 2006

...I haven't read the article, but i did want to just make a comment...having just scored a brand new 7900gtx for $330 shipped, it feels good to be able to see the headlines for articles like this, ignore them, and think "...whew, i won't have to read anymore of these until the second generation of DX10's comes out..."

I'm guessing nvidia will be skipping the 8000's, and 9000's, and go straight for the 10,000's, to signal the DX10 and 'uber' (in hype) improvements.

either way, its nice to get out of the rat race for a few years.

MrJim - Thursday, August 24, 2006

Why no Anisotropic filtering tests? Or am i blind?

DerekWilson - Saturday, August 26, 2006

yes, all tests are performed with at least 8xAF. Under games that don't allow selection of a specific degree of AF, we choose the highest quality texture filtering option (as in BF2 for instance).

AF comes at fairly little cost these days, and it just doesn't make sense not to turn on at least 8x. I wouldn't personally want to go any higher without angle independent AF (like the high quality af offered on ATI x1k cards).