ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST - Posted in GPUs

R580 Architecture

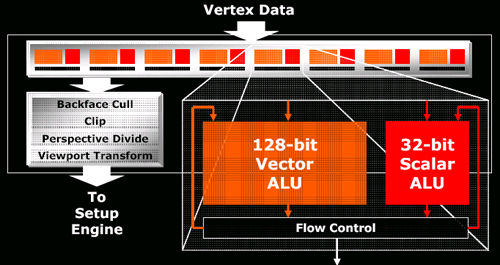

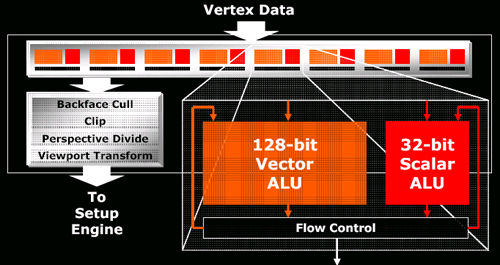

The architecture itself is not that different from the R520 series. There are a couple of tweaks that found their way into the GPU, but these consist mainly of the same improvements made to the RV515 and RV530 over the R520 thanks to their longer lead time (the only reason all three parts arrived at nearly the same time was a bug that delayed the R520 by a few months). For a quick look at what's under the hood, here's the R520 and R580 vertex pipeline:

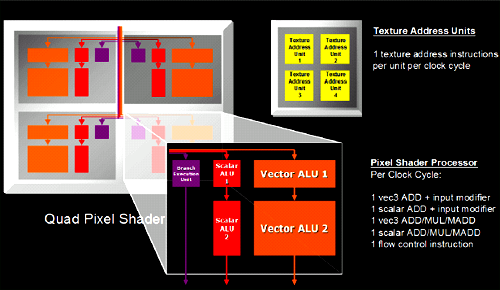

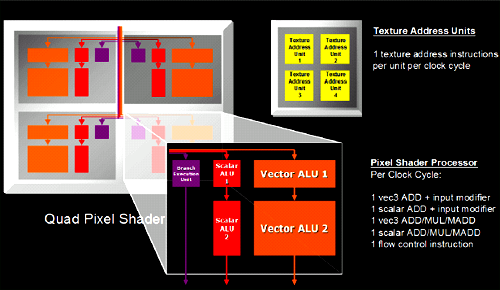

and the internals of each pixel quad:

The real feature of interest is the ability to load and filter four texels at once from a single-channel texture map. Textures that describe color generally have four components at every location, and normally the hardware will load an address from a texture map, split the four channels, and filter them independently. In cases where single-channel textures are used (ATI likes to use the example of a shadow map), the R520 will look up the appropriate address and filter the single channel, letting the hardware's ability to filter three other components go to waste. With what ATI calls its Fetch4 feature, the R580 is capable of loading three other adjacent single-channel values from the texture and filtering these at the same time. This effectively loads and filters four times the texture data when working with single-channel formats. Traditional color textures, or textures describing vector fields (which make use of more than one channel per position in the texture), will not see any performance improvement, but for some soft shadowing algorithms the performance increase could be significant.
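To make the Fetch4 idea concrete, here is a minimal Python sketch of a 2x2 percentage-closer shadow test done two ways: with four separate single-channel fetches, and with one Fetch4-style fetch that returns the four adjacent texels together. The function names (`fetch_single`, `fetch4`, `pcf`), the clamp-to-edge addressing, and the order of the returned texels are assumptions made for illustration; the article does not describe how the real hardware orders or addresses the four values.

```python
def clamp(value, low, high):
    """Clamp an integer coordinate to the valid texel range."""
    return max(low, min(value, high))


def fetch_single(tex, x, y):
    """One texture instruction: return a single texel (clamp-to-edge, assumed)."""
    height, width = len(tex), len(tex[0])
    return tex[clamp(y, 0, height - 1)][clamp(x, 0, width - 1)]


def fetch4(tex, x, y):
    """One Fetch4-style instruction: return the 2x2 block starting at (x, y).

    The ordering of the four values is hypothetical.
    """
    return (
        fetch_single(tex, x, y),
        fetch_single(tex, x + 1, y),
        fetch_single(tex, x, y + 1),
        fetch_single(tex, x + 1, y + 1),
    )


def pcf(depths, receiver_depth):
    """Percentage-closer filter: fraction of samples that leave the pixel lit."""
    lit = [1.0 if d >= receiver_depth else 0.0 for d in depths]
    return sum(lit) / len(lit)


# A tiny single-channel shadow map (depth as seen from the light).
shadow_map = [
    [0.2, 0.9, 0.9],
    [0.2, 0.9, 0.9],
    [0.2, 0.2, 0.9],
]

offsets = ((0, 0), (1, 0), (0, 1), (1, 1))

# Without Fetch4: four texture instructions, one per sample.
single_samples = [fetch_single(shadow_map, x, y) for x, y in offsets]

# With Fetch4: one texture instruction returns the same four samples.
quad_samples = fetch4(shadow_map, 0, 0)

print("instructions without Fetch4:", len(offsets))
print("instructions with Fetch4:   ", 1)
print("shadow factor:", pcf(quad_samples, 0.5))  # 0.5
```

Both paths produce the same four depth values, so the filtered result is identical; the only difference in this toy model is how many texture instructions were needed to gather them.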

That's really the big news in feature changes for this part. The actual meat of the R580 comes in something Tim Allen could get behind with a nice series of manly grunts: More power. More power in the form of a 384 million transistor 90nm chip that can push 12 quads (48 pixels) worth of data around at a blisteringly fast 650MHz. Why build something different when you can just triple the hardware?

To be fair, it's not a straight tripling of everything, and it works out to look more like four X1600 parts than three X1800 parts. The proportions match what we see in the current midrange part: in ATI's view, all you need for efficient processing of current games is a three to one ratio of pixel pipelines to render backends or texture units. When the X1000 series initially launched, we did look at the X1800 as a part that had as much crammed into it as possible, while the X1600 was a little more balanced. Focusing on pixel horsepower makes more efficient use of texture and render units when processing complex and interesting shader programs. If we see more math going on in a shader program than texture loads, we don't need enough hardware to load a texture every single clock cycle for every pixel when we can queue them up and aggregate requests in order to keep available resources busy more consistently. Since the hardware already has to hide the latency of texture loads (even going to local video memory isn't instantaneous yet), the machinery for handling this situation is already in place.
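The "four X1600s rather than three X1800s" comparison can be checked with a little arithmetic. The sketch below is a rough model, not a description of ATI's scheduler: the unit counts for the X1600 (12 pixel pipes, 4 texture units) and X1800 (16 and 16) are assumptions taken from the published specs rather than from this page, the X1900's 16 texture units follow from the 48 pixel pipes and the three to one ratio described above, and `cycles_per_batch` is a hypothetical best-case bound that ignores latency, bandwidth, and render backends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuConfig:
    name: str
    pixel_pipes: int
    texture_units: int

    def ratio(self):
        """Pixel pipes per texture unit."""
        return self.pixel_pipes / self.texture_units

    def scaled(self, factor, name):
        """A hypothetical part built from `factor` copies of this one."""
        return GpuConfig(name, self.pixel_pipes * factor, self.texture_units * factor)


# Unit counts are assumptions for illustration (see the note above).
X1600 = GpuConfig("X1600", 12, 4)
X1800 = GpuConfig("X1800", 16, 16)
X1900 = GpuConfig("X1900", 48, 16)


def cycles_per_batch(gpu, pixels, math_ops, tex_ops):
    """Best-case cycles for a batch: whichever unit type is the bottleneck."""
    math_cycles = pixels * math_ops / gpu.pixel_pipes
    tex_cycles = pixels * tex_ops / gpu.texture_units
    return max(math_cycles, tex_cycles)


four_x1600 = X1600.scaled(4, "4 x X1600")
three_x1800 = X1800.scaled(3, "3 x X1800")

for gpu in (X1600, X1800, X1900, four_x1600, three_x1800):
    print(f"{gpu.name:10s} pipes={gpu.pixel_pipes:2d} "
          f"tex={gpu.texture_units:2d} ratio={gpu.ratio():.0f}:1")

# A shader with three math ops per texture load (6 math, 2 texture).
for gpu in (X1800, X1900, three_x1800):
    print(gpu.name, cycles_per_batch(gpu, pixels=4800, math_ops=6, tex_ops=2))
```

Under these assumptions, the X1900 finishes the math-heavy batch in the same number of cycles as a literal tripling of the X1800, despite having a third of the texture units, which is the efficiency argument the paragraph above is making.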

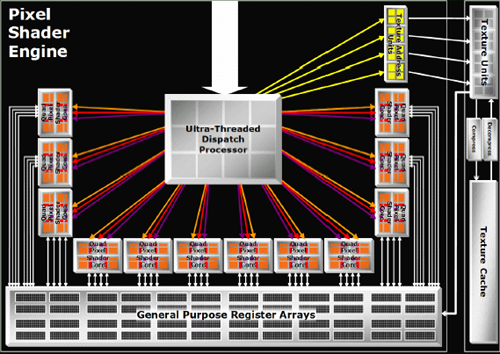

Other than keeping the number of texture and render units the same as the X1800 (giving the X1900 the same ratio of math to texture/fill rate power as the X1600), there isn't much else to say about the new design. Yes, they increased the number of registers in proportion to the increase in pixel power. Yes, they increased the width of the dispatch unit to compensate for the added load. Unfortunately, ATI declined to allow us to post the HDL code for their shader pipeline, citing some ridiculous notion that their intellectual property has value. But we can forgive them for that.

This handy comparison page will have to do for now.

120 Comments

bob4432 - Thursday, January 26, 2006 - link

Good for ATI, after some issues in the not so distant past it looks like the pendulum has swung back in their direction. I really like this, it should drop the 7800GT prices down maybe to the ~$200-$220 (hoping, as nvidia want to keep the market hold...) which would actually give me a reason to switch to some flavor of pci-e based m/b, but being display limited @ 1280x1024 with a lcd, my x800xtpe is still chugging along nicely :)

Spoelie - Thursday, January 26, 2006 - link

it won't, they're in a different price range altogether, prices on those cards will not drop before ati brings out a capable competitor to it.

neweggster - Thursday, January 26, 2006 - link

How hard would it be for this new series of cards by ATI to be optimized for all benchmarking software? Well ask yourself that, I just got done talking to a buddy of mine who's working out at MSI. I swear I freaked out when he said that ATI is using an advantage they found by optimizing the new R580's to work better with the newest benchmarking programs like 3Dmark 06 and such. I argued with him that's impossible, or is it? Please let me know, did ATI possibly use optimizations built into the new R580 cards to gain this advantage?

Spoelie - Thursday, January 26, 2006 - link

how would validating die-space on a gpu for cheats make any sense? If there is any cheat it's in the drivers. And no, the only thing is that 3dmark06 needs 24bit DST's for its shadowing and that wasn't supported in the x1800xt (uses some hack instead) and it is supported now. Is that cheating? The x1600 and x1300 have support for this as well btw, and they came out at the same time as the x1800.

Architecturally optimizing for one kind of rendering being called a cheat would make nvidia a really bad company for what they did with the 6x00/Doom3 engine. But no one is complaining about higher framerates in those situations now are they?

Regs - Thursday, January 26, 2006 - link

....Where in this article do you see a 3D Mark score?

mi1stormilst - Thursday, January 26, 2006 - link

It is not impossible, but unless your friend works in some high level capacity I would say his comments at best are questionable. I don't think working in shipping will qualify him as an expert on the subject?

coldpower27 - Wednesday, January 25, 2006 - link

http://www.anandtech.com/video/showdoc.aspx?i=2679...

"Notoriously demanding on GPUs, F.E.A.R. has the ability to put a very high strain on graphics hardware, and is therefore another great benchmark for these ultra high-end cards. The graphical quality of this game is high, and it's highly enjoyable to watch these cards tackle the F.E.A.R demo."

Wasn't use of this considered a bad idea as Nvidia cards have a huge performance penalty when used in this and the final build was supposed to be much better???

photoguy99 - Wednesday, January 25, 2006 - link

I noticed 1920x1440 is commonly benchmarked -

Wouldn't the majority of people with displays in this range have 1920x1200 since that's what all the new LCDs are using? And it's the HD standard.

Aren't LCDs getting to be pretty capable game displays? My 24" Acer has a 6 ms (claimed) gray to gray response time, and can at least hold its own.

Resolution for this monitor and almost all others this large: 1920x1200 - not 1920x1440.

Per Hansson - Wednesday, January 25, 2006 - link

Doing the math: Crossfire (459w) - 1900XTX (341w) = 118w. Efficiency of PSU used @ 400w = 78%, so 118 x 0.78 = 92.04w

Per Hansson - Friday, January 27, 2006 - link

No replies huh? Cause I've read on other sites that the card draws up to 175w... Seems like quite a stretch so that was why I did the math to start with...