F.E.A.R. GPU Performance Tests: Setting a New Standard

by Josh Venning on October 20, 2005 9:00 AM EST. Posted in GPUs

The Failure of Soft Shadows and Parallax Mapping

Soft Shadows

We've seen how soft shadows can be used effectively in games like The Chronicles of Riddick, and Monolith has decided to add them as an option in FEAR. Used correctly, soft shadows greatly enhance a game's lighting by giving the shadows cast by objects varying levels of darkness, which makes them more realistic. The process can consume a lot of processing power, however, and FEAR is no exception.

Basically, soft shadows are an effect that shows how shadows tend to fade at the edges or cast overlapping outlines on walls or objects, depending on factors such as light angle and distance. If you've ever made a shadow puppet, you've seen this clearly: multiple outlines of your hand's shadow overlap on the wall with varying degrees of darkness (depending on the light source). And if you move your hand closer to or farther from the light, you can see how the soft shadows change dynamically.

The idea is to capture this effect in a game environment, but as any programmer knows, translating it to a game engine can be a very complex undertaking. Not only that, but as we mentioned before, calculating multiple shadows in real time can quickly become a major drain on processing power. With FEAR, we've seen how big a performance hit we took when we enabled soft shadows, but you may wonder, "does the effect at least look good?"

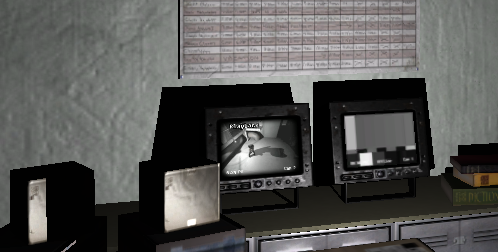

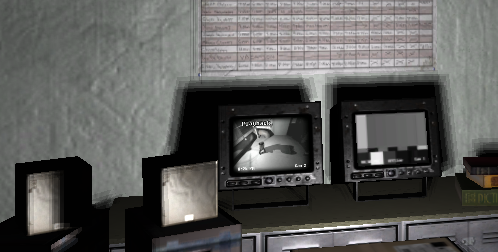

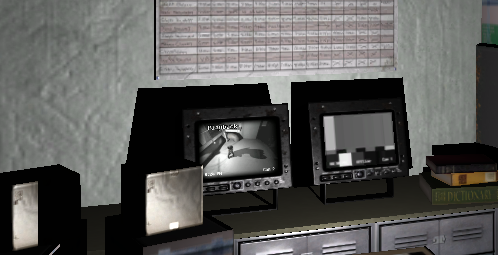

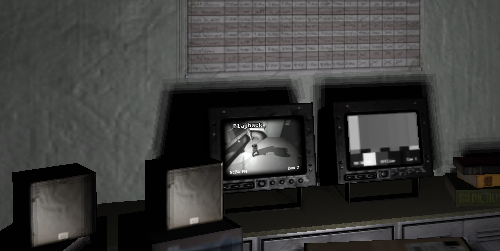

The short answer is "no". The way that FEAR incorporates soft shadows ends up looking unrealistic: more stratified and strange than soft. It looks as though the game simply draws multiple shadows at the edges of objects and offsets them up, down, left and right very slightly at different degrees of darkness, regardless of the light source. This wouldn't be so bad if the multiple shadows were not readily noticeable as such. It also would have been nice if the "blur factor" were more dynamic; in other words, if the shadows moved closer together or farther apart depending on where the object (say, an enemy soldier) is in relation to the light sources and shadowed surfaces.

This is difficult to understand until you see it happening in the game, but you can get a better idea of it by looking at a few pictures. We took some screenshots of a scene with and without soft shadows enabled on both NVIDIA and ATI cards. Please ignore the slight lighting and position differences between these screenshots.
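To make the "blur factor" point concrete, here is a minimal Python sketch of the standard similar-triangles estimate for penumbra width, set beside a constant blur. It is not FEAR's shadow code, which we have not seen. The function names, the `FIXED_OFFSET` value, and the scene distances are all assumptions chosen for illustration.

```python
def penumbra_width(light_size, blocker_dist, receiver_dist):
    """Estimate penumbra width from similar triangles.

    All arguments are hypothetical scene quantities in the same length unit:
    light_size    -- diameter of the (area) light source
    blocker_dist  -- distance from the light to the shadow-casting object
    receiver_dist -- distance from the light to the shadowed surface
    """
    if light_size < 0 or not 0 < blocker_dist <= receiver_dist:
        raise ValueError(
            "expected light_size >= 0 and 0 < blocker_dist <= receiver_dist"
        )
    return light_size * (receiver_dist - blocker_dist) / blocker_dist


# Assumed for illustration only: a constant blur that ignores scene geometry,
# similar in spirit to shadows offset by a fixed amount in each direction.
FIXED_OFFSET = 0.05


def fixed_blur(light_size, blocker_dist, receiver_dist):
    """Return the same blur width no matter where the object stands."""
    return FIXED_OFFSET


if __name__ == "__main__":
    # Move a soldier from near the light toward the wall (wall is 4 units away).
    for blocker in (1.0, 2.0, 3.5):
        dynamic = penumbra_width(0.5, blocker, 4.0)
        constant = fixed_blur(0.5, blocker, 4.0)
        print(f"blocker at {blocker}: dynamic={dynamic:.3f} constant={constant:.3f}")
```

With the dynamic estimate, the shadow edge widens as the object approaches the light and tightens to nothing as it touches the wall, which is the behavior of the shadow puppet described earlier. The constant version never changes.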

Parallax Mapping

While the detailed textures, excellent lighting, well-done static and dynamic shadows (in spite of the soft shadow issue), large intricate particle systems, and various beautiful effects of FEAR come together to form an immersive and fluid graphical experience, there are a few caveats. To their credit, Monolith was very aggressive with the features that they included, and they are on the leading edge of technology. The use of a deep parallax mapping algorithm to represent damage is a very cool idea, but the implementation used in FEAR doesn't include key features such as self-occlusion and self-shadowing. When you pass a wall with a chunk blown out, the hole will swim around, flatten out, and eventually look like unidentifiable goo stuck to the wall as the angle gets very steep.

The parallax mapping used looks great from angles where the entire interior of a hole can be seen. The problem occurs at viewing angles where a near edge would need to block the view of part (or all) of the interior of the indentation. Rather than being occluded, parts of the texture that should become invisible are still shown (albeit distorted). This completely destroys the illusion of depth at steep angles by making the texture swim until it totally loses its three-dimensionality. There are algorithms available that can correctly represent self-occlusion in parallax mapping. While we can appreciate cheaper parallax mapping algorithms as a kind of upgraded bump mapping, dramatic surface deformation should either be done more correctly or not at all in cases where the viewer can move to angles that break the effect.
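The difference between a cheap parallax lookup and one that stops at the first surface the view ray hits can be sketched in a few lines of Python. This is a one-dimensional toy, not FEAR's shader. The depth map `hole_depth`, the `shift` parameter (depth scale times the tangent of the viewing angle), and both function names are assumptions made for illustration. The second function is a simple layer-stepping ray march in the style of steep parallax mapping.

```python
def hole_depth(u):
    """Hypothetical 1D depth map: 0.0 on the flat wall, 1.0 inside a hole."""
    return 1.0 if 0.4 <= u <= 0.6 else 0.0


def offset_parallax(depth, u0, shift):
    """Single-sample parallax: shift the lookup by the depth read at the entry point.

    Nothing checks whether geometry lies between the eye and the shifted
    position, so at steep angles the lookup can slide right out of the hole.
    """
    return u0 + shift * depth(u0)


def ray_marched_parallax(depth, u0, shift, steps=32):
    """Step the view ray down through depth layers; stop at the first surface hit.

    Returns (u, t): the texture coordinate and layer depth of the hit.
    """
    for i in range(steps + 1):
        t = i / steps            # current layer depth, 0.0 (surface) to 1.0 (deepest)
        u = u0 + shift * t       # where the ray is at that depth
        if depth(u) <= t:        # ray has reached or passed below the surface
            return u, t
    # Only reachable if the depth map returns values above 1.0.
    return u0 + shift, 1.0


if __name__ == "__main__":
    # The ray enters over the hole at u0 = 0.45, viewed at a steep angle (shift = 0.5).
    print("offset parallax samples u =", offset_parallax(hole_depth, 0.45, 0.5))
    print("ray march hits (u, t) =", ray_marched_parallax(hole_depth, 0.45, 0.5))
```

In this toy scene the single-sample version jumps to about u = 0.95, well outside the hole, while the ray march stops near u = 0.606 on the hole's far side wall at roughly a third of the full depth. Viewed head-on (`shift = 0`), both agree, which matches the observation that the effect looks fine when the whole interior is visible.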

But again, we would love to give credit where credit is due. We would rather see game developers experiment with new technology and put something out there than let the true power of our graphics cards remain dormant. Monolith was ahead of the curve with the graphics in Tron 2.0, and they haven't let us down with the quality of FEAR.

117 Comments

giles00 - Tuesday, October 25, 2005 - link

I thought the bit-tech review was more relevant - they actually sat down and played the game, proving that the inbuilt graphics test doesn't bare any representation on real game play. http://www.bit-tech.net/gaming/2005/10/24/fear/1.h...

here's a good quote:

lindy01 - Sunday, October 23, 2005 - link

Having to upgrade your hardware to the latest and greatest to get good looking games is crazy. In the last two years its spun out of control. My 9700pro lasted the longest, then I bought a 6800GT for $359.....my next purchase is a xbox360 for $399....I am sure if they make a version of Fear for it it will look great on my 50inch HD Sony TV. Ahhh and it probably wont ship full of bugs.

Dam if it were not for PC games my system would be a 1ghz P3 with 512megs of ram!

Regs - Monday, October 24, 2005 - link

I'm a little reluctant too this year to upgrade. The worse thing about it is when they make a good optimized graphics engine , like HL2's & Far Cry's, they dont seem to last very long. I expected at least a few other developers to make good quality games with them but that never happened. So the end result is that you're upgrading your PC for 2 or 3 titles a year. ID's OpenGL based engine was the only real "big" seller with Riddick and Quake 4 thankfully. Plus if you include the problem that if the games turned out not to be in your liking you're stuck with 1000 dollars worth of useless hardware.

CronicallyInsane - Sunday, October 23, 2005 - link

I got the game, installed the new patch, and have been running @ 1024x768 just fine with the majority of the goodies on. Given, no soft shadows, and on 4x rather than 8x or 16x, but it looks beautiful to me.

2.4Gig Northwood @ 3 gig

1 gig pc3200

Raptor 36G

6600gt agp @ 550/1.1

Try it before you bash it, ya know?

d

carl0ski - Sunday, October 23, 2005 - link

I am starting to think technology sites are forgeting they are to be reviewing the game. Not VIDEO cards.

So What the hell is this (not yet available) doing in an article helping us decide to buy a game?

we want to buy the game knowing whether it will run on what people own.

Geforce TI's

ATI 9800XT

ATI Radeon X1300 Pro

ATI Radeon X800 GT

NVIDIA GeForce 7800 GTX

NVIDIA GeForce 7800 GT

NVIDIA GeForce 6800 GT

NVIDIA GeForce 6600 GT

etc

AS that is what the mainstream/people own already

We want to know if it'll run on our own computers!!

simple as that.

How many of the 1,000,000's of copies a game is sold on do people run on $400 current generation Cards?

probably only a small percentage

most people i know who bought BF2 use cards ranging 6 months - 2 year old cards.

yacoub - Sunday, October 23, 2005 - link

Any chance this will actually happen? http://forums.anandtech.com/messageview.aspx?catid...

Regs - Saturday, October 22, 2005 - link

You make reference to how the soft shadows are implemented to Riddick compared to FEARs yet I searched the site and there is no benchmarks or IQ comparisons of Riddick. If you asked me that's a major problem considering you have no evidence published to back up your own statement.

Jeff7181 - Saturday, October 22, 2005 - link

This should be a game review... not a GPU review. Review the game, play the game how you'd actually play it... with sound enabled. THEN show us the FPS measurements.

yacoub - Saturday, October 22, 2005 - link

A large number of Anandtech readers do not comprehend anything other than "GPU review" so you will likely not see a true game review anytime soon on a realistic rig. It's always only ever a GPU test with an FX-55. =/

yacoub - Saturday, October 22, 2005 - link

You include the 6600GT and 6800GT but not the X800XL and X800XT, the two comparable cards. Stop with the 1800-series nonsense and post the BUYABLE ATI cards as well please! Would be nice for those of us considering upgrading to an X800XL or 6800GT to see how they stand up in FEAR. :(