NVIDIA GeForce 7800 GT: Rounding Out The High End

by Derek Wilson & Josh Venning on August 11, 2005 12:15 PM EST - Posted in GPUs

Half-Life 2 Performance

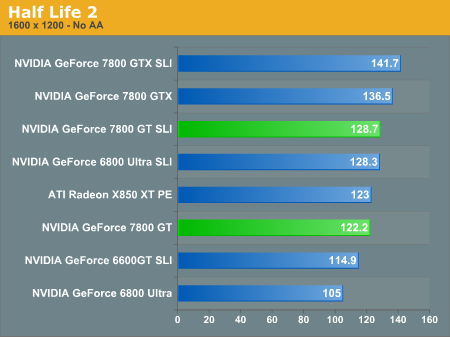

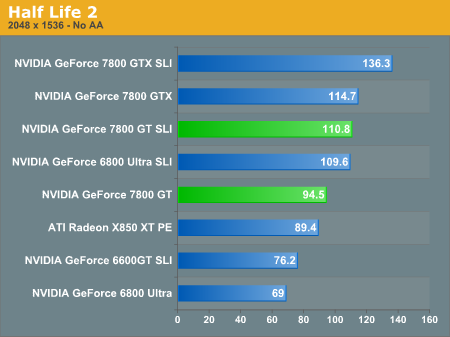

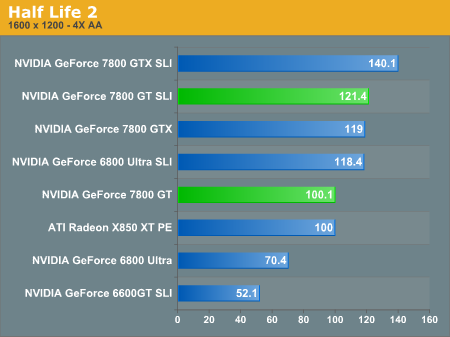

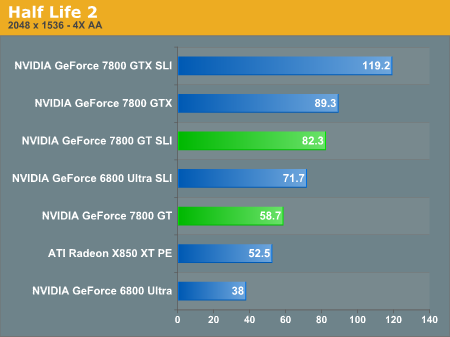

Half-Life 2, another graphically-intensive game, should see lots of improvement as well. Unlike Doom3, Half-Life 2 gets playable framerates at the higher resolutions with AA enabled on the 6800 Ultra, but you will still see a sizeable improvement with an upgrade to the 7800 GT.

Without AA, we see a modest 15% gain at 16x12, and 37% gain at 20x15 with the 7800 GT over the 6800U. With AA enabled, 16x12 gets a 42% gain and 20x15 gets 54.5%, showing that Half-Life 2 does a slightly better job with AA than Doom3.

Again, we see much larger gains with the 6800 Ultra in SLI configurations. The highest increase is 88.7% at 20x15 with AA enabled, about a 30 fps increase. 16x12 AA gets a 68% increase, and without AA, there's about a 22% increase at 16x12, and 59% increase at 20x15. This is more evidence for the superiority of the 6800U SLI over the 7800 GT, performance-wise, but whether or not it's worth the price as well as the extra power demands is debatable. Also note that other than 20x15 4xAA, the 7800 GT SLI and 6800 Ultra SLI are nearly the same performance. The extra memory bandwidth of the 6800 Ultra seems to help it match the additional processing power in quite a few tests.
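As a rough sanity check on the figures quoted above, the 88.7% gain and the "about 30 fps" increase together imply an approximate baseline framerate. The sketch below only rearranges the percent-gain formula; the helper names are hypothetical, and because the 30 fps figure is itself rounded, the results are estimates rather than measured values.

```python
def percent_gain(baseline_fps, new_fps):
    """Percent improvement of new_fps over baseline_fps."""
    return (new_fps - baseline_fps) / baseline_fps * 100.0


def baseline_from_gain(gain_percent, fps_increase):
    """Baseline framerate implied by a percent gain and an absolute fps increase."""
    return fps_increase / (gain_percent / 100.0)


# Figures quoted in the text: 88.7% gain, "about a 30 fps increase" (approximate).
baseline = baseline_from_gain(88.7, 30.0)
faster = baseline + 30.0

print(f"implied baseline: {baseline:.1f} fps")
print(f"implied result:   {faster:.1f} fps")
print(f"check:            {percent_gain(baseline, faster):.1f}% gain")
```

Under these assumptions, the baseline works out to somewhere in the low-to-mid 30s of frames per second at 20x15 with AA.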

77 Comments

IdBuRnS - Tuesday, November 29, 2005

I just placed an order for an eVGA 7800GT with their free SLI motherboard. This will be replacing my ATI X800 Pro and MSI K8N Neo2 Platinum.

A554SS1N - Tuesday, September 6, 2005

Ok, now for my views on the 7600GT... The 6600GT had exactly half the pixel pipelines and memory bus of the 6800GT/Ultra, and this makes me think the 7600GT will be the same in relation to the 7800GTX. To add weight to this theory, a 128-bit bus would be much cheaper to produce, and with a smaller die size, the chip would be more economical and cooler. By being cooler, smaller fans can be used, saving more money. Also, NVidia would probably want to keep the PCB smaller for mainstream components (something that I would like myself).

So basically, my suggestion is that the 7600GT would be a 12-pipe, 128-bit card, probably with those 12 pipes matched to 8 ROPs (like the 6600GT was 8-pipe matched to 4 ROPs). Around 5 or 6 vertex pipelines would sound about right too. If the core were at 450MHz or even 500MHz with 12 pipes, and paired with 1100MHz memory, it would likely turn out somewhere in between a 6800 and 6800GT in performance, but importantly, would be:

- Cooler

- Potentially Quieter

- More energy efficient

- Smaller PCB

- Potentially cheaper

- Easier to produce, therefore able to provide lots of cores to the mass market

Just my opinions, but I can believe in a 12 pipe card more than 16 pipe mainstream card which I consider to be a "pipe dream".
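The speculation above can be put into rough numbers with the usual theoretical throughput estimate of one pixel per pipeline per clock. This is only a sketch: the function name is hypothetical, the 6800 and 6800GT reference clocks (325MHz and 350MHz) are commonly quoted figures assumed here rather than taken from this article, and the estimate ignores the ROP count and the 128-bit memory bus, both of which would hold real performance back.

```python
def pipeline_throughput_mpix(pipelines, core_mhz):
    """Theoretical peak in Mpixels/s, assuming one pixel per pipeline per clock."""
    return pipelines * core_mhz


# Hypothetical 12-pipe part at the two core clocks suggested in the comment.
for core_mhz in (450, 500):
    rate = pipeline_throughput_mpix(12, core_mhz)
    print(f"12 pipes @ {core_mhz} MHz: {rate} Mpixels/s")

# ASSUMED reference configurations (not stated in the article or comments).
assumed_reference = {
    "6800 (12 pipes @ 325 MHz)": (12, 325),
    "6800GT (16 pipes @ 350 MHz)": (16, 350),
}
for name, (pipes, mhz) in assumed_reference.items():
    print(f"{name}: {pipeline_throughput_mpix(pipes, mhz)} Mpixels/s")
```

On paper the raw pipeline throughput would be in 6800GT territory, so the narrower memory bus would presumably be what pulls such a card back toward the suggested "between a 6800 and 6800GT" range.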

Pythias - Friday, August 12, 2005

You know it's time to quit gaming when you have to have a card that costs as much as a house payment and a PSU that could power a small city to run it.

smn198 - Friday, August 12, 2005

Marketing

DerekWilson - Friday, August 12, 2005

We stand corrected ... After reading the comments on this article, it is abundantly clear that your suggestion would be a compelling reason to release lower-end G70 parts.

Evan Lieb - Friday, August 12, 2005

I think maybe some of you are taking the article a little too seriously. Most hardware articles nowadays are geared toward high-end tech for good reason; it's interesting technology and a lot of people want to read about it. It's useful information to a lot of people, and a lot of people are willing to pay for it. You want entry-level and mid-range video reviewed too? That's fine, but you'll have to wait like everyone else; AT can't force NVIDIA to push out their 7xxx entry-level/mid-range tech any faster. When it's ready you'll probably see some type of review or roundup.

Regs - Thursday, August 11, 2005

Well, there is still no AGP option for 939 AGP owners, and the performance difference between the Ultra and GT this year is a lot more significant than last year's. I would hate to spend 500 dollars on a "crippled" 7800 GTX. Not to mention ATI is still a bench warmer in this competition. It just seems like upgrading this year is not even worth it to a 939 AGP owner, no matter how much of a gamer you are. I'm disappointed in the selection this year. Performance is there, but the price/value and inconvenience is above and beyond. Last year was a great time to upgrade, while this year seems more like a money pit with no games to fill it.

bob661 - Friday, August 12, 2005

Next year is probably a better time to upgrade for the AGP owners, I agree. For me, I want a 7600GT. If there will be no such animal, then maybe a 7800GT at Xmas.

dwalton - Thursday, August 11, 2005

I initially agreed with that statement until I thought about 90nm parts. Correct me if I am wrong, but Nvidia has no 90nm parts. While Nvidia's current line of 7xxx and 6xxx parts provides a broad range of performance, I'm sure Nvidia can increase profit margins by producing 90nm parts.

Nvidia can simply take the 6800 GT and Ultra, shrink them to 90nm, and rebadge them as the 7600 vanilla and GT. Since this involves a simple process shrink and no tweaking, these new 90nm chips could possibly be clocked higher and draw less power while increasing profit margins, without the cost of designing new 7600 chips based on the G70 design, making everyone happy.

coldpower27 - Friday, August 12, 2005

I would like G70 technology on 90nm ASAP. I have a feeling Nvidia didn't shift NV40 to 90nm for a reason: that core is still based on AGP technology, and Nvidia currently doesn't have a native PCI-E part for the 6800 line; they are all using the HSI on the GPU substrate from the NV45 design.

NV40 on 0.13 micron is 287mm2, as pointed out by a previous poster. A full optical node shrink from 0.13 micron to 0.09 micron without any changes whatsoever would bring the NV40's 287mm2 die size to ~172mm2, as a full-node optical shrink generally gives a die size of around 60% of the original. This die size may not be enough to maintain a 256Bit Memory Interface.

Hence Nvidia is rumored to be doing only a 0.11 micron process shrink (NV48) of the NV40, as that would bring the core down to about 230mm2, which is 80% of the size. That is still large enough to maintain the 256Bit Memory Interface with little problem.
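The die-size arithmetic in this comment can be reproduced with a short sketch. The 60% and 80% factors are the rules of thumb quoted above; the "ideal" factor is simply the square of the linear feature-size ratio, included as an assumption for comparison and not a claim about what a real shrink achieves. Function names are hypothetical.

```python
NV40_AREA_MM2 = 287.0  # die size quoted in the comment for NV40 on 0.13 micron


def ideal_shrink_factor(old_nm, new_nm):
    """Area ratio if every feature scaled perfectly with the process node."""
    return (new_nm / old_nm) ** 2


def shrunk_area(area_mm2, factor):
    """Die area after applying an area scaling factor."""
    return area_mm2 * factor


# (label, target node in nm, rule-of-thumb factor quoted in the comment)
targets = [("0.09 micron", 90, 0.60), ("0.11 micron", 110, 0.80)]

for label, new_nm, quoted_factor in targets:
    ideal = shrunk_area(NV40_AREA_MM2, ideal_shrink_factor(130, new_nm))
    quoted = shrunk_area(NV40_AREA_MM2, quoted_factor)
    print(f"{label}: ideal ~{ideal:.0f} mm2, quoted rule of thumb ~{quoted:.0f} mm2")
```

The quoted factors are more conservative than the ideal quadratic scaling, which is consistent with the comment's point that not everything on a die shrinks with the node.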

Making a 90nm G7x part for the mainstream segment directly would be very nice.

Let's say it has 16 pipelines and 8 ROPs to help save transistor space, plus the enhanced HDR buffers and Transparency AA. I believe it would be fairly close to the 170mm2 range. It would probably still be limited to a 128Bit Memory Interface, but the use of GDDR3 1.6ns @ 600MHz could help alleviate the bandwidth problems some. Remember, large amounts of memory bandwidth combined with high fillrate are reserved for the higher segments; it's very hard to have your cake and eat it too in the mainstream.
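To put the bandwidth trade-off in numbers, peak memory bandwidth can be estimated as bus width in bytes times the memory clock times two transfers per clock for DDR-type memory. This is a minimal sketch with a hypothetical function name; the 256-bit figure at the same clock is included only for comparison and does not describe any specific card.

```python
def peak_bandwidth_gb_s(bus_width_bits, mem_clock_mhz, transfers_per_clock=2):
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes) for DDR-type memory."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mem_clock_mhz * 1e6 * transfers_per_clock / 1e9


# The 128-bit GDDR3 @ 600MHz configuration suggested in the comment.
print(f"128-bit @ 600 MHz: {peak_bandwidth_gb_s(128, 600):.1f} GB/s")

# Same memory clock on a 256-bit bus, for comparison only.
print(f"256-bit @ 600 MHz: {peak_bandwidth_gb_s(256, 600):.1f} GB/s")

# A 1.6ns memory rating corresponds to a rated clock of 1 / 1.6ns.
print(f"1.6ns rating: {1 / 1.6e-9 / 1e6:.0f} MHz")
```

Halving the bus width halves the theoretical bandwidth at a given clock, which is why faster memory can only partly offset a 128-bit interface.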

Let's face it: for the time being, we're not going to be getting fully functional high-end cores at the 199US price point with a 256Bit Memory Interface. So far we have gotten things like the Radeon X800, Geforce 6800, 6800 LE, X800 SE, X800 GT, etc. It just doesn't seem profitable to do so.

From what we have seen, mainstream parts are usually based on tweaked technology: RV410 (Radeon X700) and NV36 (Geforce FX 5700) are mainstream cores based on the third and second generations of R300 and NV30 technology, respectively.

The 6800 @ 199US, 6800 GT @ 299US, 6800 U @ 399US lineup is a temporary measure, and production should slow on these cards as Nvidia ramps up the 90nm G7x-based parts.