NVIDIA nForce Professional Brings Huge I/O to Opteron

by Derek Wilson on January 24, 2005 9:00 AM EST. Posted in: CPUs

The New nForce Professional

The nForce Professional marks the fifth core logic offering from NVIDIA, which dubs its motherboard chipsets MCPs (for Media and Communications Processors). Never has the MCP moniker been truer than this time around.

Like the Quadro and GeForce lines, the nForce line is supported by NVIDIA's Unified Driver Architecture. This means that no matter what hardware you are running, any driver will work, whether past, present, or future. Since NVIDIA brings its UDA to both Windows and Linux, broad corporate support will be available for nForce Pro upon launch.

NVIDIA has also informed us that they have been validating AMD's dual core solutions on nForce Professional before launch as well. NVIDIA wants its customers to know that it's looking to the future, but the statement of dual core validation just serves to create more anticipation for dual core to course through our veins in the meantime. Of course, with dual core coming down the pipe later this year, the rest of the system can't lag behind.

We've seen several good steps in the connectivity department. At the same time, performance and scalability have become more dependent on core logic as functionality moves over the PCI Express bus, more storage needs SATA connections, and more devices are plugged into USB ports, for example. NVIDIA's unique solution is the combination of single-chip core logic with the ability to drop multiple MCPs (of lesser function) on a motherboard for expanded I/O capabilities.

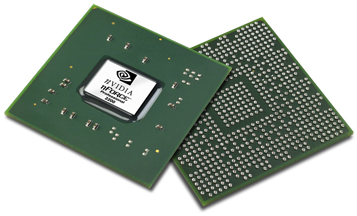

The nForce Professional 2200 MCP

One of the big questions that we first wanted answered was whether or not nForce Pro and nForce 4 Ultra/SLI were the same silicon with different parts turned on/off. NVIDIA maintains that they are different silicon, and it is entirely possible that they are. They did, in fact, give us transistor counts for nForce 4 and nForce Pro:

nForce 4: 22 Million Transistors

nForce Pro: 24 Million Transistors

Economically, it still doesn't make sense to run two different batches of silicon when functionality is so nearly identical, especially when features could just be turned off after the fact. Pro chips don't have the same volume as desktop chips, and desktop chips don't have the same margins as pro silicon. Combining the two allows a company to produce more volume for a single IC (which lowers cost per part) that feeds both high volume and high margin SKUs. Of course, as we saw in our recent article on modding nForce Ultra to SLI, there are some issues with running all your chips from the same silicon. The fact that potential Quadro users have been buying and modding GeForce cards for years speaks to the issue as well. Of course, there's more in a Quadro than just professional performance (build quality and support/service come to mind).

But just because something doesn't make economic sense doesn't mean that we don't want to see it happen. There's just something that doesn't sit right about charging a thousand dollars more for a card that has a few features enabled. We would rather see professional parts be worth their price. Part of that equation is running separate silicon for parts with pro features. We're glad to hear that this is what NVIDIA has said they are doing here.

For now, let's get on to what we do know about the nForce Pro.

55 Comments

smn198 - Friday, January 28, 2005

It does do RAID-5! http://www.nvidia.com/object/IO_18137.html

w00t!

smn198 - Friday, January 28, 2005

#18

It can do RAID-5 according to http://www.legitreviews.com/article.php?aid=152

Near bottom of page:

"Update: NVIDIA contacted us to let us know that RAID 5 is also supported on the 2200 and 2050. They also didn't hesitate to point out that when the 2200 is matched with three 2050's, the RAID array can be spanned across 16 drives!"

However, NVIDIA's site does not mention it! http://www.nvidia.com/object/feature_raid.html

I wonder. Would be nice!

DerekWilson - Friday, January 28, 2005

#50,

Each lane in PCIe consists of a serial uplink and downlink. This means that x16 actually has 4GB/s up and down at the same time (thus the 8GB/s number everyone always quotes). Saying 8GB/s bandwidth without saying 4 up and 4 down is a lil misleading because that bandwidth can't all move in one direction when needed.

#53,

4x SATA 3Gb/s -> 12Gb/s -> 1.5GB/s + 2GbE -> 0.25GB/s + USB 2.0 ~-> .5GB/s = 2.25 GB/s ... so this is really manageable bandwidth. Especially as it's unlikely for all this to be moving while all 5 gig up and down of the 20 PCIe lanes are moving at the same time.

It's more likely that we'll see video cards setting aside 30% of the PCI Express b/w to nearly idle (as, again, upload is often not used). Unless using the 2 x16 SLI ... We're still not quite sure how much bandwidth this will use over the top and through the PCIe bus. But one card is definitely going to send data back up stream.

Each MCP has a 16x16 HT link @ 1GHz to the system... Bandwidth is 8GB/s (4 up and 4 down) ...
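The arithmetic in this reply can be checked with a short sketch. It assumes raw signaling rates (3Gb/s per SATA port, 1Gb/s per Ethernet port, 480Mb/s for each of the 8 USB 2.0 ports mentioned in the question), 250MB/s per direction per first-generation PCIe lane, and a 16-bit double-data-rate HyperTransport link at 1GHz. All variable and function names are illustrative only, and encoding and protocol overhead on the SATA, Ethernet and USB figures is ignored.

```python
# Peak I/O demand on one MCP, using raw signaling rates (an assumption;
# real throughput is lower once encoding and protocol overhead are counted).
BITS_PER_BYTE = 8


def gbytes_per_s(gbits_per_s):
    """Convert gigabits per second to gigabytes per second."""
    return gbits_per_s / BITS_PER_BYTE


sata = gbytes_per_s(4 * 3.0)   # 4 SATA ports at 3 Gb/s each
gbe = gbytes_per_s(2 * 1.0)    # 2 gigabit Ethernet ports
usb = gbytes_per_s(8 * 0.48)   # 8 USB 2.0 ports at 480 Mb/s each (assumed count)
io_total = sata + gbe + usb

# First-generation PCIe: 250 MB/s per lane in each direction.
PCIE_LANE_GBYTES = 0.25
pcie_x16_each_way = 16 * PCIE_LANE_GBYTES
pcie_x20_each_way = 20 * PCIE_LANE_GBYTES

# 16-bit HyperTransport at 1 GHz, double data rate:
# 2 bytes per transfer * 2 gigatransfers per second in each direction.
ht_each_way = 2 * 2.0

print(f"SATA {sata:.2f} + GbE {gbe:.2f} + USB {usb:.2f} = {io_total:.2f} GB/s")
print(f"PCIe x16: {pcie_x16_each_way:.1f} GB/s each way")
print(f"PCIe x20: {pcie_x20_each_way:.1f} GB/s each way")
print(f"HT link:  {ht_each_way:.1f} GB/s each way")
```

Under these assumptions the storage, network and USB traffic comes to roughly 2.23 GB/s, in line with the rounded 2.25 GB/s figure, and fits within the 4 GB/s that the HyperTransport link carries in each direction.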

guyr - Thursday, January 27, 2005

Can anyone explain how these MCPs work regarding throughput? What kind of clock rate do they have? 4 SATA II drives alone is 12 Gbps. Add 2 GigE and that is 14. Throw in 8 USB 2.0 and that's almost an additional 4 Gbps. So if you add everything up, it looks to be over 20 Gbps! Oops, sorry, forgot about 20 lanes of PCIe. Anyway, has anyone identified a realistic throughput that can be expected? These specs are wonderful, but if the chip can only pass 100 MB/s, it doesn't mean anything.

jeromechiu - Thursday, January 27, 2005

#12, if you have a gigabit switch that supports port trunking, then you could use BOTH of the gigabit ports for faster intranet file-transfer. Hell! Perhaps you could add another two 4-port gigabit adaptors and give your PC a sort-of-10Gbps connection to the switch! ;)

philpoe - Wednesday, January 26, 2005

Being a newbie to PCI-E, if I read a PCI-Express FAQ correctly, aren't the x16 slots in use for graphics cards today 1 way only? Too bad the lanes can't be combined, or you could get to a 1-way x32 slot (apparently in the PCI-E spec). In any case, 4 x8 full duplex cards would be just the ticket for Infiniband (making all that GbE worthless?) and 4 x2 slots for good measure :). Just think of 16x SATA-300 drives attached and RAID. Talk about a throughput monster. Imagine Sun, with the corporate-credible Solaris OS, selling such a machine.

DerekWilson - Tuesday, January 25, 2005

#32 henry, and anyone who saw my wrong math :-)

You were right in your setup even though you only mentioned hooking up 4 x1 lanes -- 2 more could have been connected. Oops. I've corrected the article to reflect a configuration that actually can't be done (for real this time, I promise). Check my math again to be sure:

1 x16, 2 x4, 6 x1

That's 9 slots with only 8 physical connections, still with 10 lanes left over. In the extreme I could have said you can't do 9 x1 connections on one board, but I wanted to maintain some semblance of reality.

Again, it looks like the nForce Pro is able to throw out a good deal of firepower ....
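The slot count in this reply can be sanity-checked with a small sketch. It assumes a board with two MCPs, each offering 20 PCIe lanes and 4 physical connections; that per-MCP split is an assumption inferred from the 8 connections and 10 spare lanes quoted in the reply. `check_slots` and its parameters are hypothetical names, and the model ignores the constraint that all of a slot's lanes must come from a single MCP.

```python
def check_slots(slot_widths, mcps=2, lanes_per_mcp=20, links_per_mcp=4):
    """Compare a proposed slot layout with the lanes and links available.

    The defaults are assumptions for illustration, not confirmed specs.
    """
    total_lanes = mcps * lanes_per_mcp
    total_links = mcps * links_per_mcp
    lanes_used = sum(slot_widths)
    return {
        "slots": len(slot_widths),
        "lanes_used": lanes_used,
        "lanes_left": total_lanes - lanes_used,
        # A layout fits only if neither lanes nor physical links run out.
        "fits": lanes_used <= total_lanes and len(slot_widths) <= total_links,
    }


# 1 x16, 2 x4, 6 x1, as listed in the reply.
config = [16] + [4] * 2 + [1] * 6
result = check_slots(config)
print(result)
```

With these assumed totals the layout uses 30 of 40 lanes but needs 9 physical connections where only 8 exist, so it is rejected on connection count rather than on lane count.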

ceefka - Tuesday, January 25, 2005

Plus I can't wait to see a rig like this doing benchies :-)

ceefka - Tuesday, January 25, 2005

In one word: amazing!

Some of this logic eludes me, however.

There's no board that can fully exploit the theoretical connectivity of a 4-way opteron config with these chipsets?

SunLord - Tuesday, January 25, 2005

I'd pay up to $450 for a dual cpu/chipset board as long as it gave me 2x16 1x4 and 1-3x1 connectors... as I see no use for pci-x when pci-e cards are coming out... Would make for one hell of a workstation to replace my aging athlon mp using tyan thunder k7 pro board. Even if the onboard raid doesn't do raid 5 I can use the 4x slot for a sata2 raid card with little to no impact! Though 2 gigabit ports is kinda overkill. mmm 8x74GB(136GB) raptor raid 0/1 and 12x500GB(6TB) Raid 5 3Ware/AMCC controller.

I can dream can't I? No clue what I would do with that much diskspace though... and still have enough room for 4 dvd-+rw dual layer burners hehe