GeForce 6200 TurboCache: PCI Express Made Useful

by Derek Wilson on December 15, 2004 9:00 AM EST - Posted in GPUs

Introduction

Imagine if getting the support for current generation graphics technology didn't require spending more than $79. Sure, performance wouldn't be good at all, and resolution would be limited to the lower end. But the latest games would all run with the latest features. All the excellent water effects in Half-Life 2 would be there. Far Cry would run in all its SM 3.0 glory. Any game coming out until a good year into the DirectX 10 timeframe would run (albeit slowly) feature-complete on your impressively cheap card.

A solution like this isn't targeted at the hardcore gamer, but at the general purpose user. This is the solution that keeps people from buying hardware that's obsolete before they get it home. The idea is that being cheap doesn't need to translate to being "behind the times" in technology. This gives casual consumers the ability to see what having a "real" graphics card is like. Games will look much better running on a full DX9 SM 3.0 part that "supports" 128MB of RAM (we'll talk about that later) than on an Intel integrated solution. Shipping higher volume with cheaper cards and getting more people into gaming translates to raising the bar on the minimum requirements for game developers. The sooner NVIDIA and ATI can get current generation parts into the game-buying world's hands, the sooner all game developers can write games for DX9 hardware at a base level rather than as an extra.

In the past, we've seen parts like the GeForce 4 MX, which was just a repackaged GeForce 2. Even today, we have the X300 and X600, which are based on the R3xx architecture, but share the naming convention of the R4xx. It really is refreshing to see NVIDIA take a stand and create a product lineup that can run games the same way from the top of the line to the cheapest card out there (the only difference being speed and the performance hit of applying filtering). We hope (if this part ends up doing well and finding a good price point for its level of performance) that NVIDIA will continue to maintain this level of continuity through future chip generations. We hope that ATI will follow suit with their lineup next time around. Relying on previous generation higher end parts to fulfill current lower end needs is not something that we want to see over the long term.

We've actually already taken a look at the part that NVIDIA will be bringing out in two new flavors. The 3 vertex/4 pixel/2 ROP GeForce 6200 that came out only a couple months ago is being augmented by two lower performance versions, both bearing the moniker GeForce 6200 with TurboCache.

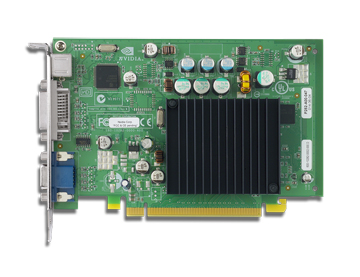

It's passively cooled, as we can see. The single memory module of this board is peeking out from beneath the heatsink on the upper right. NVIDIA has indicated that a higher performance version of the 6200 with TurboCache will follow to replace the current shipping 6200 models. Though better than non-existent parts such as the X700 XT, we would rather not see short-lived products hit the market. In the end, such anomalies only serve to waste the time of NVIDIA's partners and confuse customers.

For now, the two parts that we can expect to see will be differentiated by their memory bandwidth. The part priced at "under $129" will be a "13.6 GB/s" setup, while the "under $99" card will sport "10.8 GB/s" of bandwidth. Both will have core and memory clocks at 350/350. The interesting part is the bandwidth figure. In both cases, 8 GB/s of that bandwidth comes from the PCI Express bus. For the 10.8 GB/s part, the extra 2.8 GB/s comes from 16MB of local memory connected on a single 32-bit channel running at a 700MHz data rate. The 13.6 GB/s version of the 6200 with TurboCache just gets an extra 32-bit channel with another 16MB of RAM. We've seen pictures of boards with 64MB of onboard RAM, pushing bandwidth way up. We don't know when we'll see a 64MB product ship, or what the pricing would look like.
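The quoted bandwidth figures are simple sums, and a few lines of Python make the arithmetic explicit. This is only a sketch of the marketing math described above: the function and constant names are our own, and the 8 GB/s PCI Express figure is the combined up-plus-down number NVIDIA quotes, not a measured rate.

```python
# Sketch of the advertised-bandwidth arithmetic. All names are illustrative.

PCIE_X16_GBS = 8.0  # quoted x16 PCI Express total (4 GB/s up + 4 GB/s down)


def local_bandwidth_gbs(channels, bus_width_bits=32, data_rate_mhz=700):
    """Peak bandwidth of the on-board memory in GB/s.

    Each channel moves bus_width_bits / 8 bytes per transfer, and the
    data rate is given in millions of transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return channels * bytes_per_transfer * data_rate_mhz / 1000


def advertised_bandwidth_gbs(channels):
    """Local memory bandwidth plus the quoted PCI Express bandwidth."""
    return PCIE_X16_GBS + local_bandwidth_gbs(channels)


if __name__ == "__main__":
    for channels, local_mb in ((1, 16), (2, 32)):
        total = advertised_bandwidth_gbs(channels)
        print(f"{local_mb}MB local, {channels} x 32-bit: {total:.1f} GB/s")
```

One 32-bit channel contributes 2.8 GB/s and two contribute 5.6 GB/s, which is where the 10.8 GB/s and 13.6 GB/s labels come from.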

So, to put it all together, either 112 or 96 MB of framebuffer is stored in system RAM and accessed via the PCI Express bus. Local graphics RAM holds the front buffer (what's currently on screen) and other high priority (low latency) data. If more than local graphics memory is needed, it is allocated dynamically from system RAM. The local graphics memory that is not set aside for high priority tasks is then used as a sort of software managed cache. And thus, the name of the product is born.
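To make the split concrete, here is a toy model of the placement idea. It is not NVIDIA's allocation policy, which we have not seen; the `Surface` type, the `place_surfaces` function, and the example sizes are all hypothetical. It only illustrates "high priority data stays local, everything else spills to system RAM."

```python
from dataclasses import dataclass


@dataclass
class Surface:
    name: str
    size_mb: float
    high_priority: bool = False


def system_ram_share_mb(supported_mb, local_mb):
    """Portion of the 'supported' framebuffer that must live in system RAM."""
    return supported_mb - local_mb


def place_surfaces(surfaces, local_mb):
    """Toy placement: high-priority surfaces claim local memory first;
    anything that does not fit is placed in system RAM."""
    placement = {}
    free_local = local_mb
    # sorted() is stable, so surfaces keep their order within each priority.
    for surface in sorted(surfaces, key=lambda s: not s.high_priority):
        if surface.size_mb <= free_local:
            placement[surface.name] = "local"
            free_local -= surface.size_mb
        else:
            placement[surface.name] = "system"
    return placement


if __name__ == "__main__":
    print(system_ram_share_mb(128, 16), system_ram_share_mb(128, 32))
    surfaces = [
        Surface("textures", 40.0),
        Surface("front_buffer", 3.0, high_priority=True),  # 1024x768 at 32bpp
        Surface("z_buffer", 3.0),
    ]
    print(place_surfaces(surfaces, local_mb=16))
```

With 16MB on board, the front buffer and Z-buffer fit locally in this toy model, while the 40MB texture set has to come across the bus.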

The new technology here is allowing writes directly from the GPU to system RAM. We've been able to perform reads from system RAM for quite some time, though technologies like AGP texturing were slow and never delivered on their promises. With a few exceptions, the GPU is able to see system RAM as a normal framebuffer, which is very impressive for PCI Express and current memory technology.

But it's never that simple. There are some very interesting problems to deal with when using system RAM as a framebuffer; this is not simply a driver-based software solution. The foremost and ever pressing issue is latency. Going from the GPU, across the PCI Express bus, through the memory controller, into the system RAM, and all the way back is a very long round trip. Considering the fact that graphics cards are used to having instant access to data, something is going to have to give. And sure, the PCI Express bus may be 8 GB/s (4 up and 4 down, but it's less if you talk about actual utilization), but we are only going to be getting 6.4 GB/s out of the RAM. And that's if we are talking zero CPU utilization of memory and nothing else going on in the system, only what we're doing with the graphics card.
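The bottleneck argument can be written down as a minimum over the links in the path. The sketch below assumes the 6.4 GB/s figure corresponds to dual-channel DDR400 (two 64-bit channels at 400 MT/s), which the text does not state explicitly, and it ignores latency and protocol overhead entirely. Function names are ours.

```python
# Best-case ceilings for GPU access to system RAM. Illustrative only.

PCIE_ONE_WAY_GBS = 4.0  # x16 PCI Express, each direction


def dram_bandwidth_gbs(channels=2, bus_width_bits=64, transfers_mts=400):
    """Peak system memory bandwidth; defaults assume dual-channel DDR400."""
    return channels * (bus_width_bits // 8) * transfers_mts / 1000


def one_way_ceiling_gbs(dram_gbs):
    """Reads only (or writes only): limited by one PCIe direction or DRAM."""
    return min(PCIE_ONE_WAY_GBS, dram_gbs)


def combined_ceiling_gbs(dram_gbs):
    """Simultaneous reads and writes: both PCIe directions share one DRAM."""
    return min(2 * PCIE_ONE_WAY_GBS, dram_gbs)


if __name__ == "__main__":
    dram = dram_bandwidth_gbs()
    print(f"system RAM peak:  {dram:.1f} GB/s")
    print(f"one-way ceiling:  {one_way_ceiling_gbs(dram):.1f} GB/s")
    print(f"combined ceiling: {combined_ceiling_gbs(dram):.1f} GB/s")
```

Even in this idealized case, the "8 GB/s" from the bus can never be fully fed by 6.4 GB/s of system memory, and any CPU memory traffic lowers the ceiling further.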

Let's take a closer look at why anyone would want to use system RAM as a framebuffer, and how NVIDIA has tried to solve the problems that lie within.

UPDATE: We got an email in our inbox from NVIDIA updating us on a change they have made to the naming of their TurboCache products. It seems they have listened to us and are including physical memory sizes on marketing/packaging. Here's what product names will look like:

GeForce 6200 w/ TurboCache supporting 128MB, including 16MB of local TurboCache: $79

GeForce 6200 w/ TurboCache supporting 128MB, including 32MB of local TurboCache: $99

GeForce 6200 w/ TurboCache supporting 256MB, including 64MB of local TurboCache: $129

We were off on pricing a little bit, as the $129 figure we heard was actually for the 64MB/256MB part, and the 64-bit version we tested (which supports only 128MB) actually hits the price point we are looking for.

43 Comments


paulsiu - Tuesday, March 1, 2005

I am not sure I like this product at the price point. If it was $50, then it would make sense, but as another poster pointed out, the older and faster 6200 with real memory is about $10 more.

The marketing is also deceptive. 6200 TurboCache sounds like it would be faster than the 6200.

In addition, this so-called innovative use of system memory sounds like nothing more than integrated video. OK, it's faster, but aren't you increasing CPU load?

The review also uses an Athlon 64 4000+. I am doubtful that users who buy an A64 4000+ are going to skimp on the video card.

Paul

guarana - Thursday, January 27, 2005

I was forced to go for a 6200TC 64MB (up to 256MB) solution about a week ago. Had to upgrade my MoBo to a PCI-E version and had to get the cheapest card that I could find in the PCI-E flavour.

I must say it's a lot better than the FX5200 card I used to have ... I am running it with only 256MB of system RAM so it's not running at optimal performance, but I can run UT2003 with everything set to HIGH and in a 1280x1024 rez :)

A few stutters when the game actually starts, but after about 10 seconds, the game runs smooth and without any issues ... don't know the exact FPS though :)

I score about 12000 points on 3DMark2001 with stock clocks (yeah, 3DM2001 is old, but it's all I could download overnight)

Will let you know what happens when I finally get another 256MB in the damn thing.

Jeff7181 - Wednesday, December 22, 2004

I don't like this... why would I want the one that costs over $100 when I can get the 6200 for $110-210 that has its own dedicated memory and performs better. It's stupid to replace the current 6200 with this pile. It would be fine as a $50-75 card, or for use in a laptop, or an HTPC... but don't replace the current 6200 with this.

icarus4586 - Friday, December 17, 2004

I have a laptop with a 64MB Mobility Radeon 9600 (350MHz GPU, 466MHz DDR 128-bit RAM), and I can run Far Cry at 1280x800, high settings, Doom 3 1024x768 high settings, Halo 1024x768 high settings, Half-Life 2 1280x800 high settings, all at around 30fps.

This is, obviously, an AGP solution. I don't really know how it does it. I was very surprised at what it could pull off, especially the high resolutions, with only 64MB onboard.

What's going on?

Rand - Friday, December 17, 2004

Have you heard whether the limited PCI-E X16 bandwidth of the I915 is true for the I925/825XE chipsets also?

Also, I'm curious whether you've done any testing on the nForce 4 with only one DIMM so as to limit the system bandwidth and get some indication of how the GeForce6200TC scales in performance with greater/lesser system memory bandwidth available?

Rand - Friday, December 17, 2004

DerekWilson-"As far as I understand Hypermemory, it is not capable of rendering directly to system memory."

In the past ATI has indicated all of the R300 derived cores are capable of writing directly to a texture in system memory.

At the very least HyperMemory implementation on the Radeon Express 200G chipset must be able to do so, as ATI supports implementations without any local RAM they have to be capable of rendering to system memory to operate.

The only difference I've noticed in the respective implementations thus far is that nVidia's TurboCache lowest local bus size is 32-bit, whereas ATI's implementation only supports as low as 64-bit, so the smallest local RAM they can use is 32MB. (Well, they can use no local RAM also, though that would obviously be considerably slower.)

Rand - Friday, December 17, 2004

DerekWilson - Thursday, December 16, 2004

And you can bet that NVIDIA's Intel chipset will have a nice, speedy, optimized for SLI and TurboCache PCIe implementation as well.

PrinceGaz - Thursday, December 16, 2004

Yeah, this does all seem to make some sort of sense now. But not much sense as I can't see why Intel would deliberately limit the bandwidth of the PCIe bus they were pushing so heavily. Unless the 925 chipset has a full bi-directional 4GB/s, and the 3 down/1 up is something they decided to impose on the cheaper 915 to differentiate it from the high-end 925.

I guess it's safe to assume nVidia implemented bi-directional 4GB/s in the nForce4, given that they were also working on graphics cards that would be dependent on PCIe bandwidth. And unless there was a good reason for VIA, ATI, and SiS not to do so, I would imagine the K8T890, RX480/RS480, and SiS756 will also be full 4GB/s both ways.

DerekWilson - Thursday, December 16, 2004

NVIDIA tells us it's a limitation of 915. Looking back, they also heavily indicated that "some key chipsets" would support the same bandwidth as NVIDIA's own bridge solution at the 6 series launch. If you remember, their solution was really a 4GB/s total bandwidth solution (overclocked AGP 8x to "16x" giving half the PCIe bandwidth) ... Their diagrams all showed a 3 down 1 up memory flow. But they didn't explicitly name 915 at the time.