The Intel Skylake-X Review: Core i9 7900X, i7 7820X and i7 7800X Tested

by Ian Cutress on June 19, 2017 9:01 AM EST

I Keep My Cache Private

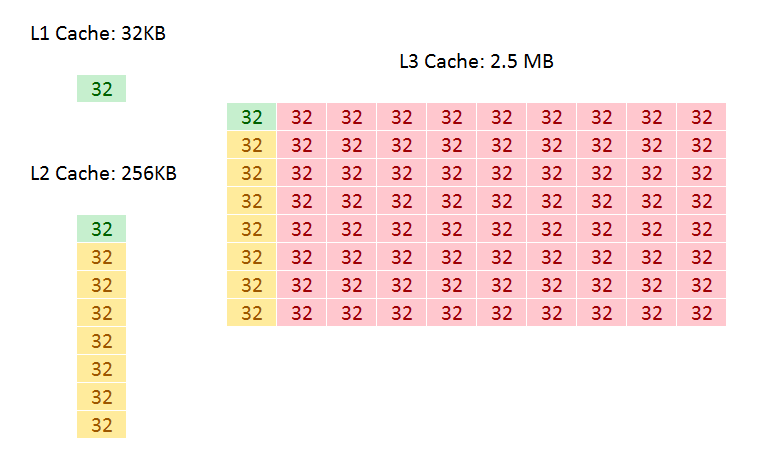

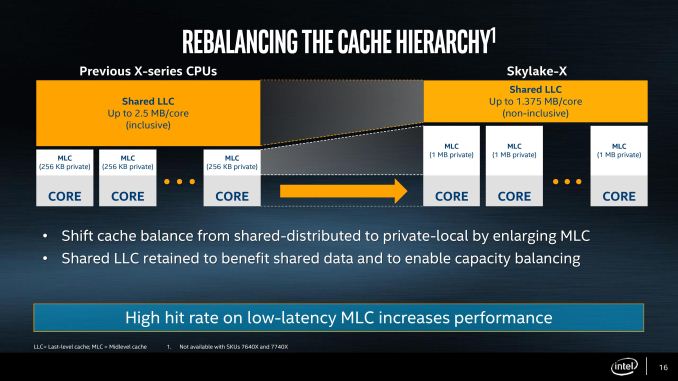

As mentioned in the original Skylake-X announcements, the new Skylake-SP cores have shaken up the cache hierarchy compared to previous generations. What used to be simple inclusive caches have now been adjusted in size, policy, latency, and efficiency, which will have a direct impact on performance. It also means that Skylake-S and Skylake-SP will have different instruction throughput efficiency levels. The result could be the difference between chalk and cheese, or the difference between stilton and aged stilton.

Let us start with a direct comparison of Skylake-S and Skylake-SP.

Comparison: Skylake-S and Skylake-SP Caches

| Skylake-S | Features | Skylake-SP |
|---|---|---|
| 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB | L1-D | 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB |
| 32 KB, 8-way; 4KB 128-entry 8-way TLB | L1-I | 32 KB, 8-way; 4KB 128-entry 8-way TLB |
| 256 KB, 4-way, 11-cycle; 4KB 1536-entry 12-way TLB; Inclusive | L2 | 1 MB, 16-way, 11-13 cycle; 4KB 1536-entry 12-way TLB; Inclusive |
| < 2 MB/core, up to 16-way, 44-cycle; Inclusive | L3 | 1.375 MB/core, 11-way, 77-cycle; Non-inclusive |

The new core keeps the same L1D and L1I cache structures: both are writeback 32KB 8-way caches. The L1D has a 4-cycle access latency on both cores, but the two differ in their access support: Skylake-S does 2x32-byte loads and 1x32-byte store per cycle, whereas Skylake-SP offers double on both.

The big changes are with the L2 and the L3. Skylake-SP has a 1MB private L2 cache with 16-way associativity, compared to the 256KB private L2 cache with 4-way associativity in Skylake-S. The L3 changes to an 11-way non-inclusive 1.375MB/core. That compares with up to 2MB/core, 16-way and inclusive on Skylake-S, and with the 20-way fully-inclusive 2.5MB/core arrangement on the previous high-end desktop part, Broadwell-E.

That’s a lot to unpack, so let’s start with inclusivity:

An inclusive cache contains everything in the cache underneath it and has to be at least the same size as the cache underneath (and usually a lot bigger), compared to an exclusive cache, which has none of the data in the cache underneath it. The benefit of an inclusive cache is that if an old line in the lower cache is evicted to make room for other data, there should still be a copy in the cache above it which can be called upon. The downside is that the cache above it has to be huge: with a 256KB L2 and a 2.5MB/core L3 (the Broadwell-E arrangement), the L2 contents could be replaced 10 times over before a line is evicted from the L3.

A non-inclusive cache sits somewhere between the two, and is different to an exclusive cache: in this context, when a data line is present in the L2, it does not immediately go into L3. If the value in L2 is modified or evicted, the data then moves into L3, storing an older copy. (The reason it is not called an exclusive cache is that the data can be re-read from L3 to L2 and still remain in the L3.) This is what we usually call a victim cache, depending on whether the core can prefetch data into L2 only or into L2 and L3 as required. In this case, we believe the SKL-SP core cannot prefetch into L3, making the L3 a victim cache similar to what we see on Zen, or Intel’s first eDRAM parts on Broadwell. Victim caches usually have limited roles, especially when they are similar in size to the cache below them (if a line is evicted from a large L2, what are the chances you’ll need it again so soon?), but some workloads that require a large reuse of recent data that spills out of L2 will see some benefit.
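To make the difference between the two policies a little more concrete, here is a toy model in Python. It is a sketch under heavy assumptions, not a description of Intel's actual hardware: both levels are fully associative with plain LRU replacement, there is a single core and no prefetching, and the names `LRU` and `simulate` as well as the tiny cache sizes are invented for illustration. The trace cycles over six lines, which is more than either level holds alone but fits in L2 plus L3 combined.

```python
from collections import OrderedDict


class LRU:
    """Tiny fully-associative LRU cache that stores line addresses only."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.lines = OrderedDict()

    def __contains__(self, addr):
        return addr in self.lines

    def touch(self, addr):
        # Mark addr as most recently used.
        self.lines.move_to_end(addr)

    def insert(self, addr):
        """Insert addr as most recent; return the evicted address or None."""
        self.lines[addr] = True
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)
            return victim
        return None

    def remove(self, addr):
        self.lines.pop(addr, None)


def simulate(trace, l2_lines, l3_lines, policy):
    """Count where each access is served. policy: 'inclusive' or 'victim'."""
    l2, l3 = LRU(l2_lines), LRU(l3_lines)
    hits = {"l2": 0, "l3": 0, "mem": 0}
    for addr in trace:
        if addr in l2:
            hits["l2"] += 1
            l2.touch(addr)
            continue
        if addr in l3:
            hits["l3"] += 1
            if policy == "inclusive":
                l3.touch(addr)
            # In victim mode the line stays in L3 after being re-read into L2.
        else:
            hits["mem"] += 1
            if policy == "inclusive":
                # Inclusive: a fill from memory goes into L3 as well as L2.
                evicted = l3.insert(addr)
                if evicted is not None:
                    l2.remove(evicted)  # back-invalidate to preserve inclusion
        l2_victim = l2.insert(addr)
        if policy == "victim" and l2_victim is not None:
            # Victim: L3 is filled only by lines evicted from L2.
            l3.insert(l2_victim)
    return hits


# Hypothetical sizes: 2-line L2, 4-line L3, cyclic working set of 6 lines.
trace = list(range(6)) * 10
inclusive = simulate(trace, 2, 4, "inclusive")
victim = simulate(trace, 2, 4, "victim")
print("inclusive:", inclusive)
print("victim:   ", victim)
```

In this deliberately favourable case the victim arrangement serves the loop from L3 after the first pass, because L2 and L3 together hold six distinct lines, while the inclusive arrangement is limited to the four lines of the L3 and keeps going to memory. Real caches are set-associative and real access patterns are rarely this tidy, so treat it as an illustration of the policy rather than a performance prediction.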

So why move to a victim cache on the L3? Intel’s goal here was the larger private L2. Moving from 256KB to 1MB is a double double increase. A general rule of thumb is that doubling the cache size reduces the miss rate by a factor of the square root of 2 (roughly 29% fewer misses), which can be the equivalent of a 3-5% IPC uplift. By doing a double double (as well as doing the double double on the associativity), Intel is effectively halving the L2 miss rate with the same prefetch rules. Normally this benefits any L2 size sensitive workloads, and some enterprise workloads such as databases can be L2 size sensitive (we fully suspect that a larger L2 came at the request of the cloud providers).
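The square-root rule of thumb is usually read as the miss rate scaling with one over the square root of capacity. A minimal sketch of that arithmetic follows; the function name `relative_miss_rate` and the default exponent are assumptions for illustration, and real workloads can deviate a long way from this curve.

```python
def relative_miss_rate(size_ratio, exponent=0.5):
    """Miss rate relative to baseline when capacity scales by size_ratio,
    assuming the rule of thumb miss_rate ~ capacity ** -exponent."""
    return size_ratio ** -exponent


# One doubling (256KB -> 512KB) and the double double (256KB -> 1MB).
one_doubling = relative_miss_rate(2)
double_double = relative_miss_rate(4)

print(f"256KB -> 512KB: miss rate x{one_doubling:.3f}")
print(f"256KB -> 1MB:   miss rate x{double_double:.3f}")
```

Under this assumption a single doubling leaves about 71% of the original misses, and two doublings leave half of them, which is where the "halving the L2 miss rate" figure comes from.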

Moving to a larger cache typically increases latency. Intel is stating that the L2 latency has increased from 11 cycles to ~13, depending on the type of access, with the fastest load-to-use expected to be 13 cycles. Adjusting the latency of the L2 cache is going to have a knock-on effect, given that code that is not L2 size sensitive might still be affected.

So if the L2 is larger and has a higher latency, does that mean the smaller L3 is lower latency? Unfortunately not, given the size of the L2 and a number of other factors. With the L3 being a victim cache, it is typically used less frequently, so Intel can give the L3 less stringent requirements to remain stable. In this case the latency has increased from 44 cycles in SKL-S to 77 cycles in SKL-SP. That’s a sizeable difference, but again, given the utility of the victim cache it might make little difference to most software.
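One way to reason about these trade-offs is an average memory access time calculation. The sketch below uses the L1, L2 and L3 latencies from the table above, but every miss rate and the 200-cycle memory latency are made-up placeholders, not measurements; the function name `amat` is also just for illustration. The only link to the discussion above is that the Skylake-SP case halves the L2 miss rate.

```python
def amat(l1_lat, l2_lat, l3_lat, mem_lat, l1_miss, l2_miss, l3_miss):
    """Average load latency in cycles.

    Latencies are total load-to-use cycles for a hit at that level.
    Miss rates are local (fraction of accesses reaching a level that miss it).
    """
    beyond_l2 = (1 - l3_miss) * l3_lat + l3_miss * mem_lat
    beyond_l1 = (1 - l2_miss) * l2_lat + l2_miss * beyond_l2
    return (1 - l1_miss) * l1_lat + l1_miss * beyond_l1


# Hypothetical workload: 5% L1 miss rate, 50% local L3 miss rate,
# 200-cycle memory latency. None of these numbers come from Intel.
skl_s = amat(4, 11, 44, 200, l1_miss=0.05, l2_miss=0.40, l3_miss=0.50)
skl_sp = amat(4, 13, 77, 200, l1_miss=0.05, l2_miss=0.20, l3_miss=0.50)

# Same Skylake-SP latencies, but a workload that gains nothing from the
# larger L2 (miss rate unchanged at 40%).
skl_sp_no_gain = amat(4, 13, 77, 200, l1_miss=0.05, l2_miss=0.40, l3_miss=0.50)

print(f"Skylake-S:                 {skl_s:.2f} cycles")
print(f"Skylake-SP (L2 miss 20%):  {skl_sp:.2f} cycles")
print(f"Skylake-SP (L2 miss 40%):  {skl_sp_no_gain:.2f} cycles")
```

With these placeholder inputs the halved L2 miss rate more than pays for the slower L2 and L3, while a workload that sees no L2 benefit ends up slightly worse off. Which side a given piece of software lands on depends entirely on its real miss rates.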

Moving the L3 to a non-inclusive cache will also have repercussions for some of Intel’s enterprise features. Back at the Broadwell-EP Xeon launch, one of the features provided was L3 cache partitioning, allowing limited size virtual machines to hog most of the L3 cache if they were running a mission-critical workflow. Because the L3 cache was more important, this was a good feature to add. Intel won’t say how this feature has evolved with the Skylake-SP core at this time, so we will probably have to wait until that launch to find out.

As a side note, it is worth noting here that Broadwell-E had a 256KB private L2 that was 8-way, compared to Skylake-S, which has a 256KB private L2 that is 4-way. Intel stated that the Skylake-S base core went down in associativity for several reasons, but the main one was to make the design more modular. In this case it means the Skylake-SP L2 is 4x that of Skylake-S in both size and associativity by design, and suggests that there may be 512KB 8-way variants in the future.

264 Comments


jjj - Monday, June 19, 2017 - link

The 10 cores die is clearly 320+mm2 not 308mm2. The 308mm figure rounds down the mm based on those GamerNexus pics. From there, you slightly underestimate the size of other 2 die.

Sarah Terra - Monday, June 19, 2017 - link

Fair point but what I take from this review is that you are going to be spending pretty much double the cost or higher of ryzen for a proc that will have a 30% larger power envelope if you want higher performance. Intel is scrambling here, well done AMD.

jjj - Monday, June 19, 2017 - link

With 8 cores and up, thermal is a big issue when you OC Skylake X.. Power also to some extent.

The 6 cores looks interesting vs the 7700k but not so much vs anything else. CPU+mobo gets you north of 600$ and that's a lot. If it had all the PCIe lanes enabled, there would be that but ,while plenty will buy it, it makes no sense to. And ofc there should be a Coffee Lake 6 cores soon , we'll see how it is priced- in consumer 6 cores with 2 mem chans is fine.

More than 6 cores are priced way too high and , if you need many cores, you buy for MT not ST so ST clocks are less relevant.

Intel moving in the same direction as AMD on the cache size front is interesting- larger L2 and smaller L3. Now they have "huge cache and memory latency issues" just like Ryzen lol.

W/e, Intel's pricing is still far too high and this platform remains of minimal relevance.

ddriver - Monday, June 19, 2017 - link

Funny thou, when Ryzen under-performed in games that was no reason to not publish gaming benches, in fact being the platform's main weakness there was actually emphasis put on that... but when it comes to intel we gotta have special treatment... Let's hear it for objectivity!

Granted the 7800X finally brings something of relatively decent value, but still no good reason to justify the purchase unless one insists on an intel product, for the brand, for thunderbolt or hypetane support.

"To play it safe, invest in the Core i9-7900X today."

Really? With Threadripper incoming in a matter of weeks? For less than 1000$ you will get 16 zen cores. It will definitely beat the 7900X by a decent margin in terms of performance, plus the massive I/O capabilities and also ECC support, which I'd say is vital. That just doesn't sound like a honest recommendation. Not surprising in the least.

ddriver - Monday, June 19, 2017 - link

Also, on top of that we have launch prices for Ryzen rather than current prices. Looks like a rather open attempt to diminish AMD's platform value.

Ian Cutress - Tuesday, June 20, 2017 - link

We've always posted manufacturer MSRPs in our CPU charts. There has been no official price drop from AMD; if you're seeing lower, it's being run from the distributor level.

On the TR issue, we basically haven't tested it and don't know the price. Lots of variables in the air, which is why the words are /if you want to play it safe/. Safe being the key word there.

ddriver - Tuesday, June 20, 2017 - link

Dunno Ian, in my book this sounds more like hasty than safe. The safe thing would be to wait out. Even without the incipient TR launch, early adoption is rather unsafe on its own. As it is, it sounds more like an attempt to dupe people into spending their money on intel in the eve of the launch of a superior value and performance product from a direct (and sole) competitor.

It is true that nothing is still officially known about TR, but based on the ryzen marketing strategy and performance we can make safe and accurate speculations. I expect to see the top TR chip launched at 999$ offering at the very least 30% of performance advantage over the 7900X in a similar or slightly higher thermal budget, of course in workloads that can scale nicely up with the core count.

Comparing the 7900X to the 1800X, we have ~35% performance advantage for 205% the price and 150% the power usage. Based on that, it is a safe bet that TR is going to shine.

fanofanand - Monday, June 26, 2017 - link

Ian is a scientist, the less guessing the better. Give him an opportunity to review TR before giving suggestions. Doesn't that seem fair?

t.s - Tuesday, June 20, 2017 - link

Play it safe? Really?? Please. As if everyone in this world's stupid.

Ranger1065 - Wednesday, June 21, 2017 - link

There has never been a better time to give Intel the middle finger.