The Intel Optane Memory (SSD) Preview: 32GB of Kaby Lake Caching

by Billy Tallis on April 24, 2017 12:00 PM EST - Posted in

- SSDs

- Storage

- Intel

- PCIe SSD

- SSD Caching

- M.2

- NVMe

- 3D XPoint

- Optane

- Optane Memory

BAPCo SYSmark 2014 SE

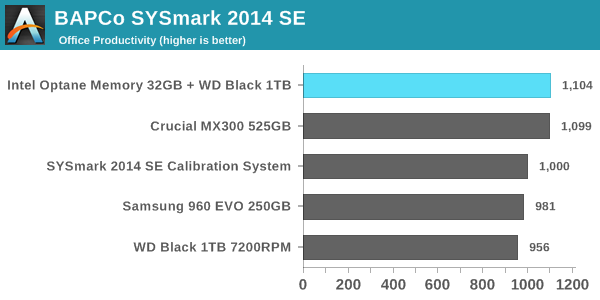

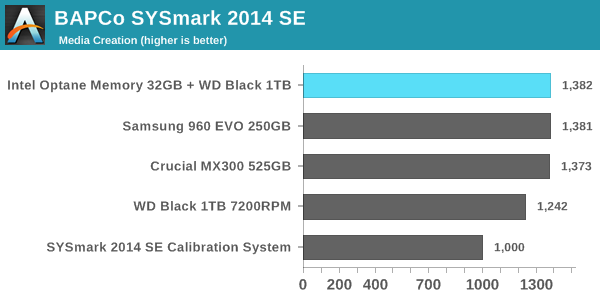

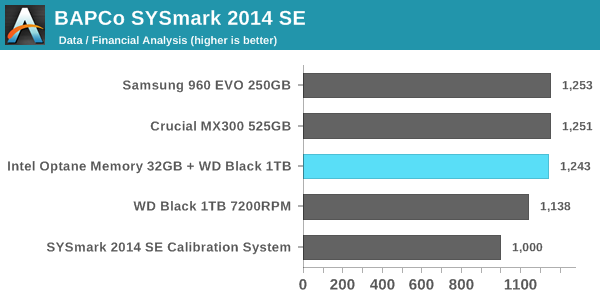

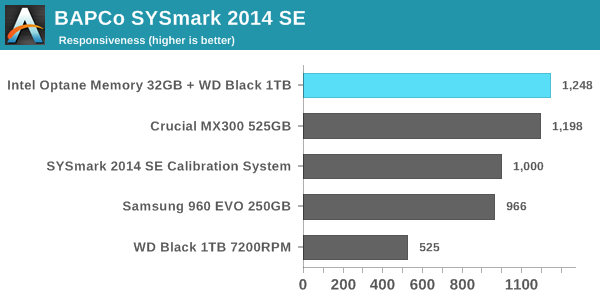

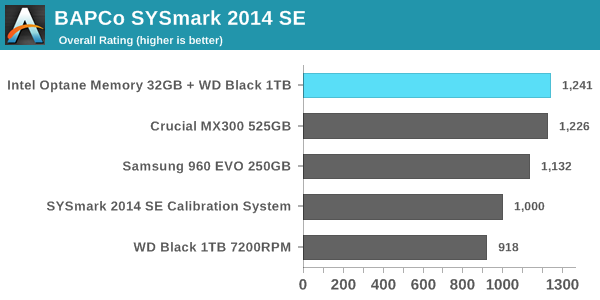

BAPCo's SYSmark 2014 SE is an application-based benchmark that uses real-world applications to replay usage patterns of business users in the areas of office productivity, media creation and data/financial analysis. It also addresses responsiveness, which deals with user experience as related to application and file launches, multitasking, etc. Scores are meant to be compared against a reference desktop (the SYSmark 2014 SE calibration system in the graphs). While the SYSmark 2014 benchmark used a Haswell-based desktop configuration, SYSmark 2014 SE moves to a Lenovo ThinkCentre M800 (Intel Core i3-6100, 4GB RAM and a 256GB SATA SSD). The calibration system scores 1000 in each of the scenarios. A score of, say, 2000 would imply that the system under test is twice as fast as the reference system.
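The relationship between score and speed described above can be sketched as a simple ratio. This is a minimal sketch assuming each scenario score is 1000 x (calibration response time / test response time); BAPCo's exact scoring formula is not given here, and the function names and timings are hypothetical.

```python
CALIBRATION_SCORE = 1000  # the calibration system scores 1000 in every scenario


def sysmark_style_score(reference_seconds: float, test_seconds: float) -> float:
    """Score a system relative to the reference.

    Hypothetical model: score scales with reference_time / test_time,
    which matches the "2000 means twice as fast" reading in the text.
    """
    if test_seconds <= 0:
        raise ValueError("test_seconds must be positive")
    return CALIBRATION_SCORE * reference_seconds / test_seconds


def speedup_from_score(score: float) -> float:
    """How many times faster than the calibration system a score implies."""
    return score / CALIBRATION_SCORE


print(sysmark_style_score(600.0, 300.0))  # 2000.0
print(speedup_from_score(2000))           # 2.0
```

Under this model, halving the response time doubles the score, so the calibration system always lands at exactly 1000.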

SYSmark scores are based on total application response time as seen by the user, including not only storage latency but time spent by the processor. This means there's a limit to how much a storage improvement could possibly increase scores. It also means our Optane review system starts out with an advantage over the SYSmark calibration system due to the faster processor and more RAM.
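Because response time includes processor time as well as storage time, the ceiling on storage-driven gains follows Amdahl's law. A minimal sketch, where the 20% storage share of response time is a hypothetical figure, not one measured in this review:

```python
def overall_speedup(storage_fraction: float, storage_speedup: float) -> float:
    """Amdahl's law: overall speedup when only the storage share of
    total response time gets faster and the CPU-bound share is unchanged."""
    if not 0.0 <= storage_fraction <= 1.0:
        raise ValueError("storage_fraction must be between 0 and 1")
    if storage_speedup <= 0:
        raise ValueError("storage_speedup must be positive")
    remaining = (1.0 - storage_fraction) + storage_fraction / storage_speedup
    return 1.0 / remaining


# Hypothetical: storage is 20% of response time.
print(overall_speedup(0.2, 10.0))          # about 1.22x with 10x faster storage
print(overall_speedup(0.2, float("inf")))  # about 1.25x even with infinitely fast storage
```

Even an infinitely fast drive can only remove the storage share, which is why scores for the solid-state configurations cluster together.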

In every performance category the Optane caching setup is either in first place or a close tie for first. The Crucial MX300 is tied with the Optane configuration for every sub-test except the responsiveness test, where it falls slightly behind. The Samsung 960 EVO 250GB struggles, partly because its low capacity, and the low degree of parallelism that implies, means it often cannot take advantage of the performance offered by its PCIe 3.0 x4 interface. The use of Microsoft's built-in NVMe driver instead of Samsung's may also be holding it back. As expected, the WD Black hard drive scores substantially worse than our solid-state configurations on every test, with the biggest disparity occurring in the responsiveness test: the WD Black hard drive will force users to spend more than twice as much time waiting on their computer than if it had an SSD.

Energy Usage

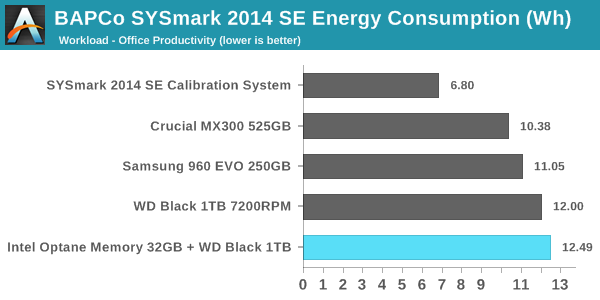

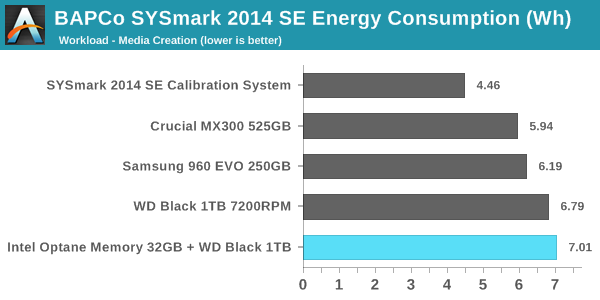

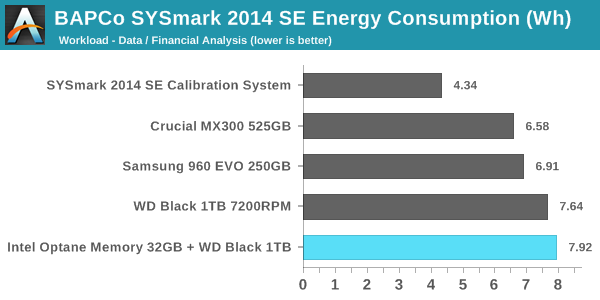

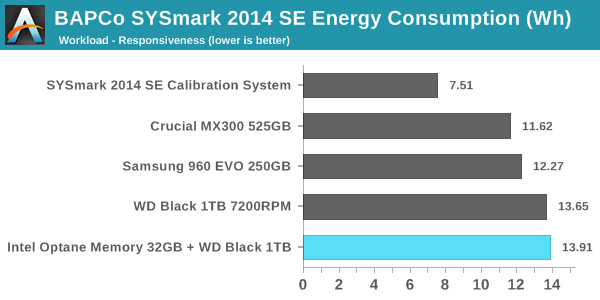

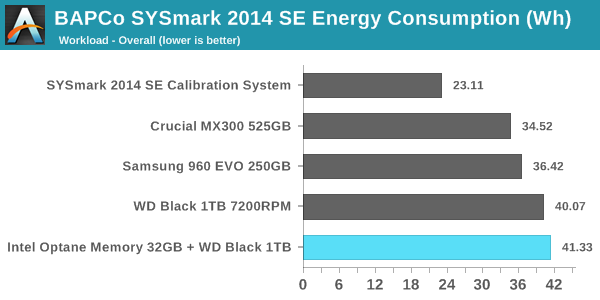

SYSmark 2014 SE also adds energy measurement to the mix. A high score in the SYSmark benchmarks might be nice to have, but potential customers also need to determine the balance between power consumption and the efficiency of the system. For example, in the average office scenario, it might not be worth purchasing a noisy and power-hungry PC just because it ends up with a 2000 score in the SYSmark 2014 SE benchmarks. In order to provide a balanced perspective, SYSmark 2014 SE also allows vendors and decision makers to track the energy consumption during each workload. In the graphs, we show the total energy consumed by the PC under test for a single iteration of each SYSmark 2014 SE workload and how it compares against the calibration system.
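Total energy for a workload is power integrated over time. The sketch below integrates a sampled power trace with the trapezoidal rule; it assumes evenly or unevenly spaced wall-power samples, and the trace values are hypothetical. It illustrates the concept only and is not how SYSmark itself collects or computes energy.

```python
from typing import Sequence


def energy_joules(timestamps_s: Sequence[float], power_w: Sequence[float]) -> float:
    """Integrate sampled power (watts) over time (seconds) with the trapezoidal rule."""
    if len(timestamps_s) != len(power_w) or len(timestamps_s) < 2:
        raise ValueError("need matching timestamp and power samples (at least two)")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt < 0:
            raise ValueError("timestamps must be non-decreasing")
        # Area of the trapezoid between consecutive samples.
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def joules_to_wh(joules: float) -> float:
    """Convert joules to watt-hours (1 Wh = 3600 J)."""
    return joules / 3600.0


# Hypothetical three-sample trace: 40 W, 60 W, 40 W at one-second spacing.
print(energy_joules([0.0, 1.0, 2.0], [40.0, 60.0, 40.0]))  # 100.0
```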

The peak power consumption of a PCIe SSD under load can exceed the power draw of a hard drive, but over the course of a fixed workload hard drives will always be less power efficient. SSDs almost always complete the data transfer sooner, and they can enter and leave their low-power idle states far quicker. On a benchmark like SYSmark, there are no idle times long enough for a hard drive to spin down and save power.
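A rough way to see why a higher peak draw can still mean less energy is to compare a short active burst followed by low-power idle against a longer active period with the drive kept spinning. All wattages and durations below are hypothetical illustrations, not measurements from this review.

```python
def workload_energy(active_w: float, active_s: float, idle_w: float, window_s: float) -> float:
    """Energy (joules) over a fixed window: active for active_s, idle for the rest."""
    if not 0.0 <= active_s <= window_s:
        raise ValueError("active time must fit inside the window")
    return active_w * active_s + idle_w * (window_s - active_s)


WINDOW_S = 10.0  # hypothetical fixed workload window

# Hypothetical SSD: higher peak (7 W) but finishes in 2 s, then drops to 0.05 W idle.
ssd_j = workload_energy(active_w=7.0, active_s=2.0, idle_w=0.05, window_s=WINDOW_S)

# Hypothetical HDD: lower peak (6 W) but busy for 8 s, and stays spinning at 5 W
# because the gaps are too short to spin down.
hdd_j = workload_energy(active_w=6.0, active_s=8.0, idle_w=5.0, window_s=WINDOW_S)

print(ssd_j, hdd_j)  # about 14.4 J vs 58.0 J
```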

With an idle power of 1W, the Optane cache module substantially increases the already high power consumption of the hard drive-based configurations. It does allow for the tests to complete sooner, but since the Optane module does nothing to accelerate the compute-bound portions of SYSmark, the total time saved is not enough to make up the difference. It also appears that the Optane caching is not being used to enable more aggressive power saving on the hard drive—Intel's probably flushing writes from the cache often enough to keep the hard drive spinning the whole time. What this adds up to is a difference that's quite clear but not big enough for desktop users to be too concerned with unless their electricity prices are high. The Optane Memory caching configuration is the most power-hungry option we tested, while the second-place performing Crucial MX300 configuration was most efficient, using about 16% less energy overall.
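The trade-off above reduces to a break-even calculation: the cache adds at least its 1W idle draw for the whole run, so it only saves energy if it shortens the run enough. A minimal sketch assuming the rest of the system draws the same average power with or without the cache; the 39W system power and one-hour run are hypothetical, and since the module draws more than 1W under load, the real threshold is higher.

```python
def break_even_time_saved(baseline_s: float, system_w: float, cache_w: float = 1.0) -> float:
    """Seconds the cache must shave off a run for total energy to break even.

    Solves (system_w + cache_w) * (baseline_s - dt) == system_w * baseline_s for dt,
    assuming system_w is unchanged by adding the cache (a simplification).
    """
    return cache_w * baseline_s / (system_w + cache_w)


def run_energy_j(system_w: float, cache_w: float, run_s: float) -> float:
    """Total energy in joules for a run at constant average power."""
    return (system_w + cache_w) * run_s


# Hypothetical: a one-hour run on a system averaging 39 W without the cache.
dt = break_even_time_saved(3600.0, 39.0)
print(dt)  # 90.0 seconds must be saved just to break even
```

If the compute-bound portions of the workload dominate, the time saved falls short of this threshold and the cached configuration uses more energy overall.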

For mobile users, the power consumption of the Optane plus hard drive configuration is pretty much a deal-breaker. Our Optane review system is not optimized for power consumption the way a notebook system would be, so for a mobile user the Optane module would account for an even larger portion of the total battery draw, and battery life will take a serious hit.
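To put the mobile concern in numbers, battery runtime is roughly capacity divided by average draw, so a fixed 1W adder costs proportionally more on a low-power notebook. A sketch with a hypothetical 50Wh battery and 5W average platform draw; only the 1W figure comes from this review.

```python
def battery_hours(battery_wh: float, average_w: float) -> float:
    """Approximate runtime in hours at a constant average draw."""
    if average_w <= 0:
        raise ValueError("average_w must be positive")
    return battery_wh / average_w


def runtime_loss_fraction(base_w: float, added_w: float) -> float:
    """Fraction of runtime lost when added_w is drawn on top of base_w."""
    return added_w / (base_w + added_w)


# Hypothetical notebook: 50 Wh battery, 5 W average draw without the cache module.
print(battery_hours(50.0, 5.0))         # 10.0 hours
print(battery_hours(50.0, 5.0 + 1.0))   # about 8.3 hours with a 1 W adder
print(runtime_loss_fraction(5.0, 1.0))  # about 0.167, i.e. roughly a sixth of runtime
```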

110 Comments


YazX_ - Monday, April 24, 2017

"Since our Optane Memory sample died after only about a day of testing" LOL

Chaitanya - Monday, April 24, 2017

And it is supposed to have an endurance rating 21x larger than a conventional NAND SSD.

Sarah Terra - Monday, April 24, 2017

Funny, yes, but teething issues aside, the random write performance is several orders of magnitude faster than all existing storage mediums. This is the number one metric I find that plays into system responsiveness, boot times, and overall performance, and the most ignored metric by all manufacturers to date. They all go for sequential numbers, which don't mean jack except when doing large file copies.

ddriver - Monday, April 24, 2017

So let's summarize: 1000 times faster than NAND - in reality only about 10x faster in hypetane's few strongest points, 2-6x better in most others, maximum throughput lower than consumer NVMe SSDs; intel lied about speed about 200 times LOL. Also from Tom's review, it became apparent that until the cache of comparable enterprise SSDs fills up, they are just as fast as hypetane, which only further solidifies my claim that xpoint is NO BETTER THAN SLC, because that's what those drives use for cache.

1000 times the endurance of flash - in reality, like 2-3x better than MLC. Probably on par with SLC at the same production node. Intel lied by about 300-500 times.

10 times denser than flash - in reality it looks like density is actually way lower than that. 400 gigs in what... like 14 chips, was it? Samsung has planar flash (no 3D) that has more capacity in a single chip.

So now they step forward to offer this "flash killer" as a puny 32 GB "accelerator" which makes little to no improvement whatsoever and cannot even make it through one day of testing.

That's quite exciting. I am actually surprised they brought the lowest capacity 960 EVO rather than the 600p.

Consumer grade software already sees no improvement whatsoever from going SATA to NVMe. It won't be any different for hypetane. Latency at low queue depth access is good, but that's mostly the controller here, and in this aspect NAND SSDs have tremendous headroom for improvement. Which is what we are most likely going to see in the next generation of enterprise products; obviously it makes zero sense for consumers, regardless of how "excited" them fanboys are to load their gaming machines with terabytes of hypetane.

Last but not least - being exclusive to intel's latest chips is another huge MEH. Hypetane's value is already low enough at the current price and limited capacity, the last thing that will help adoption is having to buy a low value intel platform for it, when ryzen is available and offers double the value of intel offerings.

Drumsticks - Monday, April 24, 2017

Your bias is showing.

1000x -> Harp on it all you want, but that number was for the architecture, not the first generation end product. It represents where we can go, not where we are. I'll also note that Tom's gave it their Editor Approved award - "As tested today with mainstream settings, Optane Memory performed as advertised. We observed increased performance with both a hard disk drive and an entry-level NVMe SSD. The value proposition for a hard drive paired with Optane Memory is undeniable. The combination is very powerful, and for many users, a better solution than a larger SSD."

1000 times the endurance of flash -> You can concede that 3D XPoint density isn't as good as they originally envisioned, but it's still impressive, gen1, and has nowhere to go but up. It's not really worse than other competing drives per drive capacity - this cache supports like 3 DWPD basically. The MX300 750GB only supports like 0.3 DWPD. 10x better is still good.

10 times denser than flash -> DRAM, not Flash. And it's going to be much denser than DRAM.

Barely any to no improvement -> LOL, did you look at the graphs? Those lines at the bottom and on the left were 500GB and 250GB SATA and NVMe drives getting killed by Optane in a 32GB configuration. 3D XPoint was designed for low queue depth and random performance - i.e. things that actually matter, where it kills its competition. Even sequential throughput, which is far from its design intention, generally outperforms consumer drives.

So, Optane costs, in an enterprise SSD, 2-3x more than other enterprise drives, for record-breaking low queue depth throughput that far surpasses its extra cost, while providing 10-80x less latency. In a consumer drive, Optane regularly approaches an order of magnitude faster than consumer drives in only a 32GB configuration.

If Optane is only as fast as SLC, I'd love to understand why the P4800X broke records as pretty much the fastest drive in the world, barring unrealistically high queue depths.

This 32GB cache might be a stopgap, and less compelling of a product in general because of its capacity, but denying the potential that 3D XPoint holds is absolutely laughable. The random performance and low queue depth performance are undeniably better than NAND, and that's where consumer performance matters.

ddriver - Monday, April 24, 2017

"I'd love to understand why the P4800X broke records"

Because nobody bothered to make an SLC drive for many, many years. The last time there were purely SLC drives on the market it was years ago, with controllers completely outdated compared to contemporary standards.

SLC is so good that today they only use it for cache in MLC and TLC drives. Kinda like what intel is trying to push hypetane as. Which is why you can see SSDs hitting hypetane IOPS with inferior controllers, until they run out of SLC cache space and performance plummets due to direct MLC/TLC access.

I bet my right testicle that with a comparable controller, SLC can do as well and even better than hypetane. SLC PE latencies are in the low hundreds of NANOseconds, which is substantially lower than what we see from hypetane. Endurance at 40 nm is rated at 100k PE cycles, which is 3 times more than what hypetane has to offer. It will probably drop as process node shrinks but still.

"10x better is still good"

Yet the difference between 10x and 1000x is 100x. Like imagine your employer tells you he's gonna pay you 100k a year, and ends up paying you 1000 bucks instead. Surely not something anyone would object to LOL.

I am not having problems with "10x better". I am having problems with the fact it is 100x less than what they claimed. Did they fail to meet their expectations, or did they simply lie?

I am not denying hypetane's "potential". I merely make note that it is nothing radically better than NAND flash that has not been compromised for the sake of profit. xpoint is no better than SLC NAND. With the right controller, good old, even ancient and almost forgotten SLC is just as good as intel and micron's overhyped love child. Which is kinda like reinventing the wheel a few thousand years later, just to sell it at a few times what it's actually worth.

My bias is showing? Nope, your "intel inside" underpants are ;)

Reflex - Monday, April 24, 2017

SLC has severe limits on density and cost. It's not used because of that. Even at the same capacity as these initial Optane drives it would likely cost considerably more, and as Optane's density increases there is no ability to mitigate that cost with SLC; it would grow linearly with the amount of flash. The primary mitigations already exist: MLC and TLC. Of course those reduce the performance profile far below Optane and decrease its ability to handle wear. Technically SLC could go with a stacked die approach, as MLC/TLC are doing; however, nothing really stops Optane from doing the same, making that at best a neutral comparison.

ddriver - Monday, April 24, 2017

SLC is half the density of MLC. Samsung has 2 TB worth of MLC in 4 flash chips. Gotta love 3D stacking. Now employ epic math skills and multiply 4 by 0.5, and you get a full TB of SLC goodness, perfectly doable via 3D stacked NAND.

And even if you put 3D stacking aside (if I am not mistaken, the SM961 uses planar MLC, 2 chips on each side for a full 1 TB), cut that in half and you'd get 512 GB of planar SLC in 4 modules.

Now, I don't claim to be that good in math, but if you can have 512 GB of SLC nand in 4 chips, and it takes 14 for a 400 GB of xpoint, that would make planar SLC OVER 4 times denser than xpoint.

Thus if SLC is over 4 times better at planar dies, stacked xpoint could not possibly be better than stacked SLC.

Severe limits my ass. The only factor at play here is that SSDs are already faster than needed in 99% of the applications. Thus the industry would rather churn MLC and TLC to maximize the profit per grain of sand being used. The moment hypetane begins to take market share, which is not likely, they can immediately launch SLC enterprise products.

Also, it should be noted that there is still ZERO information about what the xpoint medium actually is. For all we know, it may well be SLC, now wouldn't that be a blast. Intel has made a bunch of claims about it, none of which seemed plausible, and most of which have already turned out to be a lie.

ddriver - Monday, April 24, 2017

*multiply 2 by 0.5

Reflex - Monday, April 24, 2017

You can 3D stack Optane as well. That's a wash. You seem very obsessed with being right, and not with understanding the technology.