AMD Prepares 32-Core Naples CPUs for 1P and 2P Servers: Coming in Q2

by Ian Cutress on March 7, 2017 10:15 AM EST - Posted in

- Enterprise

- CPUs

- AMD

- SoCs

- Enterprise CPUs

- Zen

- Naples

- Ryzen

For users keeping track of AMD’s rollout of its new Zen microarchitecture, stage one was last week’s launch of Ryzen, its new desktop-oriented product line. Stage three is the APU launch, focusing mainly on mobile parts. In the middle is stage two, Naples, arguably the meatier element of AMD’s Zen story.

A lot of fuss has been made about Ryzen and Zen, with AMD re-launching itself into high-performance x86. If you go by column inches, the consumer-focused Ryzen platform is the most talked about and, many would argue, the most important. In our interview with Dr. Lisa Su, CEO of AMD, she described the launch of Ryzen as a big hurdle in that journey. However, in the next sentence, Dr. Su listed Naples as another big hurdle, and if you spend some time with any of the regular technology industry analysts, they will tell you that Naples is where AMD’s biggest slice of the pie lies. Enterprise is where the money is.

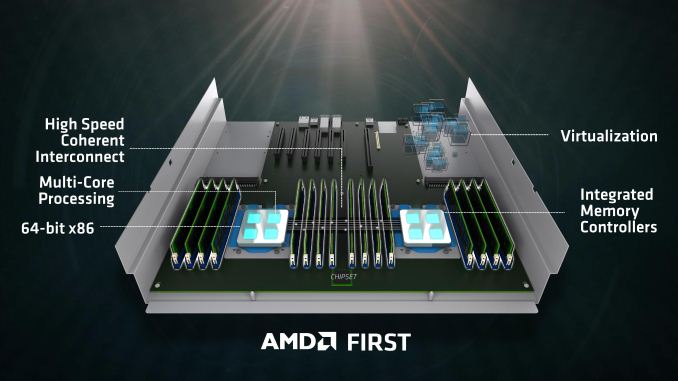

So while the consumer product line gets column inches, the enterprise product line gets profits and high margins. Launching an enterprise product that takes even a few points of market share from the very large blue incumbent can add billions of dollars to the bottom line, as well as spur some innovation now that there are two big players on the field. One could argue there are three players, given that ARM holds a few niche areas; however, one of the big barriers to ARM adoption, aside from the lack of a high-performance single core, is the transition from the x86 to the ARM instruction set, which requires rewriting code. If AMD can rejoin x86 enterprise as a big player, it puts a small stop to some of ARM’s ambitions and aims to take a big enough chunk out of Intel.

With today’s announcement, AMD is setting the scene for its upcoming Naples platform. Naples will not be the official name of the product line, and as we discussed with Dr. Su, Opteron is one option being debated internally at AMD for the product name. Nonetheless, Naples builds on Ryzen, using the same core design but implementing it in a big way.

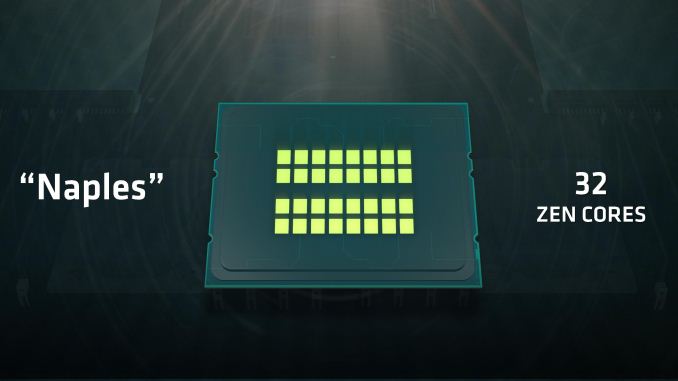

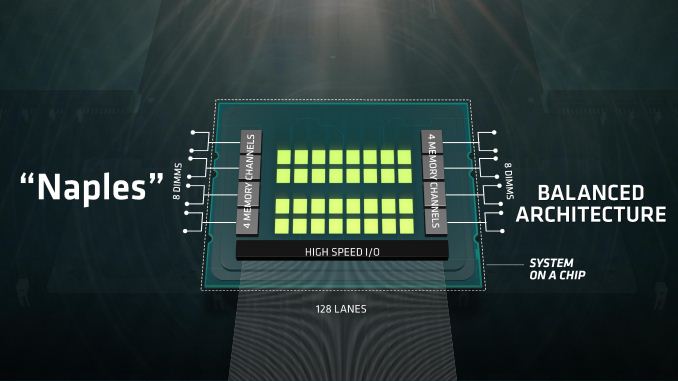

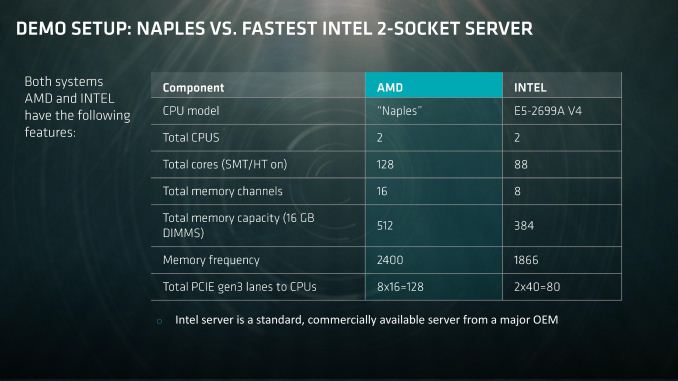

The top end Naples processor will have a total of 32 cores, with simultaneous multi-threading (SMT), to give a total of 64 threads. This will be paired with eight channels of DDR4 memory, up to two DIMMs per channel for a total of 16 DIMMs, and altogether a single CPU will support 128 PCIe 3.0 lanes. Naples also qualifies as a system-on-a-chip (SoC), with a measure of internal IO for storage, USB and other things, and thus may be offered without a chipset.

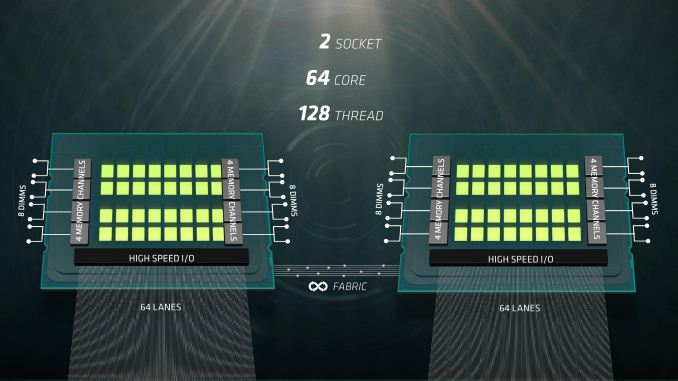

Naples will be offered as either a single processor platform (1P) or a dual processor platform (2P). In dual processor mode, and thus a system with 64 cores and 128 threads, each processor will use 64 of its PCIe lanes as a communication bus between the processors as part of AMD’s Infinity Fabric. The Infinity Fabric uses a custom protocol over these lanes, but bandwidth is designed to be on the order of PCIe. As each CPU uses 64 PCIe lanes to talk to the other, each of the CPUs can give 64 lanes to the rest of the system, for a total of 128 PCIe 3.0 lanes again.
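
A minimal Python sketch of the 1P/2P resource budget described above follows. It uses only the per-socket figures AMD quoted (32 cores with SMT, 128 PCIe 3.0 lanes, 64 of them given over to the socket-to-socket link in 2P); the helper `platform_budget` and the `Budget` type are hypothetical names for illustration, and the model ignores how lanes are actually grouped or bifurcated.

```python
from dataclasses import dataclass

# Per-socket figures as quoted by AMD for the top-end Naples part.
CORES_PER_SOCKET = 32
THREADS_PER_CORE = 2   # SMT
LANES_PER_SOCKET = 128  # PCIe 3.0
FABRIC_LANES_2P = 64    # lanes per socket used for the socket-to-socket link in 2P


@dataclass
class Budget:
    cores: int
    threads: int
    io_lanes: int  # lanes left for GPUs, NVMe, NICs and so on


def platform_budget(sockets: int) -> Budget:
    """Hypothetical helper: tally system-wide resources for a 1P or 2P Naples box."""
    if sockets not in (1, 2):
        raise ValueError("Naples is announced for 1P and 2P only")
    cores = CORES_PER_SOCKET * sockets
    # In 2P, each socket spends 64 of its lanes on the inter-socket link.
    lanes_per_socket = LANES_PER_SOCKET - (FABRIC_LANES_2P if sockets == 2 else 0)
    return Budget(cores, cores * THREADS_PER_CORE, lanes_per_socket * sockets)


for n in (1, 2):
    print(f"{n}P: {platform_budget(n)}")
```

Both configurations leave 128 lanes for the rest of the system: the second socket doubles cores and threads, not external I/O.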

On the memory side, with eight channels and two DIMMs per channel, AMD states that it officially supports up to 2TB of DRAM per socket, or 4TB in a dual-socket server. The total memory bandwidth available to a single CPU comes in at 170 GB/s.
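
The capacity and bandwidth figures can be sanity-checked with a little arithmetic. In the Python sketch below, 2TB across 16 DIMMs implies 128GB modules. AMD did not state a memory speed, so DDR4-2666 is our assumption: eight 64-bit channels at 2666 MT/s give a theoretical peak of roughly 170.6 GB/s, consistent with the quoted figure. `peak_bandwidth_gbs` is a hypothetical helper, and real sustained bandwidth will sit below this peak.

```python
# Per-socket memory figures from AMD's announcement.
CHANNELS = 8
DIMMS_PER_CHANNEL = 2
MAX_DRAM_PER_SOCKET_GB = 2048  # "2TB"

BYTES_PER_TRANSFER = 8  # a DDR4 channel is 64 bits wide (ECC bits not counted)


def peak_bandwidth_gbs(channels: int, mt_per_s: int) -> float:
    """Theoretical peak in GB/s (10^9 bytes): channels x transfers/s x 8 bytes."""
    return channels * mt_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9


dimms = CHANNELS * DIMMS_PER_CHANNEL            # 16 slots per socket
dimm_size_gb = MAX_DRAM_PER_SOCKET_GB // dimms  # implied module size
assumed_speed = 2666                            # ASSUMPTION: DDR4-2666, not confirmed by AMD

print(f"{dimms} DIMMs x {dimm_size_gb} GB per socket")
print(f"peak at DDR4-{assumed_speed}: {peak_bandwidth_gbs(CHANNELS, assumed_speed):.1f} GB/s")
```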

While not specifically mentioned in today’s announcement, we do know that Naples is not a single monolithic die of 500mm² or more. Naples uses four of AMD’s Zeppelin dies (the Ryzen dies) in a single package. With each Zeppelin die measuring 195.2mm², a monolithic equivalent would mean a total of 780mm² of silicon and around 19.2 billion transistors, which is far bigger than anything GlobalFoundries has ever produced, let alone attempted at 14nm. During our interview with Dr. Su, we postulated that multi-die packages would be the way forward on future process nodes given the difficulty of creating such large, imposing dies, and Dr. Su’s response indicated that this was a prominent direction to go in.
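
The die arithmetic above, and one reason multi-die packages are attractive, can be sketched in Python. The totals use only the article's figures (195.2mm² per die, 19.2 billion transistors across four dies, so 4.8 billion each). The yield part uses the textbook Poisson model Y = exp(-A·D0) with a defect density D0 = 0.2 defects/cm² that we picked purely for illustration; it is not a GlobalFoundries figure, and the model ignores edge losses, packaging yield and any area differences between a monolithic and a multi-die design.

```python
import math

ZEPPELIN_AREA_MM2 = 195.2
ZEPPELIN_TRANSISTORS_BN = 19.2 / 4  # 4.8 billion per die
DIES = 4
D0_PER_CM2 = 0.2                    # HYPOTHETICAL defect density, for illustration only


def poisson_yield(area_mm2: float, d0_per_cm2: float) -> float:
    """Fraction of dies with zero defects under a Poisson model: exp(-A * D0)."""
    return math.exp(-(area_mm2 / 100.0) * d0_per_cm2)  # 100 mm^2 = 1 cm^2


def silicon_per_good_part(die_area_mm2: float, dies_per_part: int, d0: float) -> float:
    """Wafer area (mm^2) spent per good package, assuming each die is tested
    before packaging so only known-good dies are combined."""
    return dies_per_part * die_area_mm2 / poisson_yield(die_area_mm2, d0)


total_area = DIES * ZEPPELIN_AREA_MM2               # ~780.8 mm^2
total_transistors = DIES * ZEPPELIN_TRANSISTORS_BN  # ~19.2 billion

mono = silicon_per_good_part(total_area, 1, D0_PER_CM2)
multi = silicon_per_good_part(ZEPPELIN_AREA_MM2, DIES, D0_PER_CM2)
print(f"monolithic: yield {poisson_yield(total_area, D0_PER_CM2):.0%}, {mono:.0f} mm^2 per good chip")
print(f"four dies:  yield {poisson_yield(ZEPPELIN_AREA_MM2, D0_PER_CM2):.0%} per die, {multi:.0f} mm^2 per good package")
```

Under this model the ratio of the two costs is exp(-3·A·D0) for a die of area A, so the four-die package uses less wafer per good part for any positive defect density; the chosen D0 only changes how large the gap is.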

Each die provides two memory channels, which brings us up to eight channels in total. However, each die only has 16 PCIe 3.0 lanes (24 if you count the PCH/NVMe lanes), so four dies would natively provide only 64 lanes (96 counting PCH/NVMe), short of the quoted 128. That means some form of mux/demux, PCIe switch, or accelerated interface is being used. This could be extra silicon on the package, given that AMD has so far used a single die design for Zen.
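
The lane gap can be tallied the same way. This Python sketch uses the per-die counts quoted above and adds a hypothetical 32-lane case, which is what each die would need if it physically carried more lanes than Ryzen exposes; `lane_shortfall` is an illustrative name, not anything AMD has described.

```python
DIES = 4
TARGET_LANES = 128  # per-socket figure AMD announced for Naples


def lane_shortfall(lanes_per_die: int, dies: int = DIES, target: int = TARGET_LANES) -> int:
    """Lanes the package would have to source from somewhere other than the dies."""
    return max(0, target - dies * lanes_per_die)


# 16 and 24 are the Ryzen-exposed counts; 32 is a hypothetical full-die count.
for per_die in (16, 24, 32):
    print(f"{per_die} lanes/die -> {DIES * per_die} native, {lane_shortfall(per_die)} missing")
```

If the shortfall is zero, no switch or extra silicon would be needed, so the question comes down to how many lanes the Zeppelin die really has.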

Note that we’ve seen multi-die packages before in previous products from both AMD and Intel. Although both companies have been experimenting with multi-die or 2.5D technology (AMD with Fury, Intel with EMIB), we are led to believe that these CPUs are similar to previous multi-chip designs, except that Infinity Fabric runs between the dies. At what bandwidth, we do not know at this point. It is also pertinent to note that there is a lot of discussion about the strength of AMD's Infinity Fabric, as well as how threads are handled within a silicon die itself, which has two core complexes of four cores each. This is something we are investigating on the consumer side, and it will likely be very relevant on the enterprise side as well.
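
To illustrate why thread placement matters, the Python sketch below maps a flat core index onto the package hierarchy: four dies, each with two four-core CCXes, for 32 cores. The contiguous numbering is an assumption for illustration only; real operating systems may enumerate cores (and SMT siblings) differently, and `locate` and `path_between` are hypothetical helpers.

```python
from typing import NamedTuple

# Package hierarchy implied by the article: 4 dies x 2 CCX x 4 cores = 32 cores.
DIES = 4
CCX_PER_DIE = 2
CORES_PER_CCX = 4
CORES_PER_DIE = CCX_PER_DIE * CORES_PER_CCX


class CoreLocation(NamedTuple):
    die: int
    ccx: int
    core: int


def locate(core_id: int) -> CoreLocation:
    """Map a flat core index to (die, CCX, core), ASSUMING contiguous numbering."""
    if not 0 <= core_id < DIES * CORES_PER_DIE:
        raise ValueError(f"core_id {core_id} out of range")
    die, rest = divmod(core_id, CORES_PER_DIE)
    ccx, core = divmod(rest, CORES_PER_CCX)
    return CoreLocation(die, ccx, core)


def path_between(a: int, b: int) -> str:
    """Classify how far data must travel between two cores in this model."""
    la, lb = locate(a), locate(b)
    if la.die != lb.die:
        return "cross-die"  # leaves the die over the package interconnect
    if la.ccx != lb.ccx:
        return "cross-CCX"  # same die, but leaves the four-core complex
    return "same CCX"       # cores share the complex's L3 cache


print(path_between(0, 3), path_between(0, 4), path_between(0, 8))
```

A scheduler that keeps communicating threads within one CCX, or at least one die, avoids the longer paths; how much that matters on Naples depends on fabric bandwidth and latency, which AMD has not disclosed.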

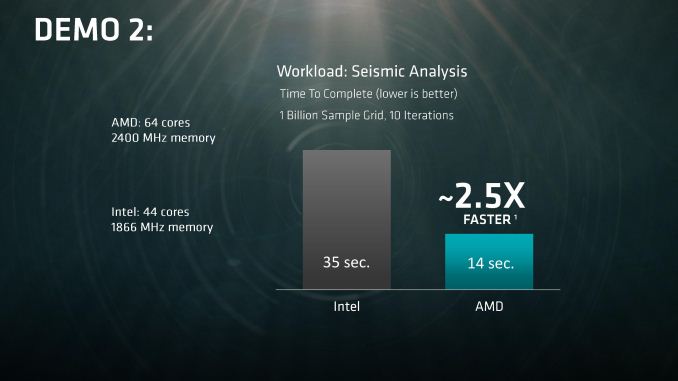

In the land of benchmark numbers we can’t verify (yet), AMD showed demonstrations at the recent Ryzen Tech Day. The main demonstration was a sparse matrix calculation on a 3D dataset for seismic analysis. In this test, solving a 15-diagonal matrix of 1 billion samples took 35 seconds on an Intel machine vs 18 seconds on an AMD machine (both machines using 44 cores and DDR4-1866). When allowed to use its full 64 cores and DDR4-2400 memory, AMD shaved off another four seconds. Again, we can’t verify these results, and it’s a single data point, but a diagonal matrix solver is a reasonable representative of an enterprise workload. We were told that the clock frequencies for each chip were at stock, although AMD did say that the Naples clocks were not yet finalized.
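
AMD did not say how its seismic solver works. As a rough illustration of what a 15-diagonal matrix on a 3D grid looks like, the Python sketch below stores the matrix by diagonals (the DIA sparse format) and computes a matrix-vector product, the kernel that iterative solvers such as conjugate gradient repeat on every iteration. The 15-offset pattern built by `grid_offsets_15` is hypothetical, as are both function names. Kernels like this do little arithmetic per byte loaded, so they tend to be limited by memory bandwidth.

```python
from itertools import product


def dia_matvec(offsets, diags, x):
    """Compute y = A @ x for a square matrix stored by diagonals (DIA format).

    diags[k][i] holds A[i][i + offsets[k]]; entries whose column index would
    fall outside the matrix are skipped.
    """
    n = len(x)
    y = [0.0] * n
    for off, diag in zip(offsets, diags):
        # Only rows i with 0 <= i + off < n touch a real matrix entry.
        for i in range(max(0, -off), min(n, n - off)):
            y[i] += diag[i] * x[i + off]
    return y


def grid_offsets_15(nx, ny):
    """HYPOTHETICAL 15-diagonal pattern for a grid stored x-fastest:
    the main diagonal plus +/- every nonzero combination of unit steps
    in x (1), y (nx) and z (nx * ny). Requires nx, ny >= 2 so offsets are distinct.
    """
    steps = (1, nx, nx * ny)
    offsets = {0}
    for a, b, c in product((0, 1), repeat=3):
        off = a * steps[0] + b * steps[1] + c * steps[2]
        offsets.update((off, -off))
    return sorted(offsets)


# Usage: a 4 x 4 x 4 grid (64 unknowns) with a simple 15-band test matrix
# (14 on the main diagonal, -1 on the other bands).
# A real stencil would also zero entries that wrap across grid edges; omitted here.
nx = ny = nz = 4
n = nx * ny * nz
offsets = grid_offsets_15(nx, ny)
diags = [[14.0 if off == 0 else -1.0] * n for off in offsets]
y = dia_matvec(offsets, diags, [1.0] * n)
print(len(offsets), y[0], y[30])
```

Storing each band as a contiguous array keeps the inner loop a simple strided walk, which is why DIA suits matrices whose nonzeros sit on a few fixed diagonals.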

What we don’t know are power numbers, frequencies, processor lists, pricing, partners, segmentation, and all the meaty stuff. We expect AMD to mount a strong attack on the 1P/2P server markets, which is where 99% of the enterprise is focused, particularly where high-performance virtualization or storage is needed. How Naples migrates into the workstation space is an unknown, but I hope it does. We’re working with AMD to secure samples for Johan and me in advance of the Q2 launch.

91 Comments

lilmoe - Tuesday, March 7, 2017

Given the MT showings of Ryzen, and the relatively lower price, this should wipe the floor with Intel in the server segment in density, performance AND efficiency. I really hope this takes off for AMD. This market is in dire need of solid competition.

WinterCharm - Tuesday, March 7, 2017

I agree. What a time to be alive... CPUs are getting exciting again!

ddriver - Tuesday, March 7, 2017

128 pcie lanes, that sounds like my kind of toy. Meanwhile, top end xeons come with only 32.

128 lanes also means that at least by design ryzen ought to have 32 rather than the announced 24 lanes. 8 lanes either disabled or reserved for system use?

They say in dual socket each chip loses half the pcie lanes, but I assume that's just because they need the pins for infinity fabric, rather than actually using pcie for socket to socket transfers. The pcie protocol isn't optimal for that task even if bandwidth is plenty, and those pins can be better utilized in multi socket systems.

Ryzen might suffer a bit in single threaded performance, but that is only because the architecture is optimized from the ground up for HPC and server in mind. It will make a great server chip, which is a smart move by AMD, seeing the kind of margins that market has compared to desktop. Ryzen is already great for content creation workstations, the only minor complaint being the pcie lane count, and the lack of ecc memory support, and Naples solves those two.

I assume pricing will be very nice too, because that's what they did in desktop, and in servers it will be even easier to massively undercut intel, because their server chips are even-more-ridiculously-overpriced. And amd needs to steal server market badly, so it can sell as many of those cores at much higher prices than they would in desktop.

A xeon E7-8890 v4 costs a whopping 7400$. A top end Naples will easily wipe the floor with it, and seeing how it is merely 4 500$ Ryzen dies slapped together, I would not be surprised to see that chip retailing at around 3000$.

ERJ - Tuesday, March 7, 2017

E7-8890 is a 4 socket chip. A more fair comparison would be E5-2699 which is a dual processor chip (22-Core). Those go for around $3500 retail.

ERJ - Tuesday, March 7, 2017

Strike that...an 8 socket chip.

ddriver - Tuesday, March 7, 2017

The 4S version ain't much cheaper though - 7000$. There is really no 2S direct equivalent, but if there was, I doubt it would be less than 6k$.

ZeDestructor - Tuesday, March 7, 2017

Actually, if you design your own cache-coherent QPI "switches" (like SGI), you can go much, much higher, into the thousands...

DanNeely - Tuesday, March 7, 2017

"128 lanes also means that at least by design ryzen outta have 32 rather than the announced 24 lanes. 8 lanes either disabled or reserved for system use?"

This doesn't necessarily follow; while the dies may be similar overall, tinkering around the edges on common blocks is common. It's possible the extra PCIe lanes on the server version occupy space that holds SoC components on the consumer models.

ddriver - Tuesday, March 7, 2017

Nah, it is most likely the same die, AMD don't have the resources to go nuts on custom dies. Also, it is not like ryzen has iGPU or something like that, there isn't really anything to replace for server because ryzen itself is a server design to begin with.

extide - Wednesday, March 8, 2017

They already said it's the same die, Zeppelin.