The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

Star Swarm & The Test

For today’s DirectX 12 preview, Microsoft and Oxide Games have supplied us with a newer version of Oxide’s Star Swarm demo. Originally released in early 2014 as a demonstration of Oxide’s Nitrous engine and the capabilities of Mantle, Star Swarm is a massive space combat demo that is designed to push the limits of high-level APIs and demonstrate the performance advantages of low-level APIs. Due to its use of thousands of units and other effects that generate a high number of draw calls, Star Swarm can push over 100K draw calls, a massive workload that causes high-level APIs to simply crumple.

Because Star Swarm generates so many draw calls, it is essentially a best-case scenario test for low-level APIs, exploiting the fact that high-level APIs can’t effectively spread out the draw call workload over several CPU threads. As a result the performance gains from DirectX 12 in Star Swarm are going to be much greater than in most (if not all) video games, but nonetheless it’s an effective tool to demonstrate the performance capabilities of DirectX 12 and to showcase how it is capable of better distributing work over multiple CPU threads.
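To make the idea of spreading draw call work over several CPU threads more concrete, here is a minimal conceptual sketch in Python. It is not Direct3D code and not how Nitrous is implemented: the function names (`split_into_batches`, `record_command_list`, `record_frame`) are hypothetical, the "command list" is just a Python list, and Python threads will not show a real speedup for this kind of work. It only illustrates the partitioning pattern, where each thread records its own batch and the batches are then submitted in their original order.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_batches(draw_calls, num_threads):
    """Split draw calls into num_threads contiguous, near-equal batches."""
    base, extra = divmod(len(draw_calls), num_threads)
    batches, start = [], 0
    for i in range(num_threads):
        # The first `extra` batches take one additional call each.
        size = base + (1 if i < extra else 0)
        batches.append(draw_calls[start:start + size])
        start += size
    return batches


def record_command_list(batch):
    """Hypothetical stand-in for recording one command list on one thread."""
    return [("draw", call_id) for call_id in batch]


def record_frame(draw_calls, num_threads):
    """Record one command list per thread, keeping the original draw order."""
    batches = split_into_batches(draw_calls, num_threads)
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        # pool.map returns results in input order, so batch order is preserved.
        return list(pool.map(record_command_list, batches))


# 100,000 draw calls (the order of magnitude cited above) over 6 threads.
frame = record_frame(list(range(100_000)), num_threads=6)
print([len(command_list) for command_list in frame])
```

The point of the pattern is that no single thread has to process the entire frame's draw calls, which is the serialization the paragraph above attributes to high-level APIs.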

It should be noted that while Star Swarm itself is a synthetic benchmark, the underlying Nitrous engine is relevant and is being used in multiple upcoming games. Stardock is using the Nitrous engine for their forthcoming Star Control game, and Oxide is using the engine for their own game, set to be announced at GDC 2015. So although Star Swarm is still a best-case scenario, many of its lessons will be applicable to these future games.

As for the benchmark itself, we should also note that Star Swarm is a non-deterministic simulation. The benchmark is based on having two AI fleets fight each other, and as a result the outcome can differ from run to run. The good news is that although it’s not a deterministic benchmark, the benchmark’s RTS mode keeps run-to-run variation low enough to produce reasonably consistent results. Individual runs will still fluctuate somewhat, but the benchmark will reliably demonstrate larger performance trends.
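For readers who want to quantify run-to-run variation in their own testing, one simple approach is to repeat the benchmark several times and look at the spread relative to the mean. The sketch below is an assumption about how one might do this, not a description of the methodology used for this article, and the frame rates in it are placeholder values chosen for easy arithmetic, not measurements.

```python
from statistics import mean, stdev


def summarize_runs(fps_per_run):
    """Return the mean, sample standard deviation, and coefficient of variation."""
    avg = mean(fps_per_run)
    spread = stdev(fps_per_run)  # sample standard deviation (n - 1 denominator)
    return {
        "mean": avg,
        "stdev": spread,
        "cv_percent": 100 * spread / avg,
    }


# Placeholder values only; substitute the average FPS from your own runs.
placeholder_runs = [60.0, 62.0, 58.0, 61.0, 59.0]
summary = summarize_runs(placeholder_runs)
print(summary)
```

A small coefficient of variation suggests that differences between configurations larger than that spread reflect real trends rather than noise from the simulation.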

The Test

For today’s preview Microsoft, NVIDIA, and AMD have provided us with the necessary WDDM 2.0 drivers to enable DirectX 12 under Windows 10. The NVIDIA driver is 349.56 and the AMD driver is 15.200. At this time we do not know when these early WDDM 2.0 drivers will be released to the public, though we would be surprised not to see them released by the time of GDC in early March.

In terms of bugs and other known issues, Microsoft has informed us that there are some known memory and performance regressions in the current WDDM 2.0 path that have since been fixed in interim builds of Windows. In particular the WDDM 2.0 path may see slightly lower performance than the WDDM 1.3 path for older drivers, and there is an issue with memory exhaustion. For this reason Microsoft has suggested that a 3GB card is required to use the Star Swarm DirectX 12 binary, although in our tests we have been able to run it on 2GB cards seemingly without issue. Meanwhile DirectX 11 deferred context support is currently broken in the combination of Star Swarm and NVIDIA's drivers, causing Star Swarm to immediately crash, so these results are with D3D 11 deferred contexts disabled.

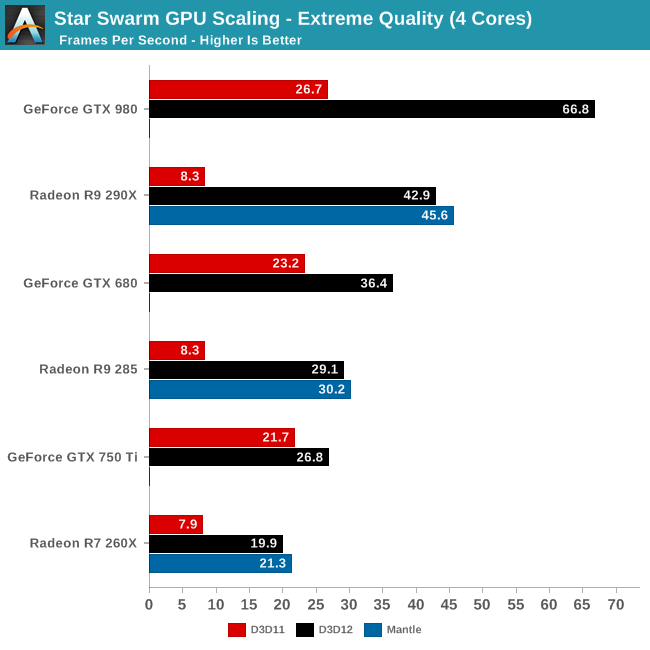

For today’s article we are looking at a small range of cards from both AMD and NVIDIA to showcase both performance and compatibility. For NVIDIA we are looking at the GTX 980 (Maxwell 2), GTX 750 Ti (Maxwell 1), and GTX 680 (Kepler). For AMD we are looking at the R9 290X (GCN 1.1), R9 285 (GCN 1.2), and R7 260X (GCN 1.1). As we mentioned earlier, support for Fermi and GCN 1.0 cards will be forthcoming in future drivers.

Meanwhile on the CPU front, to showcase the performance scaling of Direct3D we are running the bulk of our tests on our GPU testbed with 3 different settings to roughly emulate high-end Core i7 (6 cores), i5 (4 cores), and i3 (2 cores) processors. Unfortunately we cannot control for our 4960X’s L3 cache size, however that should not be a significant factor in these benchmarks.

DirectX 12 Preview CPU Configurations (i7-4960X)

| Configuration | Emulating |
| --- | --- |
| 6C/12T @ 4.2GHz | Overclocked Core i7 |
| 4C/4T @ 3.8GHz | Core i5-4670K |
| 2C/4T @ 3.8GHz | Core i3-4370 |

Though not included in this preview, AMD’s recent APUs should slot between the 2 and 4 core options thanks to the design of AMD’s CPU modules.

| Component | Configuration |
| --- | --- |
| CPU | Intel Core i7-4960X @ 4.2GHz |
| Motherboard | ASRock Fatal1ty X79 Professional |
| Power Supply | Corsair AX1200i |
| Hard Disk | Samsung SSD 840 EVO (750GB) |
| Memory | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case | NZXT Phantom 630 Windowed Edition |
| Monitor | Asus PQ321 |
| Video Cards | AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon R7 260X, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 750 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers | NVIDIA Release 349.56 Beta, AMD Catalyst 15.200 Beta |
| OS | Windows 10 Technical Preview 2 (Build 9926) |

Finally, while we’re going to take a systematic look at DirectX 12 from both a CPU standpoint and a GPU standpoint, we may as well answer the first question on everyone’s mind: does DirectX 12 work as advertised? The short answer: a resounding yes.

245 Comments


Mr Perfect - Sunday, February 8, 2015

That's not what he's saying though, he said TDP is some measure of what amount of heat is "wasted" heat. As if there's some way to figure out what part of the 165 watts is doing computational work, and what is just turning into heat without doing any computational work. That's not what TDP measures.

Also, CPUs and GPUs can routinely go past TDP, so I'm not sure where people keep getting TDP is maximum power draw from. It's seen regularly in the benchmarks here at Anandtech. That's usually one of the goals of the power section of reviews, seeing if the manufacturers TDP calculation of typical power draw holds up in the real world.

Mr Perfect - Sunday, February 8, 2015

Although, now that I think about it, I do remember a time when TDP actually was pretty close to maximum power draw. But then Intel came out with the Netburst architecture and started defining TDP as the typical power used by the part in real world use, since the maximum power draw was so ugly. After a lot of outrage from the other companies, they picked up the same practice so they wouldn't seem to be at a disadvantage in regard to power draw. That was ages ago though, TDP hasn't meant maximum power draw for years.

Strunf - Sunday, February 8, 2015

TDP essentially means your GPU can work at that power input for a long time, in the past the CPU/GPU were close to it cause they didn't have throttle, idles and what not technologies. Today they have and they can go past the TDP for "short" period of times, with the help of thermal sensors they can adjust the power as they need without risking of burning down the CPU/GPU.

YazX_ - Friday, February 6, 2015

Dude, its total System power consumption not video card only.

Morawka - Friday, February 6, 2015

are you sure you not looking at factory overclocked cards? The 980 has a 8 pin and 6 pin connector. You gotta minus the CPU and Motherboard power.

Check any reference review on power consumption

http://www.guru3d.com/articles_pages/nvidia_geforc...

Yojimbo - Friday, February 6, 2015

Did you notice the 56% greater performance? The rest of the system is going to be drawing more power to keep up with the greater GPU performance. NVIDIA is getting much greater benefit of having 4 cores than 2, for instance. And who knows, maybe the GPU itself was able to run closer to full load. Also, the benchmark is not deterministic, as mentioned several times in the article. It is the wrong sort of benchmark to be using to compare two different GPUs in power consumption, unless the test is run significantly many times. Finally, you said the R9 290X-powered system consumed 14W more in the DX12 test than the GTX 980-powered system, but the list shows it consumed 24W more. Let's not even compare DX11 power consumption using this benchmark, since NVIDIA's performance is 222% higher.

MrPete123 - Friday, February 6, 2015

Win7 will be dominant in businesses for some time, but not gaming PCs where this will be benefit more.

Yojimbo - Friday, February 6, 2015

Most likely the main reasons for consumers not upgrading to Windows 10 will be laziness, comfort, and ignorance.

Murloc - Saturday, February 7, 2015

people who are CPU bottlenecked are not that kind of people given the amount of money they spend on GPUs.

Frenetic Pony - Friday, February 6, 2015

FREE. Ok. FREE. F and then R and then E and then another E.