The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST - Posted in GPUs, AMD, Microsoft, NVIDIA, DirectX 12

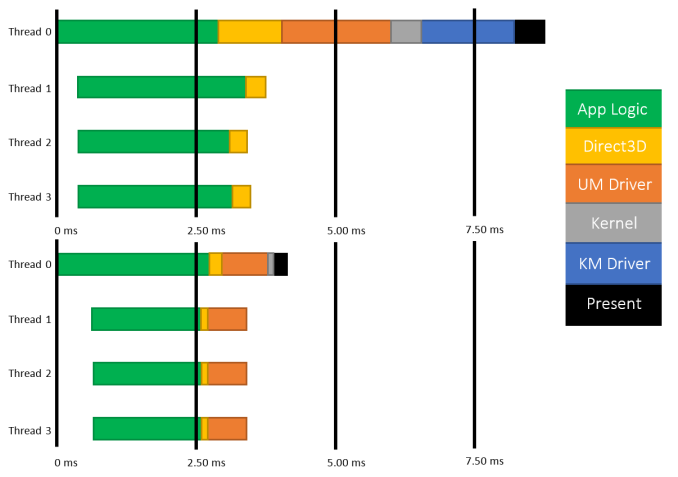

About a year and a half ago AMD kicked off the public half of a race to improve the state of graphics APIs. Dubbed "Mantle", AMD’s in-house API for their Radeon cards stripped away the abstraction and inefficiencies of traditional high-level APIs like DirectX 11 and OpenGL 4, and instead gave developers a means to access the GPU in a low-level, game console-like manner. The impetus: with a low-level API, engine developers could achieve better performance than with a high-level API, sometimes vastly exceeding what DirectX and OpenGL could offer.

While AMD was the first such company to publicly announce their low-level API, they were not the last. 2014 saw the announcement of APIs such as DirectX 12, OpenGL Next, and Apple’s Metal, all of which would implement similar ideas for similar performance reasons. It was a renaissance in the graphics API space after many years of slow progress, and one desperately needed to keep pace with the progress of both GPUs and CPUs.

In the PC graphics space we’ve already seen how early versions of Mantle perform, with Mantle offering some substantial boosts in performance, especially in CPU-bound scenarios. As awesome as Mantle is though, it is currently a de-facto proprietary AMD API, which means it can only be used with AMD GPUs; what about NVIDIA and Intel GPUs? For that we turn towards DirectX, Microsoft’s traditional cross-vendor API that will be making the same jump as Mantle, but using a common API for the benefit of every vendor in the Windows ecosystem.

DirectX 12 was first announced at GDC 2014, where Microsoft unveiled the existence of the new API along with their planned goals, a brief demonstration of very early code, and limited technical details about how the API would work. Since then Microsoft has been hard at work on DirectX 12 as part of the larger Windows 10 development effort, culminating in the release of the latest Windows 10 Technical Preview, Build 9926, which is shipping with an early preview version of DirectX 12.

GDC 2014 - DirectX 12 Unveiled: 3DMark 2011 CPU Time: Direct3D 11 vs. Direct3D 12

With the various pieces of Microsoft’s latest API finally coming together, today we will be taking our first look at the performance future of DirectX. The API is stabilizing, video card drivers are improving, and the first DirectX 12 application has been written; Microsoft and their partners are finally ready to show off DirectX 12. To that end, today we’ll be looking at DirectX 12 through Oxide Games’ Star Swarm benchmark, our first DirectX 12 application and a true API efficiency torture test.

Does DirectX 12 bring the same kind of performance benefits we saw with Mantle? Can it resolve the CPU bottlenecking that DirectX 11 struggles with? How well does the concept of a low-level API work for a common API with disparate hardware? Let’s find out!

245 Comments


Jeffro421 - Thursday, February 12, 2015 - link

Something is horribly wrong with your results. I just ran this benchmark, on extreme, with a 270X 4GB and I got 39.61 FPS on DX11. You say a 290X only got 8.3 fps on DX11? http://i.imgur.com/JzX0UAa.png

Ryan Smith - Saturday, February 14, 2015 - link

You ran the Follow scenario. Our tests use the RTS scenario. Follow is a much lighter workload and far from reliable due to the camera swinging around.

0VERL0RD - Friday, February 13, 2015 - link

Been meaning to ask why both cards show vastly different total memory in DirectX diag. Don't recall the article indicating how much memory each card had. Assuming they're equal. Is it normal for Nvidia to not report correct memory or is something else going on?

Ryan Smith - Saturday, February 14, 2015 - link

The total memory reported is physical + virtual. As far as I can tell AMD is currently allocating 4GB of virtual memory, whereas NVIDIA is allocating 16GB of virtual memory.

trisct - Friday, February 13, 2015 - link

MS needs a lot more Windows installs to make the Store take off, but first they need more quality apps and a competitive development stack. The same app on iOS or Android is almost always noticeably smoother with an improved UI (often extra widget behaviors that the Windows tablet versions cannot match). Part of this is maturity of the software, but Microsoft has yet to reach feature parity with the competing development environments, so it's also harder for devs to create those smooth apps in the first place.

NightAntilli - Friday, February 13, 2015 - link

We know Intel has great single core performance. So the lack of benefits for more than 4 cores is not surprising. The most interesting aspect would be to test the CPUs with weak single core performance, like the AMD FX series. Using the FX series rather than (only) the Intel CPUs would be more telling. 4 cores would not be enough to shift the bottleneck to the GPU with the FX CPUs. This would give a much better representation of scaling beyond 4 cores. Right now we don't know if the spreading of the tasks across multiple threads is limited to 4 cores, or if it scales equally well to 6 threads or 8 threads also.

This is a great article, but I can't help feeling that we would've gotten more out of it if at least one AMD CPU was included. Either an FX-6xxx or FX-8xxx.

Ryan Smith - Saturday, February 14, 2015 - link

Ask and ye shall receive: http://www.anandtech.com/show/8968/star-swarm-dire...

NightAntilli - Tuesday, February 17, 2015 - link

Thanks a lot :) The improvements are great.

0ldman79 - Monday, February 16, 2015 - link

One benefit for MS of having (almost) everyone on a single OS: just how many man hours are spent patching the older OSes? If they can set up the market so that they can drop support for Vista, 7 and 8 earlier than anticipated, they will save themselves a tremendous amount of money.

Blackpariah - Tuesday, February 17, 2015 - link

I'm just hoping the already outdated console hardware in PS4/Xbone won't hold things back too much for the PC folks. On a side note... I'm in a very specific scenario where my new GTX 970, with DX11, is getting 30-35 fps @ 1080p in Battlefield 4 because the CPU is still an old Phenom II X4... while with my older R9 280, on Mantle, the framerate would stay above 50 at all times at almost identical graphic detail & same resolution.