Browser Face-Off: Battery Life Explored 2014

by Stephen Barrett on August 12, 2014 6:00 AM EST

It has been five years since we last benchmarked the effect of web browsers on battery life, and a lot has changed. Back then, our testing included Opera 9 & 10, Chrome 2, Firefox 3.5.2, Safari 4, and IE8. Just looking at those version numbers is nostalgic. Not only have the browsers gone through many revisions since then, but computer hardware and the Windows operating system are very different as well.

Windows Timers: Computer Architecture & Google Chrome

Before we get to any battery life testing, we need to provide some background on a few of these changes, which requires a deeper understanding of how operating systems and hardware interface with each other. If you've browsed tech news recently, you may have seen coverage of a Google Chrome design decision from 2010. To recap, Google Chrome on Windows requests that the operating system use a 1ms timer in an effort to increase web page rendering speed. Faster is better, but there is a problem with this technique.

For those unfamiliar with OS timers, they form a core component of any operating system. There are two fundamentally different ways to handle timing in a computer system: polling mode and interrupt mode. A polling system consists of software and hardware that continuously checks to see if something of interest has happened. For example, if a driver sets up a hardware device (e.g. a sound card) to acquire input and then continuously reads memory to check for new values, this is a polling system. However, if the driver sets up the device to acquire input and then waits for interrupts (hardware notifications) that new data is available in memory, this is an interrupt system.
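To make the distinction concrete, here is a minimal user-space sketch in Python. It is only an analogy: a `threading.Event` stands in for the hardware notification, and the function names (`wait_by_polling`, `wait_by_notification`, `demo`) are made up for this illustration rather than taken from any driver API.

```python
import threading


def wait_by_polling(flag):
    """Polling: check the flag over and over until it is set.

    Returns the number of checks made. The loop never sleeps, so it keeps
    a CPU core busy for the whole wait.
    """
    checks = 0
    while not flag.is_set():
        checks += 1
    return checks


def wait_by_notification(flag, timeout_s=1.0):
    """Interrupt-style: block until another thread signals the flag.

    The waiting thread sleeps in the meantime, so other work can run.
    Returns True if the flag was set before the timeout expired.
    """
    return flag.wait(timeout_s)


def demo(delay_s=0.05):
    """Signal the flag after delay_s seconds and wait for it both ways."""
    flag = threading.Event()

    threading.Timer(delay_s, flag.set).start()
    checks = wait_by_polling(flag)

    flag.clear()
    threading.Timer(delay_s, flag.set).start()
    notified = wait_by_notification(flag)

    return checks, notified
```

Calling `demo()` typically reports a very large number of polling checks for a single event, while the notification path performs one blocking wait.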

In general, interrupt mode is preferred as it saves significant resources and allows other threads to work while the waiting thread sleeps; a single application using polling could easily eat up an entire CPU core. The vast majority of API calls a software developer has available do not even provide timing mode selection and simply use interrupt mode. There are other factors as well, like preemption, but they are outside the scope of this article.

There's a problem with interrupt mode, however: it is slower, for a couple of reasons. First, there is interrupt latency. Compared to polling mode, which checks for something to happen over and over, interrupts are always going to lose on response time.

As an example, if I watch someone building a piece of furniture the entire time, I know exactly when they finish and I can use the furniture. On the other hand, if I wait for the builder to tell me, I could do other things in the meantime (work, sleep, play games, etc.). Of course I wouldn't know exactly when the furniture is ready and there would be a delay between when the furniture is complete and when I first begin using it.

General purpose operating systems like Windows are not typically concerned with interrupt latency. This is more important in embedded mechanical controls like those in your car. But there's another reason interrupts are slower than polling: timer coalescing.

To save power, Microsoft uses timer coalescing in Windows. Applications and drivers waiting for an event can specify their timeout in milliseconds, but in most cases the requested time will be rounded to a multiple of Windows' 15.6ms default timebase (prior to Windows 8, more on that later). For example, if I wrote some code that waits for an event for 200 milliseconds, Windows might actually sleep my thread for 202.8ms. When two threads request timers, this technique helps Windows continue to wake up roughly 64 times per second instead of twice as often, 128 times per second.
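The arithmetic behind those figures can be sketched in a few lines of Python. This assumes the request is simply rounded up to the next multiple of the 15.6ms timebase; the real behavior also depends on where in the current tick the request lands, and the function names are illustrative only.

```python
import math

TICK_MS = 15.6  # default Windows timebase quoted above


def rounded_wait_ms(requested_ms, tick_ms=TICK_MS):
    """Round a requested wait up to a whole number of timer ticks (assumed model)."""
    ticks = math.ceil(requested_ms / tick_ms)
    return ticks * tick_ms


def wakeups_per_second(tick_ms=TICK_MS):
    """How often the system wakes if it only wakes on tick boundaries."""
    return 1000.0 / tick_ms
```

Under this model a 200ms request spans 13 ticks, or about 202.8ms, and a 15.6ms tick works out to roughly 64 wake-ups per second.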

The result of timer coalescing is that a web browser or other application could theoretically wait up to 15.6ms even when it requests a 1ms wait. When push comes to shove, some applications bypass the regular timer mechanism and ask Windows to use a 1ms timer, circumventing these delays. This handicaps CPU and OS power saving features because the longest the hardware and operating system can ever sleep is 1ms. Factoring in thread work time and wake time, sleep duration is likely much less than 1ms.

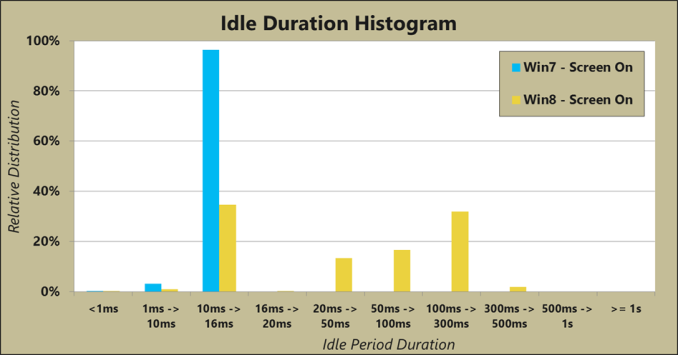

The power penalty of applications requesting a 1ms timer is exacerbated in Windows 8. In Windows 8, Microsoft maintains the same timer coalescing and timer request API calls, but they have implemented a tick-less kernel under the hood. With a tick-less kernel, the operating system doesn’t just try to round sleep times to a 'default timer resolution' of 15.6ms like Windows 7 and prior did, but instead at every wake event, Windows 8 analyzes the upcoming timed events and intelligently schedules its next wake up time. Therefore, sleep times could be either shorter or much longer than the previous default of 15.6ms. Depending on the distribution of wake up times, this could save significant power. Microsoft provided a blog post with some detail and data regarding the move.

Microsoft did not provide detail on what applications were running when they performed this test. However, we can tell from the data that Chrome was not running, otherwise the only values in the distribution would be at 1ms and below. And that's the crux of the problem.
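A toy model shows why a single 1ms timer dominates the distribution. The sketch below assumes a tick-less scheduler that wakes only at the next pending timer deadline; the function names and the 40ms and 100ms background timers are invented for illustration and are not taken from Microsoft's data.

```python
def periodic_deadlines(period_ms, horizon_ms):
    """Deadlines of a periodic timer up to and including horizon_ms."""
    count = int(horizon_ms // period_ms)
    return [period_ms * i for i in range(1, count + 1)]


def sleep_gaps_ms(deadlines_ms, start_ms=0):
    """Sleep durations when the system wakes only at timer deadlines."""
    gaps = []
    now = start_ms
    for deadline in sorted(set(deadlines_ms)):
        if deadline > now:
            gaps.append(deadline - now)
            now = deadline
    return gaps


# Two hypothetical background timers, 40ms and 100ms, over a 200ms window.
background = periodic_deadlines(40, 200) + periodic_deadlines(100, 200)

# The same workload plus one application asking for a 1ms timer.
with_1ms_timer = background + periodic_deadlines(1, 200)
```

With only the background timers, `sleep_gaps_ms(background)` yields sleeps of 20ms to 40ms. Once the 1ms timer is added, no sleep in `sleep_gaps_ms(with_1ms_timer)` is longer than 1ms.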

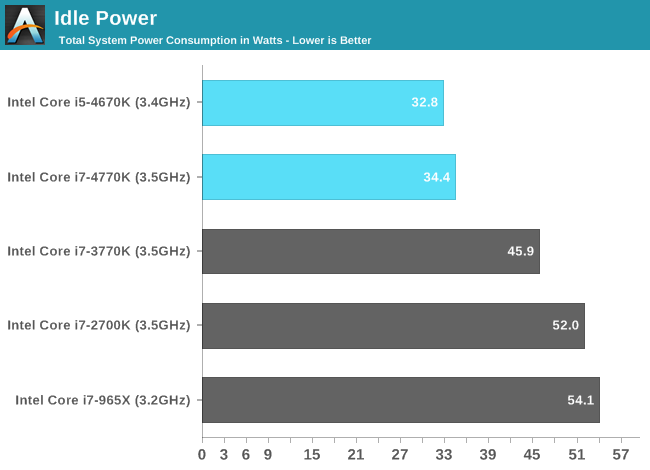

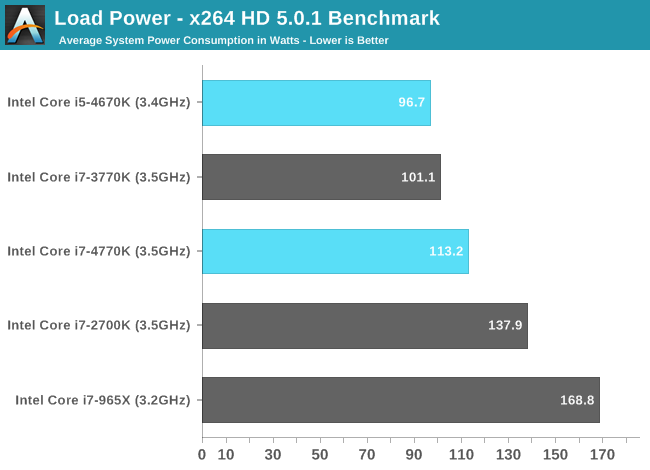

When Intel launched Haswell, one of the focus points was idle power consumption. The theory is that when you’re staring at a static screen reading an article, or the device is ‘locked’, you can save significant battery life. Consider the following charts of power use for a desktop system:

The idle power of even a desktop Haswell is significantly better than Ivy Bridge

But the load power is the same or worse

Intel spent many years designing Haswell as an improvement over Ivy Bridge for power consumption, but they are at the mercy of application developers. If an application wakes the device up for work every 1ms, the idle power benefits of Haswell don’t have nearly the impact they could.

The developers of Chrome are aware of this and optimized Chrome to turn off its 1ms timer request if the system is running on battery power. However, this optimization does not work, and Chrome unfortunately always requests a 1ms timer. Other developers have criticized this timer technique in Chrome, pointing out that other browsers and high performance applications do not follow the same design pattern and that relying on precise interrupts from a general purpose OS is a fundamentally flawed design. They have likened it to old video games that changed speed with the MHz of the CPU.

By way of comparison, IE and Firefox use a frame rate limiting technique: they keep the default timer resolution of 15.6ms, but if a website requests a 1ms refresh rate, they check the system clock after waking up and compute how many iterations to perform to achieve a virtual 1ms update rate. For example, if the browser wakes up after 13ms, it performs 13 iterations before sleeping again. The result is that they do more work less often.
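The catch-up idea can be sketched as follows. This is not code from IE or Firefox, just a minimal Python illustration of the technique as described above; the function name and the sample wake times are hypothetical.

```python
def catch_up_iterations(wake_times_ms, step_ms=1):
    """For each wake-up, count how many virtual steps of step_ms are owed.

    wake_times_ms holds the clock readings taken on each wake-up, measured
    from a start time of 0. Time that does not fill a whole step is carried
    over to the next wake-up rather than dropped.
    """
    last_update_ms = 0
    per_wake = []
    for now_ms in wake_times_ms:
        owed = int((now_ms - last_update_ms) // step_ms)
        per_wake.append(owed)
        last_update_ms += owed * step_ms
    return per_wake
```

For wake-ups at 13ms, 29ms and 44ms this returns `[13, 16, 15]`: the page still sees 44 updates over 44ms, but the browser only woke three times to deliver them.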

112 Comments

SanX - Wednesday, August 13, 2014 - link

Linux market share was below 1% for almost two decades.

fokka - Tuesday, August 12, 2014 - link

i find it slightly worrying that firefox is tied last in this test. ff has been my browser of choice for years and using anything else than my nicely customized setup makes web-browsing less comfortable than what i'm used to. but getting an hour more battery life out of my aging laptop when away from the socket wouldn't be bad either.

maybe i'll give chrome (or one of its derivatives) another chance and try to set it up to my liking as good as i can. we'll see.

CaedenV - Tuesday, August 12, 2014 - link

Mozilla has not managed to put out anything good recently. The last few years they have been playing a 'me too' game with Chrome, to the point now where they even look similar. Outside of that FF has gotten slow, fat, and buggy. Unless you have privacy concerns or pet plugins that only work with FF then it is time to move on to Chrome or IE.

And the issue is not limited to their browser. I mean look at the FF Phone and some of their other recent projects... sad to see a traditionally good company flounder like this.

edzieba - Tuesday, August 12, 2014 - link

You could also try something like Palemoon, which is essentially firefox's core but with some of the more dubious UI decisions removed or reversible and a lot of legacy crud cleared out.

asmian - Thursday, August 14, 2014 - link

+1 for Palemoon!

After reading this post I was curious. I just transferred, migrating my Firefox profile with the tool available to Palemoon with absolutely no issues, and apart from some minor preference tweaks to the toolbars it's a virtually identical browsing experience but I can immediately see and feel the difference in faster page loading. All my extensions and stored passwords work right including FireFTP and site logins. And I'm now running 64-bit, unlike FF. Awesome. ;)

Since the major principle of Palemoon is to cut unnecessary code from the stock FF source to speed up page rendering, it is exactly the sort of alternative browser that should have been included in this test for comparison, since it is now so divergent from official FireFox. Especially since the prevailing logic seems to be "Chrome wins, but sucks for privacy" and Palemoon, from my reading and so far happy experience, doesn't compromise on either.

lilmoe - Tuesday, August 12, 2014 - link

I find your results rather interesting. So I have a question please.

Which GPU was primarily active during your tests for hardware accelerated browsers (like IE) vs Chrome? IE is significantly more hardware accelerated, and therefore GPU intensive, than any other browser (on Windows). So it would be interesting to see if the dGPU was taxed during the test.

lilmoe - Tuesday, August 12, 2014 - link

Sorry, forgot to note one more thing. When hardware accelerated rendering is disabled in IE, I noticed a significant reduction in GPU load % VS enabled. I have the same CPU as the test in this benchmark (Core i7 4702MQ, but with an AMD dGPU), and strangely, even CPU power is reduced when GPU rendering is disabled. This wasn't the case in my previous laptop's case (Core 2 Duo), but it's interesting to note. The better your CPU, the less more inefficient GPU rendering becomes.

lilmoe - Tuesday, August 12, 2014 - link

the more inefficient**

(please add an edit button for our posts...)

Stephen Barrett - Tuesday, August 12, 2014 - link

All browsers used the integrated (Intel) GPU

BillBear - Tuesday, August 12, 2014 - link

Since Apple made power efficiency a headline feature of the current version of Safari on OSX Mavericks, I would like to see how that tests out in the real world.

Run the same test on a Retina Macbook Pro.