Manual Camera Controls and RAW in Android L

by Joshua Ho on July 20, 2014 8:31 AM EST. Posted in Smartphones, Mobile, Tablets, Android L

For those who have followed the state of camera software in AOSP and Google Camera in general, it’s been quite clear that this portion of the experience has been a major stumbling block for Android. Third-party camera applications almost always offer fewer options and a worse camera experience than first-party ones. Manual controls effectively didn’t exist because the underlying camera API simply didn’t support them. Until recently, the official Android camera API supported only three distinct modes: preview, still image capture, and video recording. Within these modes, capabilities were similarly limited. It wasn’t possible to do burst image capture in photo mode or take photos while in video mode. Even something as simple as tap to focus wasn’t supported through Android’s camera API until ICS (4.0).

In response to these issues, Android OEMs and silicon vendors filled the gap in capabilities with custom, undocumented camera APIs. While this opened up the ability to deliver much better camera experiences, these APIs were only usable in the OEM’s own camera applications. If the OEM’s camera application offered no manual controls, users had no way to get a camera application that did.

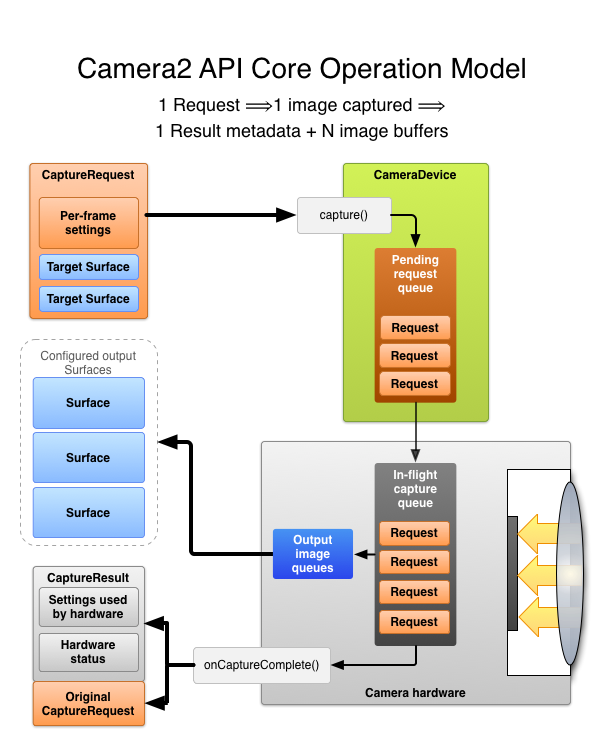

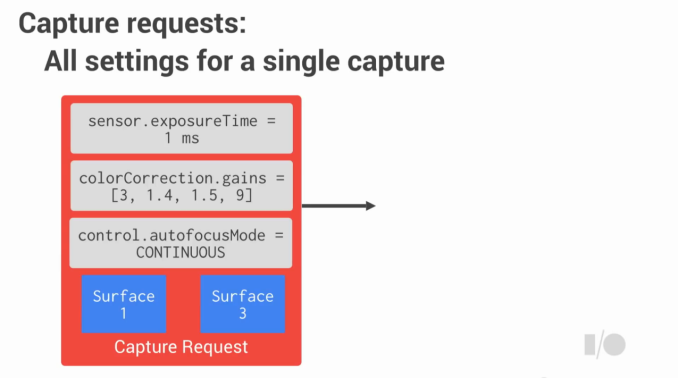

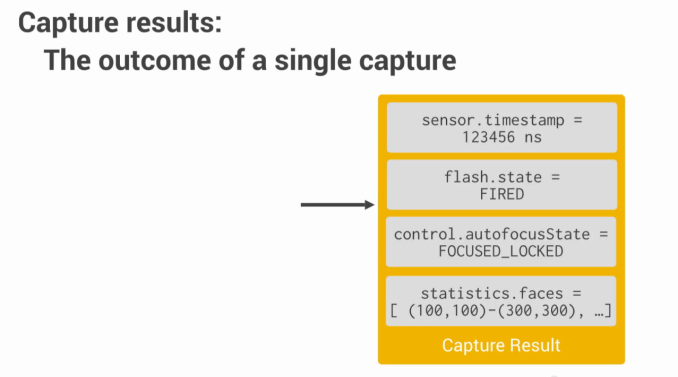

With Android L, this will change. The key to understanding the new API is that there are no longer distinct modes to work with. Photos, videos, and previews are all processed in exactly the same way. This opens up a great deal of possibility, but also means more work on the part of the developer to do things correctly. Instead of sending capture requests in a given mode with global settings, an application now sends individual image capture requests to a request queue, and each request is processed with its own specific settings.

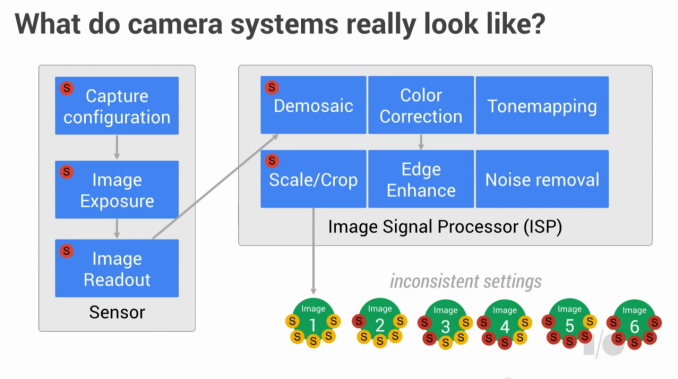

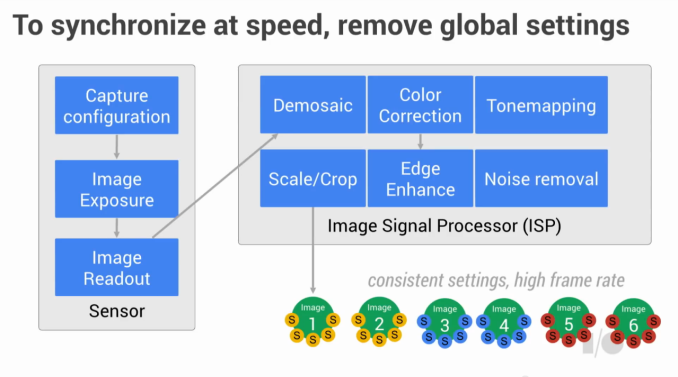
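The request-queue model described above can be illustrated with a small toy model. This is only a sketch in Python, not the Android API: the names `CaptureRequest`, `ToyCamera`, `submit`, and `process_all`, as well as the settings fields, are hypothetical and chosen for illustration. The point it shows is that each queued request carries its own settings, so consecutive frames can differ without any global mode switch.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptureRequest:
    """Toy stand-in for one capture request (fields are illustrative)."""
    iso: int
    exposure_ms: float
    output_format: str  # for example "JPEG", "YUV", or "RAW"


class ToyCamera:
    """Hypothetical camera that processes queued requests in FIFO order."""

    def __init__(self):
        self._queue = deque()

    def submit(self, request):
        # Settings travel with the request; nothing global is modified.
        self._queue.append(request)

    def process_all(self):
        results = []
        frame_number = 0
        while self._queue:
            request = self._queue.popleft()
            # Each result records exactly which settings produced the frame.
            results.append({"frame": frame_number, "settings": request})
            frame_number += 1
        return results


camera = ToyCamera()
camera.submit(CaptureRequest(iso=100, exposure_ms=10.0, output_format="JPEG"))
camera.submit(CaptureRequest(iso=800, exposure_ms=33.0, output_format="RAW"))
results = camera.process_all()
```

In this sketch the two frames come back in submission order, each paired with the settings it was requested with, which loosely mirrors the per-frame metadata that the article describes.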

This sounds simple enough, but the implications are enormous. First, image capture is much faster. Under the old API, settings were applied globally, so whenever the settings changed the entire imaging pipeline had to clear out before another image could be taken. Otherwise, any image already in the pipeline would have its settings changed partway through processing, leaving it with inconsistent and incorrect settings. The wait after each settings change slowed capture considerably. With the new API, each capture is requested with its own specific settings (which settings are available is device dependent), so there’s no need to wait on the pipeline when settings change and no images have to be discarded. This dramatically increases the maximum capture rate regardless of the format used.
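A rough back-of-the-envelope model makes the speed difference concrete. The sketch below assumes a pipeline with a fixed number of stages in which one frame can enter per tick, and it assumes that under the global-settings model a settings change forces the pipeline to drain before the next frame enters. The function names, the tick-based timing, and the pipeline depth of 4 are all assumptions made for illustration, not measurements of any real device.

```python
def ticks_global_settings(settings_sequence, pipeline_depth):
    """Ticks needed when every settings change forces a pipeline drain."""
    ticks = 0
    previous = None
    for settings in settings_sequence:
        if previous is not None and settings != previous:
            # Wait for the last in-flight frame to leave the pipeline.
            ticks += pipeline_depth - 1
        ticks += 1  # this frame enters the pipeline
        previous = settings
    # The final frame still has to travel through the remaining stages.
    return ticks + pipeline_depth - 1


def ticks_per_request_settings(settings_sequence, pipeline_depth):
    """Ticks needed when each frame carries its own settings (no drains)."""
    return len(settings_sequence) + pipeline_depth - 1


# Five frames with two settings changes, in a hypothetical 4-stage pipeline.
sequence = ["A", "A", "B", "B", "C"]
old_model = ticks_global_settings(sequence, pipeline_depth=4)       # 14 ticks
new_model = ticks_per_request_settings(sequence, pipeline_depth=4)  # 8 ticks
```

Under these assumptions the cost of the old model grows with the number of settings changes, while the per-request model pays the pipeline latency only once, no matter how often the settings differ between frames.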

The second implication is that the end user will have much more control over the settings that they can use. These have been discussed before in the context of iOS 8’s manual camera controls, but in effect it’s now possible to manually control shutter speed, ISO, focus, flash, and white balance, along with exposure level bias and exposure metering algorithms, and to select the capture format. This means that images can be output as JPEG, YUV, RAW/DNG, or any other format that is supported.

While not an implication in itself, the elimination of the distinction between photo and video is crucial. Because this distinction is removed, it’s now possible to do burst shots, full resolution photos while capturing lower resolution video, and HDR video. In addition, because the pipeline reports all of the information on the camera state for each image, Lytro-style image refocusing is doable, as are depth maps for post-processing effects. Google specifically cited HDR+ in the Nexus 5 as an example of what’s possible with the new Android camera APIs.
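As one illustration of why per-request settings matter for burst and HDR-style capture, the sketch below builds a bracketed burst in which each frame gets its own exposure time. It is a simplified assumption about how such a burst could be planned, not a description of how HDR+ works: the function name `bracketed_exposures`, the base exposure, and the stop offsets are all hypothetical.

```python
def bracketed_exposures(base_exposure_ms, stops):
    """Return one exposure time per frame, offset from the base in stops.

    One stop doubles (or halves) the exposure time, so an offset of
    +2 stops means 4x the base exposure and -2 stops means 1/4 of it.
    """
    return [base_exposure_ms * (2.0 ** stop) for stop in stops]


# A three-frame burst around a hypothetical 10 ms base exposure.
burst = bracketed_exposures(10.0, stops=[-2, 0, 2])
# burst is [2.5, 10.0, 40.0]
```

With a global-settings API, each of these exposure changes would stall the pipeline; with per-request settings, the three frames can simply be queued back to back.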

This new camera API will be officially released in Android L, and it’s already usable on the Android L preview for the Nexus 5. While there are currently no third-party applications that take advantage of this API, there is a great deal of potential to make camera applications that greatly improve upon OEM camera applications. However, the most critical point to take away is that the new camera API will open up the possibility for applications that no one has thought of yet. While there are still issues with the Android camera ecosystem, with the release of Android L, software won’t be one of them.

32 Comments


AnnihilatorX - Sunday, July 20, 2014 - link

The quality of the image has little to do with the JPEG format itself; the implementation of image processing APIs is the main factor. One can directly convert RAW to JPEG with no visible difference in the result. RAW allows a better image at the end because it allows the often weak image processing software in the camera to be bypassed; the image can then be developed using software like Lightroom and finally burned into a good JPEG.

AnnihilatorX - Sunday, July 20, 2014 - link

Forgot to mention RAW contains extra information from the sensors and thus enables more accurate processing in the developing stage.

Filiprino - Sunday, July 20, 2014 - link

Usually JPEG used in phones uses a lot of compression, blurring the image a lot. The denoise filter is also too high. Of course you can use little compression when converting from RAW to JPEG and use a less aggressive denoise filter.

soccerballtux - Tuesday, July 22, 2014 - link

I'm not a pro, I just want the camera app to do the magic for me so that my photos actually look like iPhone for once.

Daniel Egger - Sunday, July 20, 2014 - link

Well, that's because you oversharpened a noisy image, thus producing a somewhat sharp but also very busy picture. The processing engine probably employed some blurring as a primitive form of denoising and didn't sharpen that much (maybe to keep the size down).

I don't think RAW support will really matter because in the end the sensors used in most smart phones are still utter crap and I don't expect that to change much, unless using a compact camera running on Android. Trying to postprocess a RAW capture from a crappy sensor is just a waste of precious time; people who are halfway serious about producing usable images will still have to buy a dedicated camera with a bigger sensor.

MyRandomUsername - Sunday, July 20, 2014 - link

The noise on the RAW is low if you consider it was a 750px, 100% crop from a 4k*3k picture. A RAW file out of a DSLR has the same high-frequency luma noise before you put it through a noise-reduction filter (which I didn't apply on the crop).

It's okay if you don't like the detail though, since RAW is non-destructive you can just apply whatever amount of filtering you'd want. Undoubtedly there are going to be Android apps like a camera app that internally shoots RAW but converts them on-the-go to JPEG with your own custom processing profiles.

Unlike my phone, I don't have my DSLR or compact with me everywhere so I welcome every improvement to the image quality on phones, skipping in-phone-processing happens to be a significant one.

ThoroSOE - Sunday, July 20, 2014 - link

The only thing I can say to having RAWs on a mobile phone is that I love, love, LOVE this option on my Lumia 1020. Even though the internal JPEG processing became quite decent with the Lumia Black update, it is still way behind the quality I can squeeze out of those RAWs myself. Yes, even the beast that the 1020 is in the mobile phone camera area severely lags behind any DSLR. But as MyRandomUsername just said, the Lumia is the device I have on me almost all the time. This and not being at the mercy of the internal image processing (this and having quite nice manual options while shooting nonetheless) makes a huge difference.

Daniel Egger - Sunday, July 20, 2014 - link

> The noise on the RAW is low if you consider it was a 750px, 100% crop from an 4k*3k picture. A RAW file out of a DSLR has the same high-frequency luma noise before you put it through a noise-reduction filter (which I didn't apply on the crop).

In broad daylight at lowest ISO? Not even 2 year old "enthusiast" compact cameras show any discernible luma noise before NR: http://www.dpreview.com/reviews/panasonic-lumix-dm...

Current DSLRs are even way better than that: http://www.dpreview.com/reviews/nikon-d3300/10

> Unlike my phone, I don't have my DSLR or compact with me everywhere so I welcome every improvement to the image quality on phones, skipping in-phone-processing happens to be a significant one.

I'm a MLC user and from my own experience phone pictures come out so terrible anyway that RAW doesn't help a bit; usually those are snap, show, throw away. In most cases I don't even bother pulling out my Lumia because I'm very easily annoyed by shitty photos and I don't want myself to ruin my day.

thatguyyoulove - Sunday, July 20, 2014 - link

The point isn't to replace a DSLR. The point is to have significantly better detailed files to work with, which have much greater capacity to be adjusted in post, all of the times that you DON'T have a DSLR on you.

You couldn't be more wrong about the sensors not being good enough. The sensors are more than capable for most use (obviously not large prints, but neither are most DSLRs). The gains in sharpness and image detail we've been seeing on RAW phones have been pretty good, and noise levels are pretty low sub ISO 1600. They compare pretty well to an entry level DSLR.

This is coming from a photographer who spends around $4-6k/year buying more photo equipment for his business. There have been plenty of times that I didn't have my MKIII with me that I had to use a cell phone for a quick picture and wished I could touch it up without exposing all sorts of artifacts. This looks like it will solve that problem.

soccerballtux - Tuesday, July 22, 2014 - link

isn't it possible to profile the CCD's response in different lighting situations using calibration cards and create an automatic multi-dimensional filter that fully optimizes automatically after shooting? I just want iPhone quality colors out of my photos.