Apple's Cyclone Microarchitecture Detailed

by Anand Lal Shimpi on March 31, 2014 2:10 AM EST

The most challenging part of last year's iPhone 5s review was piecing together details about Apple's A7 without any internal Apple assistance. I had less than a week to turn the review around and limited access to tools (much less time to develop them on my own) to figure out what Apple had done to double CPU performance without scaling frequency. The end result was an (incorrect) assumption that Apple had simply evolved its first ARMv7 architecture (codename: Swift). Based on the limited information I had at the time I assumed Apple simply addressed some low hanging fruit (e.g. memory access latency) in building Cyclone, its first 64-bit ARMv8 core. By the time the iPad Air review rolled around, I had more knowledge of what was underneath the hood:

As far as I can tell, peak issue width of Cyclone is 6 instructions. That’s at least 2x the width of Swift and Krait, and at best more than 3x the width depending on instruction mix. Limitations on co-issuing FP and integer math have also been lifted as you can run up to four integer adds and two FP adds in parallel. You can also perform up to two loads or stores per clock.

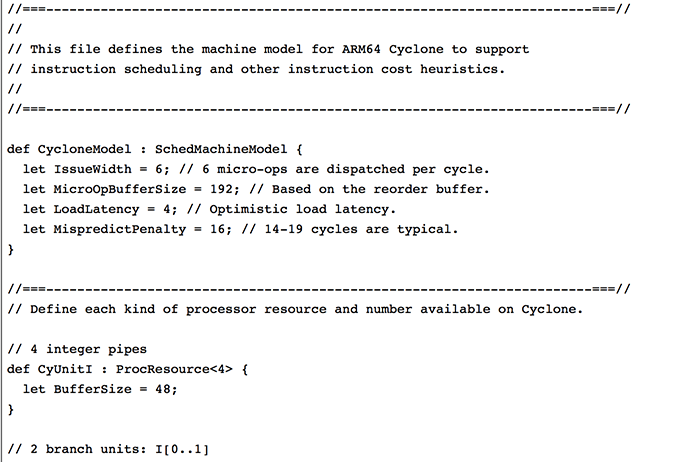

With Swift, I had the luxury of Apple committing LLVM changes that not only gave me the code name but also confirmed the size of the machine (3-wide OoO core, 2 ALUs, 1 load/store unit). With Cyclone however, Apple held off on any public commits. Figuring out the codename and its architecture required a lot of digging.

Last week, the same reader who pointed me at the Swift details let me know that Apple revealed Cyclone microarchitectural details in LLVM commits made a few days ago (thanks again R!). Although I empirically verified many of Cyclone's features in advance of the iPad Air review last year, today we have some more concrete information on what Apple's first 64-bit ARMv8 architecture looks like.

Note that everything below is based on Apple's LLVM commits (and confirmed by my own testing where possible).

| Apple Custom CPU Core Comparison | Apple A6 | Apple A7 |
| --- | --- | --- |
| CPU Codename | Swift | Cyclone |
| ARM ISA | ARMv7-A (32-bit) | ARMv8-A (32/64-bit) |
| Issue Width | 3 micro-ops | 6 micro-ops |
| Reorder Buffer Size | 45 micro-ops | 192 micro-ops |
| Branch Mispredict Penalty | 14 cycles | 16 cycles (14 - 19) |
| Integer ALUs | 2 | 4 |
| Load/Store Units | 1 | 2 |
| Load Latency | 3 cycles | 4 cycles |
| Branch Units | 1 | 2 |
| Indirect Branch Units | 0 | 1 |
| FP/NEON ALUs | ? | 3 |
| L1 Cache | 32KB I$ + 32KB D$ | 64KB I$ + 64KB D$ |
| L2 Cache | 1MB | 1MB |
| L3 Cache | - | 4MB |

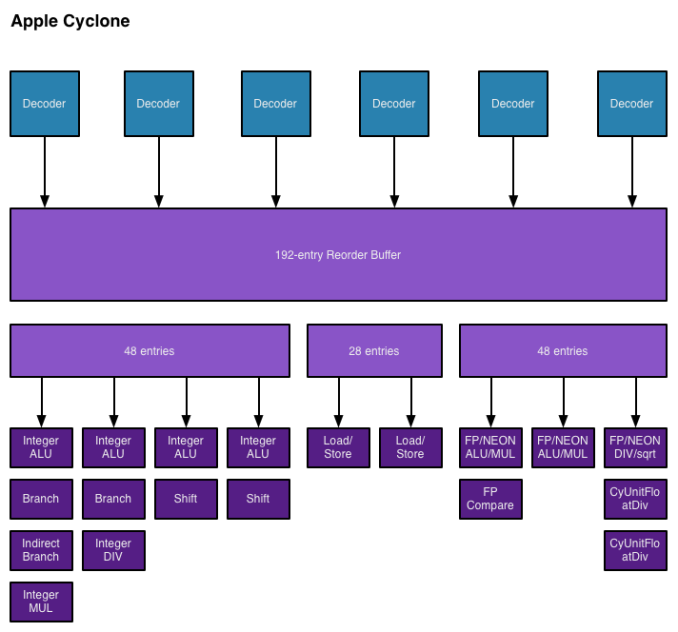

As I mentioned in the iPad Air review, Cyclone is a wide machine. It can decode, issue, execute and retire up to 6 instructions/micro-ops per clock. I verified this during my iPad Air review by executing four integer adds and two FP adds in parallel. The same test on Swift actually yields fewer than 3 concurrent operations, likely because of an inability to issue to all integer and FP pipes in parallel. Similar limits exist with Krait.
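To make the issue-width comparison concrete, here is a rough back-of-the-envelope sketch in Python. It is not the test used for the review; `Core` and `min_cycles` are hypothetical names, the numbers come straight from the table above, and Swift's FP pipe count is left unknown because the table lists it as "?". The model only computes a lower bound on cycles for a batch of independent adds, ignoring latency, dependencies and any co-issue restrictions.

```python
from dataclasses import dataclass
from math import ceil
from typing import Optional


@dataclass(frozen=True)
class Core:
    name: str
    issue_width: int         # micro-ops issued per clock (from the table)
    int_alus: int            # integer ALUs (from the table)
    fp_pipes: Optional[int]  # FP/NEON pipes; None where the table shows "?"


def min_cycles(core: Core, int_adds: int, fp_adds: int) -> int:
    """Lower bound on cycles needed to issue a batch of independent adds."""
    bounds = [
        ceil((int_adds + fp_adds) / core.issue_width),  # total issue slots
        ceil(int_adds / core.int_alus),                 # integer ALU limit
    ]
    if core.fp_pipes is not None:
        bounds.append(ceil(fp_adds / core.fp_pipes))    # FP pipe limit
    return max(bounds)


SWIFT = Core("Swift", issue_width=3, int_alus=2, fp_pipes=None)
CYCLONE = Core("Cyclone", issue_width=6, int_alus=4, fp_pipes=3)

for core in (SWIFT, CYCLONE):
    cycles = min_cycles(core, int_adds=4, fp_adds=2)
    print(f"{core.name}: at least {cycles} cycle(s) for 4 int adds + 2 FP adds")
```

Under these assumptions Cyclone can fit the whole mix into a single cycle, while Swift needs at least two. Because this is only a bound, it is consistent with the measured result that Swift sustains fewer than 3 concurrent operations on the same test.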

I also noted an increase in overall machine size in my initial tinkering with Cyclone. Apple's LLVM commits indicate a massive 192 entry reorder buffer (coincidentally the same size as Haswell's ROB). Mispredict penalty goes up slightly compared to Swift, but Apple does present a range of values (14 - 19 cycles). This also happens to be the same range as Sandy Bridge and later Intel Core architectures (including Haswell). Given how much larger Cyclone is, a doubling of L1 cache sizes makes a lot of sense.
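A quick bit of arithmetic shows why a bigger window matters on a wider machine. The Python sketch below uses only the issue width, reorder buffer size and mispredict penalty from the table; the function names are hypothetical, and the results are upper bounds that assume the core would otherwise have issued at its peak rate every cycle, which real code rarely does.

```python
def wasted_issue_slots(issue_width: int, penalty_cycles: int) -> int:
    """Upper bound on issue slots lost to a single branch mispredict."""
    return issue_width * penalty_cycles


def rob_depth_in_cycles(rob_entries: int, issue_width: int) -> float:
    """Cycles of peak-rate issue that the reorder buffer can hold in flight."""
    return rob_entries / issue_width


# (issue width, ROB entries, mispredict penalty in cycles) from the table
cores = {
    "Swift": (3, 45, 14),
    "Cyclone (typical)": (6, 192, 16),
    "Cyclone (worst case)": (6, 192, 19),
}

for name, (width, rob, penalty) in cores.items():
    slots = wasted_issue_slots(width, penalty)
    depth = rob_depth_in_cycles(rob, width)
    print(f"{name}: up to {slots} slots lost per mispredict, "
          f"ROB covers {depth:.0f} cycles at peak issue")
```

By this estimate a mispredict can cost Cyclone up to 96 issue slots (114 in the worst case) against 42 for Swift, while its reorder buffer covers 32 cycles of peak issue against Swift's 15.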

On the execution side Cyclone doubles the number of integer ALUs, load/store units and branch units. Cyclone also adds a unit for indirect branches and at least one more FP pipe. Cyclone can sustain three FP operations in parallel (including 3 FP/NEON adds). The third FP/NEON pipe is used for div and sqrt operations, so the machine can only execute two FP/NEON muls in parallel.
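The FP/NEON limits can be sketched the same way. The mapping below is my reading of the paragraph above rather than anything taken from Apple's commits: adds can go to any of the three pipes, muls to two of them, and div/sqrt to one. `PIPES_FOR_OP` and `fp_min_cycles` are hypothetical names, and the model treats every operation as occupying a pipe for a single cycle, which is optimistic for div and sqrt in particular.

```python
from math import ceil
from typing import Mapping

# Assumed number of FP/NEON pipes that can accept each operation class.
PIPES_FOR_OP = {"add": 3, "mul": 2, "div_sqrt": 1}
TOTAL_FP_PIPES = 3


def fp_min_cycles(mix: Mapping[str, int]) -> int:
    """Lower bound on cycles to issue a mix of independent FP/NEON ops."""
    # Each operation class is limited by the pipes that can execute it...
    per_op = [ceil(count / PIPES_FOR_OP[op]) for op, count in mix.items()]
    # ...and the whole mix is limited by the total number of FP pipes.
    overall = ceil(sum(mix.values()) / TOTAL_FP_PIPES)
    return max(per_op + [overall])


print(fp_min_cycles({"add": 3}))                 # three adds
print(fp_min_cycles({"mul": 3}))                 # three muls
print(fp_min_cycles({"mul": 2, "div_sqrt": 1}))  # two muls plus one div
```

With this mapping three adds or two muls plus a div fit in one cycle, but three muls need at least two.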

I also found references to buffer sizes for each unit, which I'm assuming are the number of micro-ops that feed each unit. I don't believe Cyclone has a unified scheduler ahead of all of its execution units and instead has statically partitioned buffers in front of each port. I've put all of this information into the crude diagram below:

Unfortunately I don't have enough data on Swift to really produce a decent comparison image. With six decoders and nine ports to execution units, Cyclone is big. As I mentioned before, it's bigger than anything else that goes in a phone. Apple didn't build a Krait/Silvermont competitor; it built something much closer to Intel's big cores. At the launch of the iPhone 5s, Apple referred to the A7 as being "desktop class" - it turns out that wasn't an exaggeration.

Cyclone is a bold move by Apple, but not one that is without its challenges. I still find that there are almost no applications on iOS that really take advantage of the CPU power underneath the hood. More than anything Apple needs first party software that really demonstrates what's possible. The challenge is that at full tilt a pair of Cyclone cores can consume quite a bit of power. So for now, Cyclone's performance is really used to exploit race to sleep and get the device into a low power state as quickly as possible. The other problem I see is that although Cyclone is incredibly forward looking, it launched in devices with only 1GB of RAM. It's very likely that you'll run into memory limits before you hit CPU performance limits if you plan on keeping your device for a long time.

It wasn't until I wrote this piece that Apple's codenames started to make sense. Swift was quick, but Cyclone really does stir everything up. The earlier than expected introduction of a consumer 64-bit ARMv8 SoC caught pretty much everyone off guard (e.g. Qualcomm's shift to vanilla ARM cores for more of its product stack).

The real question is where does Apple go from here? By now we know to expect an "A8" branded Apple SoC in the iPhone 6 and iPad Air successors later this year. There's little benefit in going substantially wider than Cyclone, but there's still a ton of room to improve performance. One obvious example would be through frequency scaling. Cyclone is clocked very conservatively (1.3GHz in the 5s/iPad mini with Retina Display and 1.4GHz in the iPad Air). Assuming Apple moves to a 20nm process later this year, it should be possible to gain some performance by increasing clock speed without a power penalty. I suspect Apple has more tricks up its sleeve than that however. Swift and Cyclone were two tocks in a row by Intel's definition; a third in 3 years would be unusual but not impossible (Intel sort of committed to doing the same with Saltwell/Silvermont/Airmont in 2012 - 2014).

Looking at Cyclone makes one thing very clear: the rest of the players in the ultra mobile CPU space didn't aim high enough. I wonder what happens next round.

182 Comments

zogus - Tuesday, April 1, 2014 - link

Um, have YOU used iOS, Icehawk? Over the years I've used iPhone 3G, 4 and 5, as well as iPad 1 and 3. Out of these, the only one that experienced regular out-of-memory issues was the 256MB iPad 1. iPhone 4's 512MB of RAM may feel cramped today, but it was plenty for 2010 purposes, and my current iPhone 5 and iPad 3 do not feel RAM starved at all on iOS 7.

stevenjklein - Tuesday, April 1, 2014 - link

Actually, they HAVE doubled the amount of RAM since those old models shipped. The iPhone 4 and 4S had only ½GB, while the 5, 5S and 5C have 1GB. The original iPad shipped with ¼GB of RAM, while the current model has 1GB.

jasonelmore - Monday, March 31, 2014 - link

It's not a HUGE problem NOW, but Apple is basically doing it to ensure you buy another phone 2-3 years down the line, because 1GB of RAM won't hold up for long. Their software stack dictates performance of the hardware stack in essence. If they code iOS 8 to use more RAM, which will happen (x64, duh), then 1GB RAM devices will suffer sooner than 2GB RAM devices.

PeteH - Tuesday, April 1, 2014 - link

@jasonelmore I don't think Apple does that to ensure you buy another iPhone 2-3 years down the line, it seems too short sighted. After all, if your experience with your current iPhone is bad why would you get another one?

I think a more likely explanation is to maximize profits while also providing a quality user experience. From Apple's perspective, if there's no significant upside today to providing 2GB of RAM why would they?

stevenjklein - Tuesday, April 1, 2014 - link

Apple doesn't "cheap out" on RAM. They limit RAM because RAM is power-hungry; more RAM means shorter battery life. Increasing RAM without losing battery life means a bigger battery (which means a thicker, heavier phone).

Khato - Monday, March 31, 2014 - link

Regarding the GPU... take a look at the number of job listings Apple currently has for graphics hardware design. It's quite clear that they're intending to develop their own GPU IP, the question is what the intended product for it is - are they intending to replace Imagination in their smartphone/tablet SoC or is it for something else? Note that the only reason to get into it is to be able to better define their own power and performance targets - it's unlikely that they'll actually come up with a better architecture than any of the other players for quite some time.

name99 - Monday, March 31, 2014 - link

The way I expect the GPU will play out is basically the same as the ARM story. Apple didn't start from scratch --- they were happy to license a bunch of IP from ARM.

I expect they'd similarly license a bunch of IP from Imagination and modify that --- but not as extensive modifications as with the CPU. Like you say, better matching their targets.

This could take the following forms:

- better integration with the CPU (custom connection to a fast L3 shared with the CPU; common address space shared with CPU with all that implies for MMU)

- higher performance than Imagination ships (eg Imagination maxes out with 3 cores, Apple ships with 4)

- much more aggressive support for double precision, if Apple has grand plans for OpenCL that require DP to be as well supported as SP.

Khato - Monday, March 31, 2014 - link

I'd wondered about that approach as well, but I'm not clear on which levels Imagination licenses their graphics IP? Because I'd definitely agree that it makes sense to start out with that route and do comparatively minor customization of Imagination's design to better suit their targets at first. I'm just not certain if Imagination would actually let them or not - as said, I have no clue what their licensing model is like.

Regardless, Apple getting into the GPU design side as well is definitely an interesting development. Especially if they actually go fully custom sooner rather than later since then Imagination will pretty much be out of high-end customers no?

Scannall - Wednesday, April 2, 2014 - link

According to this press release from Feb 6, 2014 quite a bit it seems. Apple does own about 10% of Imagination, so I am guessing it's pretty easy to work a deal.

http://www.imgtec.com/corporate/newsdetail.asp?New...

techconc - Monday, March 31, 2014 - link

Khato, I agree with you in terms of the reason to get into the GPU business. However, I wouldn't bet against them in terms of coming up with a better architecture than the competition. Many said that when Apple entered the SoC / CPU business. Should be interesting to see how this plays out nonetheless.