The NVIDIA GeForce GTX 750 Ti and GTX 750 Review: Maxwell Makes Its Move

by Ryan Smith & Ganesh T S on February 18, 2014 9:00 AM EST

Power, Temperature, & Noise

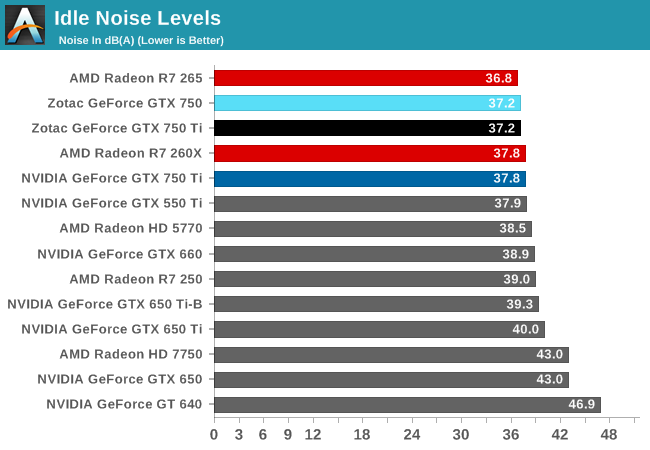

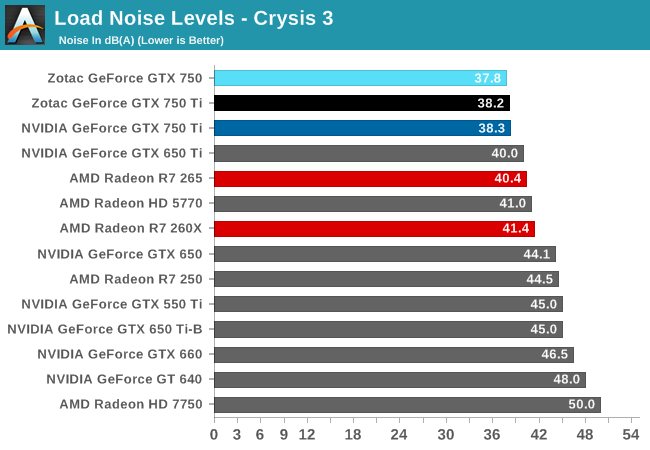

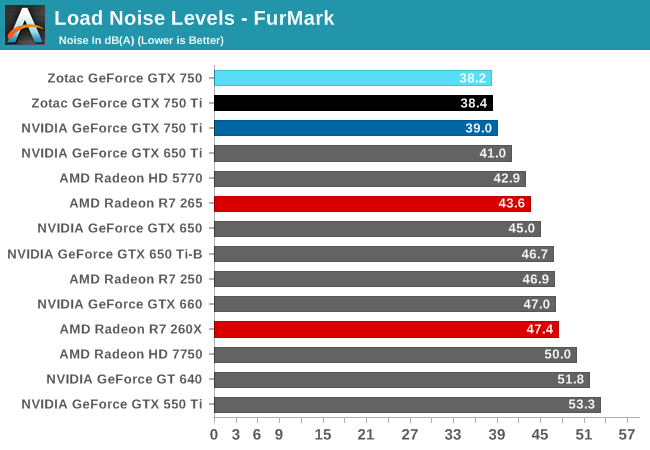

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GeForce GTX 750 Series Voltages

| Ref GTX 750 Ti Boost Voltage | Zotac GTX 750 Ti Boost Voltage | Zotac GTX 750 Boost Voltage |
| --- | --- | --- |
| 1.168v | 1.137v | 1.187v |

For those of you keeping track of voltages, you’ll find that the voltages for GM107 as used on the GTX 750 series are not significantly different from the voltages used on GK107. Since we’re looking at a chip that’s built on the same 28nm process as GK107, the voltages needed to drive it to the desired frequencies have not changed.

GeForce GTX 750 Series Average Clockspeeds

| | Ref GTX 750 Ti | Zotac GTX 750 Ti | Zotac GTX 750 |
| --- | --- | --- | --- |
| Max Boost Clock | 1150MHz | 1175MHz | 1162MHz |
| Metro: LL | 1150MHz | 1172MHz | 1162MHz |
| CoH2 | 1148MHz | 1172MHz | 1162MHz |
| Bioshock | 1150MHz | 1175MHz | 1162MHz |
| Battlefield 4 | 1150MHz | 1175MHz | 1162MHz |
| Crysis 3 | 1149MHz | 1174MHz | 1162MHz |
| Crysis: Warhead | 1150MHz | 1175MHz | 1162MHz |
| TW: Rome 2 | 1150MHz | 1175MHz | 1162MHz |
| Hitman | 1150MHz | 1175MHz | 1162MHz |
| GRID 2 | 1150MHz | 1175MHz | 1162MHz |
| Furmark | 1006MHz | 1032MHz | 1084MHz |

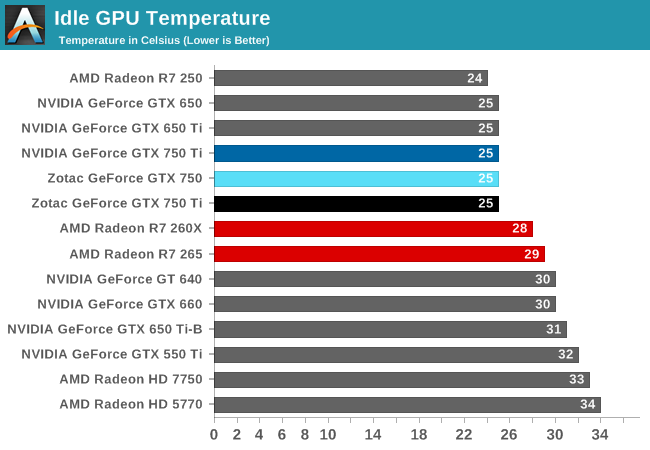

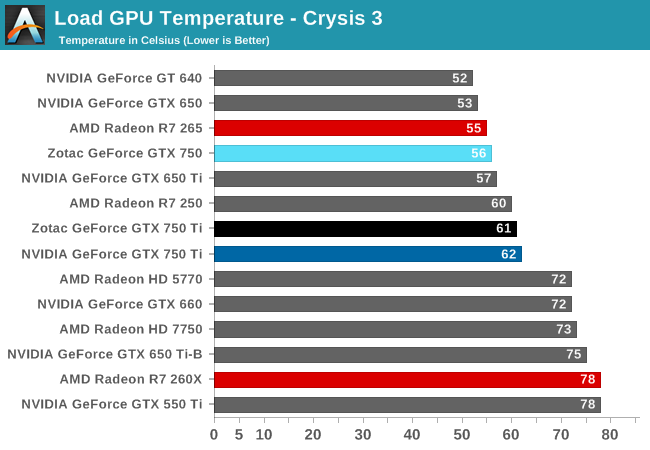

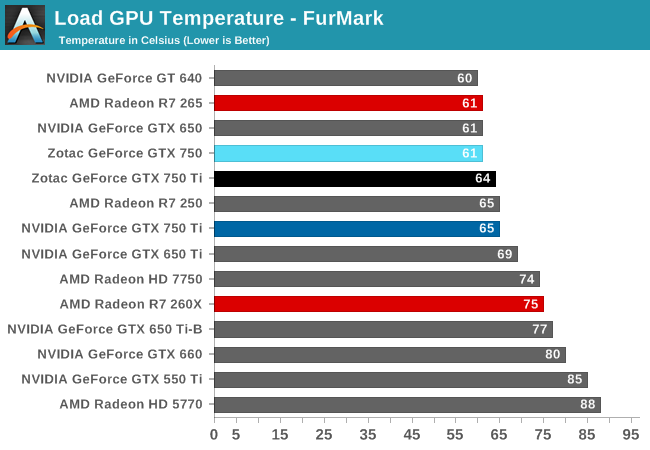

Looking at average clockspeeds, we can see that our cards are essentially free to run at their maximum boost bins, well above their base clockspeed or even their official boost clockspeed. Because these cards operate at such a low TDP, cooling is rendered a non-factor in our testbed setup, with all of these cards easily staying in the 60C or lower range, well below the 80C thermal throttle point that GPU Boost 2.0 uses.

As such, they are limited only by TDP, which as we can see does make itself felt, but is not a meaningful limitation. Both GTX 750 Ti cards become TDP limited at times while gaming, but only for a refresh period or two, pulling the averages down just slightly. The Zotac GTX 750, on the other hand, has no such problem (thanks to the power savings of losing an SMM), so it stays at 1162MHz throughout the entire run.
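To illustrate why a refresh period or two at a lower boost bin barely moves the average, here is a minimal sketch. The sample count, the number of throttled samples, and the 1137MHz lower bin are hypothetical values picked for illustration; they are not logged data from our testing.

```python
def average_clock(samples_mhz):
    """Arithmetic mean of per-sample core clocks, in MHz."""
    return sum(samples_mhz) / len(samples_mhz)


# Hypothetical log of 100 polling samples for a card whose top boost bin
# is 1150MHz (the reference GTX 750 Ti's max boost clock in the table).
MAX_BOOST_MHZ = 1150
LOWER_BIN_MHZ = 1137  # assumed next bin down, for illustration only

# 98 samples at the top bin, 2 samples briefly TDP limited.
samples = [MAX_BOOST_MHZ] * 98 + [LOWER_BIN_MHZ] * 2

avg = average_clock(samples)
print(f"Average clock: {avg:.2f}MHz")
```

With only 2 of 100 samples at the lower bin, the average lands within a fraction of a megahertz of the top bin, which is the same kind of small dip the 1148MHz and 1149MHz entries in the table suggest.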

177 Comments


RealiBrad - Tuesday, February 18, 2014

If you were to run the AMD card 10hrs a day with the avg cost of electricity in the US, you would pay around $22 more a year in electricity. The AMD card gives a 19% boost in power for a 24.5% boost in power usage. That means that the Nvidia card is around 5% more efficient. It's nice that they got the power envelope so low, but if you look at the numbers, not huge.

The biggest factor is the supply coming out of AMD. Unless they start making more cards, the 750Ti will be the better buy.

Homeles - Tuesday, February 18, 2014

Your comment is very out of touch with reality, in regards to power consumption/efficiency: http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

It is huge.

mabellon - Tuesday, February 18, 2014

Thank you for that link. That's an insane improvement. Can't wait to see 20nm high end Maxwell SKUs.

happycamperjack - Wednesday, February 19, 2014

That's for gaming only, its compute performance/watt is still horrible compared to AMD though. I wonder when can Nvidia catch up.

bexxx - Wednesday, February 19, 2014

http://media.bestofmicro.com/9/Q/422846/original/L...

260kh/s at 60 watts is actually very high, that is basically matching 290x in kh/watt ~1000/280watts, and beating out r7 265 or anything... if you only look at kh/watt.

ninjaquick - Thursday, February 20, 2014

To be honest, all nvidia did was increase the granularity of power gating and core states, so in the event of pure burn, the TDP is hit, and the perf will (theoretically) droop.

The reason the real world benefits from this is simply the way rendering works, under DX11. Commands are fast and simple, so increasing the number of parallel queues allows for faster completion and lower power (Average). So the TDP is right, even if the working wattage per frame is just as high as any other GPU. AMD doesn't have that granularity implemented in GCN yet, though they do have the tech for it.

I think this is fairly silly, Nvidia is just riding the coat-tails of massive GPU stalling on frame-present.

elerick - Tuesday, February 18, 2014

Since the performance charts have 650TI Boost I looked up the TDP of 140W. When compared to the Maxwell 750TI with 60W TDP I am in awe of the performance per watt. I sincerely hope that the 760/770/780 with 20nm to give the performance a sharper edge but even if they are not it will still give people with older graphics cards more of a reason to finally upgrade since driver performance tuning will start favoring Maxwell over the next few years.

Lonyo - Tuesday, February 18, 2014

The 650TI/TI Boost aren't cards designed to be efficient. They are cut down cards with sections of the GPU disabled. While 2x perf per watt might be somewhat impressive, it's not that impressive given the comparison is made to inefficient cards.

Comparing it to something like a GTX650 regular, which is a fully enabled GPU, might be more apt of a comparison, and probably wouldn't give the same perf/watt increases.

elerick - Tuesday, February 18, 2014

Thanks, I haven't been following lower end model cards for either camp. I usually buy $200-$300 class cards.

bexxx - Thursday, February 20, 2014

Still just over 1.8x higher perf/watt: http://www.techpowerup.com/reviews/NVIDIA/GeForce_...