NVIDIA G-Sync Review

by Anand Lal Shimpi on December 12, 2013 9:00 AM EST

It started at CES, nearly 12 months ago. NVIDIA announced GeForce Experience, a software solution to the problem of choosing optimal graphics settings for your PC in the games you play. With console games, the developer has already selected what it believes is the right balance of visual quality and frame rate. On the PC, these decisions are left up to the end user. We’ve seen some games try to solve the problem by limiting the number of available graphical options, but other than that it’s a problem that hasn’t seen much attention. After all, PC gamers are used to fiddling around with settings - it’s just an expected part of the experience. In an attempt to broaden the PC gaming user base (likely somewhat motivated by a lack of next-gen console wins), NVIDIA came up with GeForce Experience. NVIDIA already tests a huge number of games across a broad range of NVIDIA hardware, so it has a good idea of what the best settings may be for each game/PC combination.

Also at CES 2013 NVIDIA announced Project Shield, later renamed to just Shield. The somewhat odd but surprisingly decent portable Android gaming system served another function: it could be used to play PC games on your TV, streaming directly from your PC.

Finally, NVIDIA has been quietly (and lately not-so-quietly) engaged with Valve in its SteamOS and Steam Machine efforts (admittedly, so is AMD).

From where I stand, it sure does look like NVIDIA is trying to bring aspects of console gaming to PCs. You could go one step further and say that NVIDIA appears to be highly motivated to improve gaming in more ways than pushing for higher quality graphics and higher frame rates.

All of this makes sense after all. With ATI and AMD fully integrated, and Intel finally taking graphics (somewhat) seriously, NVIDIA needs to do a lot more to remain relevant (and dominant) in the industry going forward. Simply putting out good GPUs will only take the company so far.

NVIDIA’s latest attempt is G-Sync, a hardware solution for displays that enables a semi-variable refresh rate driven by a supported NVIDIA graphics card. The premise is pretty simple to understand. Displays and GPUs update content asynchronously by nature. A display panel updates itself at a fixed interval (its refresh rate), usually 60 times per second (60Hz) for the majority of panels. Gaming specific displays might support even higher refresh rates of 120Hz or 144Hz. GPUs on the other hand render frames as quickly as possible, presenting them to the display whenever they’re done.

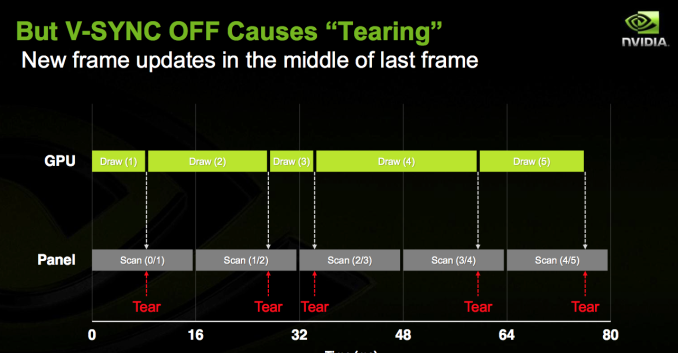

When you have a frame that arrives in the middle of a refresh, the display ends up drawing parts of multiple frames on the screen at the same time. Drawing parts of multiple frames at the same time can result in visual artifacts, or tears, separating the individual frames. You’ll notice tearing as horizontal lines/artifacts that seem to scroll across the screen. It can be incredibly distracting.

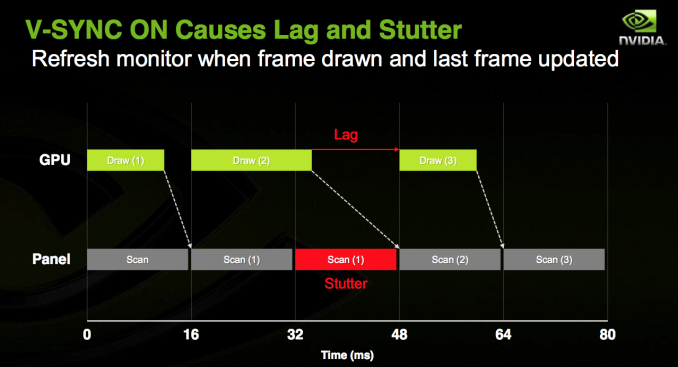

You can avoid tearing by keeping the GPU and display in sync. Enabling vsync does just this. The GPU will only ship frames off to the display in sync with the panel’s refresh rate. Tearing goes away, but you get a new artifact: stuttering.

Because the content of each frame of a game can vary wildly, the GPU’s frame rate can be similarly variable. Once again we find ourselves in a situation where the GPU wants to present a frame out of sync with the display. With vsync enabled, the GPU will wait to deliver the frame until the next refresh period, resulting in a repeated frame in the interim. This repeated frame manifests itself as stuttering. As long as you have a frame rate that isn’t perfectly aligned with your refresh rate, you’ve got the potential for visible stuttering.
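To make the stutter concrete, here is a minimal Python sketch of double-buffered vsync. It assumes the GPU stalls until its finished frame is shown and only then starts on the next one. The function names and the render times are made up for illustration and don't come from NVIDIA or any driver.

```python
import math

def simulate_vsync(render_times_ms, refresh_hz=60.0):
    """Return the refresh index on which each frame is first shown.

    Assumed model: the GPU starts a frame, finishes it after its render
    time, then waits for the next refresh boundary before it can start
    the following frame.
    """
    interval = 1000.0 / refresh_hz
    ticks = []
    start = 0.0
    for render in render_times_ms:
        ready = start + render
        # First refresh boundary at or after the frame is ready
        # (small epsilon guards against floating point noise).
        tick = math.ceil(ready / interval - 1e-9)
        ticks.append(tick)
        start = tick * interval
    return ticks

def repeated_refreshes(ticks):
    """Count refreshes that had to show the previous frame again."""
    return sum(max(0, cur - prev - 1) for prev, cur in zip(ticks, ticks[1:]))
```

With render times of 10ms, 20ms and 10ms on a 60Hz panel, the frames land on refreshes 1, 3 and 4. Refresh 2 has nothing new to show, so the first frame is repeated, and that repeat is the stutter.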

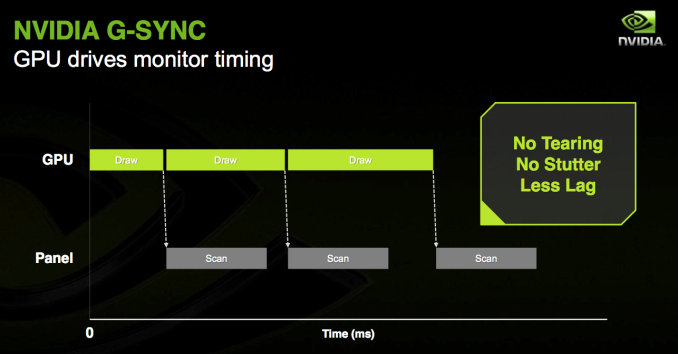

G-Sync purports to offer the best of both worlds. Simply put, G-Sync attempts to make the display wait to refresh itself until the GPU is ready with a new frame. No tearing, no stuttering - just buttery smoothness. And of course, only available on NVIDIA GPUs with a G-Sync display. As always, the devil is in the details.

How it Works

G-Sync is a hardware solution, and in this case the hardware resides inside a G-Sync enabled display. NVIDIA swaps out the display’s scaler for a G-Sync board, leaving the panel and timing controller (TCON) untouched. Despite its physical location in the display chain, the current G-Sync board doesn’t actually feature a hardware scaler. For its intended purpose, the lack of any scaling hardware isn’t a big deal since you’ll have a more than capable GPU driving the panel and handling all scaling duties.

G-Sync works by manipulating the display’s VBLANK (vertical blanking interval). VBLANK is the period of time between the display rasterizing the last line of the current frame and drawing the first line of the next frame. It’s called an interval because no screen updates happen during this period: the display remains static, showing the current frame before drawing the next one. VBLANK is a remnant of the CRT days where it was necessary to give the CRTs time to begin scanning at the top of the display once again. The interval remains today in LCD flat panels, although it’s technically unnecessary. The G-Sync module inside the display modifies VBLANK to cause the display to hold the present frame until the GPU is ready to deliver a new one.

With a G-Sync enabled display, when the monitor is done drawing the current frame it waits until the GPU has another one ready for display before starting the next draw process. The delay is controlled purely by playing with the VBLANK interval.

You can only do so much with VBLANK manipulation though. In present implementations the longest NVIDIA can hold a single frame is 33.3ms (30Hz). If the next frame isn’t ready by then, the G-Sync module will tell the display to redraw the last frame. The upper bound is limited by the panel/TCON at this point, with the only G-Sync monitor available today going as high as 6.94ms (144Hz). NVIDIA made it a point to mention that the 144Hz limitation isn’t a G-Sync limit, but a panel limit.
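The timing window described above can be sketched in a few lines of Python. This is only an illustration of the 6.94ms to 33.3ms bounds quoted here, not NVIDIA's implementation. The function name is hypothetical, and what the module does when a frame arrives during a forced redraw isn't covered by the description above, so the sketch leaves that case out.

```python
MIN_INTERVAL_MS = 1000.0 / 144.0  # fastest the panel can refresh (144Hz)
MAX_HOLD_MS = 1000.0 / 30.0       # longest a frame can be held (30Hz)

def next_refresh(last_refresh_ms, frame_ready_ms):
    """Return (refresh_time_ms, shows_new_frame) for the next refresh.

    Hypothetical helper: times are in milliseconds on a shared clock.
    """
    earliest = last_refresh_ms + MIN_INTERVAL_MS
    latest = last_refresh_ms + MAX_HOLD_MS
    if frame_ready_ms <= earliest:
        # Frame arrived faster than the panel can refresh: panel-limited.
        return earliest, True
    if frame_ready_ms <= latest:
        # Inside the window: the display refreshes when the frame arrives.
        return frame_ready_ms, True
    # Frame is too late: redraw the previous frame at the 30Hz floor.
    return latest, False
```

For example, a frame that is ready 20ms after the last refresh is shown at 20ms, while one that takes 50ms forces a redraw of the old frame at 33.3ms.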

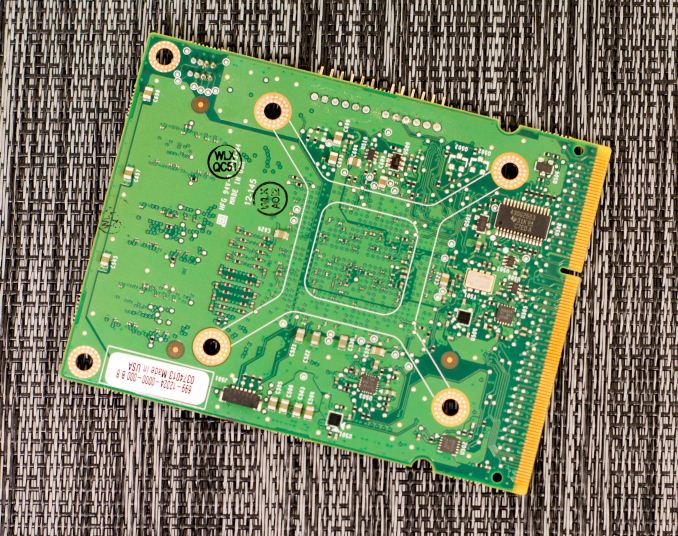

The G-Sync board itself features an FPGA and 768MB of DDR3 memory. NVIDIA claims the on-board DRAM isn’t much greater than what you’d typically find on a scaler inside a display. The added DRAM is partially necessary to allow for more bandwidth to memory (additional physical DRAM devices). NVIDIA uses the memory for a number of things, one of which is to store the previous frame so that it can be compared to the incoming frame for overdrive calculations.

The first G-Sync module only supports output over DisplayPort 1.2, though there is nothing technically stopping NVIDIA from adding support for HDMI/DVI in future versions. Similarly, the current G-Sync board doesn’t support audio but NVIDIA claims it could be added in future versions (NVIDIA’s thinking here is that most gamers will want something other than speakers integrated into their displays). The final limitation of the first G-Sync implementation is that it can only connect to displays over LVDS. NVIDIA plans on enabling V-by-One support in the next version of the G-Sync module, although there’s nothing stopping it from enabling eDP support as well.

Enabling G-Sync does have a small but measurable performance impact on frame rate. After the GPU renders a frame with G-Sync enabled, it will start polling the display to see if it’s in a VBLANK period or not, to ensure that the GPU won’t start sending a new frame in the middle of a scan out. The polling takes about 1ms, which translates to a 3 - 5% performance impact compared to vsync on. NVIDIA is working on eliminating the polling entirely, but for now that’s how it’s done.
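As a rough sanity check on those numbers, the Python sketch below assumes the 1ms of polling is simply added to every frame's time. That serial model is an assumption, and the function name is made up for illustration.

```python
def fps_loss_percent(frame_time_ms, poll_ms=1.0):
    """Percentage drop in frame rate if poll_ms is added to every frame."""
    base_fps = 1000.0 / frame_time_ms
    polled_fps = 1000.0 / (frame_time_ms + poll_ms)
    return 100.0 * (base_fps - polled_fps) / base_fps
```

Under that assumption a 19ms frame (about 53 fps) loses 5%, and a frame of roughly 32ms (about 31 fps) loses 3%. The quoted 3 - 5% range therefore lines up with frame rates of roughly 30 to 50 fps.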

NVIDIA retrofitted an ASUS VG248QE display with its first generation G-Sync board to demo the technology. The VG248QE is a 144Hz 24” 1080p TN display, a good fit for gamers but not exactly the best looking display in the world. Given its current price point ($250 - $280) and focus on a very high refresh rate, there are bound to be tradeoffs (the lack of an IPS panel being the big one here). Despite NVIDIA’s first choice being a TN display, G-Sync will work just fine with an IPS panel and I’m expecting to see new G-Sync displays announced in the not too distant future. There’s also nothing stopping a display manufacturer from building a 4K G-Sync display. DisplayPort 1.2 is fully supported, so 4K/60Hz is the max you’ll see at this point. That being said, I think it’s far more likely that we’ll see a 2560 x 1440 IPS display with G-Sync rather than a 4K model in the near term.
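The 4K/60Hz ceiling follows from DisplayPort 1.2's link budget, which the Python sketch below works through. The lane count, the 5.4Gbps HBR2 lane rate and the 8b/10b encoding are DisplayPort 1.2 figures that aren't stated in the text above. The 24 bits per pixel is an assumption, and blanking overhead is ignored, so real requirements are somewhat higher than these numbers.

```python
LANES = 4                     # DisplayPort main link lanes
HBR2_GBPS_PER_LANE = 5.4      # DisplayPort 1.2 HBR2 raw rate per lane
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b line coding

def dp12_payload_gbps():
    """Usable DisplayPort 1.2 bandwidth in Gbps after 8b/10b encoding."""
    return LANES * HBR2_GBPS_PER_LANE * ENCODING_EFFICIENCY

def active_pixel_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Bandwidth for the visible pixels only (blanking not included)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9
```

By this estimate 3840 x 2160 at 60Hz needs about 11.9Gbps of the roughly 17.3Gbps available, while the same resolution at 120Hz would need about 23.9Gbps and does not fit. A 2560 x 1440 panel at 144Hz comes in around 12.7Gbps.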

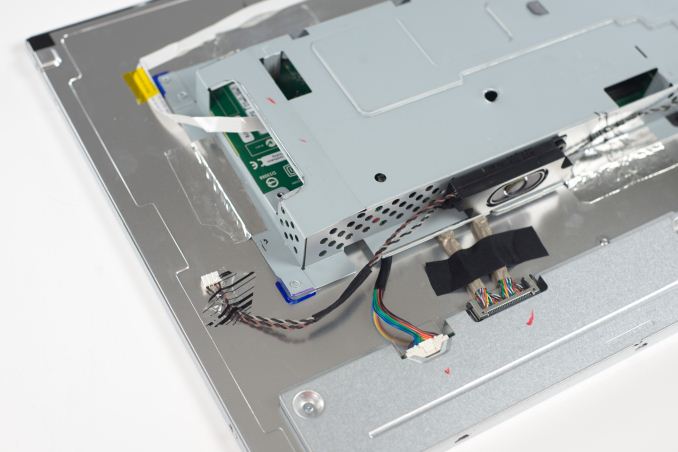

Naturally I disassembled the VG248QE to get a look at the extent of the modifications to get G-Sync working on the display. Thankfully taking apart the display is rather simple. After unscrewing the VESA mount, I just had to pry the bezel away from the back of the display. With the monitor on its back, I used a flathead screwdriver to begin separating the plastic using the two cutouts at the bottom edge of the display. I then went along the edge of the panel, separating the bezel from the back of the monitor until I unhooked all of the latches. It was really pretty easy to take apart.

Once inside, it’s just a matter of removing some cables and unscrewing a few screws. I’m not sure what the VG248QE looks like normally, but inside the G-Sync modified version the metal cage that’s home to the main PCB is simply taped to the back of the display panel. You can also see that NVIDIA left the speakers intact; there’s just no place for them to connect to.

It looks like NVIDIA may have built a custom PCB for the VG248QE and then mounted the G-Sync module to it.

The G-Sync module itself looks similar to what NVIDIA included in its press materials. The 3 x 2Gb DDR3 devices are clearly visible, while the FPGA is hidden behind a heatsink. Removing the heatsink reveals what appears to be an Altera Arria V GX FPGA.

The FPGA includes an integrated LVDS interface, which makes it perfect for its role here.

193 Comments

extide - Thursday, December 12, 2013 - link

I think you are on to something here, something like a modification of the packet based DP protocol. Now, the speed of the packets in DP depends on the resolution and refresh rate. Why not make the packets come whenever they are ready (in 3d) and at a regular rate (in 2d). Then have a monitor with an lcd panel expecting a signal like that.

I mean, I think in the end the way nVidia did it right here is just a way to make it work right now with the constraints of the existing lcd panels and DP protocols. In the future, I could easily see this sort of tech being built in to future protocols, video cards, and monitors, and probably all done without needing an expensive FPGA and additional RAM.

Egg - Friday, December 13, 2013 - link

I don't see how drawing pixel by pixel solves anything. The issue arises whenever you do not draw a full frame.

ZKriatopherZ - Saturday, December 14, 2013 - link

Yes, and if you aren't drawing frame by frame on the monitor there is no issue :D I'm saying remove the frame by frame drawing on the display side completely. This would create an effective "refresh" rate that is only limited by how many pixels the card can push and how fast the pixels can change on the display side. You could also take it a step further and move away from the frame by frame output on the software side. Fantastic example is the desktop display where 50% of the time the only change on the display is the movement of the mouse cursor. Even in a fps game where most of the screen changes constantly it would still benefit from the lack of a frame holdup since what we call refresh rate would no longer exist.

Truthfully this is all speculative, I don't have enough experience to tell if there are shortcomings here I'm overlooking but nothing blatant stands out at the moment.

blitzninja - Saturday, December 21, 2013 - link

Here's one problem with the video card pushing out data on a per pixel basis. It would take a LOT more data.

When a scene is rendered, the instructions essentially target a set of pixels and apply an effect to them (colour, saturation, brightness, etc) and when all calculations are done the final product is sent to the screen to be rendered (tearing is when not all of the new image has been copied into the monitor's frame buffer).

The problem is that the GPU is blind to any upcoming draw calls in that it does not know which pixels will be affected until the calculations are done. This means that there is no way for the GPU to know when a particular pixel is fully computed or "rendered" and ready to be sent to the monitor's frame buffer.

A better solution would be to, for 2D applications, check for pixel changes from one frame to another and simply send the change.

For 3D I see this as impossible since any small change (camera movement or otherwise) will require a complete re-render due to the nature of 3D and how the calculations are done (the GPU has no idea how to render a scene, it simply follows instructions laid out by the developer, so it can't figure out which pixels it can skip).

blitzninja - Saturday, December 21, 2013 - link

Quick clarification: The increase in data would be the need for the GPU to continuously overwrite pixels in the monitor's frame buffer in 3D mode until all draws are complete.

ZKriatopherZ - Saturday, December 28, 2013 - link

Does this have to do with the developer or the established rendering API? (OpenGL or DirectX) If the API is designed to output that way wouldn't that make both development and implementation easier? I get what you are saying about camera movement but there is a speed limitation caused by rendering frame by frame as well. If you are dealing with something like a tn panel that has a quick color to color change the effective fps on a per pixel basis becomes closer to 300 fps (or we could call it pixel per second here) even if you are drawing full screens.

I also am wrapping my head around what you are saying about 3d applications. Ultimately they are still outputting to a 2d display. I understand there are shaders and other effects that may require a full screen write and it sounds like the Graphics Card, OS, API's and Display are all set up that way. It may take some serious effort to really take a step back and take a more efficient approach based on the current display technology. Ultimately though if you can quickly kind of go through and make the changes to allow this to take place I do feel like it will end up being a much more efficient and faster approach. It may just not be as easy as it first seemed to me.

otherwise - Thursday, December 12, 2013 - link

In the future, we're all going to need a new monitor. Depending on the price premium; and assuming these make their way into IPS displays; it might be hard to justify buying a non-Gsync monitor over a GSync monitor. I doubt many are going to run out to buy a new $400 display right now; but this will have a powerful effect on consumer behavior down the line.

oranos - Thursday, December 12, 2013 - link

whats your point? Maybe this site should stop posting all tech articles that don't fit into wide mainstream demographics?

Black Obsidian - Thursday, December 12, 2013 - link

My point was obviously that this seemed to be a technology currently useful to virtually nobody.

Ian pointed out future applications that I hadn't considered, which was exactly the sort of feedback I was hoping for.

BeVar - Thursday, December 12, 2013 - link

@Black Obsidian> No! This is just a marketing gimmick. A costly one at that. I, like you, would just buy a better video board. But, as Art Linkletter said "people are funny".