NVIDIA G-Sync Review

by Anand Lal Shimpi on December 12, 2013 9:00 AM EST

How it Plays

The requirements for G-Sync are straightforward. You need a G-Sync enabled display (in this case the modified ASUS VG248QE is the only one “available”, more on this later). You need a GeForce GTX 650 Ti Boost or better with a DisplayPort connector. You need a DP 1.2 cable, a game capable of running in full screen mode (G-Sync reverts to V-Sync if you run in a window) and you need Windows 7 or 8.1.

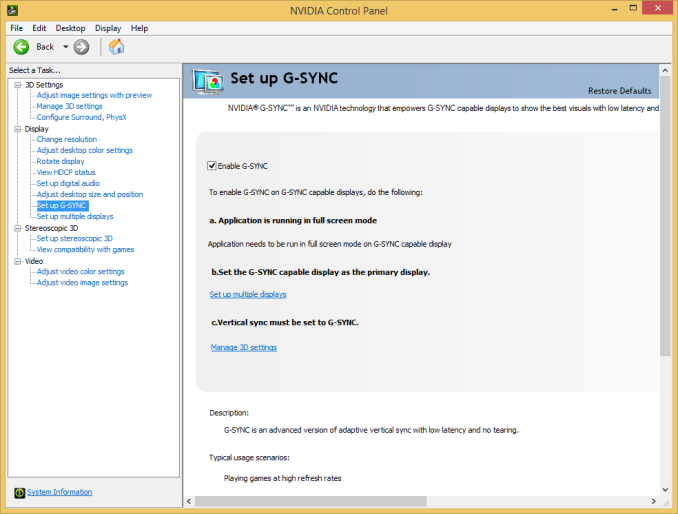

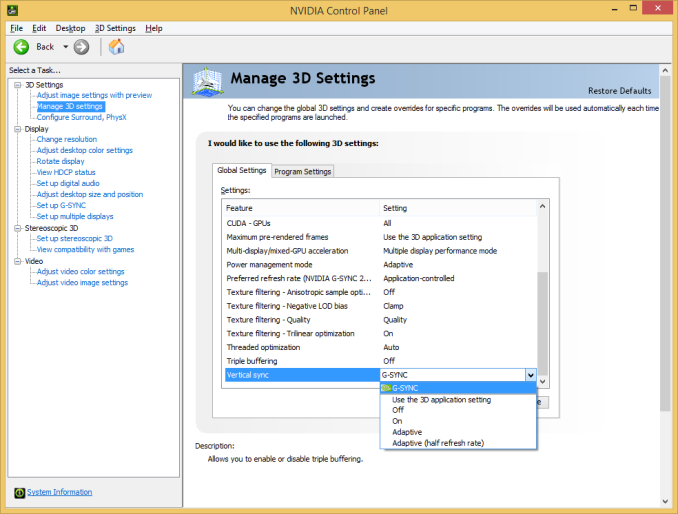

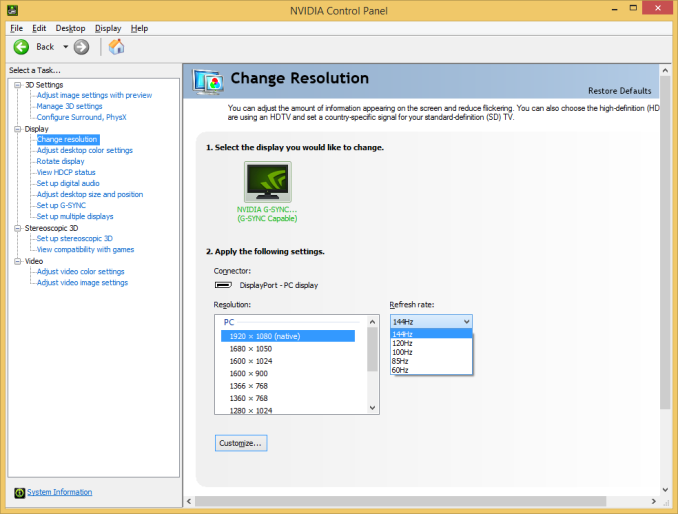

G-Sync enabled drivers are already available at GeForce.com (R331.93). Once you’ve met all of the requirements, you’ll see the appropriate G-Sync toggles in NVIDIA’s control panel. Even with G-Sync on, you can still control the display’s refresh rate. To maximize the impact of G-Sync, NVIDIA’s reviewer’s guide recommends testing v-sync on/off at 60Hz but G-Sync at 144Hz. For the sake of not being silly, I ran each comparison entirely at 60Hz or entirely at 144Hz, never mixing the two, in order to isolate the impact of G-Sync alone.

NVIDIA sampled the same pendulum demo it used in Montreal a couple of months ago to demonstrate G-Sync, but I spent the vast majority of my time with the G-Sync display playing actual games.

I’ve been using Falcon NW’s Tiki system for any experiential testing ever since it showed up with NVIDIA’s Titan earlier this year, so naturally that’s where I started with the G-Sync display. Unfortunately the combination didn’t fare all that well: the system exhibited hard locks and very low in-game frame rates with the G-Sync display attached. I didn’t have enough time to debug the setup further, and I plan on shipping the system to NVIDIA as soon as possible to see if they can find the root cause of the problem. Thankfully, switching to a Z87 testbed with an EVGA GeForce GTX 760 proved totally problem-free with the G-Sync display.

At a high level the sweet spot for G-Sync is going to be a situation where you have a frame rate that regularly varies between 30 and 60 fps. Game/hardware/settings combinations that result in frame rates below 30 fps will exhibit stuttering since the G-Sync display will be forced to repeat frames, and similarly if your frame rate is equal to your refresh rate (60, 120 or 144 fps in this case) then you won’t really see any advantages over plain old v-sync.
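The operating window described above can be written down as a simple classification. This is a minimal sketch, assuming a 30Hz floor (as described in this review) and a ceiling equal to the refresh rate configured for the panel; the function name `gsync_regime` is hypothetical and the logic does not model NVIDIA's actual module.

```python
def gsync_regime(fps: float, max_hz: float, min_hz: float = 30.0) -> str:
    """Classify a frame rate against a simplified G-Sync refresh window.

    Assumption: the panel can refresh at any rate between min_hz and max_hz.
    """
    if fps < min_hz:
        # Frames arrive too slowly, so the panel must repeat the previous frame.
        return "below window: frames repeated, stutter likely"
    if fps >= max_hz:
        # The GPU keeps pace with the panel, so this behaves like plain v-sync.
        return "at ceiling: no advantage over v-sync"
    # Inside the window the refresh interval tracks the GPU's frame time.
    return "in window: refresh follows the GPU"


for fps in (26, 45, 60):
    print(fps, gsync_regime(fps, max_hz=60))
```

Note that the same 60 fps that sits at the ceiling of a 60Hz configuration falls inside the window at 144Hz.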

I've put together a quick 4K video showing v-sync off, v-sync on and G-Sync on, all at 60Hz, while running Bioshock Infinite on my GTX 760 testbed. I captured each video at 720p60 and put them all side by side (thus making up the 3840 pixel width of the video). I slowed the video down by 50% in order to better demonstrate the impact of each setting. The biggest differences tend to be at the very beginning of the video. You'll see tons of tearing with v-sync off, some stutter with v-sync on, and a much smoother overall experience with G-Sync on.
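The review doesn't say which tool produced the side-by-side composite. As one illustration only, ffmpeg's `hstack` filter can place equal-height clips next to each other, and `setpts=2.0*PTS` doubles every timestamp so the result plays at half speed. The file names and the helper `side_by_side_cmd` below are hypothetical; the sketch only builds the command line rather than running it.

```python
import shlex


def side_by_side_cmd(inputs: list, output: str, slowdown: float = 2.0) -> list:
    """Build an ffmpeg command that stacks equal-height clips horizontally.

    setpts=<slowdown>*PTS stretches timestamps; 2.0 plays back at half speed.
    """
    labels = "".join(f"[{i}:v]" for i in range(len(inputs)))
    filt = f"{labels}hstack=inputs={len(inputs)},setpts={slowdown}*PTS[v]"
    cmd = ["ffmpeg"]
    for path in inputs:
        cmd += ["-i", path]
    # Map only the stacked video stream into the output file.
    cmd += ["-filter_complex", filt, "-map", "[v]", output]
    return cmd


clips = ["vsync_off.mp4", "vsync_on.mp4", "gsync_on.mp4"]  # hypothetical names
print(shlex.join(side_by_side_cmd(clips, "comparison.mp4")))
print("output width:", 3 * 1280)  # three 1280x720 captures side by side
```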

While the comparison above does a great job showing off the three different modes we tested at 60Hz, I also put together a 2x1 comparison of v-sync and G-Sync to make things even clearer. Here you're just looking for the stuttering on the v-sync setup, particularly at the very beginning of the video:

Assassin’s Creed IV

I started out playing Assassin’s Creed IV multiplayer with v-sync off. I used GeForce Experience to predetermine the game quality settings, which ended up being maxed out even on my GeForce GTX 760 test hardware. With v-sync off and the display set to 60Hz, there was tearing everywhere. In AC4 the tearing was arguably worse than usual, as it seemed to occur in the upper 40% of the display, dangerously close to where my eyes were focused most of the time. Playing with v-sync off was clearly not an option for me.

Next, I enabled v-sync with the refresh rate left at 60Hz. Much of AC4 renders at 60 fps, although in some scenes, both outdoors and indoors, I saw frame rates drop into the 40 - 51 fps range. With v-sync enabled I started noticing stuttering, especially as I moved the camera around and the complexity of what was being rendered varied. In some scenes the stuttering was pretty noticeable. I played through a bunch of rounds with v-sync enabled before enabling G-Sync.
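Why does a steady 40 - 51 fps stutter on a 60Hz v-sync display? With v-sync, each finished frame waits for the next refresh, so on-screen times snap to whole multiples of 16.7 ms. This is a simplified sketch, assuming a perfectly constant frame time, frames that queue rather than stall the GPU (as with triple buffering), and a frame rate no higher than the refresh rate; the function name is hypothetical.

```python
import math
from fractions import Fraction


def vsync_on_screen_ms(fps: int, hz: int, frames: int) -> list:
    """How long each of `frames` frames stays on screen under v-sync.

    Simplified model: frame k finishes at k * frame_time and is shown at the
    first refresh at or after that moment. Requires fps <= hz.
    """
    frame_time = Fraction(1000, fps)  # ms the GPU spends per frame
    refresh = Fraction(1000, hz)      # ms between panel refreshes
    shown_at = [math.ceil(k * frame_time / refresh) * refresh
                for k in range(frames + 1)]
    # A frame stays up until the next one is flipped in.
    return [round(float(b - a), 1) for a, b in zip(shown_at, shown_at[1:])]


print(vsync_on_screen_ms(45, 60, 6))  # uneven mix of 16.7 and 33.3 ms
print(round(1000 / 45, 1))            # ideal variable-refresh time per frame
```

At 45 fps the frames alternate between one and two refresh intervals, which reads as stutter even though the GPU's output is perfectly even; a display whose refresh follows the GPU would ideally hold every frame for about 22.2 ms.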

I enabled G-Sync, once again leaving the refresh rate at 60Hz and dove back into the game. I was shocked; virtually all stuttering vanished. I had to keep FRAPS running to remind me of areas where I should be seeing stuttering. The combination of fast enough hardware to keep the frame rate in the G-Sync sweet spot of 40 - 60 fps and the G-Sync display itself produced a level of smoothness that I hadn’t seen before. I actually realized that I was playing Assassin’s Creed IV with an Xbox 360 controller literally two feet away from my PS4 and having a substantially better experience.

Batman: Arkham Origins

Next up on my list was Batman: Arkham Origins. I hadn’t played the past couple of Batman games, but they always seemed interesting to me, so I was glad to spend some time with this one. Having skipped the previous ones, I obviously didn’t share the repetitive/unoriginal criticisms of the game that some others seemed to have. Instead I enjoyed its pace and thought it was a decent way to kill some time (or in this case, test a G-Sync display).

Once again I started off with v-sync off and the display set to 60Hz. For a while I didn’t see any tearing, at least not until I ended up inside a tower during the second mission of the game. I was panning across a small room and immediately encountered a ridiculous amount of tearing, even worse than in Assassin’s Creed. What’s interesting about the tearing in Batman is that it happened less frequently than in AC4’s multiplayer, but when it did happen it was substantially worse.

Next up was v-sync on, once again at 60Hz. Here I noticed sharp variations in frame rate resulting in tons of stutter. The stutter was pretty consistent both outdoors (panning across the city) and indoors (while fighting large groups of enemies). I remember seeing the stutter and noting that it was just something I’ve come to expect. Traditionally I’d fight this on a 60Hz panel by lowering quality settings so the game spends more of its time at 60 fps. With G-Sync enabled, it turns out I wouldn’t have to.

The improvement to Batman was insane. I kept expecting it to somehow not work, but G-Sync really did smooth out the vast majority of stuttering I encountered in the game, all without touching a single quality setting. You can still see some hiccups, but they are the result of other things (CPU limitations, streaming textures, etc…). That brings up another point about G-Sync: once you remove GPU/display synchronization as a source of stutter, all other visual artifacts become even more obvious. Things like aliasing and texture crawl/shimmer become even more distracting. The good news is you can address those things, often with a faster GPU, which all of a sudden makes G-Sync an even smarter play on NVIDIA’s part. Playing with G-Sync enabled raises my expectations for literally every other part of the visual experience.

Sleeping Dogs

I’ve been wanting to play Sleeping Dogs ever since it came out, and the G-Sync review gave me the opportunity to do just that. I like the premise and the change of scenery compared to the sandbox games I’m used to (read: GTA), and at least thus far I can put up with the not-quite-perfect camera and fairly uninspired driving feel. The bigger story here is that running Sleeping Dogs at max quality settings gave my GTX 760 enough of a workout to really showcase the limits of G-Sync.

With v-sync (60Hz) on I typically saw frame rates around 30 - 45 fps, but there were many situations where the frame rate would drop down to 28 fps. I was really curious to see what the impact of G-Sync was here since below 30 fps G-Sync would repeat frames to maintain a 30Hz refresh on the display itself.

The first thing I noticed after enabling G-Sync was that my instantaneous frame rate (according to FRAPS) dropped from 27-28 fps to 25-26 fps. This is the G-Sync polling overhead I mentioned earlier. Not only did the frame rate drop, but the display also had to start repeating frames, which resulted in a substantially worse experience. The only solution here was to decrease quality settings to get frame rates back up again. I was glad I ran into this situation, as it shows that while G-Sync may be a great way to improve playability, you still need a GPU fast enough to drive the whole thing.
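FRAPS reports frame rates, but overhead is easier to reason about as time per frame. The arithmetic below converts the Sleeping Dogs readings quoted above, assuming they are steady readings rather than momentary spikes; the helper name `frame_time_ms` is ours, not a FRAPS feature.

```python
def frame_time_ms(fps: float) -> float:
    """Convert a frame rate into milliseconds spent on each frame."""
    return 1000.0 / fps


# FRAPS readings from the Sleeping Dogs test.
before = (27, 28)  # fps with v-sync
after = (25, 26)   # fps with G-Sync

# Smallest and largest per-frame cost consistent with those ranges.
low = frame_time_ms(after[1]) - frame_time_ms(before[0])   # 26 vs 27 fps
high = frame_time_ms(after[0]) - frame_time_ms(before[1])  # 25 vs 28 fps
print(f"added per-frame time: {low:.1f} to {high:.1f} ms")

# Interval implied by the 30Hz floor: anything slower forces a repeated frame.
print(f"30Hz floor: {frame_time_ms(30):.1f} ms")
```

The readings imply roughly 1.4 to 4.3 ms of extra time per frame, and frame times both before (35.7 - 37.0 ms) and after the change are already longer than the 33.3 ms the 30Hz floor allows, which is why the panel had to repeat frames.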

Dota 2 & Starcraft II

The impact of G-Sync can also be reduced at the other end of the spectrum. I tried both Dota 2 and Starcraft II with my GTX 760/G-Sync test system and in both cases I didn’t have a substantially better experience than with v-sync alone. Both games ran well enough on my 1080p testbed to almost always be at 60 fps, which made v-sync and G-Sync interchangeable in terms of experience.

Bioshock Infinite @ 144Hz

Up to this point all of my testing kept the refresh rate stuck at 60Hz. I was curious to see what the impact would be of running everything at 144Hz, so I did just that. This time I turned to Bioshock Infinite, whose integrated benchmark mode is a great test as there’s tons of visible tearing or stuttering depending on whether or not you have v-sync enabled.

Increasing the refresh rate to 144Hz definitely reduced the amount of tearing visible with v-sync disabled. I’d call it a substantial improvement, although not quite perfect. Enabling v-sync at 144Hz got rid of the tearing but still kept a substantial amount of stuttering, particularly at the very beginning of the benchmark loop. Finally, enabling G-Sync fixed almost everything. The G-Sync on scenario was just super smooth with only a few hiccups.
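Part of why 144Hz helps but doesn't fix everything comes down to the refresh interval: under v-sync a finished frame can wait up to one interval for the next refresh, and that step shrinks from 16.7 ms to 6.9 ms. The sketch below assumes double-buffered v-sync, where the GPU waits for the flip before starting the next frame, so every frame rounds up to a whole number of refreshes; the 20 ms render time is an illustrative value, not a measurement from this review, and the function names are hypothetical.

```python
import math


def refresh_interval_ms(hz: float) -> float:
    """Time between panel refreshes; also the longest a finished frame
    can wait for the next refresh when v-sync is on."""
    return 1000.0 / hz


def vsync_frame_ms(render_ms: float, hz: float) -> float:
    """On-screen time per frame with double-buffered v-sync (simplified):
    the render time rounds up to a whole number of refresh intervals."""
    step = refresh_interval_ms(hz)
    return math.ceil(render_ms / step) * step


for hz in (60, 144):
    print(f"{hz}Hz: step {refresh_interval_ms(hz):.1f} ms, "
          f"20 ms frame shown for {vsync_frame_ms(20, hz):.1f} ms")
```

Under these assumptions a 20 ms frame is held for 33.3 ms at 60Hz but only 20.8 ms at 144Hz, so the quantization error shrinks without disappearing; a variable refresh display would ideally hold it for exactly 20 ms.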

What’s interesting to me about this last situation is that if 120/144Hz reduces tearing to the point where you’re OK with it, G-Sync may be a solution to a problem you no longer care about. If you’re hypersensitive to tearing, however, there’s still value in G-Sync even at these high refresh rates.

193 Comments


dagnamit - Thursday, December 12, 2013

Agreed. You would think that getting the display and the thing that talks to the display speaking the same language would be close to first on the list.

DanNeely - Thursday, December 12, 2013

Same here. I'm not going to rush out and buy a 1080p gsync monitor; but even in a year or two an extra $120 on a 4k monitor isn't going to be a large hit relatively speaking and gaming at <60 FPS will be a lot more common there than at 1080p.

Black Obsidian - Thursday, December 12, 2013

4K is a really good point; I hadn't considered the utility of this sort of thing on much higher-resolution monitors.

TheHolyLancer - Thursday, December 12, 2013

Here is the thing, if you have it all. Then a SLI/CF or Titan / 290X setup will mean that you will more than likely able to max out the graphics, and G-sync and V-Sync becomes more or less the same.

The target market is for when you are on a budget, playing with midrange cards that cannot push 60 / 120 / 144 fps (or 30 fps, if that is your thing...) consistently at 1080p or 4K. Which means price becomes an issue: if you are going to buy a midrange card, you are likely going to reuse your existing monitor, or maybe get a nice cheap one, unless the G-Sync enabled models (and cards) are not so much more expensive that you could instead step up to a better card that runs it nicely at full speed via v-sync.

So if they can price it so that a new monitor + new NV GPU costs the same as a new monitor of the same size and speed + new AMD GPU + say 20 dollars, then that is fine. But if they can't do that, then for a midrange GPU, spending 20 or 30 dollars more can mean a lot more performance for the buck, unless you are already at the upper end of midrange, where going to high end is a large jump in cost. And even then, if people want to keep the monitor they have, there will likely be NO way that this will take off, because even a cheap 1080p monitor is ~100 dollars, and that means a huge jump in GPU quality if you spent it on the card itself rather than on the monitor.

The killer app would be if G-Sync worked with any bog ol' monitor (or if all future monitors were sold with this SoC enabled). Then it would become a nice new feature that is good for many people.

Kamus - Friday, December 13, 2013

"Here is the thing, if you have it all. Then a SLI/CF or Titan / 290X setup will mean that you will more than likely able to max out the graphics, and G-sync and V-Sync becomes more or less the same."

This is just flat out wrong...

I play BF4 on a 290x on a 120hz monitor. And there are very few maps that maintain a consistent framerate. So as soon as the framerate dips below 120 I start seeing stuttering. And that's on the smooth maps. There are maps, like "Siege of Shanghai", where the framerate hovers from 80 all the way down to 30-40 FPS... Vsync would be a HUGE deal in situations like that.

TL;DR= Gsync is a big deal, even for high end rigs.

Da W - Thursday, December 12, 2013

The gamer that has it all certainly won't invest in a 1080p TN panel.

Here's the problem right now: a bunch of things will only get implemented later. Isn't the solution in hardware? Will I have to replace my panel next year? And then, my panel will be tied to NVIDIA?

Not just yet. AMD will surely come up with an open source solution next year, as usual.

SlyNine - Thursday, December 12, 2013

Lots of gamers will invest in TN panels because that technology is actually better for games. But it does come at a compromise.

rarson - Sunday, December 15, 2013

I just bought a 27" QHD IPS monitor for $285. From the games I've played on it so far, I'd say you're nuts if you buy a 1080p TN panel over a monitor like this.

tlbig10 - Tuesday, December 17, 2013

And I'll counter with saying you're nuts for overlooking 120/144hz TN panels *if* the main use for your machine is gaming. I have the VG248QE, have enabled LightBoost on it, and I would *never* use my wife's 27" QHD IPS for gaming because I would lose the butter smoothness a 120hz LB monitor gets me. Yes her display has better color reproduction, but it is a mess in BF4 with all its ghosting and 60hz choppiness. Until you've seen what LightBoost and 120hz is like in a first person shooter, you can't call us "nuts".

And those of you on 120 or 144hz monitors who aren't using LightBoost, do yourself a favor and check it out. There is a substantial difference between LB 120hz and plain 144hz.

ZKriatopherZ - Thursday, December 12, 2013

I think a lot of this is leftover garbage from the way CRT displays needed to be implemented. Seems like we should be removing hardware here, not adding it. When flat panels were introduced into that ecosystem, they needed to output on a frame-by-frame basis, even though the only real limitation seems to be the pixel color-to-color refresh. Since LCD pixels are more like a switch, wouldn't a video card output and display system that updated on an independent per-pixel basis be more efficient and better suited to modern displays? I understand games have frame buffers you would need to interpret, but that can all be addressed in the video card hardware. If you have a card capable of drawing to the screen in such a way, wouldn't that make this additional hardware unnecessary and eliminate the tearing problem?